Machine Learning (Zhou Zhihua), the "Watermelon Book", Chapter 5, Exercise 5.6: Python Implementation

-

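Exercise

Design an improved BP algorithm that markedly speeds up convergence by dynamically adjusting the learning rate. Implement the algorithm, and compare it experimentally with the standard BP algorithm on two UCI datasets.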
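As a rough illustration of what "dynamically adjusting the learning rate" means here, the sketch below isolates the per-epoch rule that the listing at the end of this post applies when `version == 1`. The function name `adjust_learning_rate` and its keyword parameters are hypothetical; the constants are copied from the listing.

```python
def adjust_learning_rate(eta, prev_error, error,
                         factor=1.1, eta_min=0.001, eta_max=0.020,
                         fast_delta=0.01, slow_delta=0.05):
    """Return the learning rate to use for the next epoch.

    Hypothetical helper mirroring the rule in NeuralNetwork.run:
    grow eta while the epoch error is still changing noticeably,
    otherwise shrink it, keeping eta roughly within [eta_min, eta_max].
    """
    change = abs(prev_error - error)
    if change > fast_delta and eta < eta_max:
        return eta * factor
    if change < slow_delta and eta > eta_min:
        return eta / factor
    return eta


if __name__ == "__main__":
    eta = 0.005
    # Assumed, made-up sequence of epoch errors, only to exercise the rule.
    errors = [40.0, 30.0, 22.0, 21.99, 21.985]
    for prev, cur in zip(errors, errors[1:]):
        eta = adjust_learning_rate(eta, prev, cur)
        print(round(eta, 6))
```

Because the rule looks only at the size of the change in error, not its sign, it also raises the rate when the error increases; a variant that shrinks the rate on an error increase would be a different algorithm from the one in the listing.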

-

Background

BP network structure:

Sigmoid function:
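A minimal sketch of the sigmoid and its derivative, written with the standard library only. It uses the same two-branch form as `sigma` in the listing so that `exp` never receives a large positive argument; the names `sigmoid` and `sigmoid_derivative` are illustrative and do not appear in the listing.

```python
import math


def sigmoid(x):
    """Logistic function f(x) = 1 / (1 + exp(-x)), evaluated without overflow."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)          # x < 0, so z lies in (0, 1)
    return z / (1.0 + z)


def sigmoid_derivative(x):
    """f'(x) = f(x) * (1 - f(x)), the identity used in the BP gradient terms."""
    s = sigmoid(x)
    return s * (1.0 - s)


if __name__ == "__main__":
    for x in (-1000.0, -2.0, 0.0, 2.0, 1000.0):
        print(x, sigmoid(x), sigmoid_derivative(x))
```

The identity f'(x) = f(x)(1 - f(x)) is why the gradient terms in the listing contain factors such as `yk[i]*(1-yk[i])`.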

Error backpropagation:
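The update rules below are reconstructed from the code in the listing (`calcG`, `calcE`, and the update loops in `run`), not from the original figures. The notation is an assumption that follows the textbook: $x_i$ are the inputs, $v_{ih}$ and $\gamma_h$ the weights and thresholds of the hidden units, $w_{hj}$ and $\theta_j$ those of the output units, $f$ the sigmoid, $y_j$ the target, and $\eta$ the learning rate (`yita` in the code).

```latex
\begin{aligned}
\alpha_h &= \sum_i v_{ih}\, x_i, & b_h &= f(\alpha_h - \gamma_h), \\
\beta_j &= \sum_h w_{hj}\, b_h, & \hat{y}_j &= f(\beta_j - \theta_j), \\
E_k &= \tfrac{1}{2} \sum_j (\hat{y}_j - y_j)^2, \\
g_j &= \hat{y}_j (1 - \hat{y}_j)(y_j - \hat{y}_j), \\
e_h &= b_h (1 - b_h) \sum_j w_{hj}\, g_j, \\
\Delta w_{hj} &= \eta\, g_j\, b_h, & \Delta \theta_j &= -\eta\, g_j, \\
\Delta v_{ih} &= \eta\, e_h\, x_i, & \Delta \gamma_h &= -\eta\, e_h.
\end{aligned}
```

In the code, $g_j$ and $e_h$ are both computed from the current parameters before any weight is changed, so $e_h$ uses the pre-update values of $w_{hj}$.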

-

Procedure

Getting the dataset

The Iris dataset, one of the most popular UCI datasets, is used:

http://archive.ics.uci.edu/ml/machine-learning-databases/iris/

Implementation

Building the BP neural network module

Reading the dataset

Preprocessing the dataset
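The preprocessing in `main.py` does two things: it one-hot encodes the class label, and it takes the first 35 rows of each 50-row class block for training and the remaining 15 for testing. The sketch below shows the same two steps with the standard library only; the helper names `one_hot`, `load_rows`, and `split_per_class` are hypothetical and are not part of the listing, which uses pandas instead.

```python
import csv
import io

CLASSES = ['Iris-setosa', 'Iris-versicolor', 'Iris-virginica']


def one_hot(label, classes=CLASSES):
    """Map a class name to a 0/1 vector with a single 1 at the class position."""
    return [1 if label == c else 0 for c in classes]


def load_rows(text):
    """Parse iris.data-style CSV text into (features, one-hot target) pairs."""
    rows = []
    for record in csv.reader(io.StringIO(text)):
        if not record:                      # skip blank lines
            continue
        *features, label = record
        rows.append(([float(v) for v in features], one_hot(label)))
    return rows


def split_per_class(rows, per_class=50, n_train=35):
    """Within each block of per_class rows, send the first n_train to training."""
    train, test = [], []
    for i, row in enumerate(rows):
        (train if i % per_class < n_train else test).append(row)
    return train, test


if __name__ == "__main__":
    # Two sample rows in the iris.data format, for illustration only.
    sample = "5.1,3.5,1.4,0.2,Iris-setosa\n7.0,3.2,4.7,1.4,Iris-versicolor\n"
    print(load_rows(sample))
```

The split relies on the file being sorted by class with 50 rows per class, which is the same assumption `index_train` makes in `main.py`.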

Main function

-

Results

Case 1

Case 2

-

Program listing:

NeuralNetwork.py

import random
import numpy as np

class inputlayer:
    """Input unit: only stores the feature value fed into the network."""

    def __init__(self):
        self.output = 0

    def setOutput(self, number):
        self.output = number


class hiddenlayer:
    """Hidden or output unit: incoming weights, a threshold, and cached input/output."""

    def __init__(self):
        self.output = 0
        self.input = 0
        self.threshold = 0
        self.weight = []

    def setOutput(self, number):
        self.output = number

    def setInput(self, number):
        self.input = number

    def setThreshold(self, number):
        self.threshold = number

    def setSingleWeight(self, number, weight):
        self.weight[number] = weight

    def setWeight(self, weight):
        self.weight = weight

def sigma(number):
    # Sigmoid in two branches so that exp() never gets a large positive argument.
    if number >= 0:
        return 1.0 / (1 + np.exp(-number))
    else:
        return np.exp(number) / (1.0 + np.exp(number))

def initInput(number):
    # One input unit per feature.
    res = []
    for i in range(number):
        temp = inputlayer()
        res.append(temp)
    return res


def initHidden(number, inputSize):
    # Hidden units with weights and thresholds drawn uniformly from [0, 1).
    res = []
    for i in range(number):
        temp = hiddenlayer()
        weight = []
        for k in range(inputSize):
            temp1 = random.random()
            weight.append(temp1)
        temp.setWeight(weight)
        temp.setThreshold(random.random())
        res.append(temp)
    return res


def initOutput(number, hiddenSize):
    # Output units reuse the hiddenlayer class; one weight per hidden unit.
    res = []
    for i in range(number):
        temp = hiddenlayer()
        weight = []
        for k in range(hiddenSize):
            temp1 = random.random()
            weight.append(temp1)
        temp.setWeight(weight)
        temp.setThreshold(random.random())
        res.append(temp)
    return res

def calcInput(hiddenLayer, inputLayers):
    # Weighted sum of the previous layer's outputs for a single unit.
    total = 0
    for i in range(len(inputLayers)):
        total += inputLayers[i].output * hiddenLayer.weight[i]
    return total


# Run a forward pass with the current weights and thresholds.
def result(inputLayer, hiddenLayer, outputLayer, xi, yi):
    # yi is not used here; it is kept so that existing calls stay valid.
    outputs = []
    for i in range(len(xi)):
        inputLayer[i].setOutput(xi[i])
    for i in range(len(hiddenLayer)):
        hiddenLayer[i].setInput(calcInput(hiddenLayer[i], inputLayer))
        hiddenLayer[i].setOutput(sigma(hiddenLayer[i].input - hiddenLayer[i].threshold))
    for i in range(len(outputLayer)):
        outputLayer[i].setInput(calcInput(outputLayer[i], hiddenLayer))
        outputLayer[i].setOutput(sigma(outputLayer[i].input - outputLayer[i].threshold))
        outputs.append(outputLayer[i].output)
    return outputs

def calcG(yi, yk):
    # Output-layer gradient term: g_j = yk_j * (1 - yk_j) * (yi_j - yk_j).
    g = []
    for i in range(len(yi)):
        temp = yk[i] * (1 - yk[i]) * (yi[i] - yk[i])
        g.append(temp)
    return g


def calcE(hiddenLayer, outputLayer, g):
    # Hidden-layer gradient term: e_h = b_h * (1 - b_h) * sum_j w_hj * g_j.
    e = []
    for i in range(len(hiddenLayer)):
        temp = hiddenLayer[i].output * (1 - hiddenLayer[i].output)
        temp2 = 0
        for k in range(len(outputLayer)):
            temp2 += outputLayer[k].weight[i] * g[k]
        temp = temp2 * temp
        e.append(temp)
    return e

def calc_flag(a, deta):
    # Returns 1 if |a| exceeds deta, else 0. Not called anywhere in this listing.
    if abs(a) > deta:
        return 1
    return 0

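# Four groups of parameters are trained: input-to-hidden weights, hidden thresholds,
# hidden-to-output weights, and output thresholds.
def run(data_train, data_test, inputSize, hiddenSize, outputSize, deta, version):
    # deta is accepted because main.py passes it, but it is not used below.
    x, y = data_train
    test_x, test_y = data_test
    inputLayer = initInput(inputSize)
    hiddenLayer = initHidden(hiddenSize, inputSize)
    outputLayer = initOutput(outputSize, hiddenSize)
    yita = 0.005          # learning rate
    count = 0             # loop passes; incremented before the stopping test
    E, temp_e = 777, 888  # current and previous epoch error (dummy start values)
    while True:
        count += 1
        if E <= 5:  # stop once the summed squared error over the training set is small
            break
        temp_e = E
        E = 0
        for xi, yi in zip(x, y):
            yk = result(inputLayer, hiddenLayer, outputLayer, xi, yi)  # returns a list
            g = calcG(yi, yk)
            e = calcE(hiddenLayer, outputLayer, g)
            for i in range(len(yk)):
                E += 1/2 * (yk[i] - yi[i])**2
            # Update hidden-to-output weights and output thresholds.
            for i in range(len(outputLayer)):
                for k in range(len(hiddenLayer)):
                    temp = yita * g[i] * hiddenLayer[k].output
                    outputLayer[i].weight[k] += temp
                temp2 = -1 * yita * g[i]
                outputLayer[i].threshold += temp2
            # Update input-to-hidden weights and hidden thresholds.
            for h in range(len(hiddenLayer)):
                for i in range(len(inputLayer)):
                    temp = yita * e[h] * xi[i]
                    hiddenLayer[h].weight[i] += temp
                temp2 = -1 * yita * e[h]
                hiddenLayer[h].threshold += temp2
        # Dynamic BP (version == 1): adjust the learning rate once per epoch,
        # based on how much the epoch error changed.
        if abs(temp_e - E) > 0.01 and version == 1 and yita < 0.020:
            yita *= 1.1
        elif abs(temp_e - E) < 0.05 and version == 1 and yita > 0.001:
            yita /= 1.1
    # Evaluate on the test set: the predicted class is the largest output.
    right = 0
    for xi, yi in zip(test_x, test_y):
        yk = result(inputLayer, hiddenLayer, outputLayer, xi, yi)
        if list(yk).index(max(list(yk))) == list(yi).index(max(list(yi))):
            right += 1
    # Convert the fraction of correct predictions to a percentage before printing.
    print('Accuracy: {:.2f}%'.format(100 * right / test_x.shape[0]))
    print('loop number:', str(count), '\n')

main.py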

import NeuralNetwork
import numpy as np
import pandas as pd

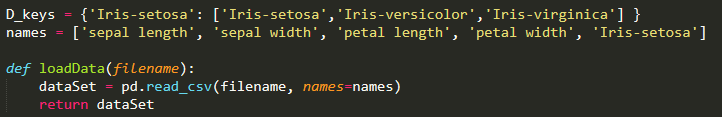

D_keys = {'Iris-setosa': ['Iris-setosa','Iris-versicolor','Iris-virginica'] }

names = ['sepal length', 'sepal width', 'petal length', 'petal width', 'Iris-setosa']

def loadData(filename):
    # iris.data has no header row, so the column names are supplied explicitly.
    dataSet = pd.read_csv(filename, names=names)
    return dataSet

def processData(dataSet):
    # Encode the class names as 0, 1, 2. The result is assigned back to the column
    # rather than using replace(..., inplace=True) on it, which newer pandas
    # versions do not propagate to the DataFrame.
    for key, value in D_keys.items():
        dataSet[key] = dataSet[key].map({name: code for code, name in enumerate(value)})
    # Expand the class code into three one-hot columns (column positions 4, 5, 6).
    dataSet['Iris-versicolor'] = 0
    dataSet['Iris-virginica'] = 0
    for i in range(dataSet.shape[0]):
        # Column 4 still holds the class code here, so it must be overwritten last.
        dataSet.iloc[i, 6] = 1 if dataSet.iloc[i, 4] == 2 else 0
        dataSet.iloc[i, 5] = 1 if dataSet.iloc[i, 4] == 1 else 0
        dataSet.iloc[i, 4] = 1 if dataSet.iloc[i, 4] == 0 else 0
    # First 35 samples of each class for training, the remaining 15 for testing.
    index_train = list(range(35)) + list(range(50, 85)) + list(range(100, 135))
    data_train = dataSet.iloc[index_train]
    data_test = dataSet.drop(index_train)
    train_x = np.array(data_train[['sepal length', 'sepal width', 'petal length', 'petal width']])
    train_y = np.array(data_train[['Iris-setosa', 'Iris-versicolor', 'Iris-virginica']])
    test_x = np.array(data_test[['sepal length', 'sepal width', 'petal length', 'petal width']])
    test_y = np.array(data_test[['Iris-setosa', 'Iris-versicolor', 'Iris-virginica']])
    return (train_x, train_y), (test_x, test_y)

if __name__ == '__main__':
    # Load the data
    filename = '../UCI/iris/iris.data'
    dataSet = loadData(filename)
    data_train, data_test = processData(dataSet)
    # Arguments: input size 4, hidden size 4, output size 3, deta, version
    # (version 0 = standard BP, version 1 = dynamic learning rate).
    print('Standard BP:')
    NeuralNetwork.run(data_train, data_test, 4, 4, 3, 0.055, 0)
    print('Dynamic BP:')
    NeuralNetwork.run(data_train, data_test, 4, 4, 3, 0.03, 1)