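Copyright notice: this is original work by Zetrue_Li, and reposting without permission is prohibited; to repost or cite it, please get in touch by private message. https://blog.csdn.net/weixin_37922777/article/details/89456712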

Machine Learning (Zhou Zhihua, the "Watermelon Book"), Chapter 7 Exercise 7.6: Python Implementation

-

Problem

Implement an AODE classifier, train it on Watermelon Dataset 3.0, and use it to classify the sample "测1" (test sample 1) on p. 151.

-

Principle

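Semi-naive Bayes classifiers: they take a limited part of the dependencies among attributes into account, so the full joint probability never has to be computed, while strong attribute dependencies are not ignored entirely.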

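AODE (Averaged One-Dependent Estimator): each attribute in turn is used as the super-parent (the single attribute that all other attributes depend on) to build an SPODE (Super-Parent One-Dependent Estimator). The SPODEs that are supported by enough training data are then combined by summation to give the final result, i.e.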

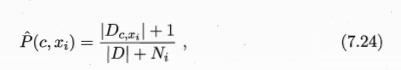

Formula for the AODE joint class prior P(c, x_i); continuous attributes are not used as super-parents.

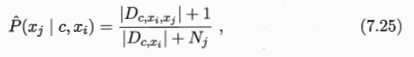

Formula for the AODE conditional probability of a discrete attribute.

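Conditional probability of a continuous attribute in AODE: it is computed the same way as for continuous attributes in naive Bayes, i.e. the attribute is assumed to be normally distributed within the subset selected by the given conditions (class c and super-parent value x_i).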
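Because the formulas above are only available as images, here they are written out in LaTeX in the form the program listing implements: the AODE decision rule, the Laplace-smoothed estimates of $P(c, x_i)$ and $P(x_j \mid c, x_i)$, and the Gaussian density used for a continuous $x_j$. Here $d$ is the number of attributes, $N$ the number of classes, $N_i$ the number of possible values of attribute $i$, $D_{c,x_i}$ the training samples of class $c$ whose attribute $i$ equals $x_i$, and $\mu_{c,x_i,j}$, $\sigma^2_{c,x_i,j}$ the mean and sample variance of attribute $j$ over $D_{c,x_i}$. The threshold $m'$ plays the role of the `m` parameter of `predict` (5 in the listing), and, as in the listing, $i$ only ranges over discrete attributes.

```latex
\begin{aligned}
P(c \mid \boldsymbol{x}) &\propto \sum_{\substack{i=1 \\ |D_{x_i}| \ge m'}}^{d} P(c, x_i) \prod_{j=1}^{d} P(x_j \mid c, x_i) \\
\hat{P}(c, x_i) &= \frac{|D_{c,x_i}| + 1}{|D| + N \times N_i} \\
\hat{P}(x_j \mid c, x_i) &= \frac{|D_{c,x_i,x_j}| + 1}{|D_{c,x_i}| + N_j} \\
p(x_j \mid c, x_i) &= \frac{1}{\sqrt{2\pi}\,\sigma_{c,x_i,j}} \exp\left(-\frac{(x_j - \mu_{c,x_i,j})^2}{2\sigma_{c,x_i,j}^2}\right)
\end{aligned}
```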

-

Procedure

Obtaining the dataset

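Take Watermelon Dataset 3.0 from the book and save it as data_3.txt. The header and values stay in Chinese because the code matches on them; the columns are 编号 (ID), 色泽 (color), 根蒂 (root), 敲声 (knocking sound), 纹理 (texture), 脐部 (navel), 触感 (touch), 密度 (density), 含糖率 (sugar content) and 好瓜 (good melon: 是 = yes, 否 = no).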

```
编号,色泽,根蒂,敲声,纹理,脐部,触感,密度,含糖率,好瓜
1,青绿,蜷缩,浊响,清晰,凹陷,硬滑,0.697,0.46,是
2,乌黑,蜷缩,沉闷,清晰,凹陷,硬滑,0.774,0.376,是
3,乌黑,蜷缩,浊响,清晰,凹陷,硬滑,0.634,0.264,是
4,青绿,蜷缩,沉闷,清晰,凹陷,硬滑,0.608,0.318,是
5,浅白,蜷缩,浊响,清晰,凹陷,硬滑,0.556,0.215,是
6,青绿,稍蜷,浊响,清晰,稍凹,软粘,0.403,0.237,是
7,乌黑,稍蜷,浊响,稍糊,稍凹,软粘,0.481,0.149,是
8,乌黑,稍蜷,浊响,清晰,稍凹,硬滑,0.437,0.211,是
9,乌黑,稍蜷,沉闷,稍糊,稍凹,硬滑,0.666,0.091,否
10,青绿,硬挺,清脆,清晰,平坦,软粘,0.243,0.267,否
11,浅白,硬挺,清脆,模糊,平坦,硬滑,0.245,0.057,否
12,浅白,蜷缩,浊响,模糊,平坦,软粘,0.343,0.099,否
13,青绿,稍蜷,浊响,稍糊,凹陷,硬滑,0.639,0.161,否
14,浅白,稍蜷,沉闷,稍糊,凹陷,硬滑,0.657,0.198,否
15,乌黑,稍蜷,浊响,清晰,稍凹,软粘,0.36,0.37,否
16,浅白,蜷缩,浊响,模糊,平坦,硬滑,0.593,0.042,否
17,青绿,蜷缩,沉闷,稍糊,稍凹,硬滑,0.719,0.103,否
```

Algorithm implementation

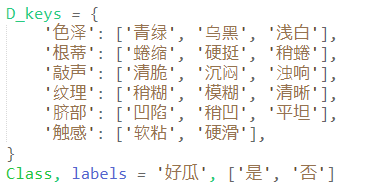

Data definitions: the discrete attributes with their possible values, and the class labels
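The listing hard-codes the value lists in `D_keys`, and only their lengths ($N_i$, $N_j$) enter the smoothing formulas. As a hedged alternative, the lists could be derived from the training data; the sketch below does that with pandas, using a hypothetical helper `infer_discrete_keys` and a few toy rows (not taken from the dataset) in the column layout of data_3.txt. It assumes that every non-numeric column other than the class column is a discrete attribute.

```python
import pandas as pd


def infer_discrete_keys(df, class_col):
    """Hypothetical helper: build a D_keys-style dict from the training data.

    Every non-numeric column other than the class column is treated as a
    discrete attribute; its list holds the values observed in the data.
    """
    keys = {}
    for col in df.columns:
        if col == class_col or pd.api.types.is_numeric_dtype(df[col]):
            continue
        keys[col] = sorted(df[col].unique())
    return keys


# Toy rows (not from the dataset) in the layout of data_3.txt without 编号
df = pd.DataFrame({
    '色泽': ['青绿', '乌黑', '浅白', '青绿'],
    '触感': ['硬滑', '硬滑', '软粘', '软粘'],
    '密度': [0.697, 0.774, 0.403, 0.243],
    '好瓜': ['是', '是', '是', '否'],
})
keys = infer_discrete_keys(df, '好瓜')
print({k: len(v) for k, v in keys.items()})  # {'色泽': 3, '触感': 2}
```

The trade-off: a value that never occurs in the training set is missing from the derived list, so $N_i$ would be underestimated. On Watermelon Dataset 3.0 every value listed in `D_keys` does occur, so both approaches give the same counts there.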

Function that reads the data
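`loadData` reads the file with `pd.read_csv` and then drops the 编号 column. A possible alternative, sketched below on the first three rows of the dataset inlined through `io.StringIO` (so it runs without data_3.txt), is to pass `index_col='编号'`: the IDs become the row index rather than a column, which leaves the same nine columns. Nothing in the listing depends on the row index, since every selection there uses a boolean mask.

```python
import io

import pandas as pd

# First three rows of data_3.txt, inlined so the sketch runs on its own
csv_text = """编号,色泽,根蒂,敲声,纹理,脐部,触感,密度,含糖率,好瓜
1,青绿,蜷缩,浊响,清晰,凹陷,硬滑,0.697,0.46,是
2,乌黑,蜷缩,沉闷,清晰,凹陷,硬滑,0.774,0.376,是
3,乌黑,蜷缩,浊响,清晰,凹陷,硬滑,0.634,0.264,是
"""

# index_col='编号' keeps the IDs as the index instead of as a data column
df = pd.read_csv(io.StringIO(csv_text), index_col='编号')
print(list(df.columns))
print(df['密度'].dtype)  # float64
```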

Building the test sample

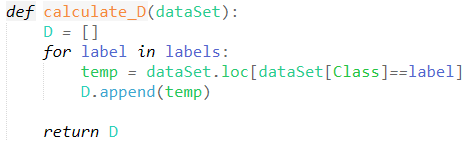

Splitting the training samples into one subset per class label
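`calculate_D` filters the data once per label with a boolean mask. For comparison, the same split can be made in one pass with `DataFrame.groupby`; the sketch below, on a toy table that is not taken from the dataset, checks that both versions yield the same sub-DataFrames in the order of `labels`. One difference: with `groupby`, a label that never occurs raises `KeyError` on lookup, whereas the mask version returns an empty DataFrame.

```python
import pandas as pd

# Toy training set (not from the dataset): one attribute plus the class column
df = pd.DataFrame({
    '色泽': ['青绿', '乌黑', '浅白', '青绿', '乌黑'],
    '好瓜': ['是', '是', '否', '否', '否'],
})
labels = ['是', '否']

# Boolean-mask split, as calculate_D does: one filtering pass per label
D_mask = [df.loc[df['好瓜'] == label] for label in labels]

# groupby split: a single pass, then reordered to follow `labels`
groups = {key: group for key, group in df.groupby('好瓜')}
D_group = [groups[label] for label in labels]

for a, b in zip(D_mask, D_group):
    assert a.equals(b)  # same rows, same order, same index
print([len(d) for d in D_group])  # [2, 3]
```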

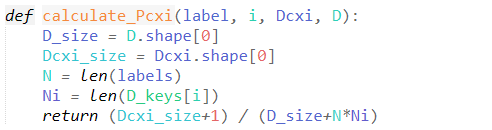

Computing the joint class prior P(c, x_i)
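As a worked check of the smoothed prior, the sketch below evaluates $\hat{P}(c, x_i) = (|D_{c,x_i}| + 1) / (|D| + N \times N_i)$ by hand for the super-parent 根蒂 = 蜷缩. The counts were read off the dataset above (class 是: samples 1 to 5; class 否: samples 12, 16, 17). The helper name `smoothed_joint_prior` is hypothetical, and `Fraction` only keeps the result exact; the listing uses ordinary float division.

```python
from fractions import Fraction


def smoothed_joint_prior(n_c_xi, n_total, n_classes, n_values_i):
    """Laplace-smoothed P(c, x_i) = (|D_{c,x_i}| + 1) / (|D| + N * N_i)."""
    return Fraction(n_c_xi + 1, n_total + n_classes * n_values_i)


# Super-parent 根蒂 = 蜷缩 on Watermelon Dataset 3.0:
# |D| = 17 samples, N = 2 classes, N_i = 3 values of 根蒂
p_yes = smoothed_joint_prior(5, 17, 2, 3)  # |D_{是,蜷缩}| = 5
p_no = smoothed_joint_prior(3, 17, 2, 3)   # |D_{否,蜷缩}| = 3
print(p_yes, p_no)  # 6/23 4/23
```

As floats these are about 0.261 and 0.174, the values `calculate_Pcxi` should produce when 根蒂 is the super-parent.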

Computing the discrete conditional probability P(x_j | c, x_i)
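The same kind of hand check works for $\hat{P}(x_j \mid c, x_i) = (|D_{c,x_i,x_j}| + 1) / (|D_{c,x_i}| + N_j)$. The sketch keeps super-parent 根蒂 = 蜷缩 and asks for 纹理 = 清晰 (the texture of 测1); the 纹理 values of each $D_{c,x_i}$ are copied from the dataset. `smoothed_conditional` is a hypothetical helper over plain dicts, not part of the listing.

```python
from fractions import Fraction


def smoothed_conditional(rows, attr_j, value_j, n_values_j):
    """Laplace-smoothed P(x_j | c, x_i) = (|D_{c,x_i,x_j}| + 1) / (|D_{c,x_i}| + N_j).

    `rows` is the subset D_{c,x_i}: samples of class c whose super-parent
    attribute already equals x_i.
    """
    n_match = sum(1 for r in rows if r[attr_j] == value_j)
    return Fraction(n_match + 1, len(rows) + n_values_j)


# 纹理 (texture) over D_{c,x_i} for super-parent 根蒂 = 蜷缩:
# class 是 -> samples 1-5, class 否 -> samples 12, 16, 17
D_yes_curled = [{'纹理': '清晰'} for _ in range(5)]
D_no_curled = [{'纹理': '模糊'}, {'纹理': '模糊'}, {'纹理': '稍糊'}]

print(smoothed_conditional(D_yes_curled, '纹理', '清晰', 3))  # 3/4
print(smoothed_conditional(D_no_curled, '纹理', '清晰', 3))   # 1/6
```

Thanks to the +1 and +N_j terms, an empty $D_{c,x_i}$ yields $1/N_j$ instead of a division by zero, which is why `calculate_Pcxi_xj_D` needs no special case.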

Computing the continuous conditional density p(x_j | c, x_i)
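The continuous case evaluates a normal density whose mean and variance are estimated on $D_{c,x_i}$. The sketch below is a standard-library version with a hypothetical helper `gaussian_conditional`. pandas' `Series.var()` defaults to `ddof=1` (sample variance), so `statistics.variance`, which also divides by n - 1, is used to match the listing. With that estimator a subset of fewer than two samples has no variance (pandas returns NaN), so the sketch raises an error instead.

```python
import math
import statistics


def gaussian_conditional(values, x):
    """Normal density p(x | c, x_i), with mean and variance estimated from `values`.

    `values` holds the continuous attribute over D_{c,x_i}; statistics.variance
    is the sample variance (n - 1 denominator), like pandas' Series.var().
    """
    if len(values) < 2:
        raise ValueError('need at least two samples to estimate a sample variance')
    mean = statistics.fmean(values)
    var = statistics.variance(values)
    if var == 0:
        raise ValueError('zero variance: the density is undefined')
    return math.exp(-(x - mean) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)


# 密度 (density) over D_{是,青绿}: samples 1, 4 and 6 of Watermelon Dataset 3.0
densities = [0.697, 0.608, 0.403]
p = gaussian_conditional(densities, 0.697)  # density of the test sample 测1
print(round(p, 4))
```

For 测1 specifically, every $D_{c,x_i}$ the listing builds has at least two samples with distinct density and sugar values, so the NaN case does not arise there.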

Computing the score (unnormalized probability) for assigning the test sample to class `label`

Predicting the label of the test sample
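To show the whole decision rule without pandas, the sketch below scores classes with discrete attributes only (continuous attributes are left out for brevity). `aode_scores` and its parameters are hypothetical names. Data are plain `(features, label)` pairs, and `m` is the support threshold on $|D_{x_i}|$, as in `predict`. The toy data has two binary attributes, and the expected scores were worked out by hand: 481/1200 for `yes` and 25/324 for `no`.

```python
def aode_scores(samples, labels, x, n_values, m=1):
    """Hypothetical discrete-only AODE scorer, a sketch of the listing's predict().

    samples  : list of (features, label) pairs, features being {attribute: value}
    labels   : class labels to score
    x        : {attribute: value} of the sample to classify
    n_values : {attribute: number of possible values}, i.e. N_i and N_j
    m        : minimum support |D_{x_i}| for attribute i to act as super-parent
    Returns {label: unnormalised score}.
    """
    n, N = len(samples), len(labels)
    scores = {}
    for c in labels:
        total = 0.0
        for i, xi in x.items():
            # |D_{x_i}|: training samples of any class sharing the super-parent value
            if sum(1 for f, _ in samples if f[i] == xi) < m:
                continue
            d_c_xi = [f for f, y in samples if y == c and f[i] == xi]
            term = (len(d_c_xi) + 1) / (n + N * n_values[i])  # P(c, x_i)
            for j, xj in x.items():
                n_match = sum(1 for f in d_c_xi if f[j] == xj)
                term *= (n_match + 1) / (len(d_c_xi) + n_values[j])  # P(x_j | c, x_i)
            total += term  # sum of SPODE terms over qualifying super-parents
        scores[c] = total
    return scores


# Toy data: two binary attributes a and b, five training samples
samples = [
    ({'a': 0, 'b': 0}, 'yes'),
    ({'a': 0, 'b': 0}, 'yes'),
    ({'a': 0, 'b': 1}, 'yes'),
    ({'a': 1, 'b': 1}, 'no'),
    ({'a': 1, 'b': 0}, 'no'),
]
scores = aode_scores(samples, ['yes', 'no'], {'a': 0, 'b': 0}, {'a': 2, 'b': 2})
print(max(scores, key=scores.get))  # yes
```

With `m=4`, neither attribute value has enough support (each occurs 3 times), so every score is 0. In that situation the listing's `predict` returns the first entry of `labels`, because only the first comparison `0 > -1` succeeds.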

The main block, which calls the functions above

-

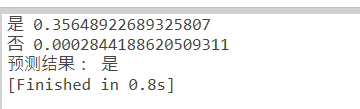

Results

-

Program listing:

```python
import math

import pandas as pd

# Discrete attributes and their possible values (N_i = length of each list)
D_keys = {
    '色泽': ['青绿', '乌黑', '浅白'],
    '根蒂': ['蜷缩', '硬挺', '稍蜷'],
    '敲声': ['清脆', '沉闷', '浊响'],
    '纹理': ['稍糊', '模糊', '清晰'],
    '脐部': ['凹陷', '稍凹', '平坦'],
    '触感': ['软粘', '硬滑'],
}
# Class column and its labels (是 = good melon, 否 = not a good melon)
Class, labels = '好瓜', ['是', '否']


# Read the data set and drop the ID column
def loadData(filename):
    dataSet = pd.read_csv(filename)
    dataSet.drop(columns=['编号'], inplace=True)
    return dataSet


# Build the "测1" test sample as a {column: value} dict (class left empty)
def load_data_test(dataSet):
    array = ['青绿', '蜷缩', '浊响', '清晰', '凹陷', '硬滑', 0.697, 0.460, '']
    return {a: b for a, b in zip(dataSet.columns, array)}


# Split the training set into one subset D_c per class label
def calculate_D(dataSet):
    return [dataSet.loc[dataSet[Class] == label] for label in labels]


# Smoothed joint prior P(c, x_i) = (|D_{c,x_i}| + 1) / (|D| + N * N_i)
def calculate_Pcxi(label, i, Dcxi, D):
    D_size = D.shape[0]
    Dcxi_size = Dcxi.shape[0]
    N = len(labels)      # number of classes
    Ni = len(D_keys[i])  # number of values of super-parent attribute i
    return (Dcxi_size + 1) / (D_size + N * Ni)


# Smoothed P(x_j | c, x_i) = (|D_{c,x_i,x_j}| + 1) / (|D_{c,x_i}| + N_j), discrete x_j
def calculate_Pcxi_xj_D(key, value, Dcxi):
    Dcxi_size = Dcxi.shape[0]
    Dcxi_xj_size = Dcxi.loc[Dcxi[key] == value].shape[0]
    Nj = len(D_keys[key])
    return (Dcxi_xj_size + 1) / (Dcxi_size + Nj)


# Gaussian density p(x_j | c, x_i) for continuous x_j, fitted on D_{c,x_i}
def calculate_Pcxi_xj_C(key, value, Dcxi):
    # Series.var() is the sample variance (ddof=1)
    mean, var = Dcxi[key].mean(), Dcxi[key].var()
    exponent = math.exp(-((value - mean) ** 2) / (2 * var))
    return exponent / math.sqrt(2 * math.pi * var)


# One SPODE term with attribute i as super-parent: P(c, x_i) * prod_j P(x_j | c, x_i)
def calculate_probability(label, i, Dcxi, D, data_test):
    prob = calculate_Pcxi(label, i, Dcxi, D)
    for key in D.columns[:-1]:  # every attribute except the class column
        value = data_test[key]
        if key in D_keys:
            prob *= calculate_Pcxi_xj_D(key, value, Dcxi)
        else:
            prob *= calculate_Pcxi_xj_C(key, value, Dcxi)
    return prob


# AODE: for each class, sum the SPODE terms over the qualifying super-parents
def predict(dataSet, data_test, m=5):
    Dcs = calculate_D(dataSet)
    best_label, max_prob = None, -1
    for label, Dc in zip(labels, Dcs):
        prob = 0
        for key, value in data_test.items():
            # Continuous attributes (and the class column) are not super-parents
            if key not in D_keys:
                continue
            # Skip super-parent values with fewer than m training samples
            Dxi_size = dataSet.loc[dataSet[key] == value].shape[0]
            if Dxi_size < m:
                continue
            Dcxi = Dc.loc[Dc[key] == value]
            prob += calculate_probability(label, key, Dcxi, dataSet, data_test)
        if prob > max_prob:
            best_label, max_prob = label, prob
        print(label, prob)
    return best_label


if __name__ == '__main__':
    filename = 'data_3.txt'
    dataSet = loadData(filename)
    data_test = load_data_test(dataSet)
    label = predict(dataSet, data_test)
    print('预测结果:', label)  # 预测结果 = "prediction result"
```