Logistic回归及python实现

1、logistics回归原理及sigmoid函数

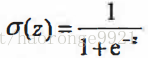

1.1、sigmoid函数

logistics回归函数是能接受所有的输人然后预测出类别。例如,在两个类的清况下,logistics回归函数输出0或1。或许你之前接触过具有这种性质的函数,该函数称为海维寡德阶跃函数 (Heaviside step function), 或者直接称为单位阶跃函数。然而,海维寒德阶跃函数的问题在于: 该函数在跳跃点上从0瞬间跳跃到I,这个瞬间跳跃过程有时很难处理。幸好,另一个函数也有类似的性质。,且数学上更易处理,这就是Sigmoid函数。Sigmoid函数具体的计算公式如下:

刻度足够大,Sigmoid函数看起来很像一个阶跃函数。

1.2、logistics 回归

知道了sigmoid函数,现在介绍Logistic回归分类器:我们可以在每个特征上都乘以一个回归系数,然后把所有的结果值相加,将这个总和代人Sigmoid函数中, 进而得到一个范围在0…..1之间的数值。任何大于0.5的数据被分入1类, 小于0.5 即被归入0类。所以, Logistic回归也可以被看成是一种概率估计。

2、logistics回归到实现和解释

2.1、纯python实现logistics回归

'''

Created on Oct 27, 2010

Logistic Regression Working Module

@author: Peter

'''

from numpy import *

def loadDataSet():

dataMat = [];

labelMat = []

fr = open('testSet.txt')

for line in fr.readlines():

lineArr = line.strip().split()

dataMat.append([1.0, float(lineArr[0]), float(lineArr[1])])

labelMat.append(int(lineArr[2]))

return dataMat, labelMat

def sigmoid(inX):

return 1.0 / (1 + exp(-inX))

def gradAscent(dataMatIn, classLabels):

dataMatrix = mat(dataMatIn) # convert to NumPy matrix

labelMat = mat(classLabels).transpose() # convert to NumPy matrix

m, n = shape(dataMatrix)

alpha = 0.001

maxCycles = 500

weights = ones((n, 1))

for k in range(maxCycles): # heavy on matrix operations

h = sigmoid(dataMatrix * weights) # matrix mult

error = (labelMat - h) # vector subtraction

weights = weights + alpha * dataMatrix.transpose() * error # matrix mult

return weights

def plotBestFit(weights):

import matplotlib.pyplot as plt

dataMat, labelMat = loadDataSet()

dataArr = array(dataMat)

n = shape(dataArr)[0]

xcord1 = [];

ycord1 = []

xcord2 = [];

ycord2 = []

for i in range(n):

if int(labelMat[i]) == 1:

xcord1.append(dataArr[i, 1]);

ycord1.append(dataArr[i, 2])

else:

xcord2.append(dataArr[i, 1]);

ycord2.append(dataArr[i, 2])

fig = plt.figure()

ax = fig.add_subplot(111)

ax.scatter(xcord1, ycord1, s=30, c='red', marker='s')

ax.scatter(xcord2, ycord2, s=30, c='green')

x = arange(-3.0, 3.0, 0.1)

y = (-weights[0] - weights[1] * x) / weights[2]

ax.plot(x, y)

plt.xlabel('X1');

plt.ylabel('X2');

plt.show()

def stocGradAscent0(dataMatrix, classLabels):

m, n = shape(dataMatrix)

alpha = 0.01

weights = ones(n) # initialize to all ones

for i in range(m):

h = sigmoid(sum(dataMatrix[i] * weights))

error = classLabels[i] - h

weights = weights + alpha * error * dataMatrix[i]

return weights

def stocGradAscent1(dataMatrix, classLabels, numIter=150):

m, n = shape(dataMatrix)

weights = ones(n) # initialize to all ones

for j in range(numIter):

dataIndex = list(range(m))

for i in range(m):

alpha = 4 / (1.0 + j + i) + 0.0001 # apha decreases with iteration, does not

randIndex = int(random.uniform(0, len(dataIndex))) # go to 0 because of the constant

h = sigmoid(sum(dataMatrix[randIndex] * weights))

error = classLabels[randIndex] - h

weights = weights + alpha * error * dataMatrix[randIndex]

del (dataIndex[randIndex])

return weights

def classifyVector(inX, weights):

prob = sigmoid(sum(inX * weights))

if prob > 0.5:

return 1.0

else:

return 0.0

def colicTest():

frTrain = open('horseColicTraining.txt');

frTest = open('horseColicTest.txt')

trainingSet = []

trainingLabels = []

for line in frTrain.readlines():

currLine = line.strip().split('\t')

lineArr = []

for i in range(21):

lineArr.append(float(currLine[i]))

trainingSet.append(lineArr)

trainingLabels.append(float(currLine[21]))

trainWeights = stocGradAscent1(array(trainingSet), trainingLabels, 1000)

errorCount = 0;

numTestVec = 0.0

for line in frTest.readlines():

numTestVec += 1.0

currLine = line.strip().split('\t')

lineArr = []

for i in range(21):

lineArr.append(float(currLine[i]))

if int(classifyVector(array(lineArr), trainWeights)) != int(currLine[21]):

errorCount += 1

errorRate = (float(errorCount) / numTestVec)

print("the error rate of this test is: %f" % errorRate)

return errorRate

def multiTest():

numTests = 10;

errorSum = 0.0

for k in range(numTests):

errorSum += colicTest()

print("after %d iterations the average error rate is: %f" % (numTests, errorSum / float(numTests)))

multiTest()注:本篇博客参考自机器学习实战