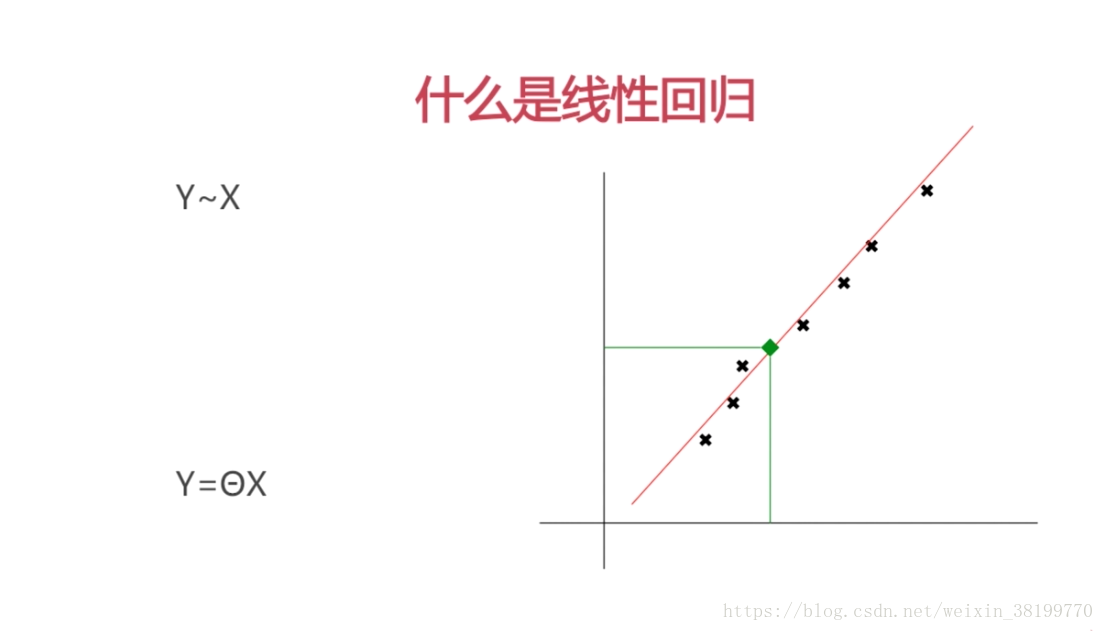

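What is linear regression

Linear regression is the process of learning the coefficients theta of a linear equation from training data, so that we can then predict the y value that corresponds to a given x.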
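To make the "train, then predict" idea concrete, here is a minimal sketch. It assumes toy data that follows y = 2x, uses `np.linalg.lstsq` as the solver (one possible choice, not necessarily the method used in the rest of this post), and introduces a hypothetical helper named `predict`.

```python
import numpy as np

# Toy training data generated from y = 2x (an assumption for illustration).
x_train = np.array([1.0, 2.0, 3.0])
y_train = 2.0 * x_train

# Design matrix with a column of ones so that an intercept is learned as well.
X = np.column_stack([np.ones_like(x_train), x_train])

# "Training": solve the least-squares problem for theta = [intercept, slope].
theta, *_ = np.linalg.lstsq(X, y_train, rcond=None)

def predict(x_new):
    """Predict y for a new x using the learned coefficients (hypothetical helper)."""
    return theta[0] + theta[1] * x_new

print("theta:", theta)
print("prediction for x = 4:", predict(4.0))
```

Because the toy data is exactly linear, the learned intercept should be close to 0 and the slope close to 2, up to floating-point error.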

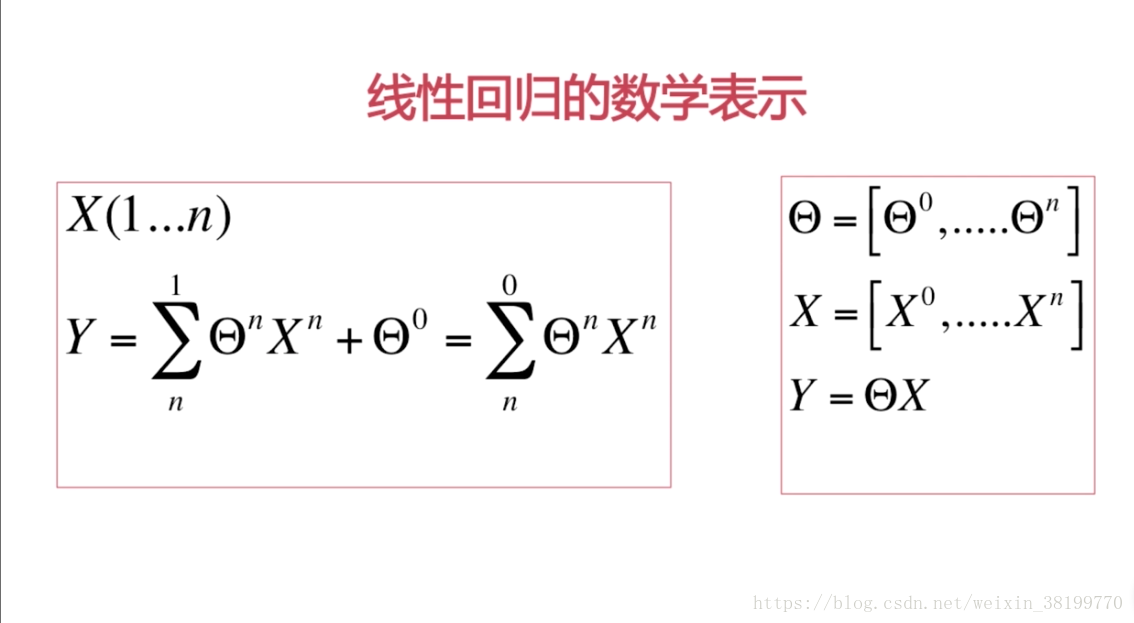

Mathematical formulation of linear regression

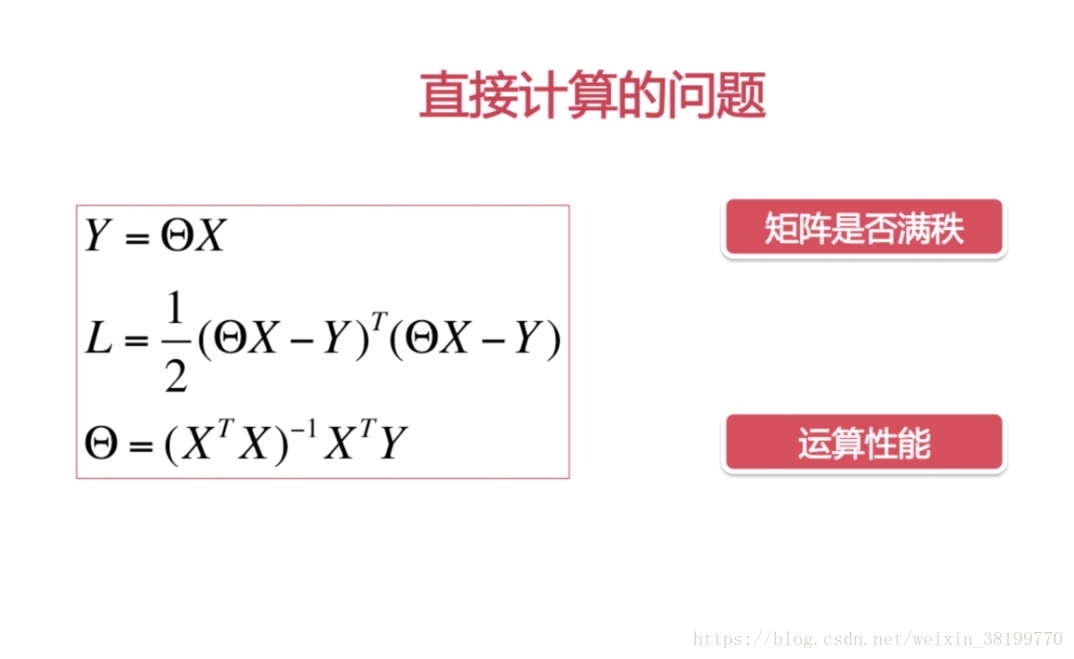
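In text form, the model is usually written as below. This is the standard notation and only an assumption about what the figure shows: $n$ is the number of features and $x_0 = 1$ is a constant term that carries the intercept.

$$
h_\theta(x) = \theta_0 x_0 + \theta_1 x_1 + \dots + \theta_n x_n = \theta^{T} x
$$

Stacking the $m$ training samples as the rows of a matrix $X$ gives the prediction vector $\hat{Y} = X\theta$, which is the form the code in this post works with.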

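Least squares in linear regression

Take the derivative of the loss L with respect to theta and set it to zero; the theta that satisfies this condition minimizes L. In matrix form the solution is theta = (X'X)^-1 X'Y.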

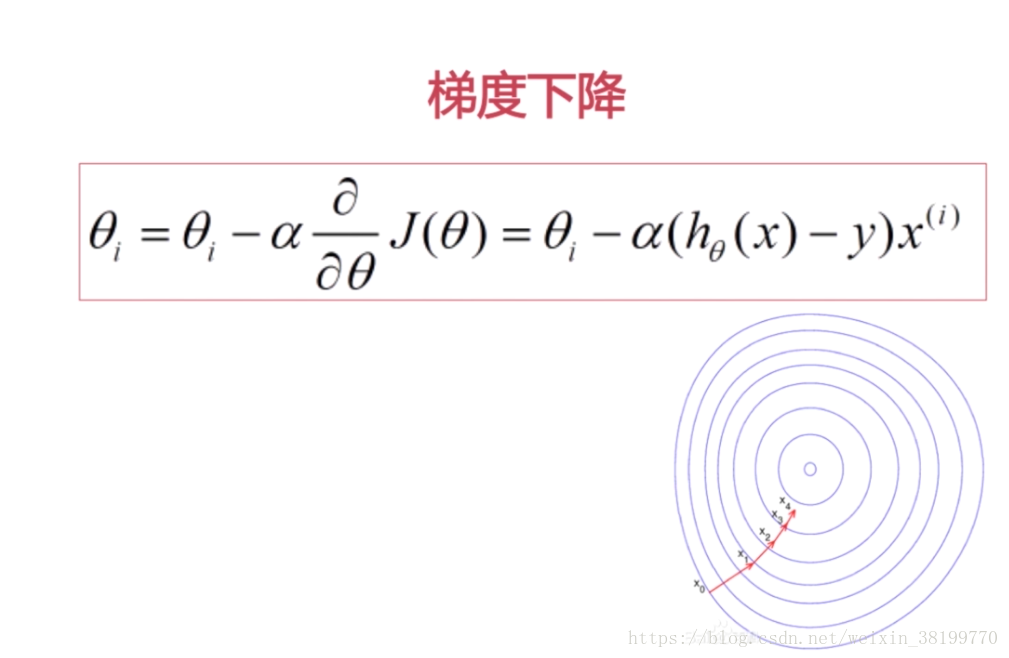
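The derivation can be sketched in a few lines. The factor $\tfrac{1}{2}$ in the loss is a common convention assumed here (it does not change the minimizer), and the last step assumes that $X^{T}X$ is invertible.

$$
L(\theta) = \frac{1}{2}\,(X\theta - Y)^{T}(X\theta - Y)
$$

$$
\nabla_\theta L = X^{T}(X\theta - Y) = 0
\;\Longrightarrow\;
X^{T}X\,\theta = X^{T}Y
\;\Longrightarrow\;
\theta = (X^{T}X)^{-1}X^{T}Y
$$

This is the same expression that appears in the code as `theta = (X'X)^-1X'Y`.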

Gradient descent for linear regression
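A sketch of the update rule, written to match the code later in this post. It assumes the loss is averaged over the $m$ samples, $L(\theta) = \frac{1}{2m}\sum_{i=1}^{m}\bigl(\theta^{T}x^{(i)} - y^{(i)}\bigr)^{2}$, and that $\alpha$ is the learning rate.

$$
\theta_j := \theta_j + \alpha\,\frac{1}{m}\sum_{i=1}^{m}\bigl(y^{(i)} - \theta^{T}x^{(i)}\bigr)\,x_j^{(i)}
\qquad \text{for every } j \text{ at the same time}
$$

Each step moves theta a little in the direction that reduces the loss. All components must be computed from the old theta before any of them is overwritten.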

A review of NumPy vector operations

#coding=gbk

import numpy as np

from numpy.linalg import inv

from numpy import dot

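from numpy import asmatrix as mat  # np.mat was removed in NumPy 2.0; asmatrix is the same function

# inv: matrix inverse

# dot: matrix product

# mat: interprets its input as a matrix

A = mat([1,1])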

#B = np.array([1,1])

print('Matrix A:\n',A)

print("Transpose of A:\n",A.T)

# reshape changes the shape of the matrix

print(A.reshape(2,1))

#print('B:\n',B)

B = mat([[1,2],[2,3]])

print(B.reshape(1,4))

print("Inverse of B:\n",inv(B))

print("Matrix B:\n",B)

print("First row of B:\n",B[0,:])

print("First column of B:\n",B[:,0])

# A is 1x2, B is 2x2

print('A dot B:\n',dot(A,B))

print("B dot the transpose of A:\n",dot(B,A.T))
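NumPy's documentation recommends regular arrays over the `matrix` class, so the same operations can also be sketched with `np.array` and the `@` operator. The values mirror A and B from the review code; the names `A_T`, `B_inv`, `AB`, `BA_T` and `elementwise` are introduced here only for illustration.

```python
import numpy as np

# Plain ndarrays instead of np.matrix; same values as A and B in the review code.
A = np.array([[1, 1]])            # shape (1, 2)
B = np.array([[1, 2], [2, 3]])    # shape (2, 2)

A_T = A.T                         # transpose, shape (2, 1)
B_inv = np.linalg.inv(B)          # matrix inverse
AB = A @ B                        # matrix product, shape (1, 2)
BA_T = B @ A.T                    # matrix product, shape (2, 1)
elementwise = B * B               # for ndarrays, * multiplies element by element

print("A @ B:\n", AB)
print("B @ A.T:\n", BA_T)
print("inverse of B:\n", B_inv)
```

The main difference to keep in mind: with `matrix` objects `*` means matrix multiplication, while with ndarrays `*` is element-wise and `@` (or `dot`) is the matrix product.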

Implementing least squares

#coding=gbk

import numpy as np

from numpy.linalg import inv

from numpy import dot

from numpy import asmatrix as mat  # np.mat was removed in NumPy 2.0; asmatrix is the same function

#y=2x

X = mat([1,2,3]).reshape(3,1)

Y = 2*X

#theta = (X'X)^-1X'Y

theta = dot(dot(inv(dot(X.T,X)),X.T),Y)

print(theta)

Implementing gradient descent

#coding=gbk

import numpy as np

from numpy.linalg import inv

from numpy import dot

from numpy import asmatrix as mat  # np.mat was removed in NumPy 2.0; asmatrix is the same function

#y=2x

X = mat([1,2,3]).reshape(3,1)

Y = 2*X

#theta = theta - alpha*(theta*X -Y)*X

theta = 1.

alpha = 0.1

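for i in range(100):

    # X.reshape(1,3) * residual is a (1x3)(3x1) matrix product: sum_i x_i * (y_i - theta * x_i)

    theta = theta + alpha * np.sum(X.reshape(1,3) * (Y - dot(X, theta))) / 3.

print(theta)

Regression analysis in practice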

Code that generates random data and writes it to data.csv

import random

def Y(X1, X2, X3):

    return 0.65 * X1 + 0.70 * X2 - 0.55 * X3 + 1.95

def Produce():

    filename = 'data.csv'

    with open(filename, 'w') as file:

        file.write('X1,X2,X3,Y\n')

        for i in range(200):

            random.seed()

            x1 = random.random() * 10

            x2 = random.random() * 10

            x3 = random.random() * 10

            y = Y(x1, x2, x3)

            try:

                file.write(str(x1) + ',' + str(x2) + ',' + str(x3) + ',' + str(y) + '\n')

            except Exception as e:

                print ('Write Error')

                print (str(e))

Produce()

Computing theta with both least squares and gradient descent

#coding=gbk

import numpy as np

from numpy.linalg import inv

from numpy import dot

import pandas as pd

# Build the X matrix and the Y vector

dataset = pd.read_csv('data.csv')

#print(dataset)

temp = dataset.iloc[:,0:3].copy()  # first three columns (X1, X2, X3); copy so a column can be added safely

temp['x0'] = 1  # constant column for the intercept

X = temp.iloc[:,[3,0,1,2]]  # reorder the columns to x0, X1, X2, X3

Y = dataset.iloc[:,3].values.reshape(200,1)

#print(Y)

# Least squares

theta = dot(dot(inv(dot(X.T,X)),X.T),Y)

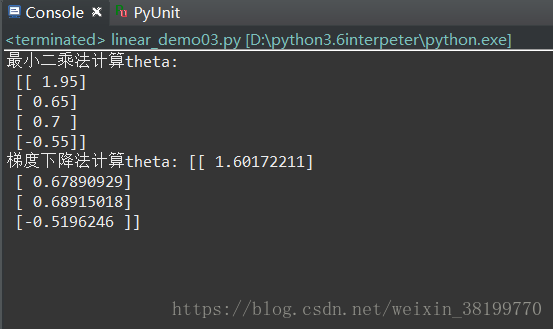

print("theta from least squares:\n",theta.reshape(4,1))

# Gradient descent

theta = np.array([1.,1.,1.,1.]).reshape(4,1)

alpha = 0.02  # learning rate

temp = theta.copy()  # buffer for a simultaneous update; it must be a copy, because temp = theta would only alias the same array

X0 = X.iloc[:,0].values.reshape(len(Y),1)

X1 = X.iloc[:,1].values.reshape(len(Y),1)

X2 = X.iloc[:,2].values.reshape(len(Y),1)

X3 = X.iloc[:,3].values.reshape(len(Y),1)

for i in range(10000):

    temp[0] = theta[0] + alpha*np.sum((Y-dot(X,theta))*X0)/200.

    temp[1] = theta[1] + alpha*np.sum((Y-dot(X,theta))*X1)/200.

    temp[2] = theta[2] + alpha*np.sum((Y-dot(X,theta))*X2)/200.

    temp[3] = theta[3] + alpha*np.sum((Y-dot(X,theta))*X3)/200.

    theta = temp.copy()  # copy back so the next iteration reads the fully updated theta

print("theta from gradient descent:",theta)
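The four per-component updates in the loop can also be written as one matrix expression, which updates every component at once and needs no temporary buffer. The sketch below is self-contained: instead of reading data.csv it generates data in memory with the same rule, and the fixed seed and the names `rng`, `features` and `residual` are assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed, chosen only for reproducibility

# Synthetic data following the same rule as data.csv:
# y = 0.65*x1 + 0.70*x2 - 0.55*x3 + 1.95
m = 200
features = rng.random((m, 3)) * 10
y = features @ np.array([0.65, 0.70, -0.55]) + 1.95

# Prepend a column of ones for the intercept, like the x0 column above.
X = np.column_stack([np.ones(m), features])

theta = np.ones(4)
alpha = 0.02  # same learning rate as above
for _ in range(10000):
    residual = y - X @ theta                       # shape (m,)
    theta = theta + alpha * (X.T @ residual) / m   # all four components in one step

print("theta from vectorized gradient descent:", theta)
```

Since the data contains no noise, theta should end up close to the coefficients used to generate it, in the order intercept, X1, X2, X3: 1.95, 0.65, 0.70, -0.55.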

Output