参考文章:https://blog.csdn.net/qq_40717846/article/details/78405586

敲代码最开心的时候莫过于与别人分享自己新敲出来的有趣玩意儿,接下来请看我这个小白捣鼓的小玩意儿。

任务:

- 爬取拉勾网信息

- 数据处理

- 制图

所需知识只有一点点(毕竟是个小白):

- requests基础部分

- json

- pyecharts

- wordcloud

接下来开始敲代码了,代码分成了3个部分:爬取、制图、生成词云

爬取部分:

首先要说明的是,拉勾网有反爬虫,所以,requests中的头参首和cookie应当写全(直接在浏览器上F12,然后复制粘贴就行)

然后就可以进行爬取了,主要代码如下:

import json

import requests

header = {"Cookie":"JSESSIONID=ABAAABAAAGFABEFB093DFBA72E00093316821E95E4971CF; user_trace_token=20180921154401-430b2b64-ca4b-411e-be98-f86172880bd3; _ga=GA1.2.231300040.1537515842; LGSID=20180921154401-1b73362c-bd72-11e8-a516-525400f775ce; PRE_UTM=; PRE_HOST=; PRE_SITE=; PRE_LAND=https%3A%2F%2Fwww.lagou.com%2Fjobs%2Flist_%25E4%25BA%2592%25E8%2581%2594%25E7%25BD%2591%25E5%25A4%25A7%25E6%2595%25B0%25E6%258D%25AE%3Fpx%3Ddefault%26city%3D%25E4%25B8%258A%25E6%25B5%25B7; LGUID=20180921154401-1b73384e-bd72-11e8-a516-525400f775ce; Hm_lvt_4233e74dff0ae5bd0a3d81c6ccf756e6=1537515842; _gid=GA1.2.1479618188.1537515843; Hm_lpvt_4233e74dff0ae5bd0a3d81c6ccf756e6=1537515859; LGRID=20180921154418-259c60d0-bd72-11e8-bb56-5254005c3644; SEARCH_ID=801b32db85184bc0910c10a3da7a18ad",

"Host":"www.lagou.com",

'Origin': 'https://www.lagou.com',

'Referer':'https://www.lagou.com/jobs/list_%E4%BA%92%E8%81%94%E7%BD%91%E5%A4%A7%E6%95%B0%E6%8D%AE?px=default&city=%E4%B8%8A%E6%B5%B7',

'User-Agent':'Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/69.0.3497.100 Safari/537.36'}

Data = {'first':'true','kd':'互联网大数据','pn':'1'}

def get_result(myurl):

for page in range(1,31):

content_next = json.loads(requests.post(myurl +str(page),data=Data,headers=header,verify=False).text)

company_info = content_next.get('content').get('positionResult').get('result')

if company_info:

for p in company_info:

line = str(p['city']).replace(',',';') + ',' + str(p['companyFullName']).replace(',',';') + ',' + str(p['companyId']).replace(',',';') + ',' + \

str(p['companyLabelList']).replace(',',';') + ',' + str(p['companyShortName']).replace(',',';') + ',' + str(p['companySize']).replace(',',';') + ',' + \

str(p['businessZones']).replace(',',';') + ',' + str(p['firstType']).replace(',',';') + ',' + str(

p['secondType']).replace(',',';') + ',' + \

str(p['education']).replace(',',';') + ',' + str(p['industryField']).replace(',',';') +',' + \

str(p['positionId']).replace(',',';')+',' + str(p['positionAdvantage']).replace(',',';') +',' + str(p['positionName']).replace(',',';') +',' + \

str(p['positionLables']).replace(',',';') +',' + str(p['salary']).replace(',',';') +',' + str(p['workYear']).replace(',',';') + '\n'

file.write(line)

注:replace(‘,’,‘;’)是将爬取的信息中的“,”改成“;”,为了方便后面制表。

if __name__=='__main__':

title = 'city,companyFullName,companyId,companyLabelList,companyShortName,companySize,businessZones,firstType,secondType,education,industryField,positionId,positionAdvantage,positionName,positionLables,salary,workYear\n'

file = open('%s.txt' % '爬取拉勾网', 'a')

file.write(title)

cityList = ['北京', '上海','深圳','广州','杭州','成都','南京','武汉','西安','厦门','长沙','苏州','天津','郑州']

for city in cityList:

print('爬取%s'%city)

myurl ='https://www.lagou.com/jobs/positionAjax.json?px=default&city={}&needAddtionalResult=false&pn='.format(city)

get_result(myurl)

file.close()

然后就可以获得爬取的文件了

到这里,第一步就完成了。

制图部分:

爬取到的文件,薪水是一个区间,不方便处理,所以要进行求平均。

def cut_word(word,method):

position=word.find('-')

length=len(word)

if position !=-1:

bottomsalary=word[:position-1]

topsalary=word[position+1:length-1]

else:

bottomsalary=word[:word.upper().find('K')]

topsalary=bottomsalary

if method=="bottom":

return bottomsalary

else:

return topsalary

df_duplicates['topsalary']=df_duplicates.salary.apply(cut_word, method="top")

df_duplicates['bottomsalary']=df_duplicates.salary.apply(cut_word, method="bottom")

df_duplicates.bottomsalary.astype('int')

df_duplicates.topsalary.astype('int')

df_duplicates["avgsalary"]=df_duplicates.apply(lambda x:(int(x.bottomsalary)+int(x.topsalary))/2,axis=1)

然后,就可以根据数据画图了。

注:要先把文件格式转化为csv,可方便后续的操作:

(之前将“,”改成“;”就是为了方便这里操作)

然后就生成了表格

然后利用pyecharts模块画图就行了,代码如下:

import pandas as pd

from pyecharts import Bar

from pyecharts import Page

from pyecharts import Pie

df = pd.read_csv('/home/lsgo28/PycharmProjects/demo/爬取拉勾网.csv')

df_duplicates=df.drop_duplicates(subset='positionId',keep='first')

city_list = df_duplicates["city"].drop_duplicates(keep='first')

money = []

money1 = []

number = []

number1 = []

k = 0

l = 0

m = 0

n = 0

for city in city_list:

for city_,money_ in zip(df_duplicates["city"],df_duplicates["avgsalary"]):

if city_==city:

k +=money_

l += 1

number.append(l)

money.append(k/l)

workyears_list = ['应届毕业生','1年以下','1-3年','3-5年','5-10年','10年以上','不限']

for workyears in workyears_list:

for workyears_,money_ in zip(df_duplicates["workYear"],df_duplicates["avgsalary"]):

if workyears_==workyears:

m += money_

n += 1

number1.append(n)

money1.append(m/n)

pie = Pie("职位数量饼图")

bar = Bar("各大城市职业的平均月薪(K)")

bar1 = Bar("各大城市职位数")

bar2 = Bar("各类工作经验平均月薪(K)")

pie.add("",workyears_list,number1,is_stack=True)

bar.add("月薪",city_list,money,is_stack=True)

bar1.add("公司",city_list,number,is_stack=True)

bar2.add("经验",workyears_list,number1,is_stack=True)

page = Page()

page.add(bar)

page.add(bar1)

page.add(bar2)

page.add(pie)

page.render("图文.html")

这样,想要的图片就画完了

到这里,第二步就完成了

画词云

想画词云,首先得有一个储存词语的txt文件,我们就将爬取的文件中positionLables列中的词语绘制一个词云

显然,一个单元格中存有不止一个的词语,这样就很难生成一个词云。

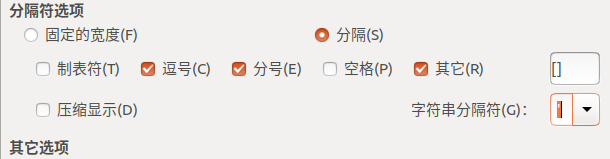

你可以通过jieba分词包对该文件进行分词(有点麻烦),我这里用了一个取巧的办法:将该列复制,然后打开一个新的csv文件,粘贴进去,然后这样改:

就把【】‘’去掉了,而且也达到了分词的效果,

然后把csv格式转化成txt就行了。

然后选取一个喜欢的背景图片:

接着就是画词云了,代码如下:

import numpy as np

from PIL import Image

import matplotlib.pyplot as plt

from wordcloud import WordCloud,ImageColorGenerator

bg = np.array(Image.open("12.jpg"))

with open('/home/lsgo28/PycharmProjects/demo/ciyun.txt','r') as f:

fl = f.read()

fl = fl.replace(',','\n')

wc = WordCloud(background_color="white",

max_words=200,

mask=bg,

max_font_size=60,

random_state=42,

font_path='/home/lsgo28/PycharmProjects/demo/ziti.ttf').generate(fl)

image_color = ImageColorGenerator(bg)

plt.imshow(wc.recolor(color_func=image_color))

wc.to_file("ciyun.png")

大功告成