一、写在前面

fcn是首次使用cnn来实现语义分割的,论文地址:fully convolutional networks for semantic segmentation

实现代码地址:https://github.com/shelhamer/fcn.berkeleyvision.org

全卷积神经网络主要使用了三种技术:

1. 卷积化(Convolutional)

2. 上采样(Upsample)

3. 跳跃结构(Skip Layer)

为了便于理解,我拿最简单的结构voc-fcn-alexnet进行说明,该网络结构主要用到了前面两个技术,不包含跳跃结构。

二、voc-fcn-alexnet 的train.prototxt文件

layer { name: "data" type: "Python" top: "data" top: "label" python_param { module: "voc_layers" layer: "SBDDSegDataLayer" param_str: "{\'sbdd_dir\': \'../data/sbdd/dataset\', \'seed\': 1337, \'split\': \'train\', \'mean\': (104.00699, 116.66877, 122.67892)}" } } layer { name: "conv1" type: "Convolution" bottom: "data" top: "conv1" convolution_param { num_output: 96 pad: 100 kernel_size: 11 group: 1 stride: 4 } } layer { name: "relu1" type: "ReLU" bottom: "conv1" top: "conv1" } layer { name: "pool1" type: "Pooling" bottom: "conv1" top: "pool1" pooling_param { pool: MAX kernel_size: 3 stride: 2 } } layer { name: "norm1" type: "LRN" bottom: "pool1" top: "norm1" lrn_param { local_size: 5 alpha: 0.0001 beta: 0.75 } } layer { name: "conv2" type: "Convolution" bottom: "norm1" top: "conv2" convolution_param { num_output: 256 pad: 2 kernel_size: 5 group: 2 stride: 1 } } layer { name: "relu2" type: "ReLU" bottom: "conv2" top: "conv2" } layer { name: "pool2" type: "Pooling" bottom: "conv2" top: "pool2" pooling_param { pool: MAX kernel_size: 3 stride: 2 } } layer { name: "norm2" type: "LRN" bottom: "pool2" top: "norm2" lrn_param { local_size: 5 alpha: 0.0001 beta: 0.75 } } layer { name: "conv3" type: "Convolution" bottom: "norm2" top: "conv3" convolution_param { num_output: 384 pad: 1 kernel_size: 3 group: 1 stride: 1 } } layer { name: "relu3" type: "ReLU" bottom: "conv3" top: "conv3" } layer { name: "conv4" type: "Convolution" bottom: "conv3" top: "conv4" convolution_param { num_output: 384 pad: 1 kernel_size: 3 group: 2 stride: 1 } } layer { name: "relu4" type: "ReLU" bottom: "conv4" top: "conv4" } layer { name: "conv5" type: "Convolution" bottom: "conv4" top: "conv5" convolution_param { num_output: 256 pad: 1 kernel_size: 3 group: 2 stride: 1 } } layer { name: "relu5" type: "ReLU" bottom: "conv5" top: "conv5" } layer { name: "pool5" type: "Pooling" bottom: "conv5" top: "pool5" pooling_param { pool: MAX kernel_size: 3 stride: 2 } } layer { name: "fc6" type: "Convolution" bottom: "pool5" top: "fc6" convolution_param { num_output: 4096 pad: 0 kernel_size: 6 group: 1 stride: 1 } } layer { name: "relu6" type: "ReLU" bottom: "fc6" top: "fc6" } layer { name: "drop6" type: "Dropout" bottom: "fc6" top: "fc6" dropout_param { dropout_ratio: 0.5 } } layer { name: "fc7" type: "Convolution" bottom: "fc6" top: "fc7" convolution_param { num_output: 4096 pad: 0 kernel_size: 1 group: 1 stride: 1 } } layer { name: "relu7" type: "ReLU" bottom: "fc7" top: "fc7" } layer { name: "drop7" type: "Dropout" bottom: "fc7" top: "fc7" dropout_param { dropout_ratio: 0.5 } } layer { name: "score_fr" type: "Convolution" bottom: "fc7" top: "score_fr" param { lr_mult: 1 decay_mult: 1 } param { lr_mult: 2 decay_mult: 0 } convolution_param { num_output: 21 pad: 0 kernel_size: 1 } } layer { name: "upscore" type: "Deconvolution" bottom: "score_fr" top: "upscore" param { lr_mult: 0 } convolution_param { num_output: 21 bias_term: false kernel_size: 63 stride: 32 } } layer { name: "score" type: "Crop" bottom: "upscore" bottom: "data" top: "score" crop_param { axis: 2 offset: 18 } } layer { name: "loss" type: "SoftmaxWithLoss" bottom: "score" bottom: "label" top: "loss" loss_param { ignore_label: 255 normalize: true } }

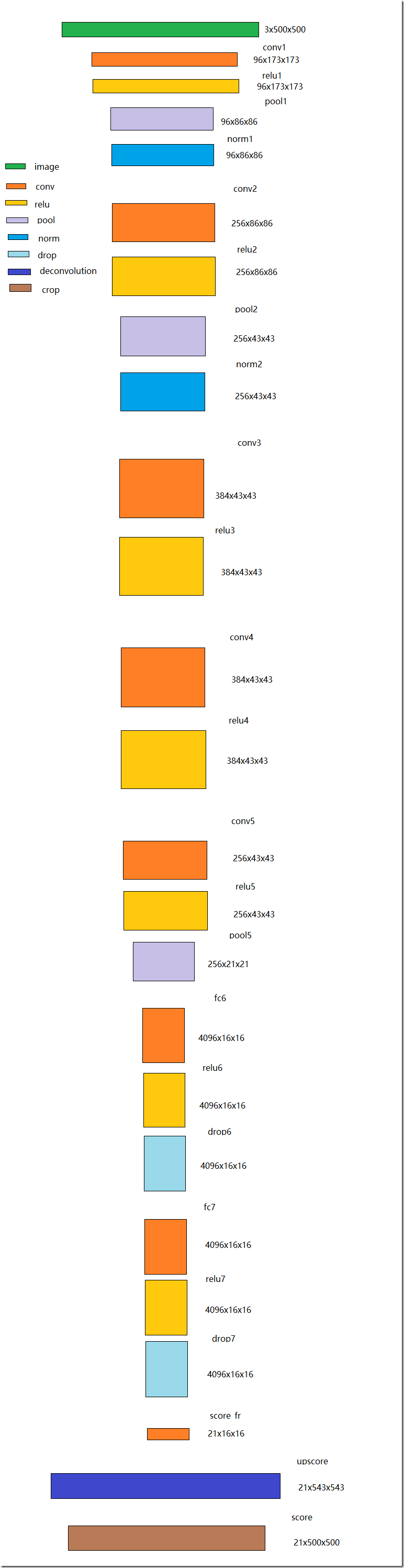

三、网络结构

假设输入的图片为500x500,

根据train.prototxt文件,可以得到上图的网络结构,该网络结构除了前五层的卷积层,也把后面的三层改为了卷积层,score_fr是卷积层的最后一层,也叫heatmap热图,热图就是我们最重要的高维特诊图,得到高维特征的heatmap之后,就是最重要的一步也是最后的一步,对原图像进行upsampling(即反卷积),把图像进行放大,得到原图像的大小。

四、损失函数

该网络的损失函数为SoftmaxWithLoss。首先进行softmax求解,求出每个像素点属于不同类别的概率,因为总共是分为21类,所以每个像素点对应21个概率值(输出通道数为21)。然后求解每个像素点所属实际类别概率的log值之和的平均,再取负数,可得到损失函数,参考如下:

end