Copyright notice: this is an original article by the author and may not be reproduced without the author's permission. https://blog.csdn.net/u012292754/article/details/85262064

1 Starting spark-shell

    [hadoop@node1 ~]$ spark-shell
    Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties
    Setting default log level to "WARN".
    To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).
    18/12/26 17:10:21 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable
    18/12/26 17:10:21 WARN SparkConf:
    SPARK_WORKER_INSTANCES was detected (set to '1').
    This is deprecated in Spark 1.0+.
    Please instead use:
     - ./spark-submit with --num-executors to specify the number of executors
     - Or set SPARK_EXECUTOR_INSTANCES
     - spark.executor.instances to configure the number of instances in the spark config.
    Spark context Web UI available at http://192.168.30.131:4040
    Spark context available as 'sc' (master = local[*], app id = local-1545815422338).
    Spark session available as 'spark'.
    Welcome to
          ____              __
         / __/__  ___ _____/ /__
        _\ \/ _ \/ _ `/ __/  '_/
       /___/ .__/\_,_/_/ /_/\_\   version 2.1.3
          /_/

    Using Scala version 2.11.8 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_181)
    Type in expressions to have them evaluated.
    Type :help for more information.

    scala>

1.1 sc

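SparkContext: the entry point of a Spark program. It encapsulates the information about the whole Spark runtime environment. In spark-shell it is already created and available as sc.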

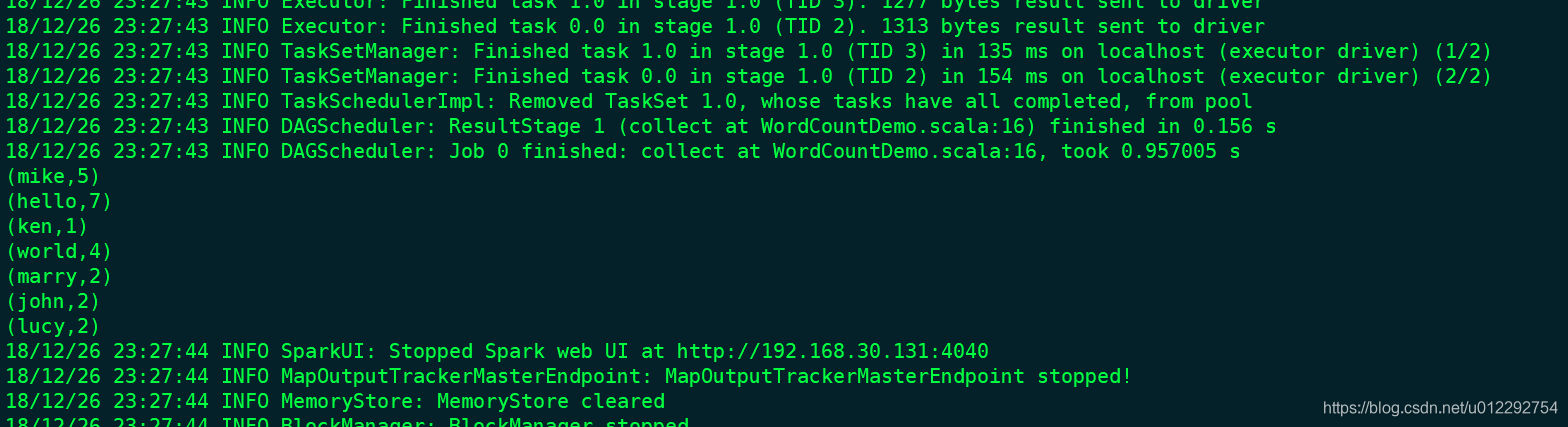

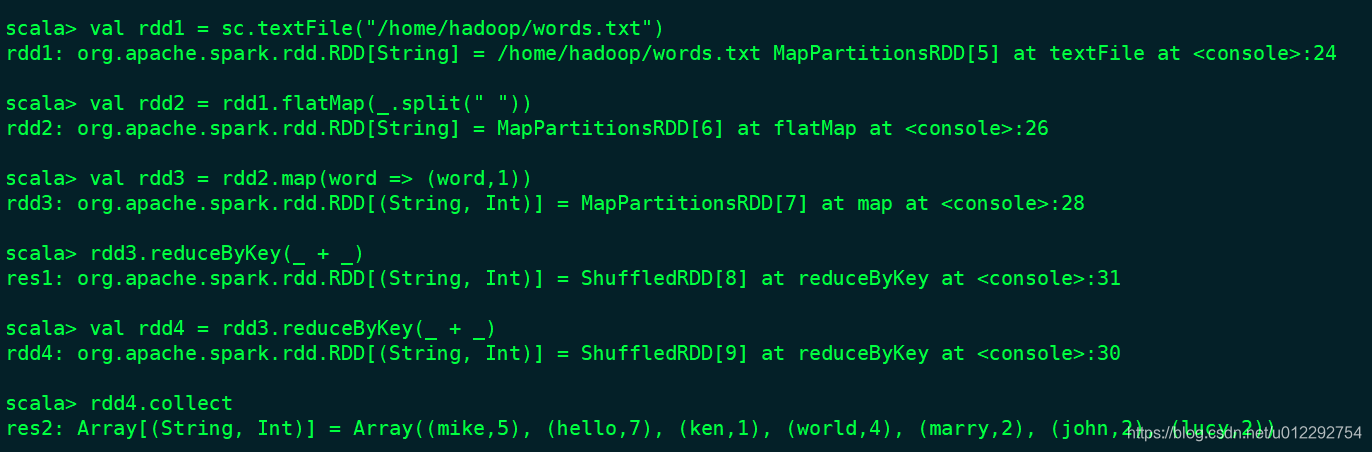
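To see what sc holds, a few of its read-only properties can be queried at the prompt. This is a minimal sketch, not a transcript of the screenshots above; it assumes it is pasted into the spark-shell session started earlier, where sc is already defined.

```scala
// Paste into spark-shell; `sc` is the SparkContext the shell created at startup.
println(sc.version)            // Spark version of the running context
println(sc.master)             // master URL the context is connected to
println(sc.appName)            // application name
println(sc.applicationId)      // the id the startup log reports as "app id"
println(sc.defaultParallelism) // default number of partitions for new RDDs
```

In the session shown above, the master and version should match the startup log (local[*] and 2.1.3).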

1.2 Counting words

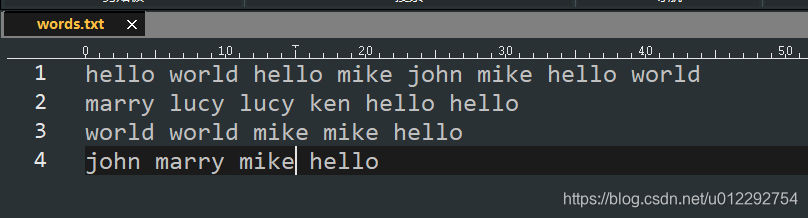

words.txt
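The commands in the screenshot are not reproduced here. As a rough sketch of a word count at the spark-shell prompt, the following uses a small in-memory sample instead of words.txt; the sample lines are made up for illustration. To read the real file, replace sc.parallelize(sample) with sc.textFile and the file's path.

```scala
// Paste into spark-shell; `sc` is provided by the shell.
// Hypothetical sample data standing in for the contents of words.txt.
val sample = Seq("hello world", "hello spark", "hello scala spark")

val lines  = sc.parallelize(sample)        // RDD[String], one element per line
val words  = lines.flatMap(_.split(" "))   // split each line into words
val pairs  = words.map(word => (word, 1))  // pair every word with a count of 1
val counts = pairs.reduceByKey(_ + _)      // sum the counts per word

// collect() brings the result to the driver; element order is not guaranteed,
// so the result is turned into a Map before it is used.
val result = counts.collect().toMap
result.foreach { case (word, n) => println(s"$word: $n") }
```

Nothing is computed until collect() is called: flatMap, map, and reduceByKey only describe the pipeline, and the action at the end triggers the job.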

1.3 Filtering words

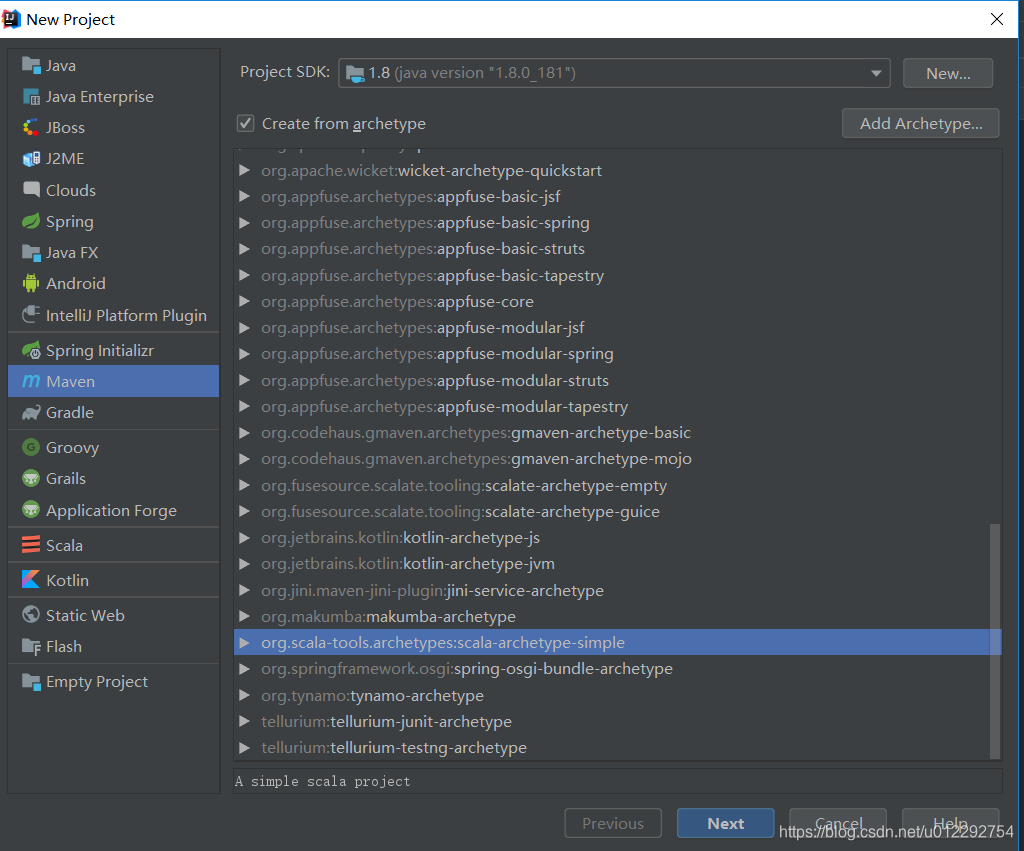

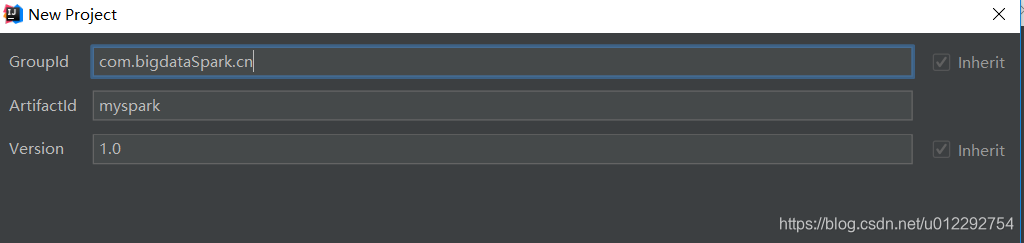

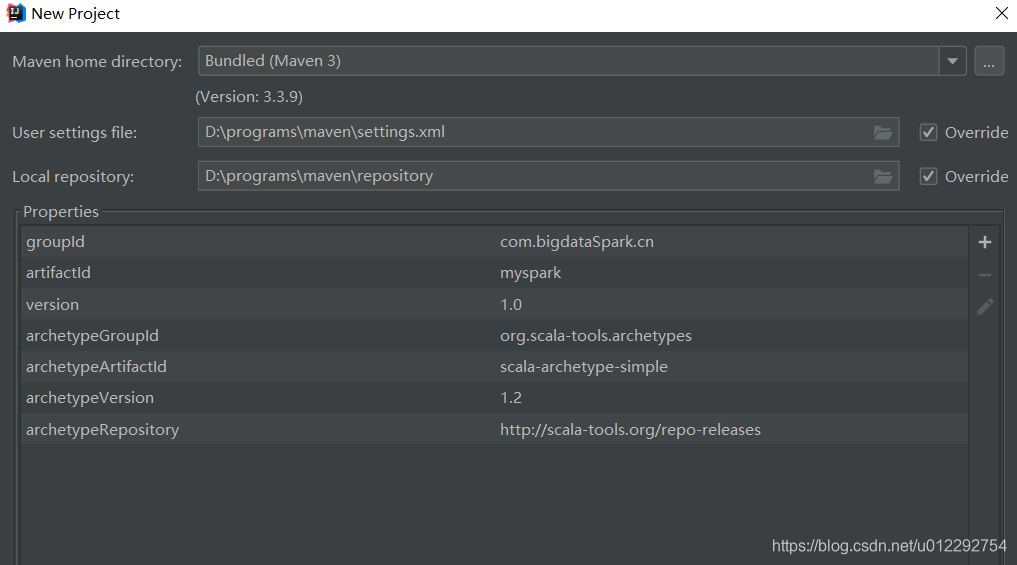
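The filtering steps in the screenshots are likewise not reproduced. A minimal sketch of filtering words with filter, again on made-up sample lines, might look like this; the prefix "s" is an arbitrary choice for the example.

```scala
// Paste into spark-shell; `sc` is provided by the shell.
// Hypothetical sample data standing in for the contents of words.txt.
val sampleLines = Seq("hello world", "hello spark", "hello scala spark")

val allWords = sc.parallelize(sampleLines).flatMap(_.split(" "))

// filter keeps only the elements for which the predicate returns true.
val sWords = allWords.filter(_.startsWith("s"))

// Count the remaining words, then sort the small result on the driver.
val sCounts = sWords.map((_, 1)).reduceByKey(_ + _).collect().sortBy(_._1)
sCounts.foreach(println)
```

Filtering before reduceByKey means fewer pairs have to be shuffled than if the full word count were computed first and filtered afterwards.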

2 Creating a Spark application with Maven in IDEA

2.1 Editing the POM file

- Set the Scala and Spark versions

    <properties>
        <scala.version>2.11.8</scala.version>
        <spark.version>2.2.0</spark.version>
    </properties>

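- Add the CDH repository (the repository element belongs inside the POM's repositories element)

    <!-- Add the Cloudera repository -->
    <repositories>
        <repository>
            <id>cloudera</id>
            <url>https://repository.cloudera.com/artifactory/cloudera-repos</url>
        </repository>
    </repositories>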

- Add the dependencies

    <dependencies>
        <dependency>
            <groupId>org.scala-lang</groupId>
            <artifactId>scala-library</artifactId>
            <version>${scala.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-core_2.11</artifactId>
            <version>${spark.version}</version>
        </dependency>
    </dependencies>

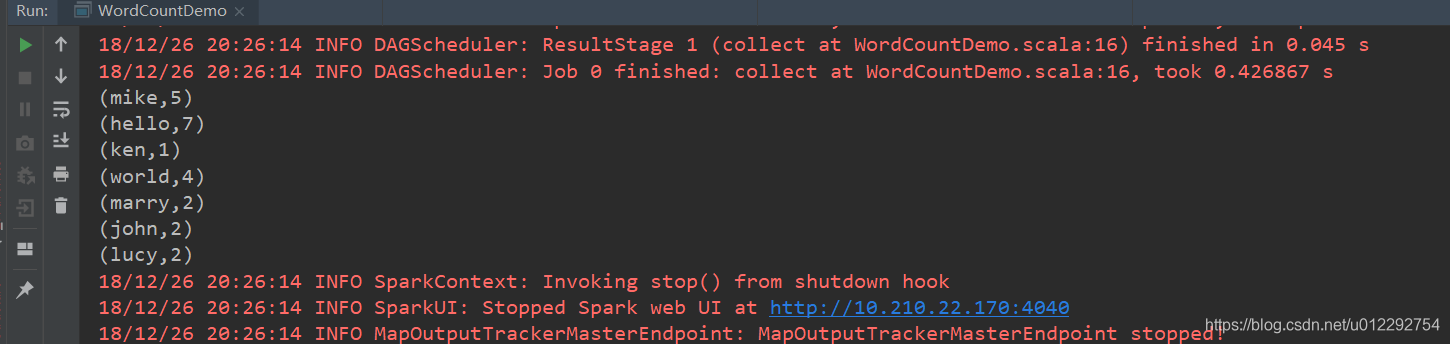

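2.2 Programming with the API

SparkContext: the main entry point to Spark functionality. It represents the connection to a Spark cluster and can create RDDs, accumulators, and broadcast variables. Only one SparkContext can be active per JVM.

SparkConf: the Spark configuration object. It sets the parameters of a Spark application as key-value pairs.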
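Since SparkConf is essentially a set of key-value pairs, it can be inspected without starting a context. The sketch below is illustrative only: the object name SparkConfDemo and the memory value are made up, and new SparkConf(false) is used so that no spark.* system properties are picked up.

```scala
import org.apache.spark.SparkConf

object SparkConfDemo {
  // Builds a configuration. setMaster and setAppName are shorthands for
  // setting the keys spark.master and spark.app.name.
  def build(): SparkConf =
    new SparkConf(false)                    // false: ignore spark.* system properties
      .setMaster("local[2]")
      .setAppName("SparkConfDemo")
      .set("spark.executor.memory", "1g")   // arbitrary example value

  def main(args: Array[String]): Unit = {
    val conf = build()
    println(conf.get("spark.master"))                 // local[2]
    println(conf.get("spark.app.name"))               // SparkConfDemo
    println(conf.contains("spark.executor.memory"))   // true
    println(conf.toDebugString)                       // every pair as key=value
  }
}
```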

    package com.bigdataSpark.cn

    import org.apache.spark.{SparkConf, SparkContext}

    object WordCountDemo {
      def main(args: Array[String]): Unit = {
        // Run locally with 2 threads
        val conf = new SparkConf().setMaster("local[2]").setAppName("WordCountDemo")
        val sc = new SparkContext(conf)

        val rdd1 = sc.textFile("d:/words.txt")   // read the file as an RDD of lines
        val rdd2 = rdd1.flatMap(_.split(" "))    // split each line into words
        val rdd3 = rdd2.map((_, 1))              // pair each word with a count of 1
        val rdd4 = rdd3.reduceByKey(_ + _)       // sum the counts per word

        val r = rdd4.collect()                   // bring the result to the driver
        r.foreach(println)

        sc.stop()
      }
    }
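The word count above only creates RDDs. The other two things a SparkContext can create, accumulators and broadcast variables, can be sketched on the same problem. Everything specific here is an assumption for illustration: the object name, the sample lines, and the stop-word set. longAccumulator requires Spark 2.0 or later.

```scala
import org.apache.spark.{SparkConf, SparkContext}

object SharedVariablesDemo {
  // Counts the words that are not stop words and reports how many lines were read.
  def run(sc: SparkContext, lines: Seq[String]): (Map[String, Int], Long) = {
    val stopWords = sc.broadcast(Set("the", "a"))    // read-only value shipped to executors
    val lineCount = sc.longAccumulator("lineCount")  // tasks add to it, the driver reads it

    val rdd = sc.parallelize(lines)
    // Update the accumulator inside an action so that each line is counted once.
    rdd.foreach(_ => lineCount.add(1))

    val counts = rdd
      .flatMap(_.split(" "))
      .filter(word => !stopWords.value.contains(word))
      .map((_, 1))
      .reduceByKey(_ + _)
      .collect()
      .toMap

    (counts, lineCount.sum)
  }

  def main(args: Array[String]): Unit = {
    val conf = new SparkConf().setMaster("local[2]").setAppName("SharedVariablesDemo")
    val sc = new SparkContext(conf)
    try {
      val (counts, n) = run(sc, Seq("the quick fox", "a quick dog"))
      println(s"lines read: $n")
      counts.foreach(println)
    } finally {
      sc.stop()   // only one SparkContext may be active per JVM, so always release it
    }
  }
}
```

The accumulator is updated in foreach rather than in a transformation because transformations may be re-executed, which could count a line more than once.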

2.3 Word count with the Java Spark API

    import org.apache.spark.SparkConf;
    import org.apache.spark.api.java.JavaPairRDD;
    import org.apache.spark.api.java.JavaRDD;
    import org.apache.spark.api.java.JavaSparkContext;
    import org.apache.spark.api.java.function.FlatMapFunction;
    import org.apache.spark.api.java.function.Function2;
    import org.apache.spark.api.java.function.PairFunction;
    import scala.Tuple2;

    import java.util.ArrayList;
    import java.util.Iterator;
    import java.util.List;

    public class WordCountJava {
        public static void main(String[] args) {
            SparkConf conf = new SparkConf().setMaster("local[2]").setAppName("WordCountJava");
            JavaSparkContext sc = new JavaSparkContext(conf);

            // Read the file as an RDD of lines
            JavaRDD<String> rdd1 = sc.textFile("d:/words.txt");

            // Split each line into words
            JavaRDD<String> rdd2 = rdd1.flatMap(new FlatMapFunction<String, String>() {
                public Iterator<String> call(String s) throws Exception {
                    List<String> list = new ArrayList<String>();
                    String[] arr = s.split(" ");
                    for (String ss : arr) {
                        list.add(ss);
                    }
                    return list.iterator();
                }
            });

            // Pair each word with a count of 1
            JavaPairRDD<String, Integer> rdd3 = rdd2.mapToPair(new PairFunction<String, String, Integer>() {
                public Tuple2<String, Integer> call(String s) throws Exception {
                    return new Tuple2<String, Integer>(s, 1);
                }
            });

            // Sum the counts per word
            JavaPairRDD<String, Integer> rdd4 = rdd3.reduceByKey(new Function2<Integer, Integer, Integer>() {
                public Integer call(Integer v1, Integer v2) throws Exception {
                    return v1 + v2;
                }
            });

            // Bring the result to the driver and print it
            List<Tuple2<String, Integer>> list = rdd4.collect();
            for (Tuple2<String, Integer> t : list) {
                System.out.println(t._1 + ":" + t._2);
            }

            // Release the context, as the Scala version does
            sc.stop();
        }
    }

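2.4 Packaging and running on the cluster

- Modify the Scala word count source so that the master, the app name, and the input path are no longer hard-coded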

    package com.bigdataSpark.cn

    import org.apache.spark.{SparkConf, SparkContext}

    object WordCountDemo {
      def main(args: Array[String]): Unit = {
        // The master and app name are no longer set here; they are supplied
        // by spark-submit (--master, --name).
        val conf = new SparkConf() //.setMaster("local[2]").setAppName("WordCountDemo")
        val sc = new SparkContext(conf)

        // The input path is taken from the first program argument
        val rdd1 = sc.textFile(args(0))
        val rdd2 = rdd1.flatMap(_.split(" "))
        val rdd3 = rdd2.map((_, 1))
        val rdd4 = rdd3.reduceByKey(_ + _)

        val r = rdd4.collect()
        r.foreach(println)

        sc.stop()
      }
    }
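Commenting the master and app name in and out between IDE runs and cluster runs is easy to forget. One possible alternative, shown here as a sketch rather than as the author's approach, is setIfMissing: values passed by spark-submit win, and the fallbacks apply only when nothing was supplied. The object name WordCountFlexible, the usage message, and the fallback values are assumptions.

```scala
import org.apache.spark.{SparkConf, SparkContext}

object WordCountFlexible {
  // new SparkConf() loads spark.* system properties, which is how the
  // options given to spark-submit (--master, --name) reach the application.
  def buildConf(): SparkConf =
    new SparkConf()
      .setIfMissing("spark.master", "local[2]")             // fallback for IDE runs
      .setIfMissing("spark.app.name", "WordCountFlexible")  // fallback app name

  def main(args: Array[String]): Unit = {
    // Fail with a readable message instead of an ArrayIndexOutOfBoundsException.
    if (args.length < 1) {
      System.err.println("Usage: WordCountFlexible <input path>")
      sys.exit(1)
    }
    val sc = new SparkContext(buildConf())
    try {
      sc.textFile(args(0))
        .flatMap(_.split(" "))
        .map((_, 1))
        .reduceByKey(_ + _)
        .collect()
        .foreach(println)
    } finally {
      sc.stop()
    }
  }
}
```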

- Then package the project with Maven and upload the jar to the cluster

The options of spark-submit:

    [hadoop@node1 ~]$ spark-submit --help
    Usage: spark-submit [options] <app jar | python file> [app arguments]
    Usage: spark-submit --kill [submission ID] --master [spark://...]
    Usage: spark-submit --status [submission ID] --master [spark://...]
    Usage: spark-submit run-example [options] example-class [example args]

    Options:
      --master MASTER_URL         spark://host:port, mesos://host:port, yarn, or local.
      --deploy-mode DEPLOY_MODE   Whether to launch the driver program locally ("client") or
                                  on one of the worker machines inside the cluster ("cluster")
                                  (Default: client).
      --class CLASS_NAME          Your application's main class (for Java / Scala apps).
      --name NAME                 A name of your application.
      --jars JARS                 Comma-separated list of local jars to include on the driver
                                  and executor classpaths.
      --packages                  Comma-separated list of maven coordinates of jars to include
                                  on the driver and executor classpaths. Will search the local
                                  maven repo, then maven central and any additional remote
                                  repositories given by --repositories. The format for the
                                  coordinates should be groupId:artifactId:version.
      --exclude-packages          Comma-separated list of groupId:artifactId, to exclude while
                                  resolving the dependencies provided in --packages to avoid
                                  dependency conflicts.
      --repositories              Comma-separated list of additional remote repositories to
                                  search for the maven coordinates given with --packages.
      --py-files PY_FILES         Comma-separated list of .zip, .egg, or .py files to place
                                  on the PYTHONPATH for Python apps.
      --files FILES               Comma-separated list of files to be placed in the working
                                  directory of each executor. File paths of these files
                                  in executors can be accessed via SparkFiles.get(fileName).

      --conf PROP=VALUE           Arbitrary Spark configuration property.
      --properties-file FILE      Path to a file from which to load extra properties. If not
                                  specified, this will look for conf/spark-defaults.conf.

      --driver-memory MEM         Memory for driver (e.g. 1000M, 2G) (Default: 1024M).
      --driver-java-options       Extra Java options to pass to the driver.
      --driver-library-path       Extra library path entries to pass to the driver.
      --driver-class-path         Extra class path entries to pass to the driver. Note that
                                  jars added with --jars are automatically included in the
                                  classpath.

      --executor-memory MEM       Memory per executor (e.g. 1000M, 2G) (Default: 1G).

      --proxy-user NAME           User to impersonate when submitting the application.
                                  This argument does not work with --principal / --keytab.

      --help, -h                  Show this help message and exit.
      --verbose, -v               Print additional debug output.
      --version,                  Print the version of current Spark.

     Spark standalone with cluster deploy mode only:
      --driver-cores NUM          Cores for driver (Default: 1).

     Spark standalone or Mesos with cluster deploy mode only:
      --supervise                 If given, restarts the driver on failure.
      --kill SUBMISSION_ID        If given, kills the driver specified.
      --status SUBMISSION_ID      If given, requests the status of the driver specified.

     Spark standalone and Mesos only:
      --total-executor-cores NUM  Total cores for all executors.

     Spark standalone and YARN only:
      --executor-cores NUM        Number of cores per executor. (Default: 1 in YARN mode,
                                  or all available cores on the worker in standalone mode)

     YARN-only:
      --driver-cores NUM          Number of cores used by the driver, only in cluster mode
                                  (Default: 1).
      --queue QUEUE_NAME          The YARN queue to submit to (Default: "default").
      --num-executors NUM         Number of executors to launch (Default: 2).
                                  If dynamic allocation is enabled, the initial number of
                                  executors will be at least NUM.
      --archives ARCHIVES         Comma separated list of archives to be extracted into the
                                  working directory of each executor.
      --principal PRINCIPAL       Principal to be used to login to KDC, while running on
                                  secure HDFS.
      --keytab KEYTAB             The full path to the file that contains the keytab for the
                                  principal specified above. This keytab will be copied to
                                  the node running the Application Master via the Secure
                                  Distributed Cache, for renewing the login tickets and the
                                  delegation tokens periodically.

    [hadoop@node1 ~]$

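Submit the job. The jar path is followed by the program argument, the input file, which the program reads as args(0). Note that --master local[2] still runs the job locally on the node where it is submitted; to run it on the cluster, pass the cluster's master URL instead (spark://host:port, mesos://host:port, or yarn, per the --master option).

    spark-submit --master local[2] --name WordCountScala --class com.bigdataSpark.cn.WordCountDemo /home/hadoop/myspark-1.0.jar /home/hadoop/words.txt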