Copyright notice: this is an original article by the blogger and may not be reproduced without the blogger's permission. https://blog.csdn.net/u012292754/article/details/85338559

1 Spark Streaming

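- An extension of Spark Core for real-time data processing; it is scalable, high-throughput, and fault-tolerant.

- Internally, Spark receives a live data stream, divides it into batches, and processes each batch, so results are produced one batch at a time.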
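The batching idea can be sketched without Spark at all. The plain-Java example below is only an illustration under stated assumptions: the class `MicroBatchSketch`, the method `toBatches`, and the sample arrival times are hypothetical and are not part of the Spark API. It assigns each record to a fixed-length interval based on its arrival time, which is roughly what a batch interval does.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.SortedMap;
import java.util.TreeMap;

// Plain-Java illustration only; no Spark classes are used.
final class MicroBatchSketch {

    // Assigns each record to the batch whose interval contains its arrival time.
    // Batch k covers arrival times in [k * batchMs, (k + 1) * batchMs).
    // Intervals that receive no records are simply absent from the result.
    static SortedMap<Long, List<String>> toBatches(long[] arrivalMs, String[] records, long batchMs) {
        SortedMap<Long, List<String>> batches = new TreeMap<>();
        for (int i = 0; i < records.length; i++) {
            long batchIndex = arrivalMs[i] / batchMs;
            batches.computeIfAbsent(batchIndex, k -> new ArrayList<>()).add(records[i]);
        }
        return batches;
    }

    public static void main(String[] args) {
        // Hypothetical arrival times (milliseconds since the stream started).
        long[] arrivalMs = {0L, 4_000L, 10_000L, 12_000L, 25_000L};
        String[] records = {"a b", "b c", "c", "d a", "e"};
        // A 10-second batch interval groups the five records into three batches.
        toBatches(arrivalMs, records, 10_000L)
            .forEach((index, batch) -> System.out.println("batch " + index + ": " + batch));
    }
}
```

Each batch can then be processed independently of the others, which is why results appear once per batch rather than once per record.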

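1.1 Discretized Stream (DStream)

- A DStream is created either from an input source such as Kafka or Flume, or by applying high-level transformations to other DStreams.

- Internally, a DStream is represented as a sequence of RDDs.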
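To make the "sequence of RDDs" picture concrete, here is a minimal plain-Java sketch. It is a model, not Spark code: a stream is represented as a `List` of batches, and the names `DStreamSketch`, `transform`, and `splitWords` are assumptions made for illustration. A transformation produces a new stream by applying the same function to every batch.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.function.Function;

// Plain-Java model: each inner list stands in for the RDD of one batch.
final class DStreamSketch {

    // Applies one function to every batch and returns the resulting sequence of batches.
    static <T, R> List<List<R>> transform(List<List<T>> stream, Function<List<T>, List<R>> perBatch) {
        List<List<R>> out = new ArrayList<>();
        for (List<T> batch : stream) {
            out.add(perBatch.apply(batch));
        }
        return out;
    }

    // Per-batch function: split every line on single spaces.
    static List<String> splitWords(List<String> lines) {
        List<String> words = new ArrayList<>();
        for (String line : lines) {
            words.addAll(Arrays.asList(line.split(" ")));
        }
        return words;
    }

    public static void main(String[] args) {
        // Two batches of input lines.
        List<List<String>> lines = Arrays.asList(
            Arrays.asList("hello spark", "hello"),
            Arrays.asList("streaming"));
        List<List<String>> words = transform(lines, DStreamSketch::splitWords);
        System.out.println(words); // prints [[hello, spark, hello], [streaming]]
    }
}
```

The output stream has the same number of batches as the input stream; only the contents of each batch change.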

2 Spark Streaming test examples

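- Add the Spark Streaming dependency to the POM:

      <!-- The Scala suffix (_2.11) must match the Scala version of the other Spark artifacts -->
      <dependency>
        <groupId>org.apache.spark</groupId>
        <artifactId>spark-streaming_2.11</artifactId>
        <version>${spark.version}</version>
      </dependency>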

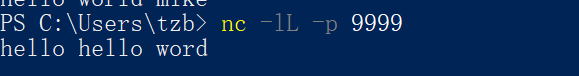

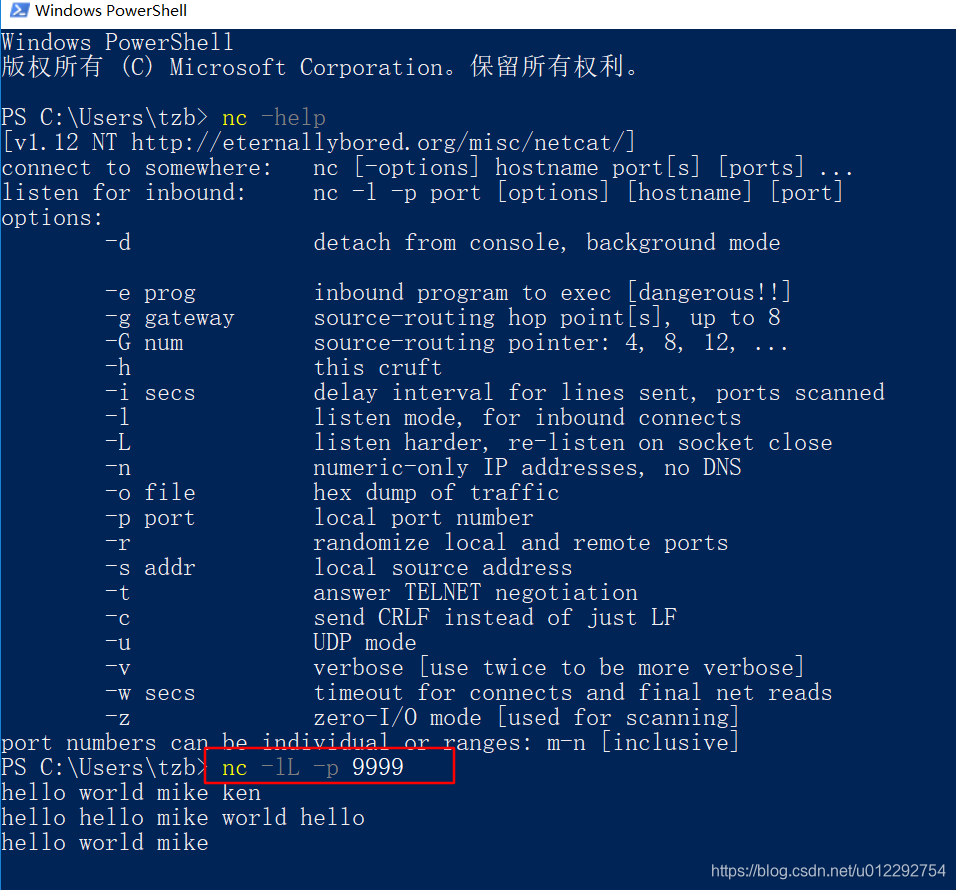

2.1 Streaming word count in Scala

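    package sparkstreaming

    import org.apache.spark.SparkConf
    import org.apache.spark.streaming.{Seconds, StreamingContext}

    object StreamingWordCount {
      def main(args: Array[String]): Unit = {
        // local[n] needs n > 1: the socket receiver occupies one thread
        val conf = new SparkConf().setMaster("local[4]").setAppName("NetWordCount")
        // Batch interval of 10 seconds
        val ssc = new StreamingContext(conf, Seconds(10))

        // Read lines of text from a TCP socket (for testing, start a server with `nc -lk 9999`)
        val lines = ssc.socketTextStream("localhost", 9999)
        val words = lines.flatMap(_.split(" "))
        val pairs = words.map((_, 1))
        // Sum the counts of each word within the current batch
        val count = pairs.reduceByKey(_ + _)
        count.print()

        // Nothing runs until the context is started
        ssc.start()
        ssc.awaitTermination()
      }
    }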

2.2 Streaming word count in Java

    import org.apache.spark.SparkConf;
    import org.apache.spark.api.java.function.FlatMapFunction;
    import org.apache.spark.api.java.function.Function2;
    import org.apache.spark.api.java.function.PairFunction;
    import org.apache.spark.streaming.Durations;
    import org.apache.spark.streaming.api.java.JavaDStream;
    import org.apache.spark.streaming.api.java.JavaPairDStream;
    import org.apache.spark.streaming.api.java.JavaReceiverInputDStream;
    import org.apache.spark.streaming.api.java.JavaStreamingContext;
    import scala.Tuple2;

    import java.util.Arrays;
    import java.util.Iterator;

    public class JavaStreamingWordcount {
        public static void main(String[] args) throws InterruptedException {
            // local[2]: one thread for the socket receiver, one for processing
            SparkConf conf = new SparkConf().setAppName("JavaStreamingWordcount").setMaster("local[2]");
            // Batch interval of 5 seconds
            JavaStreamingContext jsc = new JavaStreamingContext(conf, Durations.seconds(5));

            // Read lines of text from a TCP socket
            JavaReceiverInputDStream<String> sock = jsc.socketTextStream("localhost", 9999);

            // Split each line into words
            JavaDStream<String> wordsDS = sock.flatMap(new FlatMapFunction<String, String>() {
                @Override
                public Iterator<String> call(String str) throws Exception {
                    return Arrays.asList(str.split(" ")).iterator();
                }
            });

            // Map each word to a (word, 1) pair
            JavaPairDStream<String, Integer> pairDS = wordsDS.mapToPair(new PairFunction<String, String, Integer>() {
                @Override
                public Tuple2<String, Integer> call(String s) throws Exception {
                    return new Tuple2<String, Integer>(s, 1);
                }
            });

            // Sum the counts of each word within the current batch
            JavaPairDStream<String, Integer> countDS = pairDS.reduceByKey(new Function2<Integer, Integer, Integer>() {
                @Override
                public Integer call(Integer v1, Integer v2) throws Exception {
                    return v1 + v2;
                }
            });

            countDS.print();

            // Nothing runs until the context is started
            jsc.start();
            jsc.awaitTermination();
        }
    }
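One point that is easy to miss when running either word-count program: `reduceByKey` is applied to each batch separately, so the printed counts start from zero in every batch instead of accumulating over the life of the stream. The plain-Java sketch below mimics the split, pair, and reduce steps for a single batch; the class `BatchWordCountSketch`, the method `countBatch`, and the sample lines are hypothetical names and data used only for illustration.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java illustration of the per-batch word count; no Spark classes are used.
final class BatchWordCountSketch {

    // Mirrors flatMap -> mapToPair -> reduceByKey for the lines of one batch.
    static Map<String, Integer> countBatch(List<String> lines) {
        Map<String, Integer> counts = new TreeMap<>();
        for (String line : lines) {
            for (String word : line.split(" ")) {
                // Equivalent to emitting (word, 1) and summing the values per key.
                counts.merge(word, 1, Integer::sum);
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        // First batch: "hello" appears twice.
        System.out.println(countBatch(Arrays.asList("hello spark", "hello"))); // prints {hello=2, spark=1}
        // Second batch: the count for "hello" starts again from zero.
        System.out.println(countBatch(Arrays.asList("hello")));                // prints {hello=1}
    }
}
```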