I. The Scrapy crawler framework

pip install pypiwin32

1) Create the crawler project

scrapy startproject Project # create the crawler project

You can start your first spider with:

cd Project

scrapy genspider example example.com

cd Project # enter the project directory

scrapy genspider chouti chouti.com # create the spider

2) Run the spider

    import scrapy


    class ChoutiSpider(scrapy.Spider):
        name = 'chouti'
        allowed_domains = ['chouti.com']
        # start_urls = ['http://dig.chouti.com/']
        # One start URL must be active, otherwise parse() is never called
        start_urls = ['http://www.autohome.com.cn/news']

        def parse(self, response):
            # response is the object returned for the fetched page
            print(response)      # <200 https://www.autohome.com.cn/news/>
            print(response.url)  # https://www.autohome.com.cn/news/

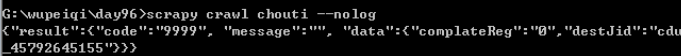

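(debug mode)

    scrapy --help                  # show command-line help
    pip install pypiwin32          # dependency needed to run spiders on Windows
    scrapy crawl chouti            # run the spider; the log lists the middlewares it passes through
    # the usual way to run a spider
    scrapy crawl chouti --nolog    # run the spider without log output

3) Fix the console output encoding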

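    import io
    import sys

    import scrapy

    # Re-wrap stdout so that Chinese text prints correctly on a GBK console
    sys.stdout = io.TextIOWrapper(sys.stdout.buffer, encoding='gb18030')


    class ChoutiSpider(scrapy.Spider):
        ...  # class attributes as in the previous example

        def parse(self, response):
            # response.body is bytes; decode it before printing
            content = str(response.body, encoding='utf-8')
            print(content)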

4) Finding tags: from scrapy.selector import Selector (older tutorials also import HtmlXPathSelector, which is deprecated and not needed here)

    import scrapy
    from scrapy.selector import Selector


    class ChoutiSpider(scrapy.Spider):
        name = 'chouti'
        allowed_domains = ['chouti.com']
        # The XPath expressions below target the chouti.com markup
        start_urls = ['http://dig.chouti.com/']
        # start_urls = ['http://www.autohome.com.cn/news']

        def parse(self, response):
            '''
            # response is the object returned for the fetched page
            # print(response)       # <200 https://www.autohome.com.cn/news/>
            # URL that was fetched
            # print(response.url)   # https://www.autohome.com.cn/news/
            # Page source as text
            # print(response.text)
            # Page source as bytes
            print(response.body)
            '''
            # Option 1: every a tag in the whole document
            # hax = Selector(response=response).xpath('//a')  # list of selector objects
            # for i in hax:
            #     print(i)                                     # a selector object

            # Option 2: every div with id="content-list"
            # hax = Selector(response=response).xpath('//div[@id="content-list"]').extract()  # strings, not selector objects

            # Option 3: every div with id="content-list", then its direct children (/)
            # hxs = Selector(response=response).xpath('//div[@id="content-list"]/div[@class="item"]').extract()  # selectors converted to strings
            # for i in hxs:
            #     print(i)

            # Option 4: the same query, kept as selector objects so each item can be queried further
            hxs = Selector(response=response).xpath('//div[@id="content-list"]/div[@class="item"]')
            for obj in hxs:
                # .//a searches every a tag below the current node
                # extract() would return a list, which has no strip(); take the first match instead
                a = obj.xpath('.//a[@class="show-content"]/text()').extract_first()
                if a:
                    print(a.strip())  # strip surrounding whitespace

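XPath lookup summary

    //                     among all descendants
    .//                    among the descendants of the current object
    /                      direct children
    /div                   div tags among the children
    /div[@id="i1"]         div tags among the children with id="i1"
    obj.extract()          convert every object in the list to a string => list
    obj.extract_first()    convert to strings and return the first element of the list
    //div/text()           the text of a tag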

hax = Selector(response=response).xpath('//div[@id="dig_lepage"]//a/text()') # get the text content

hax = Selector(response=response).xpath('//div[@id="dig_lepage"]//a/@href') # get an attribute value

# starts-with(@href, "/all/hot/recent/"): match an attribute by prefix

hax = Selector(response=response).xpath('//a[starts-with(@href, "/all/hot/recent/")]/@href').extract()

# Match with a regular expression (raw string, so that \d reaches the XPath engine unchanged)

hxs2 = Selector(response=response).xpath(r'//a[re:test(@href, "/all/hot/recent/\d+")]/@href').extract()
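The `re:test` predicate keeps an element when the pattern matches anywhere in the attribute value. As a rough illustration outside Scrapy, the same filter can be sketched with the standard `re` module; the `hrefs` list and the `page_links` helper are made up for this example.

```python
import re

# Hypothetical href values, invented for illustration only
hrefs = [
    "/all/hot/recent/1",
    "/all/hot/recent/25",
    "/all/hot/recent/",   # no page number, so it should be rejected
    "/user/login",
]

# Same pattern as in the XPath expression: the prefix followed by at least one digit
PAGE_LINK = re.compile(r"/all/hot/recent/\d+")


def page_links(candidates):
    """Keep only the hrefs in which the pattern matches somewhere."""
    return [href for href in candidates if PAGE_LINK.search(href)]


matched = page_links(hrefs)
print(matched)
```

Compared with `starts-with`, the regular expression also requires a page number after the prefix, so the bare `/all/hot/recent/` link is dropped.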

print(response.meta) # the 'depth' key holds the current crawl depth

5.1) Collect all page links on the current page, i.e. the href attributes of the a tags

    import scrapy
    from scrapy.selector import Selector


    class ChoutiSpider(scrapy.Spider):
        name = 'chouti'
        allowed_domains = ['chouti.com']
        # The XPath expression below targets the chouti.com markup
        start_urls = ['http://dig.chouti.com/']
        # start_urls = ['http://www.autohome.com.cn/news']

        visited_urls = set()

        def parse(self, response):
            # Collect every pagination link on the current page
            '''
            hax = Selector(response=response).xpath('//div[@id="dig_lepage"]//a/@href').extract()
            for item in hax:
                print(item)  # may contain duplicate pages
            '''
            hax = Selector(response=response).xpath('//div[@id="dig_lepage"]//a/@href').extract()
            for item in hax:
                if item in self.visited_urls:
                    print('already seen')
                else:
                    self.visited_urls.add(item)
                    print(item)

Store an MD5 hash of each URL instead of the URL itself

    import hashlib

    import scrapy
    from scrapy.selector import Selector


    class ChoutiSpider(scrapy.Spider):
        name = 'chouti'
        allowed_domains = ['chouti.com']
        # The XPath expression below targets the chouti.com markup
        start_urls = ['http://dig.chouti.com/']
        # start_urls = ['http://www.autohome.com.cn/news']

        visited_urls = set()

        def parse(self, response):
            hax = Selector(response=response).xpath('//div[@id="dig_lepage"]//a/@href').extract()
            for url in hax:
                md5_url = self.md5(url)
                # Compare the hash, because the set stores hashes rather than raw URLs
                if md5_url in self.visited_urls:
                    print('already seen')
                else:
                    self.visited_urls.add(md5_url)
                    print(url)

        def md5(self, url):
            obj = hashlib.md5()
            obj.update(bytes(url, encoding='utf-8'))
            return obj.hexdigest()
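The point of hashing is that every entry in the visited set has the same short length, however long the URL is. A minimal standalone sketch of that idea follows; the names `url_fingerprint` and `VisitedUrls` are hypothetical and not part of Scrapy.

```python
import hashlib


def url_fingerprint(url):
    """Return the 32-character hexadecimal MD5 digest of a URL."""
    return hashlib.md5(url.encode("utf-8")).hexdigest()


class VisitedUrls:
    """Set of URL fingerprints; the lookup and the insert use the same key."""

    def __init__(self):
        self._seen = set()

    def add_if_new(self, url):
        """Record the URL and return True, or return False if it was seen before."""
        fingerprint = url_fingerprint(url)
        if fingerprint in self._seen:
            return False
        self._seen.add(fingerprint)
        return True


visited = VisitedUrls()
results = [
    visited.add_if_new(u)
    for u in ["/all/hot/recent/1", "/all/hot/recent/2", "/all/hot/recent/1"]
]
print(results)
```

Keeping the check and the insert inside one method avoids the easy mistake of testing the raw URL against a set that only contains hashes.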

5.2) Collect every page of the site

    # -*- coding: utf-8 -*-
    import hashlib
    import io
    import sys

    import scrapy
    from scrapy.http import Request
    from scrapy.selector import Selector

    # Re-wrap stdout so that Chinese text prints correctly on a GBK console
    sys.stdout = io.TextIOWrapper(sys.stdout.buffer, encoding='gb18030')


    class ChoutiSpider(scrapy.Spider):
        name = 'chouti'
        allowed_domains = ['chouti.com']
        # The XPath expression below targets the chouti.com markup
        start_urls = ['http://dig.chouti.com/']
        # start_urls = ['http://www.autohome.com.cn/news']

        visited_urls = set()

        def parse(self, response):
            hax = Selector(response=response).xpath('//a[starts-with(@href, "/all/hot/recent/")]/@href').extract()
            for url in hax:
                md5_url = self.md5(url)
                # Compare the hash, because the set stores hashes rather than raw URLs
                if md5_url in self.visited_urls:
                    pass
                else:
                    print(url)
                    self.visited_urls.add(md5_url)
                    url = "http://dig.chouti.com%s" % url
                    # Hand the new URL to the scheduler; its response comes back to parse()
                    yield Request(url=url, callback=self.parse)

        def md5(self, url):
            obj = hashlib.md5()
            obj.update(bytes(url, encoding='utf-8'))
            return obj.hexdigest()
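Building the absolute URL by string formatting only works when every href starts with `/`. As a hedged alternative, the standard library's `urljoin` resolves relative and absolute hrefs alike (Scrapy responses also offer `response.urljoin(href)` for the same purpose). The href values below are invented for illustration.

```python
from urllib.parse import urljoin

base = "http://dig.chouti.com/"

# Hypothetical href values: root-relative, relative, and already absolute
hrefs = [
    "/all/hot/recent/2",
    "all/hot/recent/3",
    "http://dig.chouti.com/all/hot/recent/4",
]

# urljoin resolves each href against the base URL
absolute = [urljoin(base, href) for href in hrefs]
for url in absolute:
    print(url)
```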

5.3) Limit the crawl depth, i.e. cap the number of recursive levels instead of fetching every page

    # Append to the end of settings.py
    DEPTH_LIMIT = 1
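To see what a depth limit does, here is a small simulation that does not use Scrapy at all. It assumes the start page has depth 0, every followed link adds 1, and links deeper than the limit are dropped; the `LINKS` graph and the `crawl` function are hypothetical.

```python
from collections import deque

# Hypothetical link graph: page -> links found on that page
LINKS = {
    "/": ["/p1", "/p2"],
    "/p1": ["/p3"],
    "/p2": ["/p3", "/"],
    "/p3": ["/p4"],
    "/p4": [],
}


def crawl(start, depth_limit):
    """Return (url, depth) pairs in fetch order; depth_limit=0 means no limit."""
    seen = {start}
    queue = deque([(start, 0)])
    fetched = []
    while queue:
        url, depth = queue.popleft()
        fetched.append((url, depth))
        for link in LINKS.get(url, []):
            next_depth = depth + 1
            if depth_limit and next_depth > depth_limit:
                continue  # too deep: drop the link
            if link not in seen:
                seen.add(link)
                queue.append((link, next_depth))
    return fetched


print(crawl("/", depth_limit=1))
print(crawl("/", depth_limit=2))
```

With a limit of 1 only the start page and the pages it links to are fetched; raising the limit to 2 adds `/p3` but still leaves out `/p4`.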

6) Saving data

Uncomment the pipeline entry in settings.py

    ITEM_PIPELINES = {
        'Project.pipelines.ProjectPipeline': 300,
    }

Define the fields of the item class to be saved

    import scrapy


    class ChoutiItem(scrapy.Item):
        # define the fields for your item here like:
        # name = scrapy.Field()
        title = scrapy.Field()
        href = scrapy.Field()

Pass the extracted objects to the pipelines for persistent storage

    def parse(self, response):
        # List of selector objects, one per news item
        hxs1 = Selector(response=response).xpath('//div[@id="content-list"]/div[@class="item"]')
        for obj in hxs1:
            title = obj.xpath('.//a[@class="show-content"]/text()').extract_first().strip()
            href = obj.xpath('.//a[@class="show-content"]/@href').extract_first().strip()
            item_obj = ChoutiItem(title=title, href=href)
            # Yielding the item hands it to the pipelines
            yield item_obj

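6.1) Write to a file

    class ProjectPipeline(object):

        def process_item(self, item, spider):
            print(spider, item)
            # ChoutiItem defines 'title' and 'href'; there is no 'item' field
            tpl = "%s\n%s\n\n" % (item['title'], item['href'])
            # Append mode keeps earlier items; the with block closes the file
            with open('news.json', 'a', encoding='utf-8') as f:
                f.write(tpl)
            # Return the item so that any later pipeline also receives it
            return item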
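The pipeline above writes plain text into a file named `news.json`. If real JSON output is wanted, one option is to write one JSON object per line. The sketch below is a standalone variation, not the original author's code: `JsonLinesPipeline` is a hypothetical name, plain dicts stand in for Scrapy items, and a temporary directory is used so the example does not touch the project folder.

```python
import json
import os
import tempfile


class JsonLinesPipeline:
    """Append each item to a file as one JSON object per line."""

    def __init__(self, path):
        self.path = path

    def process_item(self, item, spider):
        line = json.dumps(dict(item), ensure_ascii=False)  # keep Chinese text readable
        with open(self.path, "a", encoding="utf-8") as f:
            f.write(line + "\n")
        return item  # pass the item on unchanged


# Usage with made-up items
path = os.path.join(tempfile.mkdtemp(), "news.jsonl")
pipeline = JsonLinesPipeline(path)
pipeline.process_item({"title": "示例", "href": "http://example.com/1"}, spider=None)
pipeline.process_item({"title": "second", "href": "http://example.com/2"}, spider=None)

with open(path, encoding="utf-8") as f:
    rows = [json.loads(line) for line in f]
print(rows)
```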

7) Summary

    Commands:
        scrapy startproject xxx
        cd xxx
        scrapy genspider name name.com
        scrapy crawl name

    Writing the spider:
        a. name is required
        b. start_urls: the initial URLs
        c. allowed_domains = ["chouti.com"]: the domains the spider may follow
        d. Override start_requests to choose the callback for the initial requests
            def start_requests(self):
                for url in self.start_urls:
                    yield Request(url, callback=self.parse1)
        e. The response
            response.url
            response.text
            response.body
            response.meta = {'depth': <crawl depth>}
        f. Extracting data
            Selector(response=response).xpath()
                //div
                //div[@id="i1"]
                //div[starts-with(@id,"i1")]
                //div[re:test(@id,"i1")]
                //div/a
                # obj.xpath('./')
                obj.xpath('.//')
                //div/a/text()
                //div/a/@href
            Selector().extract()
            Selector().extract_first()
                //a[@id]
                //a/@id
        g. yield Request(url='', callback=self.xx)
        h. yield Item(name='xx', title='xxx')
        i. pipeline
            class Foo:
                def process_item(self, item, spider):
                    ....
            ITEM_PIPELINES = {
                "xx.xx.xxx.Foo1": 300,
                "xx.xx.xxx.Foo2": 400,
                # lower values run first, so Foo1 runs before Foo2
            }

II. Additional Scrapy topics

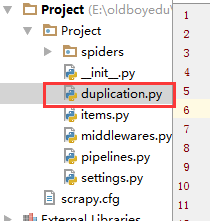

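from scrapy.dupefilters import RFPDupeFilter # read the source of the built-in URL de-duplication filter, then write your own

1) A custom class for URL de-duplication and for how seen URLs are stored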

    # The numbered comments (# 1 to # 5) mark the order in which the methods are called
    class RepeatFilter(object):

        def __init__(self):  # 2
            self.visited_set = set()

        @classmethod
        def from_settings(cls, settings):  # 1
            return cls()

        def request_seen(self, request):  # 4
            # True means "already seen", so the request is filtered out
            if request.url in self.visited_set:
                return True
            self.visited_set.add(request.url)
            return False

        def open(self):  # can return deferred  # 3
            # print('open')
            pass

        def close(self, reason):  # can return a deferred  # 5
            # print('close')
            pass

        def log(self, request, spider):  # log that a request has been filtered
            # print('log....')
            pass
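The filter can be exercised without running a crawl. The sketch below repeats only the two methods that matter for de-duplication and uses `types.SimpleNamespace` objects as stand-ins for Scrapy requests; the URLs are examples.

```python
from types import SimpleNamespace


class RepeatFilter:
    """Trimmed copy of the filter: only the parts needed for de-duplication."""

    def __init__(self):
        self.visited_set = set()

    @classmethod
    def from_settings(cls, settings):
        return cls()

    def request_seen(self, request):
        if request.url in self.visited_set:
            return True
        self.visited_set.add(request.url)
        return False


dupefilter = RepeatFilter.from_settings(settings=None)
urls = [
    "http://dig.chouti.com/all/hot/recent/1",
    "http://dig.chouti.com/all/hot/recent/2",
    "http://dig.chouti.com/all/hot/recent/1",  # repeat of the first URL
]
# SimpleNamespace stands in for a request; only the .url attribute is used
seen_flags = [dupefilter.request_seen(SimpleNamespace(url=u)) for u in urls]
print(seen_flags)
```

Note that this compares URLs as exact strings, so `?a=1&b=2` and `?b=2&a=1` count as different pages, whereas the built-in `RFPDupeFilter` works on a normalized request fingerprint.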

Reference the custom class in settings.py

    DUPEFILTER_CLASS = "day96.duplication.RepeatFilter"        # the custom filter
    # DUPEFILTER_CLASS = "scrapy.dupefilters.RFPDupeFilter"    # the filter built into Scrapy

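The spider file only yields requests with a callback; the visited-URL bookkeeping no longer lives in the spider

    import scrapy
    from scrapy.http import Request
    from scrapy.selector import Selector


    class ChoutiSpider(scrapy.Spider):
        name = 'chouti'
        allowed_domains = ['chouti.com']
        # The XPath expression below targets the chouti.com markup
        start_urls = ['http://dig.chouti.com/']
        # start_urls = ['http://www.autohome.com.cn/news']

        def parse(self, response):
            hax2 = Selector(response=response).xpath('//a[starts-with(@href, "/all/hot/recent/")]/@href').extract()
            for url in hax2:
                url = "http://dig.chouti.com%s" % url
                yield Request(url=url, callback=self.parse)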

2.1) More on pipelines: persistent storage with a clear division of responsibilities

    class ProjectPipeline(object):

        def __init__(self, conn_str):
            # Initialize the pipeline's data
            self.conn_str = conn_str

        @classmethod
        def from_crawler(cls, crawler):
            """
            Called once at start-up to create the pipeline object; reads settings.py
            :param crawler:
            :return:
            """
            conn_str = crawler.settings.get('DB')
            return cls(conn_str)

        def open_spider(self, spider):
            """
            Called when the spider starts
            :param spider:
            :return:
            """
            print('000000')
            self.conn = open(self.conn_str, 'a')

        def close_spider(self, spider):
            """
            Called when the spider closes
            :param spider:
            :return:
            """
            print('1111111')
            self.conn.close()

        def process_item(self, item, spider):
            # Called every time an item needs to be persisted
            # if spider.name == "chouti":
            # ChoutiItem defines 'title' and 'href'; there is no 'item' field
            tpl = "%s\n%s\n\n" % (item['title'], item['href'])
            self.conn.write(tpl)
            return item

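2.2) With several pipelines, deciding whether the next one should run

Register the pipelines in settings.py. The numbers decide the execution order: lower values run first

    ITEM_PIPELINES = {
        'day96.pipelines.Day96Pipeline': 300,
        'day96.pipelines.Day97Pipeline': 200,
    }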
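Scrapy runs pipelines in ascending order of their number. The ordering for the dictionary above can be reproduced with a plain sort; this only illustrates the rule and is not how Scrapy itself is implemented.

```python
ITEM_PIPELINES = {
    'day96.pipelines.Day96Pipeline': 300,
    'day96.pipelines.Day97Pipeline': 200,
}

# Sort the class paths by their number, lowest first
execution_order = [
    path for path, number in sorted(ITEM_PIPELINES.items(), key=lambda pair: pair[1])
]
print(execution_order)
```

Here `Day97Pipeline` (200) receives each item before `Day96Pipeline` (300), even though it is listed second.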

from scrapy.exceptions import DropItem

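Whether the item reaches the next pipeline depends on what process_item does: returning the item passes it on, raising DropItem stops it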

    class ProjectPipeline(object):

        def __init__(self, conn_str):
            # Initialize the pipeline's data
            self.conn_str = conn_str

        @classmethod
        def from_crawler(cls, crawler):
            """
            Called once at start-up to create the pipeline object; reads settings.py
            :param crawler:
            :return:
            """
            conn_str = crawler.settings.get('DB')
            return cls(conn_str)

        def open_spider(self, spider):
            """
            Called when the spider starts
            :param spider:
            :return:
            """
            print('000000')
            self.conn = open(self.conn_str, 'a')

        def close_spider(self, spider):
            """
            Called when the spider closes
            :param spider:
            :return:
            """
            print('1111111')
            self.conn.close()

        def process_item(self, item, spider):
            # Called every time an item needs to be persisted
            # if spider.name == "chouti":
            # ChoutiItem defines 'title' and 'href'; there is no 'item' field
            tpl = "%s\n%s\n\n" % (item['title'], item['href'])
            self.conn.write(tpl)
            # Hand the item to the next pipeline
            return item
            # Or drop the item so that no later pipeline sees it
            # raise DropItem()


    class ProjectPipeline2(object):
        pass
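The return-or-raise contract can be tried out without Scrapy. In the sketch below `DropItem` is a local stand-in for `scrapy.exceptions.DropItem`, and `RequireTitle`, `Collect` and `run_chain` are hypothetical names; `run_chain` only imitates the way an item moves from one pipeline to the next.

```python
class DropItem(Exception):
    """Local stand-in for scrapy.exceptions.DropItem."""


class RequireTitle:
    """First pipeline: drop items that have no title."""

    def process_item(self, item, spider):
        if not item.get("title"):
            raise DropItem("missing title")
        return item


class Collect:
    """Second pipeline: remember every item that reaches it."""

    def __init__(self):
        self.items = []

    def process_item(self, item, spider):
        self.items.append(item)
        return item


def run_chain(pipelines, item, spider=None):
    """Pass the item through each pipeline in turn; stop as soon as one drops it."""
    try:
        for pipeline in pipelines:
            item = pipeline.process_item(item, spider)
    except DropItem:
        return None
    return item


collector = Collect()
chain = [RequireTitle(), collector]
kept = run_chain(chain, {"title": "news", "href": "/link/1"})
dropped = run_chain(chain, {"title": "", "href": "/link/2"})
print(kept, dropped, collector.items)
```

Only the item with a title reaches the second pipeline; the other one is stopped at the first stage.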

2.3) Pipeline summary

    # More on pipelines
    from scrapy.exceptions import DropItem


    class Day96Pipeline(object):

        def __init__(self, conn_str):
            self.conn_str = conn_str

        @classmethod
        def from_crawler(cls, crawler):
            """
            Called once at start-up to create the pipeline object
            :param crawler:
            :return:
            """
            conn_str = crawler.settings.get('DB')
            return cls(conn_str)

        def open_spider(self, spider):
            """
            Called when the spider starts
            :param spider:
            :return:
            """
            self.conn = open(self.conn_str, 'a')

        def close_spider(self, spider):
            """
            Called when the spider closes
            :param spider:
            :return:
            """
            self.conn.close()

        def process_item(self, item, spider):
            """
            Called every time an item needs to be persisted
            :param item:
            :param spider:
            :return:
            """
            # if spider.name == 'chouti'
            tpl = "%s\n%s\n\n" % (item['title'], item['href'])
            self.conn.write(tpl)
            # Hand the item to the next pipeline
            return item
            # Or drop the item so that no later pipeline sees it
            # raise DropItem()

    """
    Four methods: from_crawler, open_spider, close_spider, process_item
    crawler.settings.get('NAME'): the name of a setting in settings.py; it must be upper case
    In process_item, raising DropItem stops processing of the item; otherwise it goes on to the later pipelines
    Check spider.name to treat spiders differently
    """

3.1) Log in to Chouti with cookies and check whether the login succeeded

from scrapy.http.cookies import CookieJar # import the cookie helper

    # -*- coding: utf-8 -*-
    import io
    import sys

    import scrapy
    from scrapy.http import Request
    from scrapy.http.cookies import CookieJar
    from scrapy.selector import Selector

    from ..items import ChoutiItem

    # Re-wrap stdout so that Chinese text prints correctly on a GBK console
    sys.stdout = io.TextIOWrapper(sys.stdout.buffer, encoding='gb18030')


    class ChoutiSpider(scrapy.Spider):
        name = "chouti"
        allowed_domains = ["chouti.com", ]
        start_urls = ['http://dig.chouti.com/']

        def parse(self, response):
            # Collect the cookies set by the first response
            cookie_obj = CookieJar()
            cookie_obj.extract_cookies(response, response.request)
            # print(cookie_obj._cookies)  # inspect the cookies (_cookies is a private attribute)

            # Send user name and password together with the cookies
            yield Request(
                url="http://dig.chouti.com/login",
                method='POST',
                body="phone=8615331254089&password=woshiniba&oneMonth=1",
                headers={'Content-Type': "application/x-www-form-urlencoded; charset=UTF-8"},
                cookies=cookie_obj._cookies,
                callback=self.check_login
            )

        def check_login(self, response):
            print(response.text)  # check whether the login succeeded
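The request body above is written by hand, which only works while the values contain no special characters. A hedged sketch of building the same kind of `application/x-www-form-urlencoded` body with the standard library follows; the credentials are placeholders. Scrapy also provides `FormRequest(url, formdata={...})`, which encodes a dict of strings in a similar way.

```python
from urllib.parse import parse_qs, urlencode

# Placeholder credentials, invented for illustration
form = {
    "phone": "8600000000000",
    "password": "p@ss word&1",  # contains characters that must be escaped
    "oneMonth": 1,
}

# urlencode escapes '@', ' ' and '&' so they cannot be mistaken for separators
body = urlencode(form)
print(body)

# Decoding the body gives the original values back (always as lists of strings)
decoded = parse_qs(body)
print(decoded)
```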

The response printed after a successful login

3.2) Upvote every item on the current (first) page

    import io
    import sys

    import scrapy
    from scrapy.http import Request
    from scrapy.http.cookies import CookieJar
    from scrapy.selector import Selector

    from ..items import ChoutiItem

    # Re-wrap stdout so that Chinese text prints correctly on a GBK console
    sys.stdout = io.TextIOWrapper(sys.stdout.buffer, encoding='gb18030')


    class ChoutiSpider(scrapy.Spider):
        name = "chouti"
        allowed_domains = ["chouti.com", ]
        start_urls = ['http://dig.chouti.com/']

        cookie_dict = None

        def parse(self, response):
            # Collect the cookies set by the first response
            cookie_obj = CookieJar()
            cookie_obj.extract_cookies(response, response.request)
            # print(cookie_obj._cookies)  # inspect the cookies (_cookies is a private attribute)
            self.cookie_dict = cookie_obj._cookies  # keep them for the vote requests

            # Send user name and password together with the cookies
            yield Request(
                url="http://dig.chouti.com/login",
                method='POST',
                body="phone=8615331254089&password=woshiniba&oneMonth=1",
                headers={'Content-Type': "application/x-www-form-urlencoded; charset=UTF-8"},
                cookies=cookie_obj._cookies,
                callback=self.check_login
            )

        def check_login(self, response):
            print(response.text)  # check whether the login succeeded
            # If it did, fetch the front page again as a logged-in user
            yield Request(url="http://dig.chouti.com/", callback=self.good)

        def good(self, response):
            # Every news item carries its id in the share-linkid attribute
            id_list = Selector(response=response).xpath('//div[@share-linkid]/@share-linkid').extract()
            for nid in id_list:
                print(nid)
                url = "http://dig.chouti.com/link/vote?linksId=%s" % nid
                yield Request(
                    url=url,
                    method="POST",
                    cookies=self.cookie_dict,
                    callback=self.show  # handles the response to the vote request
                )

        def show(self, response):
            # Check whether the vote succeeded
            print(response.text)

3.3) Upvote the items on every page

    # -*- coding: utf-8 -*-
    import io
    import sys

    import scrapy
    from scrapy.http import Request
    from scrapy.http.cookies import CookieJar
    from scrapy.selector import Selector

    from ..items import ChoutiItem

    # Re-wrap stdout so that Chinese text prints correctly on a GBK console
    sys.stdout = io.TextIOWrapper(sys.stdout.buffer, encoding='gb18030')


    class ChoutiSpider(scrapy.Spider):
        name = "chouti"
        allowed_domains = ["chouti.com", ]
        start_urls = ['http://dig.chouti.com/']

        cookie_dict = None

        def parse(self, response):
            # Collect the cookies set by the first response
            cookie_obj = CookieJar()
            cookie_obj.extract_cookies(response, response.request)
            # print(cookie_obj._cookies)  # inspect the cookies (_cookies is a private attribute)
            self.cookie_dict = cookie_obj._cookies  # keep them for the vote requests

            # Send user name and password together with the cookies
            yield Request(
                url="http://dig.chouti.com/login",
                method='POST',
                body="phone=8615331254089&password=woshiniba&oneMonth=1",
                headers={'Content-Type': "application/x-www-form-urlencoded; charset=UTF-8"},
                cookies=cookie_obj._cookies,
                callback=self.check_login
            )

        def check_login(self, response):
            print(response.text)  # check whether the login succeeded
            # If it did, fetch the front page again as a logged-in user
            yield Request(url="http://dig.chouti.com/", callback=self.good)

        def good(self, response):
            # Every news item carries its id in the share-linkid attribute
            id_list = Selector(response=response).xpath('//div[@share-linkid]/@share-linkid').extract()
            for nid in id_list:
                print(nid)
                url = "http://dig.chouti.com/link/vote?linksId=%s" % nid
                yield Request(
                    url=url,
                    method="POST",
                    cookies=self.cookie_dict,
                    callback=self.show  # handles the response to the vote request
                )

            # Find the links to all other pages
            page_urls = Selector(response=response).xpath('//div[@id="dig_lcpage"]//a/@href').extract()
            for page in page_urls:
                url = "http://dig.chouti.com%s" % page
                # good() is its own callback, so the items on every page get upvoted
                yield Request(url=url, callback=self.good)

        def show(self, response):
            # Check whether the vote succeeded
            print(response.text)

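Setting DEPTH_LIMIT in settings.py caps how many levels of pages are followed, and therefore how deep the upvoting goes

4) Scrapy extensions

from scrapy.extensions.telnet import TelnetConsole # read the source of this built-in extension as a model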

A custom extension

    from scrapy import signals


    class MyExtend:

        def __init__(self, crawler):
            self.crawler = crawler
            # Hang handlers on the hooks:
            # register an action for each chosen signal
            crawler.signals.connect(self.start, signals.engine_started)
            crawler.signals.connect(self.close, signals.spider_closed)

        @classmethod
        def from_crawler(cls, crawler):
            return cls(crawler)

        def start(self):
            print('signals.engine_started.start')

        def close(self):
            print('signals.spider_closed.close')
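The extension relies on a publish/subscribe mechanism: handlers are connected to named signals and called when the signal fires. The toy version below shows only that mechanism. `MiniSignals` is a simplified, hypothetical stand-in for `crawler.signals`, and the two string constants replace the real signal objects.

```python
from collections import defaultdict

# Hypothetical signal names standing in for signals.engine_started / signals.spider_closed
ENGINE_STARTED = "engine_started"
SPIDER_CLOSED = "spider_closed"


class MiniSignals:
    """Simplified signal manager: maps each signal to its list of handlers."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def connect(self, handler, signal):
        self._handlers[signal].append(handler)

    def send(self, signal):
        for handler in self._handlers[signal]:
            handler()


class MyExtend:
    """Same shape as the extension above, writing to a list instead of printing."""

    def __init__(self, signal_manager, log):
        self.log = log
        signal_manager.connect(self.start, ENGINE_STARTED)
        signal_manager.connect(self.close, SPIDER_CLOSED)

    def start(self):
        self.log.append("start")

    def close(self):
        self.log.append("close")


events = []
manager = MiniSignals()
MyExtend(manager, events)
manager.send(ENGINE_STARTED)   # what the engine would do on start-up
manager.send(SPIDER_CLOSED)    # what the engine would do on shutdown
print(events)
```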

Register the extension from extensions.py in settings.py

    EXTENSIONS = {
        # 'scrapy.extensions.telnet.TelnetConsole': None,
        'day96.extensions.MyExtend': 300,
    }

5) The settings file in detail

    # Browser identification sent with each request
    # USER_AGENT = 'day96 (+http://www.yourdomain.com)'
    USER_AGENT = 'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/56.0.2924.87 Safari/537.36'

    # Obey robots.txt rules
    # False: ignore robots.txt and crawl without restriction
    ROBOTSTXT_OBEY = False

    # Configure maximum concurrent requests performed by Scrapy (default: 16)
    # CONCURRENT_REQUESTS = 32   # allow up to 32 concurrent requests; the default is 16

    # DOWNLOAD_DELAY = 3   # slow the crawl down; crawling too fast may get you blocked
    # CONCURRENT_REQUESTS_PER_DOMAIN = 16   # at most 16 concurrent requests per domain
    # CONCURRENT_REQUESTS_PER_IP = 16       # at most 16 concurrent requests per IP

    # Disable cookies (enabled by default)
    # COOKIES_ENABLED = True   # whether cookies are handled; True by default
    # COOKIES_DEBUG = True     # debug mode: log the cookies sent and received

    TELNETCONSOLE_ENABLED = True   # monitor the crawler with: telnet 127.0.0.1 6023

    # Request headers
    # Override the default request headers:
    # DEFAULT_REQUEST_HEADERS = {
    #     'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
    #     'Accept-Language': 'en',
    # }

    # Automatic throttling
    # AUTOTHROTTLE_ENABLED = True
    # The initial download delay
    # AUTOTHROTTLE_START_DELAY = 5   # start with a delay of 5 seconds
    # The maximum download delay to be set in case of high latencies
    # AUTOTHROTTLE_MAX_DELAY = 60    # never wait longer than 60 seconds
    # The average number of requests Scrapy should be sending in parallel to
    # each remote server
    # AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0
    # Enable showing throttling stats for every response received:
    # AUTOTHROTTLE_DEBUG = False

    DEPTH_LIMIT = 4
    # DEPTH_PRIORITY is an integer (default 0) that adjusts request priority by depth:
    # positive values favor shallow requests (breadth-first),
    # negative values favor deep requests (depth-first)
    DEPTH_PRIORITY = 0   # 1
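As far as the Scrapy documentation describes it, switching a crawl to breadth-first order takes more than `DEPTH_PRIORITY` alone: the scheduler queues are changed from last-in-first-out to first-in-first-out as well. A settings fragment along those lines is sketched below; check the class paths against the installed Scrapy version before relying on them.

```python
# settings.py fragment: crawl in breadth-first order

# Positive value: requests found deeper in the site get a lower priority
DEPTH_PRIORITY = 1

# First-in-first-out queues, so that older (shallower) requests are processed first
SCHEDULER_DISK_QUEUE = "scrapy.squeues.PickleFifoDiskQueue"
SCHEDULER_MEMORY_QUEUE = "scrapy.squeues.FifoMemoryQueue"
```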