统计学习方法第二章:感知机(perceptron)算法及python实现

统计学习方法第三章:k近邻法(k-NN),kd树及python实现

统计学习方法第四章:朴素贝叶斯法(naive Bayes),贝叶斯估计及python实现

统计学习方法第五章:决策树(decision tree),CART算法,剪枝及python实现

统计学习方法第五章:决策树(decision tree),ID3算法,C4.5算法及python实现

完整代码:

https://github.com/xjwhhh/LearningML/tree/master/StatisticalLearningMethod

欢迎follow和star

决策树(decision tree)是一种基本的分类与回归方法。决策树模型呈树状结构,在分类问题中,表示基于特征对实例进行分类的过程。

它可以认为是if-then规则的集合,也可以认为是定义在特征空间与类空间上的条件概率分布。

其主要优点是模型具有可读性,分类速度快。

学习时,利用训练数据,根据损失函数最小化的原则建立决策树模型。预测时,对新的数据,利用决策树模型进行分类。

决策树学习通常包括3个步骤:特征选择,决策树的生成和决策树的修剪

主要的决策树算法包括ID3,C4.5,CART。

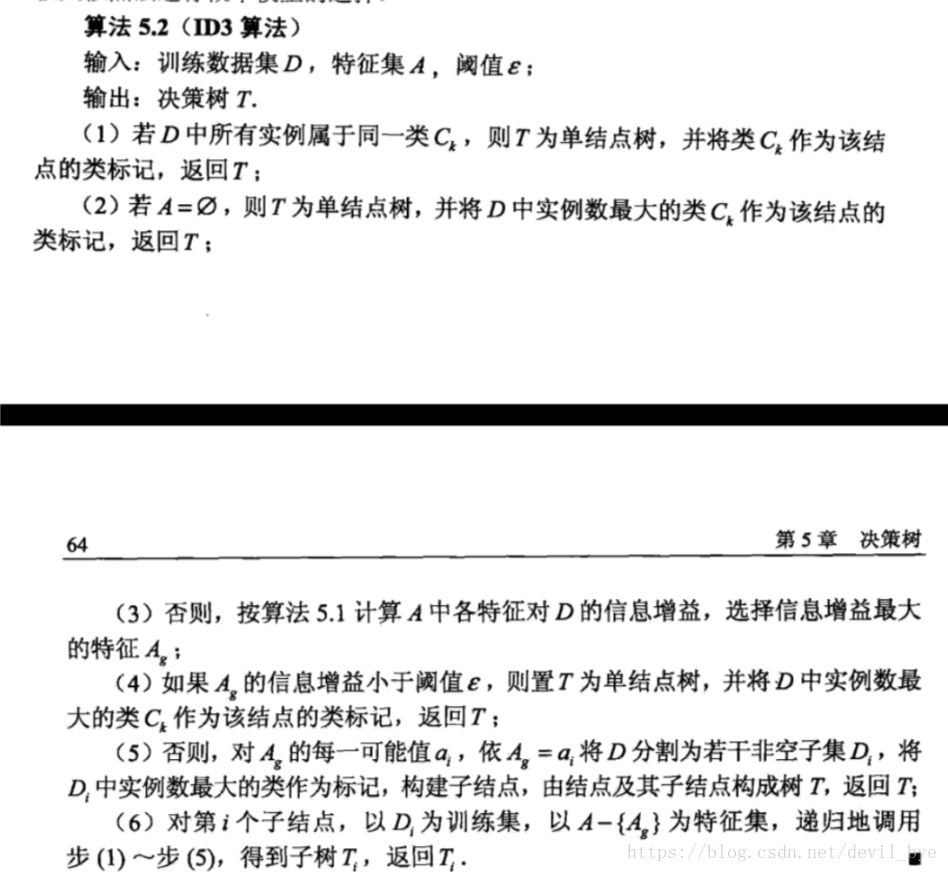

ID3算法的核心是在决策树各个结点上应用信息增益准则选择特征,递归的构建决策树。具体方法是:从根结点开始,对结点计算所有特征的信息增益,选择信息增益最大的特征作为结点的特征,由该特征的不同取值建立子结点;再对子结点递归的调用以上方法,构建决策树直到所有特征的信息增益很小或没有特征可以选择为止。最后得到一个决策树。ID3相当于用极大似然法进行概率模型的选择。

ID3算法

代码如下:

import cv2

import time

import logging

import numpy as np

import pandas as pd

import random

from sklearn.model_selection import train_test_split

from sklearn.metrics import accuracy_score

total_class = 10

def log(func):

def wrapper(*args, **kwargs):

start_time = time.time()

logging.debug('start %s()' % func.__name__)

ret = func(*args, **kwargs)

end_time = time.time()

logging.debug('end %s(), cost %s seconds' % (func.__name__, end_time - start_time))

return ret

return wrapper

# 二值化

def binaryzation(img):

cv_img = img.astype(np.uint8)

cv2.threshold(cv_img, 50, 1, cv2.THRESH_BINARY, cv_img)

return cv_img

@log

def binaryzation_features(trainset):

features = []

for img in trainset:

img = np.reshape(img, (10, 10))

cv_img = img.astype(np.uint8)

img_b = binaryzation(cv_img)

# hog_feature = np.transpose(hog_feature)

features.append(img_b)

features = np.array(features)

features = np.reshape(features, (-1, 100))

return features

class Tree(object):

def __init__(self, node_type, Class=None, feature=None):

self.node_type = node_type

self.dict = {}

self.Class = Class

self.feature = feature

def add_tree(self, val, tree):

self.dict[val] = tree

def predict(self, features):

if self.node_type == 'leaf':

return self.Class

if (features[self.feature] in self.dict.keys()):

tree = self.dict[features[self.feature]]

else:

if (self.Class is None):

return random.randint(0, 1)

else:

return self.Class

return tree.predict(features)

def calc_ent(x):

"""

calculate empirical entropy of x

"""

x_value_list = set([x[i] for i in range(x.shape[0])])

ent = 0.0

for x_value in x_value_list:

p = float(x[x == x_value].shape[0]) / x.shape[0]

logp = np.log2(p)

ent -= p * logp

return ent

def calc_condition_ent(train_feature, train_label):

"""

calculate empirical entropy H(y|x)

"""

# calc ent(y|x)

ent = 0

train_feature_set = set(train_feature)

# print("train_feature_set", train_feature_set)

for train_feature_value in train_feature_set:

Di = train_feature[train_feature == train_feature_value]

label_i = train_label[train_feature == train_feature_value]

# print("Di", Di)

train_label_set = set(train_label)

temp = 0

# print("train_label_set", train_label_set)

for train_label_value in train_label_set:

Dik = Di[label_i == train_label_value]

# print(Dik)

if (len(Dik) != 0):

p = float(len(Dik)) / len(Di)

logp = np.log2(p)

temp -= p * logp

ent += float(len(Di)) / len(train_feature) * temp

return ent

def recurse_train(train_set, train_label, features, epsilon):

global total_class

LEAF = 'leaf'

INTERNAL = 'internal'

# 步骤1——如果train_set中的所有实例都属于同一类Ck

label_set = set(train_label)

# print(label_set)

if len(label_set) == 1:

return Tree(LEAF, Class=label_set.pop())

# 步骤2——如果features为空

class_count0 = 0

class_count1 = 0

for i in range(len(train_label)):

if (train_label[i] == 1):

class_count1 += 1

else:

class_count0 += 1

if (class_count0 >= class_count1):

max_class = 0

else:

max_class = 0

if features is None:

return Tree(LEAF, Class=max_class)

if len(features) == 0:

return Tree(LEAF, Class=max_class)

# 步骤3——计算信息增益

max_feature = 0

max_gda = 0

D = train_label

HD = calc_ent(D)

for feature in features:

A = np.array(train_set[:, feature].flat)

gda = HD - calc_condition_ent(A, D)

if gda > max_gda:

max_gda, max_feature = gda, feature

# 步骤4——小于阈值

if max_gda < epsilon:

return Tree(LEAF, Class=max_class)

# 步骤5——构建非空子集

sub_features = features.remove(max_feature)

tree = Tree(INTERNAL, feature=max_feature)

feature_col = np.array(train_set[:, max_feature].flat)

feature_value_list = set([feature_col[i] for i in range(feature_col.shape[0])])

for feature_value in feature_value_list:

index = []

for i in range(len(train_label)):

if train_set[i][max_feature] == feature_value:

index.append(i)

sub_train_set = train_set[index]

sub_train_label = train_label[index]

sub_tree = recurse_train(sub_train_set, sub_train_label, sub_features, epsilon)

tree.add_tree(feature_value, sub_tree)

return tree

@log

def train(train_set, train_label, features, epsilon):

# print(features)

return recurse_train(train_set, train_label, features, epsilon)

@log

def predict(test_set, tree):

result = []

for features in test_set:

tmp_predict = tree.predict(features)

result.append(tmp_predict)

return np.array(result)

if __name__ == '__main__':

logger = logging.getLogger()

logger.setLevel(logging.DEBUG)

raw_data = pd.read_csv('../data/train_binary2.csv', header=0)

data = raw_data.values

images = data[0:, 1:]

labels = data[:, 0]

# 图片二值化

features = binaryzation_features(images)

# 选取 2/3 数据作为训练集, 1/3 数据作为测试集

train_features, test_features, train_labels, test_labels = train_test_split(features, labels, test_size=0.33,randomstate=1)

# print(train_features.shape)

tree = train(train_features, train_labels, [i for i in range(99)], 0.1)

test_predict = predict(test_features, tree)

# print(test_predict)

score = accuracy_score(test_labels, test_predict)

print("The accuracy score is ", score)

实现中最主要的还是对信息增益的计算,特征选择后结点的分类以及递归调用

但我这个正确率其实挺低的,自己也百思不得其解,我觉得关键算法应该是没有问题,如果有大神看出问题了希望指正!

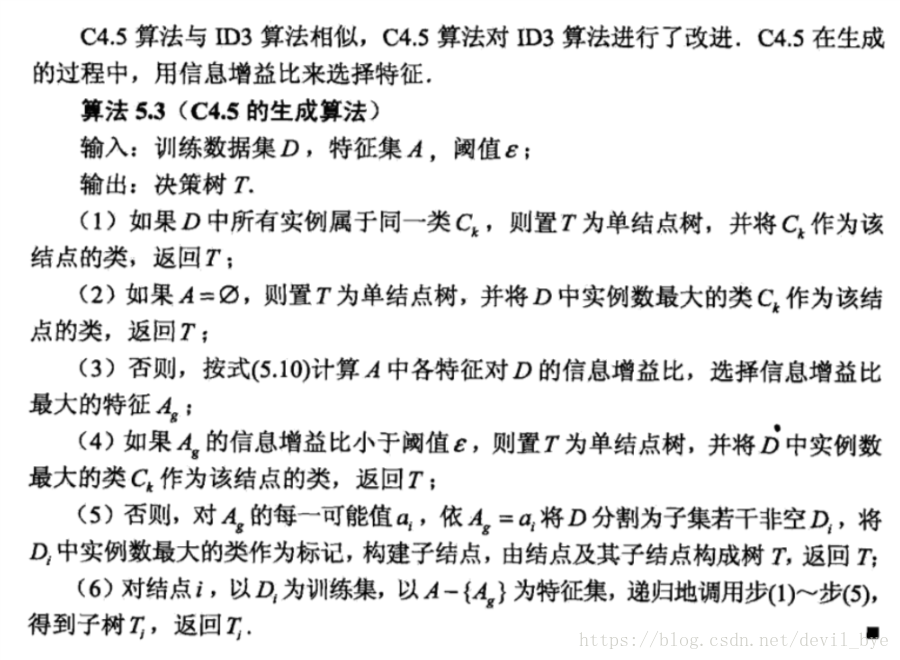

C4.5算法

C4.5算法其实与ID3类似,主要的不同就是C4.5使用信息增益比来选择特征

代码如下:

def recurse_train(train_set, train_label, features, epsilon):

LEAF = 'leaf'

INTERNAL = 'internal'

# 步骤1——如果train_set中的所有实例都属于同一类Ck

label_set = set(train_label)

# print(label_set)

if len(label_set) == 1:

return Tree(LEAF, Class=label_set.pop())

# 步骤2——如果features为空

class_count0 = 0

class_count1 = 0

for i in range(len(train_label)):

if (train_label[i] == 1):

class_count1 += 1

else:

class_count0 += 1

if (class_count0 >= class_count1):

max_class = 0

else:

max_class = 0

if features is None:

return Tree(LEAF, Class=max_class)

if len(features) == 0:

return Tree(LEAF, Class=max_class)

# 步骤3——计算信息增益

max_feature = 0

max_grda = 0

D = train_label

HD = calc_ent(D)

for feature in features:

A = np.array(train_set[:, feature].flat)

gda = HD - calc_condition_ent(A, D)

had = calc_ent(A)

grda = gda / had

if grda > max_grda:

max_grda, max_feature = grda, feature

# 步骤4——小于阈值

if max_grda < epsilon:

return Tree(LEAF, Class=max_class)

# 步骤5——构建非空子集

sub_features = features.remove(max_feature)

tree = Tree(INTERNAL, feature=max_feature)

feature_col = np.array(train_set[:, max_feature].flat)

feature_value_list = set([feature_col[i] for i in range(feature_col.shape[0])])

for feature_value in feature_value_list:

index = []

for i in range(len(train_label)):

if train_set[i][max_feature] == feature_value:

index.append(i)

sub_train_set = train_set[index]

sub_train_label = train_label[index]

sub_tree = recurse_train(sub_train_set, sub_train_label, sub_features, epsilon)

tree.add_tree(feature_value, sub_tree)

return tree

与ID3代码的不同只有在特征选择时的标准不同

在写代码的过程中参考了别人的实现,水平有限,如有错误,希望指出