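1. name_scope(name) returns a context manager. Inside a with name_scope(...): block, every op that is created is named under the corresponding scope.

1) If name does not end with "/", a new scope is created and name is appended to the prefix of the enclosing scopes, as with "nested/c" in the tf.Graph() example below. If the name has already been used at that level, it is made unique, which is how the second "inner" scope becomes "inner_1".

2) The value captured by with g.name_scope(...) as scope: ends with "/". Passing it back as g.name_scope(scope) treats it as an absolute scope: the code re-enters that scope directly instead of nesting under the current one, as with "nested/d".

3) None or an empty string resets the current scope to the top level, as with "e" at the end of the example.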
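To make the three rules concrete without needing TensorFlow installed, here is a minimal pure-Python sketch of the naming logic. ToyGraph and its methods are hypothetical stand-ins written for these notes, not TensorFlow code, and a real graph handles more details than this.

```python
from contextlib import contextmanager


class ToyGraph:
    """Toy stand-in for a graph's naming logic (hypothetical, not TensorFlow)."""

    def __init__(self):
        self._stack = ""   # current scope prefix, without the trailing "/"
        self._used = {}    # full name -> number of times it was requested

    def unique_name(self, name):
        # Prefix with the current scope, then de-duplicate with _1, _2, ...
        full = f"{self._stack}/{name}" if self._stack else name
        count = self._used.get(full, 0)
        self._used[full] = count + 1
        if count == 0:
            return full
        candidate = f"{full}_{count}"
        while candidate in self._used:
            count += 1
            candidate = f"{full}_{count}"
        self._used[candidate] = 1
        return candidate

    @contextmanager
    def name_scope(self, name):
        saved = self._stack
        if not name:                  # rule 3: None or "" resets to the top level
            self._stack = ""
        elif name.endswith("/"):      # rule 2: absolute scope, used verbatim
            self._stack = name[:-1]
        else:                         # rule 1: new child scope, made unique
            self._stack = self.unique_name(name)
        try:
            yield (self._stack + "/") if self._stack else ""
        finally:
            self._stack = saved       # leaving the block restores the old scope


g = ToyGraph()
names = [g.unique_name("c"), g.unique_name("c")]
with g.name_scope("nested") as scope:
    names.append(g.unique_name("c"))
    with g.name_scope("inner"):
        names.append(g.unique_name("c"))
    with g.name_scope("inner"):
        names.append(g.unique_name("c"))
        with g.name_scope(scope):
            names.append(g.unique_name("d"))
            with g.name_scope(None):
                names.append(g.unique_name("e"))
print(names)
```

The nesting mirrors the tf.Graph() example, so the printed names can be compared against its assertions one by one.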

    import tensorflow as tf

    with tf.Graph().as_default() as g:
        c = tf.constant(5.0, name="c")
        assert c.op.name == "c"
        c_1 = tf.constant(6.0, name="c")
        assert c_1.op.name == "c_1"

        # Creates a scope called "nested"
        with g.name_scope("nested") as scope:
            nested_c = tf.constant(10.0, name="c")
            assert nested_c.op.name == "nested/c"

            # Creates a nested scope called "inner".
            with g.name_scope("inner"):
                nested_inner_c = tf.constant(20.0, name="c")
                assert nested_inner_c.op.name == "nested/inner/c"

            # Create a nested scope called "inner_1".
            with g.name_scope("inner"):
                nested_inner_1_c = tf.constant(30.0, name="c")
                assert nested_inner_1_c.op.name == "nested/inner_1/c"

                # Treats `scope` as an absolute name scope, and
                # switches to the "nested/" scope.
                with g.name_scope(scope):
                    nested_d = tf.constant(40.0, name="d")
                    assert nested_d.op.name == "nested/d"

                    with g.name_scope(""):
                        e = tf.constant(50.0, name="e")
                        assert e.op.name == "e"

2. slim.model_variable, slim.variable

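In plain TensorFlow, creating a variable requires an initializer, and placing it on a particular device (a GPU or the CPU) requires an explicit block such as with tf.device('/cpu:0'):. slim.variable does all of this in a single call: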

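    import tensorflow as tf
    import tensorflow.contrib.slim as slim  # tf.contrib exists only in TensorFlow 1.x

    weights = slim.variable('weights',
                            shape=[10, 10, 3, 3],
                            initializer=tf.truncated_normal_initializer(stddev=0.1),
                            regularizer=slim.l2_regularizer(0.05),
                            device='/CPU:0')

In addition, native TensorFlow distinguishes regular variables from local (transient) variables: regular variables can be saved to disk, local ones cannot. TF-Slim adds the notion of model variables, which represent the parameters of a model: they are trained or fine-tuned during learning and loaded from a checkpoint at evaluation time. Variables created by slim.model_variable, or by layers such as slim.fully_connected and slim.conv2d, are model variables. What if a variable was created some other way but should still be treated as a model variable?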

    # Letting TF-Slim know about the additional variable.
    slim.add_model_variable(my_model_variable)

3. tf.variable_scope, tf.get_variable_scope

variable_scope is a context manager for defining ops that create variables:

    with tf.variable_scope("foo"):
        with tf.variable_scope("bar"):
            v = tf.get_variable("v", [1])
            assert v.name == "foo/bar/v:0"

Variable reuse (sharing variables). Each snippet below assumes a fresh graph, since they all create foo/v:

    def foo():
        with tf.variable_scope("foo", reuse=tf.AUTO_REUSE):
            v = tf.get_variable("v", [1])
        return v

    v1 = foo()  # Creates v.
    v2 = foo()  # Gets the same, existing v.
    assert v1 == v2

Alternatively, call scope.reuse_variables() inside the scope:

    with tf.variable_scope("foo") as scope:
        v = tf.get_variable("v", [1])
        scope.reuse_variables()
        v1 = tf.get_variable("v", [1])
    assert v1 == v

Without reuse=True, requesting the same name a second time raises an error, because the same variable cannot be created twice. With reuse=True, both calls share one variable:

    with tf.variable_scope("foo"):
        v = tf.get_variable("v", [1])
    with tf.variable_scope("foo", reuse=True):
        v1 = tf.get_variable("v", [1])
    assert v1 == v

Without sharing:

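    with tf.variable_scope("foo"):
        v = tf.get_variable("v", [1])       # foo/v
        with tf.variable_scope("foo"):
            v1 = tf.get_variable("v", [1])  # foo/foo/v

This works: because the second scope is nested inside the first, the two variables have different full names and do not clash.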

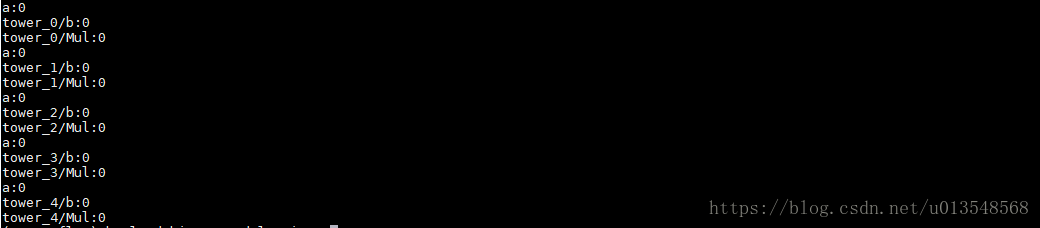
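The reuse rules in this section can be summarized with a small pure-Python sketch. ToyVarStore, its methods, and the AUTO_REUSE constant are hypothetical stand-ins written for these notes, not TensorFlow code. The sketch only models the name lookup and the reuse flag, assuming reuse is one of None, True, or AUTO_REUSE.

```python
from contextlib import contextmanager

AUTO_REUSE = "auto"  # hypothetical stand-in for tf.AUTO_REUSE


class ToyVarStore:
    """Toy model of get_variable sharing (hypothetical, not TensorFlow)."""

    def __init__(self):
        self.vars = {}      # full name -> variable object
        self.prefix = ""    # current variable scope, e.g. "foo/bar"
        self.reuse = None   # None, True, or AUTO_REUSE

    @contextmanager
    def variable_scope(self, name, reuse=None):
        saved = (self.prefix, self.reuse)
        self.prefix = f"{self.prefix}/{name}" if self.prefix else name
        # The flag is inherited by nested scopes unless a new value is given.
        self.reuse = reuse if reuse is not None else self.reuse
        try:
            yield self
        finally:
            self.prefix, self.reuse = saved

    def reuse_variables(self):
        self.reuse = True

    def get_variable(self, name):
        full = f"{self.prefix}/{name}" if self.prefix else name
        exists = full in self.vars
        if self.reuse is True and not exists:
            raise ValueError(f"{full} does not exist, nothing to reuse")
        if self.reuse is None and exists:
            raise ValueError(f"{full} already exists, reuse was not requested")
        if not exists:
            self.vars[full] = object()
        return self.vars[full]


store = ToyVarStore()
with store.variable_scope("foo"):
    v = store.get_variable("v")              # creates foo/v
    with store.variable_scope("foo"):
        v_nested = store.get_variable("v")   # creates foo/foo/v, no clash
with store.variable_scope("foo", reuse=True):
    v1 = store.get_variable("v")             # returns the existing foo/v
with store.variable_scope("foo") as scope:
    scope.reuse_variables()
    v2 = store.get_variable("v")             # also the existing foo/v
try:
    with store.variable_scope("foo"):
        store.get_variable("v")              # second creation without reuse
    clash = False
except ValueError:
    clash = True
print(v1 is v, v2 is v, v_nested is v, clash)
```

Keying the store on the full scoped name is the design choice that makes the nested foo/foo/v case work while the repeated foo/v case fails.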

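As far as sharing is concerned, name_scope has no effect: variables obtained through tf.get_variable are shared, while variables created any other way (for example with tf.Variable) are not.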

    with tf.variable_scope(tf.get_variable_scope()):
        for ii in range(5):
            with tf.name_scope('tower_%d' % ii) as name_scope:
                a = tf.get_variable('a', shape=[2])
                b = tf.Variable(1, name='b')
                c = tf.multiply(a, [1])
                print(a.name)
                print(b.name)
                print(c.name)
                # From the second tower on, get_variable returns the existing 'a'.
                tf.get_variable_scope().reuse_variables()

4、

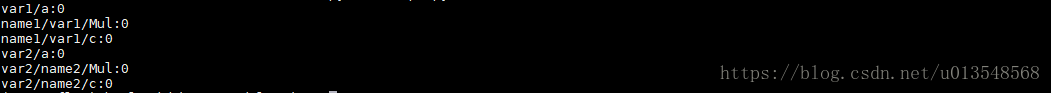

tf.name_scope和tf.variable_scope嵌套使用

    import tensorflow as tf

    with tf.name_scope("name1"):
        with tf.variable_scope("var1"):
            a = tf.get_variable("a", shape=[2])
            b = tf.multiply(a, [1])
            c = tf.Variable(2, name="c")
            print(a.name)
            print(b.name)
            print(c.name)

    with tf.variable_scope("var2"):
        with tf.name_scope("name2"):
            a = tf.get_variable("a", shape=[2])
            b = tf.multiply(a, [1])
            c = tf.Variable(1, name="c")
            print(a.name)
            print(b.name)
            print(c.name)

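1) Both scopes take effect whichever one is the outer one; the nesting order only determines the order of the prefixes.

2) For tf.Variable, both name_scope and variable_scope affect its name.

3) For tf.get_variable(), name_scope has no effect; only variable_scope appears in its name.

4) For ordinary ops such as multiply and add, both name_scope and variable_scope affect the name.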
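The four conclusions can be restated as a small pure-Python sketch of how the two kinds of scope build a name. ToyNamer and its methods are hypothetical stand-ins written for these notes, not TensorFlow code, and the sketch leaves out name de-duplication. It assumes that entering a variable scope also opens a name scope of the same name, which is what conclusions 2) and 4) describe.

```python
from contextlib import contextmanager


class ToyNamer:
    """Toy model of how both scope kinds build names (hypothetical)."""

    def __init__(self):
        self.name_stack = []   # names from name_scope AND variable_scope
        self.var_stack = []    # names from variable_scope only

    @contextmanager
    def name_scope(self, name):
        self.name_stack.append(name)
        try:
            yield
        finally:
            self.name_stack.pop()

    @contextmanager
    def variable_scope(self, name):
        # Assumption: a variable scope also opens a name scope of the same name.
        self.var_stack.append(name)
        self.name_stack.append(name)
        try:
            yield
        finally:
            self.name_stack.pop()
            self.var_stack.pop()

    def get_variable(self, name):
        # Only variable scopes contribute (conclusion 3).
        return "/".join(self.var_stack + [name]) + ":0"

    def variable(self, name):
        # Plays the role of tf.Variable: both scope kinds contribute (conclusion 2).
        return "/".join(self.name_stack + [name]) + ":0"

    def op(self, name):
        # Plays the role of an op such as multiply (conclusion 4).
        return "/".join(self.name_stack + [name]) + ":0"


t = ToyNamer()
with t.name_scope("name1"), t.variable_scope("var1"):
    first = (t.get_variable("a"), t.op("Mul"), t.variable("c"))
with t.variable_scope("var2"), t.name_scope("name2"):
    second = (t.get_variable("a"), t.op("Mul"), t.variable("c"))
print(first)
print(second)
```

To my understanding these are also the names the TensorFlow 1.x snippet above prints when run on its own in a fresh graph, but the sketch is only an illustration of the rules, not a substitute for running it.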