Contents

Babysitting the learning process

Part 1

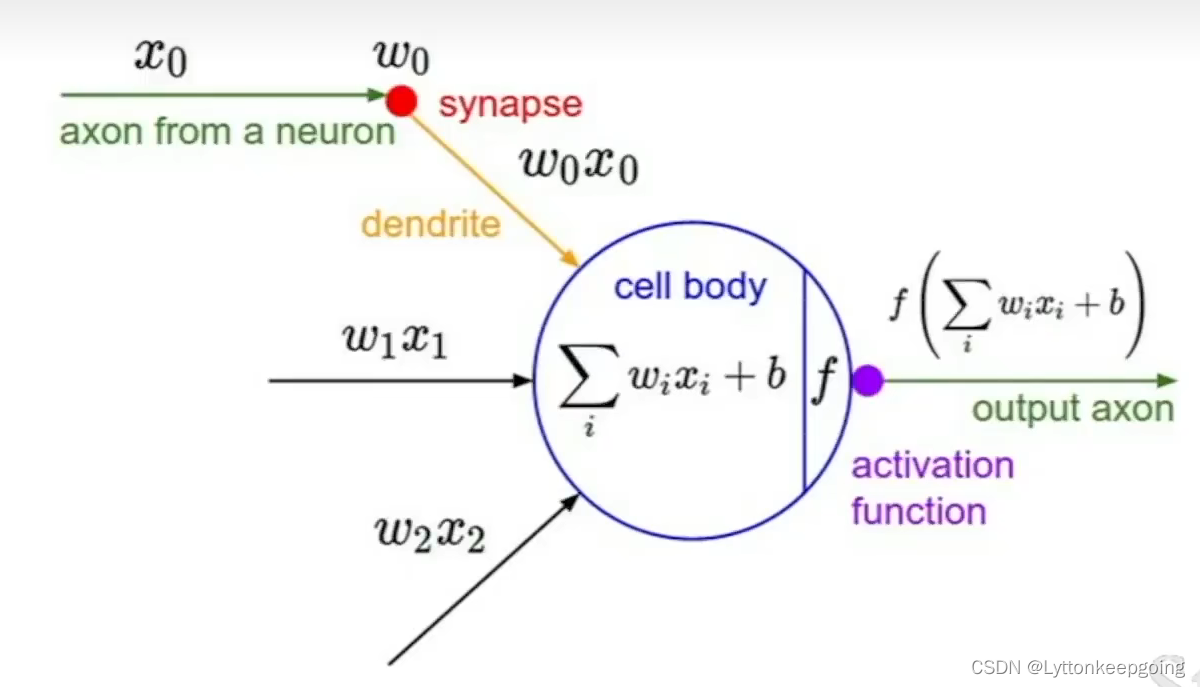

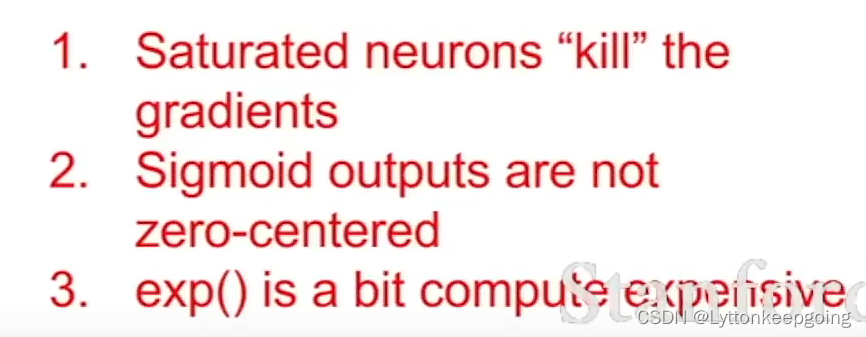

Activation Functions

In practice

Data Preprocessing

About PCA:

PCA (principal component analysis) - Jianshu (jianshu.com)

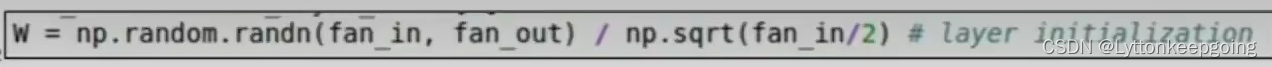
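A minimal NumPy sketch of the usual preprocessing steps (zero-centering, normalization, PCA, whitening) may help here. The data matrix `X` is synthetic, and every variable name and the `1e-5` smoothing constant are assumptions made for illustration, not something taken from the lecture.

```python
import numpy as np

rng = np.random.default_rng(0)
# Hypothetical data: 200 samples, 5 correlated features, shifted away from zero.
X = rng.standard_normal((200, 5)) @ rng.standard_normal((5, 5)) + 10.0

# Zero-center each feature, then scale each feature to unit standard deviation.
X_centered = X - X.mean(axis=0)
X_normalized = X_centered / X_centered.std(axis=0)

# PCA: eigenvectors of the covariance matrix, obtained here via SVD.
cov = X_centered.T @ X_centered / X_centered.shape[0]
U, S, _ = np.linalg.svd(cov)

# Rotate the data into the eigenbasis so the features become decorrelated.
X_decorrelated = X_centered @ U

# Keep only the 2 directions with the largest variance (dimensionality reduction).
X_reduced = X_decorrelated[:, :2]

# Whitening: divide each dimension by the square root of its eigenvalue.
X_whitened = X_decorrelated / np.sqrt(S + 1e-5)
```

After the rotation, the covariance matrix of `X_decorrelated` is diagonal, with the eigenvalues `S` on the diagonal.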

Weight Initialization

Four ways to initialize weights:

Weight initialization: Gaussian, Xavier, MSRA, and He initialization - caicaiatnbu's blog, CSDN

A good rule of thumb is to start with Xavier initialization, and then consider the other methods listed above.
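The rule of thumb above can be sketched in NumPy as follows. This uses one common form of Xavier initialization (variance `1 / fan_in`), plus the He variant (variance `2 / fan_in`) that is often paired with ReLU. The function names `xavier_init` and `he_init` and the layer sizes are assumptions for illustration.

```python
import numpy as np

def xavier_init(fan_in, fan_out, rng):
    """Gaussian weights scaled so that Var(W) = 1 / fan_in."""
    return rng.standard_normal((fan_in, fan_out)) / np.sqrt(fan_in)

def he_init(fan_in, fan_out, rng):
    """Gaussian weights scaled so that Var(W) = 2 / fan_in (often used with ReLU)."""
    return rng.standard_normal((fan_in, fan_out)) * np.sqrt(2.0 / fan_in)

rng = np.random.default_rng(0)
x = rng.standard_normal((1000, 500))   # unit-variance inputs
W = xavier_init(500, 500, rng)
h = x @ W                              # linear layer, no nonlinearity

# With Xavier scaling the output spread stays close to the input spread.
print(x.std(), h.std())

W_he = he_init(500, 500, rng)
print(W_he.std(), np.sqrt(2.0 / 500))
```

The point of the scaling is that activations neither shrink toward zero nor blow up as they pass through many layers.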

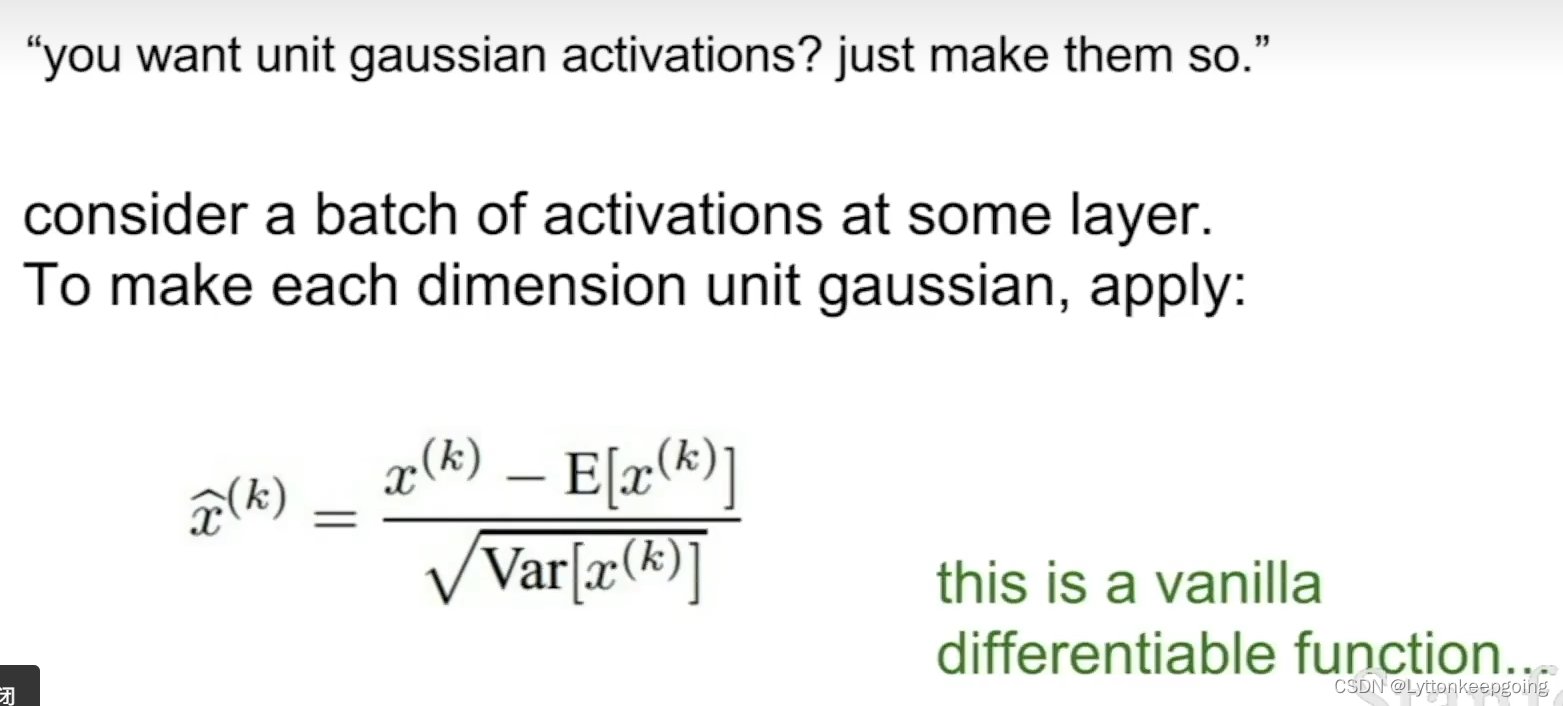

Batch Normalization***

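At test time the mean and variance are not computed from the batch. We estimate them at training time (for example, with running averages) and reuse those fixed values.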
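A minimal sketch of how the statistics might be estimated at training time and reused at test time. The class name `BatchNorm1D`, the `momentum` and `eps` values, and the synthetic batches are all assumptions for illustration, and only the forward pass is shown.

```python
import numpy as np

class BatchNorm1D:
    """Forward pass of batch normalization for inputs of shape (N, D)."""

    def __init__(self, num_features, momentum=0.9, eps=1e-5):
        self.gamma = np.ones(num_features)    # learnable scale
        self.beta = np.zeros(num_features)    # learnable shift
        self.running_mean = np.zeros(num_features)
        self.running_var = np.ones(num_features)
        self.momentum = momentum
        self.eps = eps

    def forward(self, x, train):
        if train:
            # Training: use the statistics of the current mini-batch
            # and fold them into the running averages.
            mean = x.mean(axis=0)
            var = x.var(axis=0)
            m = self.momentum
            self.running_mean = m * self.running_mean + (1 - m) * mean
            self.running_var = m * self.running_var + (1 - m) * var
        else:
            # Test: reuse the fixed estimates collected during training.
            mean, var = self.running_mean, self.running_var
        x_hat = (x - mean) / np.sqrt(var + self.eps)
        return self.gamma * x_hat + self.beta

rng = np.random.default_rng(0)
bn = BatchNorm1D(4)
for _ in range(200):
    batch = 3.0 + 2.0 * rng.standard_normal((64, 4))  # mean 3, std 2
    out_train = bn.forward(batch, train=True)

out_test = bn.forward(3.0 + 2.0 * rng.standard_normal((64, 4)), train=False)
print(bn.running_mean, bn.running_var)
```

After enough training batches, the running mean and variance approach the true statistics of the data, so test-time outputs are normalized without depending on the test batch.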

Babysitting the learning process

How do we monitor training, and how do we adjust hyperparameters as we go to get good learning results?

Code for the initial network:

import numpy as np

def init_two_layer_model(input_size, hidden_size, output_size):
    # Small random weights and zero biases for a two-layer network.
    model = {}
    model['W1'] = 0.0001 * np.random.randn(input_size, hidden_size)
    model['b1'] = np.zeros(hidden_size)
    model['W2'] = 0.0001 * np.random.randn(hidden_size, output_size)
    model['b2'] = np.zeros(output_size)
    return model

# two_layer_net, X_train and y_train are assumed to be defined elsewhere (not shown in these notes).
model = init_two_layer_model(32*32*3, 50, 10)  # input_size, hidden_size, number of classes
loss, grad = two_layer_net(X_train, model, y_train, 0.0)  # reg = 0.0 disables regularization
print(loss)
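Printing the initial loss is useful as a sanity check. With tiny weights the class scores are almost equal, so a softmax loss over 10 classes should come out close to ln(10) ≈ 2.3. The sketch below reproduces this on random data; it assumes the network uses a ReLU hidden layer and a softmax loss, and `softmax_loss` and the synthetic `X`, `y` are hypothetical stand-ins, not the notes' `two_layer_net` or the real training set.

```python
import numpy as np

def softmax_loss(scores, y):
    """Mean cross-entropy loss for integer labels y and raw class scores."""
    shifted = scores - scores.max(axis=1, keepdims=True)  # numerical stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return -log_probs[np.arange(len(y)), y].mean()

rng = np.random.default_rng(0)
num_classes = 10
X = rng.standard_normal((100, 32 * 32 * 3))   # stand-in for image data
y = rng.integers(0, num_classes, size=100)    # random labels

# Same initialization scale as in the notes: very small weights, zero biases.
W1 = 0.0001 * rng.standard_normal((32 * 32 * 3, 50))
b1 = np.zeros(50)
W2 = 0.0001 * rng.standard_normal((50, num_classes))
b2 = np.zeros(num_classes)

hidden = np.maximum(0, X @ W1 + b1)   # ReLU hidden layer
scores = hidden @ W2 + b2
loss = softmax_loss(scores, y)

print(loss, np.log(num_classes))
```

If the printed loss is far from ln(10) with regularization disabled, something in the forward pass or the loss is probably wrong.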

Trying to train: first make sure the model can overfit a very small portion of the training data.

trainer = ClassifierTrainer()
X_tiny = X_train[:20]  # take 20 examples
y_tiny = y_train[:20]
# Train and validate on the same 20 examples to check that the model can overfit them.
best_model, stats = trainer.train(X_tiny, y_tiny, X_tiny, y_tiny, model, two_layer_net,
                                  num_epochs=200, reg=0.0, update='sgd', learning_rate_decay=1,
                                  sample_batches=False, learning_rate=1e-3, verbose=True)