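The previous post covered linear regression, which is a regression example. This chapter walks through a classification example: multiclass logistic regression, also known as softmax regression.

As the figure above shows, the main difference between a classification model and a regression model is that the output layer goes from a single node to several nodes, one per class.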
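To make the "one output node versus several" distinction concrete, here is a minimal sketch. It uses NumPy rather than the MXNet API used in this post, and the batch of 4 samples and the random weights are made up for illustration only.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.random((4, 784))             # 4 hypothetical flattened 28x28 images

# Regression: a single output node gives one number per sample
w_reg = rng.normal(size=(784, 1))
y_reg = X @ w_reg                    # shape (4, 1)

# Classification: one output node per class gives 10 scores per sample
w_cls = rng.normal(size=(784, 10))
scores = X @ w_cls                   # shape (4, 10)

print(y_reg.shape, scores.shape)
```

The only structural change is the second dimension of the weight matrix; everything else in the forward pass stays the same.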

PS: The dataset used for classification in this chapter is Fashion-MNIST (clothing items), which closely resembles the MNIST dataset.

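1. Getting the data

    import mxnet.gluon as gn
    import mxnet.autograd as ag
    import mxnet.ndarray as nd

    mnist_train = gn.data.vision.FashionMNIST(train=True)
    mnist_test = gn.data.vision.FashionMNIST(train=False)
    data, label = mnist_train[0]
    print(data.shape, label)  # inspect the sample's shape and its label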

结果:

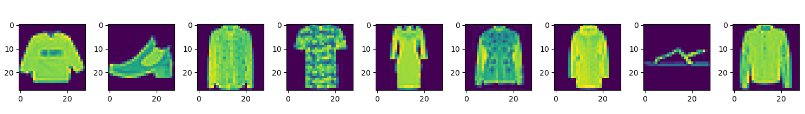

可以看到,该数据集是一张28X28的单通道图像,标签为2。打印图片看看结果:

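    import matplotlib.pyplot as plt

    def show_image(image):  # display a batch of images side by side
        n = image.shape[0]
        _, figs = plt.subplots(1, n, figsize=(15, 15))
        for i in range(n):
            figs[i].imshow(image[i].reshape((28, 28)).asnumpy())
        plt.show()

    def get_fashion_mnist_labels(labels):  # map numeric labels to clothing names
        text_labels = ['t-shirt', 'trouser', 'pullover', 'dress', 'coat',
                       'sandal', 'shirt', 'sneaker', 'bag', 'ankle boot']
        return [text_labels[int(i)] for i in labels]

    # Both helpers iterate over the first axis, so pass a batch of samples
    # rather than the single sample loaded above
    data, label = mnist_train[0:9]
    show_image(data)
    print(get_fashion_mnist_labels(label))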

Output:

2. Reading the data

    batch_size = 100
    train_data = gn.data.DataLoader(dataset=mnist_train, batch_size=batch_size, shuffle=True)
    test_data = gn.data.DataLoader(dataset=mnist_test, batch_size=batch_size, shuffle=False)
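For readers who want to see what batching with optional shuffling amounts to, the sketch below mimics the idea in plain NumPy. It is not how `DataLoader` is implemented; `iterate_minibatches` and its `seed` parameter are hypothetical names introduced here for illustration.

```python
import numpy as np

def iterate_minibatches(features, labels, batch_size, shuffle, seed=0):
    """Yield (features, labels) batches; a hypothetical stand-in for a data loader."""
    indices = np.arange(len(features))
    if shuffle:
        # Shuffle the sample order so each epoch sees batches in a random order
        np.random.default_rng(seed).shuffle(indices)
    for start in range(0, len(indices), batch_size):
        batch = indices[start:start + batch_size]
        yield features[batch], labels[batch]

features = np.arange(10).reshape(10, 1)   # 10 made-up samples
labels = np.arange(10)
batches = list(iterate_minibatches(features, labels, batch_size=4, shuffle=True))
print([len(y) for _, y in batches])       # batch sizes: [4, 4, 2]
```

Every sample appears exactly once per pass, and the last batch is smaller when the dataset size is not a multiple of `batch_size`.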

3. Defining the data normalization step

    def transform(data, label):
        # cast to float32 and scale pixel values from [0, 255] to [0, 1]
        return data.astype("float32") / 255, label.astype("float32")
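The effect of this normalization can be checked on a tiny array. The sketch below uses NumPy instead of MXNet NDArrays, and the pixel values are made up; casting before dividing makes sure the division happens in floating point.

```python
import numpy as np

pixels = np.array([[0, 128, 255]], dtype=np.uint8)   # made-up pixel values
scaled = pixels.astype("float32") / 255              # cast first, then scale

print(scaled.dtype, scaled.min(), scaled.max())
```

After scaling, all values lie in the range [0, 1], which keeps the inputs to the linear layer on a small, uniform scale.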

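4. Initializing the model parameters

    num_input = 28 * 28 * 1
    num_output = 10
    w = nd.random_normal(shape=(num_input, num_output))
    b = nd.random_normal(shape=(num_output,))
    params = [w, b]

As in the previous chapters, gradients must be attached to the parameters we differentiate with respect to:

    for param in params:
        param.attach_grad()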

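5. Defining the softmax normalization function

    def softmax(x):
        exp = nd.exp(x)  # element-wise exponential; exp is a matrix
        partition = exp.sum(axis=1, keepdims=True)  # per-row sum
        return exp / partition  # each row now sums to 1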
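One caveat with a direct softmax like the one above is that `exp` can overflow when the scores are large. A common remedy, shown here as a NumPy sketch rather than as part of the original code, is to subtract each row's maximum before exponentiating; `stable_softmax` is a name chosen for this example.

```python
import numpy as np

def stable_softmax(x):
    # Subtracting the row maximum leaves the result unchanged mathematically
    # but keeps every exponent <= 0, so np.exp cannot overflow
    shifted = x - x.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)

logits = np.array([[1.0, 2.0, 3.0],
                   [1000.0, 1001.0, 1002.0]])   # second row would overflow a naive exp
probs = stable_softmax(logits)
print(probs.sum(axis=1))
```

Both rows produce the same probabilities because softmax only depends on the differences between scores within a row.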

6. Defining the network

    def net(X):
        # flatten each image to a vector, apply the linear layer, then softmax
        return softmax(nd.dot(X.reshape(-1, num_input), w) + b)

7. Cross-entropy loss function

    def cross_entropy(y_pre, y_true):
        # for each row, pick the log-probability at the index of the true class
        return -nd.pick(nd.log(y_pre), y_true)
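To see what picking the true-class log-probability does, here is an equivalent computation in NumPy on made-up probabilities. `cross_entropy_np` is a hypothetical helper for this example; it uses integer array indexing in place of `nd.pick`.

```python
import numpy as np

def cross_entropy_np(y_pre, y_true):
    rows = np.arange(len(y_true))
    # y_pre[rows, y_true] selects, for each row, the probability of the true class
    return -np.log(y_pre[rows, y_true])

y_pre = np.array([[0.7, 0.2, 0.1],
                  [0.1, 0.1, 0.8]])   # made-up predicted probabilities
y_true = np.array([0, 2])             # true class indices
loss = cross_entropy_np(y_pre, y_true)
print(loss)
```

The loss for each sample is `-log` of the probability assigned to its correct class, so confident correct predictions give a loss close to 0.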

8. Defining accuracy

    def accuracy(output, label):
        # fraction of samples whose highest-probability class matches the label
        return nd.mean(output.argmax(axis=1) == label).asscalar()
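The same accuracy computation can be traced by hand on a small example. The sketch below uses NumPy and four made-up predictions, three of which are correct.

```python
import numpy as np

output = np.array([[0.1, 0.8, 0.1],
                   [0.6, 0.3, 0.1],
                   [0.2, 0.2, 0.6],
                   [0.5, 0.4, 0.1]])   # made-up class probabilities
label = np.array([1, 0, 2, 1])

predicted = output.argmax(axis=1)      # index of the largest probability per row
acc = (predicted == label).mean()      # booleans average to the fraction correct
print(predicted, acc)
```

Only the last sample is misclassified (predicted 0, true label 1), so the accuracy is 3 out of 4.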

9. Defining test-set accuracy

    def evaluate_accuracy(data_iter, net):  # average accuracy over all batches
        acc = 0
        for data, label in data_iter:
            data, label = transform(data, label)
            output = net(data)
            acc += accuracy(output, label)
        return acc / len(data_iter)
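Note that `evaluate_accuracy` averages per-batch accuracies. With the 10,000 Fashion-MNIST test images and a batch size of 100, every batch is full, so this equals the overall accuracy. The plain-Python sketch below, with hypothetical helper names and made-up counts, shows how the two quantities diverge when the last batch is smaller.

```python
def mean_of_batch_accuracies(correct_counts, batch_sizes):
    # What averaging per-batch accuracies computes
    return sum(c / n for c, n in zip(correct_counts, batch_sizes)) / len(batch_sizes)

def sample_weighted_accuracy(correct_counts, batch_sizes):
    # Overall fraction of correctly classified samples
    return sum(correct_counts) / sum(batch_sizes)

equal = ([90, 80], [100, 100])     # two full batches
unequal = ([90, 1], [100, 10])     # a small final batch

print(mean_of_batch_accuracies(*equal), sample_weighted_accuracy(*equal))
print(mean_of_batch_accuracies(*unequal), sample_weighted_accuracy(*unequal))
```

If the batch size does not divide the dataset size, accumulating the number of correct predictions and dividing by the total sample count is the safer choice.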

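10. SGD (gradient descent) optimizer

    def SGD(params, lr):
        for pa in params:
            # move each parameter a step of size lr against its gradient;
            # pa[:] writes in place so the attached gradient buffer is kept
            pa[:] = pa - lr * pa.grad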
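The in-place assignment in the update matters: writing `pa = pa - lr * pa.grad` would only rebind the local name inside the loop and leave the original parameter untouched. The NumPy sketch below illustrates the same update rule with made-up numbers; `sgd_step` and its explicit `grads` argument are assumptions of this example, since NumPy arrays carry no `.grad` attribute.

```python
import numpy as np

def sgd_step(params, grads, lr):
    for p, g in zip(params, grads):
        p[:] = p - lr * g        # slice assignment overwrites the existing array

w = np.array([1.0, -2.0])
grad_w = np.array([0.5, -1.0])   # made-up gradient
w_alias = w                      # second reference to the same array

sgd_step([w], [grad_w], lr=0.1)
print(w, w_alias is w)
```

Because the update is written into the existing array, every reference to the parameter (here `w_alias`) sees the new values.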

11. Training

    lr = 0.1
    epochs = 20
    for epoch in range(epochs):
        train_loss = 0
        train_acc = 0
        for image, y in train_data:
            image, y = transform(image, y)  # cast types and normalize the data
            with ag.record():
                output = net(image)
                loss = cross_entropy(output, y)
            loss.backward()
            # backward() sums the per-sample losses, so dividing lr by batch_size
            # averages the gradient and makes lr less sensitive to batch_size
            SGD(params, lr / batch_size)
            train_loss += nd.mean(loss).asscalar()
            train_acc += accuracy(output, y)
        test_acc = evaluate_accuracy(test_data, net)
        print("Epoch %d, Loss:%f, Train acc:%f, Test acc:%f"
              % (epoch, train_loss / len(train_data), train_acc / len(train_data), test_acc))
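The `lr/batch_size` trick in the loop above can be verified numerically: stepping with `lr / batch_size` along the gradient of the summed loss is the same as stepping with `lr` along the gradient of the mean loss. The sketch below checks this in NumPy for a small softmax regression with made-up data, using the standard closed-form gradient `X.T @ (probs - one_hot)` instead of MXNet's autograd.

```python
import numpy as np

rng = np.random.default_rng(0)
batch_size, num_input, num_output = 5, 4, 3       # made-up sizes
X = rng.random((batch_size, num_input))
y = rng.integers(0, num_output, size=batch_size)
W = rng.normal(size=(num_input, num_output))

logits = X @ W
logits -= logits.max(axis=1, keepdims=True)       # stabilize the exponentials
probs = np.exp(logits) / np.exp(logits).sum(axis=1, keepdims=True)
one_hot = np.eye(num_output)[y]

grad_sum = X.T @ (probs - one_hot)                  # gradient of the summed loss
grad_mean = X.T @ ((probs - one_hot) / batch_size)  # gradient of the mean loss

lr = 0.1
step_from_sum = (lr / batch_size) * grad_sum
step_from_mean = lr * grad_mean
print(np.allclose(step_from_sum, step_from_mean))
```

This is why the same `lr` keeps a comparable effective step size when `batch_size` changes.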

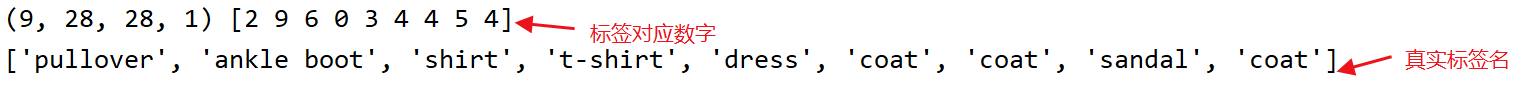

Training results:

As the output shows, the loss decreases steadily while both the training and test accuracy improve.

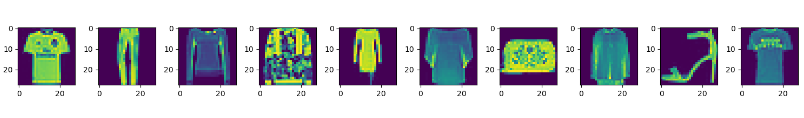

12. Prediction

Once training is complete, the model can be used to predict labels for samples.

    image_10, label_10 = mnist_test[:10]  # take the first 10 test samples
    show_image(image_10)
    print("True labels:", label_10)
    print("Clothing names for the true labels:", get_fashion_mnist_labels(label_10))

    image_10, label_10 = transform(image_10, label_10)
    predict_label = net(image_10).argmax(axis=1)
    print("Predicted labels:", predict_label.astype("int8"))
    print("Clothing names for the predicted labels:", get_fashion_mnist_labels(predict_label.asnumpy()))

Prediction results:

Full source code:

    import mxnet.gluon as gn
    import mxnet.autograd as ag
    import mxnet.ndarray as nd
    import matplotlib.pyplot as plt

    def transform(data, label):
        # cast to float32 and scale pixel values from [0, 255] to [0, 1]
        return data.astype("float32") / 255, label.astype("float32")

    mnist_train = gn.data.vision.FashionMNIST(train=True)
    mnist_test = gn.data.vision.FashionMNIST(train=False)
    data, label = mnist_train[0:9]
    print(data.shape, label)  # inspect the data shape and labels

    def show_image(image):  # display a batch of images side by side
        n = image.shape[0]
        _, figs = plt.subplots(1, n, figsize=(15, 15))
        for i in range(n):
            figs[i].imshow(image[i].reshape((28, 28)).asnumpy())
        plt.show()

    def get_fashion_mnist_labels(labels):  # map numeric labels to clothing names
        text_labels = ['t-shirt', 'trouser', 'pullover', 'dress', 'coat',
                       'sandal', 'shirt', 'sneaker', 'bag', 'ankle boot']
        return [text_labels[int(i)] for i in labels]

    # show_image(data)
    # print(get_fashion_mnist_labels(label))

    # ---- Reading the data ----
    batch_size = 100
    # defined but not used below; normalization is done in transform()
    transformer = gn.data.vision.transforms.ToTensor()
    train_data = gn.data.DataLoader(dataset=mnist_train, batch_size=batch_size, shuffle=True)
    test_data = gn.data.DataLoader(dataset=mnist_test, batch_size=batch_size, shuffle=False)

    # ---- Initializing the model parameters ----
    num_input = 28 * 28 * 1
    num_output = 10
    w = nd.random_normal(shape=(num_input, num_output))
    b = nd.random_normal(shape=(num_output,))
    params = [w, b]
    for param in params:
        param.attach_grad()

    # Softmax normalization (turns scores into probabilities)
    def softmax(x):
        exp = nd.exp(x)  # element-wise exponential; exp is a matrix
        partition = exp.sum(axis=1, keepdims=True)
        return exp / partition

    # Model
    def net(X):
        return softmax(nd.dot(X.reshape(-1, num_input), w) + b)

    # Cross-entropy loss function
    def cross_entropy(y_pre, y_true):
        return -nd.pick(nd.log(y_pre), y_true)

    # Accuracy
    def accuracy(output, label):
        return nd.mean(output.argmax(axis=1) == label).asscalar()

    def evaluate_accuracy(data_iter, net):  # average accuracy over all batches
        acc = 0
        for data, label in data_iter:
            data, label = transform(data, label)
            output = net(data)
            acc += accuracy(output, label)
        return acc / len(data_iter)

    # Gradient descent optimizer
    def SGD(params, lr):
        for pa in params:
            pa[:] = pa - lr * pa.grad  # step each parameter against its gradient, in place

    # ---- Training ----
    lr = 0.1
    epochs = 20
    for epoch in range(epochs):
        train_loss = 0
        train_acc = 0
        for image, y in train_data:
            image, y = transform(image, y)  # cast types and normalize the data
            with ag.record():
                output = net(image)
                loss = cross_entropy(output, y)
            loss.backward()
            # backward() sums the per-sample losses, so dividing lr by batch_size
            # averages the gradient and makes lr less sensitive to batch_size
            SGD(params, lr / batch_size)
            train_loss += nd.mean(loss).asscalar()
            train_acc += accuracy(output, y)
        test_acc = evaluate_accuracy(test_data, net)
        print("Epoch %d, Loss:%f, Train acc:%f, Test acc:%f"
              % (epoch, train_loss / len(train_data), train_acc / len(train_data), test_acc))

    # ---- Prediction ----
    # Once training is complete, the model can predict labels for samples
    image_10, label_10 = mnist_test[:10]  # take the first 10 test samples
    show_image(image_10)
    print("True labels:", label_10)
    print("Clothing names for the true labels:", get_fashion_mnist_labels(label_10))

    image_10, label_10 = transform(image_10, label_10)
    predict_label = net(image_10).argmax(axis=1)
    print("Predicted labels:", predict_label.astype("int8"))
    print("Clothing names for the predicted labels:", get_fashion_mnist_labels(predict_label.asnumpy()))