Written by joezou(邹镇洪), 2019/4/5

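| Item | Content |
|---|---|
| Course this assignment belongs to | Artificial Intelligence in Practice 2019 - Beihang University |
| Assignment requirements | Artificial Intelligence in Practice, Assignment 5 (individual) |
| My goal in this course | Learn to deploy machine learning models in the cloud and build an app |
| How this assignment helps me reach that goal | Practicing Python code and learning the softmax function |
| Other references | None |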

Goal: fit the logical AND gate and the logical OR gate.

AND gate

| label | data1 | data2 | data3 | data4 |
|---|---|---|---|---|
| x1 | 0 | 0 | 1 | 1 |
| x2 | 0 | 1 | 0 | 1 |
| y | 0 | 0 | 0 | 1 |

OR gate

| label | data1 | data2 | data3 | data4 |
|---|---|---|---|---|
| x1 | 0 | 0 | 1 | 1 |
| x2 | 0 | 1 | 0 | 1 |
| y | 0 | 1 | 1 | 1 |
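
The training code that follows computes its gradient as `dloss_z = A - Y`. A short derivation shows where that expression comes from, assuming (as `CheckLoss` does) a sigmoid output combined with the cross-entropy loss. The lowercase symbols $w$, $b$, $x$, $a$, $y$ are notation chosen here for a single sample; they correspond to `W`, `B`, `x`, the output of `ForwardCalculation`, and `y` in the code.

$$
\begin{aligned}
z &= w x + b, \qquad a = \sigma(z) = \frac{1}{1 + e^{-z}}, \\
J &= -\bigl[\, y \ln a + (1 - y) \ln (1 - a) \,\bigr], \\
\frac{\partial J}{\partial a} &= \frac{a - y}{a (1 - a)}, \qquad \frac{\partial a}{\partial z} = a (1 - a), \\
\frac{\partial J}{\partial z} &= a - y, \qquad
\frac{\partial J}{\partial w} = (a - y)\, x^{\top}, \qquad
\frac{\partial J}{\partial b} = a - y .
\end{aligned}
$$

The factor $a(1 - a)$ cancels between the loss derivative and the sigmoid derivative, which is why `BackPropagation` needs only `A - Y` and the input.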

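import numpy as np
import matplotlib.pyplot as plt

def ReadAndData(gate):
    # each column of X is one sample: row 0 holds x1, row 1 holds x2
    X = np.array([0,0,1,1,0,1,0,1]).reshape(2,4)
    AND = np.array([0,0,0,1]).reshape(1,4)
    OR = np.array([0,1,1,1]).reshape(1,4)
    if gate=='and':
        return X,AND
    if gate=='or':
        return X,OR
    raise ValueError("gate must be 'and' or 'or'")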

def Sigmoid(x):
    s=1/(1+np.exp(-x))
    return s

def ForwardCalculation(W,B,X):
    z = np.dot(W, X) + B
    a = Sigmoid(z)
    return a

def BackPropagation(X,Y,A):
    # gradient of the cross-entropy loss with respect to z for a sigmoid output
    dloss_z = A - Y
    db = dloss_z
    dw = np.dot(dloss_z, X.T)
    return dw, db

def UpdateWeights(w, b, dW, dB, eta):
    w = w - eta * dW
    b = b - eta * dB
    return w,b

def CheckLoss(w, b, X, Y, count):
    # mean binary cross-entropy over all samples
    A = ForwardCalculation(w, b, X)
    p1 = Y * np.log(A)
    p2 = (1-Y) * np.log(1-A)
    LOSS = -(p1 + p2)
    loss = np.sum(LOSS) / count
    return loss

def InitialParameters(num_input, num_output, flag):
    if flag == 0:
        # all zeros
        W1 = np.zeros((num_output, num_input))
    elif flag == 1:
        # standard normal
        W1 = np.random.normal(size=(num_output, num_input))
    elif flag == 2:
        # Xavier (Glorot) uniform
        W1 = np.random.uniform(-np.sqrt(6)/np.sqrt(num_input+num_output),
                               np.sqrt(6)/np.sqrt(num_output+num_input),
                               size=(num_output,num_input))
    else:
        raise ValueError("flag must be 0, 1 or 2")
    B1 = np.zeros((num_output, 1))
    return W1,B1

def ShowResult(W,B,X,Y):
    # decision boundary w1*x1 + w2*x2 + b = 0, rewritten as x2 = w*x1 + b
    w = -W[0,0]/W[0,1]
    b = -B[0,0]/W[0,1]
    x = np.array([0,1])
    y = w * x + b
    plt.plot(x,y)
    # samples with label 0 are blue circles, label 1 red triangles
    for i in range(X.shape[1]):
        if Y[0,i] == 0:
            plt.scatter(X[0,i],X[1,i],marker="o",c='b',s=64)
        else:
            plt.scatter(X[0,i],X[1,i],marker="^",c='r',s=64)
    plt.axis([-0.1,1.1,-0.1,1.1])
    plt.show()

if __name__ == '__main__':
    n_input = 2
    n_output = 1
    # third argument selects the initialization: 0 = zeros, 1 = normal, 2 = Xavier uniform
    W,B = InitialParameters(n_input, n_output,1)
    # initialize_data
    eta = 0.1
    iteration, max_iteration = 0, 10000
    eps = 1e-2
    loss = 0
    GATE = 'or'
    X, Y = ReadAndData(GATE)
    # count of features and samples
    num_features = X.shape[0]
    num_example = X.shape[1]
    for iteration in range(max_iteration):
        for i in range(num_example):
            # get x and y value for one sample
            x = X[:,i].reshape(num_features,1)
            y = Y[:,i].reshape(1,1)
            # forward pass: z holds the sigmoid output for this sample
            z = ForwardCalculation(W, B, x)
            # calculate gradient of w and b
            dW, dB = BackPropagation(x, y, z)
            # update w,b
            W, B = UpdateWeights(W, B, dW, dB, eta)
            # calculate loss over all samples
            loss = CheckLoss(W,B,X,Y,num_example)
            # condition 1 to stop
            if loss < eps:
                break
            #print(iteration,i,loss,W,B)
        if loss < eps:
            break
    print("Gate = %s, w = %.5f %.5f, b = %.5f" % (GATE,W[0,0],W[0,1],B[0,0]))
    ShowResult(W,B,X,Y)

Results:

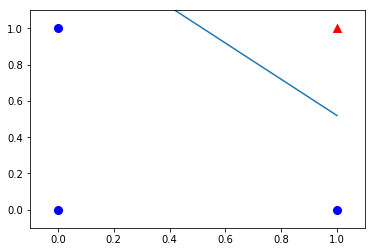

Gate = or, flag = 0, w = 8.51377 8.51633, b = -3.79194, iteration=2322

Gate = or, flag = 1, w = 8.51580 8.51501, b = -3.79102, iteration=2319

Gate = or, flag = 2, w = 8.51418 8.51649, b = -3.79098, iteration=2312

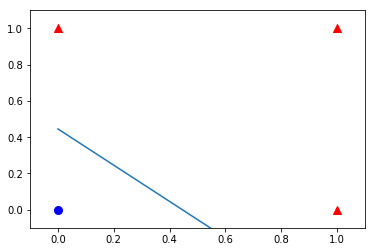

Gate = and, flag = 0, w = 8.53594 8.53331, b = -12.97199, iteration=4321

Gate = and, flag = 1, w = 8.53464 8.53318, b = -12.97296, iteration=4311

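Gate = and, flag = 2, w = 8.53470 8.53323, b = -12.97305, iteration=4319

All three initializations converge to nearly the same weights in a similar number of iterations, so the choice of parameter initialization makes no noticeable difference for this network.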
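
The comparison across `flag` values can be reproduced with a compact sketch like the one below. It is not the original script: the helper names `init_weights` and `train`, the fixed `seed`, and the use of `np.random.default_rng` are assumptions made here for reproducibility, and the loss is checked once per pass over the data instead of after every sample, so the iteration counts will not match the figures above exactly.

```python
import numpy as np

def init_weights(n_in, n_out, flag, rng):
    """flag 0 = zeros, 1 = standard normal, 2 = Xavier (Glorot) uniform."""
    if flag == 0:
        return np.zeros((n_out, n_in))
    if flag == 1:
        return rng.normal(size=(n_out, n_in))
    limit = np.sqrt(6.0 / (n_in + n_out))
    return rng.uniform(-limit, limit, size=(n_out, n_in))

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def train(X, Y, flag, eta=0.1, eps=1e-2, max_epoch=10000, seed=0):
    """Per-sample gradient descent on a single sigmoid neuron."""
    rng = np.random.default_rng(seed)  # hypothetical fixed seed
    W = init_weights(X.shape[0], 1, flag, rng)
    B = np.zeros((1, 1))
    m = X.shape[1]
    for epoch in range(max_epoch):
        for i in range(m):
            x = X[:, i:i + 1]          # one sample as a column vector
            y = Y[:, i:i + 1]
            dz = sigmoid(W @ x + B) - y
            W = W - eta * (dz @ x.T)
            B = B - eta * dz
        # mean cross-entropy over all samples, checked once per epoch
        A = sigmoid(W @ X + B)
        loss = float(np.sum(-(Y * np.log(A) + (1 - Y) * np.log(1 - A))) / m)
        if loss < eps:
            break
    return W, B, loss, epoch

X = np.array([[0, 0, 1, 1],
              [0, 1, 0, 1]])
Y_OR = np.array([[0, 1, 1, 1]])

results = {flag: train(X, Y_OR, flag) for flag in (0, 1, 2)}
for flag, (W, B, loss, epoch) in results.items():
    print("flag = %d, w = %.5f %.5f, b = %.5f, epoch = %d"
          % (flag, W[0, 0], W[0, 1], B[0, 0], epoch))
```

Passing the AND labels `np.array([[0, 0, 0, 1]])` instead of `Y_OR` runs the same comparison for the AND gate.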

Plots

- AND gate

- OR gate
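
As a sanity check on the plots, the sketch below takes the `flag = 0` parameters from the result lines above, confirms that thresholding the sigmoid output at 0.5 reproduces both truth tables, and computes the line that `ShowResult` draws. The helper names `predict` and `boundary` and the 0.5 threshold are assumptions introduced here, not part of the original code.

```python
import numpy as np

# Parameters copied from the flag = 0 result lines.
REPORTED = {
    "or":  {"w": np.array([8.51377, 8.51633]), "b": -3.79194},
    "and": {"w": np.array([8.53594, 8.53331]), "b": -12.97199},
}
# Each column is one sample: row 0 is x1, row 1 is x2.
X = np.array([[0, 0, 1, 1],
              [0, 1, 0, 1]])
EXPECTED = {"or": np.array([0, 1, 1, 1]), "and": np.array([0, 0, 0, 1])}

def predict(w, b, X):
    """Sigmoid output thresholded at 0.5 (hypothetical decision rule)."""
    a = 1.0 / (1.0 + np.exp(-(w @ X + b)))
    return (a >= 0.5).astype(int)

def boundary(w, b):
    """Slope and intercept of x2 = slope * x1 + intercept, where w.x + b = 0."""
    return -w[0] / w[1], -b / w[1]

for gate, p in REPORTED.items():
    slope, intercept = boundary(p["w"], p["b"])
    print(gate, predict(p["w"], p["b"], X),
          "slope = %.4f, intercept = %.4f" % (slope, intercept))
```

Both lines have a slope close to -1. The intercept is roughly 0.45 for the OR gate, which separates (0,0) from the other three points, and roughly 1.52 for the AND gate, which separates (1,1) from the rest.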