Copyright notice: this is an original article by the blog author and may not be reproduced without the author's permission. https://blog.csdn.net/Apple_hzc/article/details/84144712

I. Deep learning assignment

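In this assignment, we learn how to build deeper convolutional networks using residual networks (ResNets). In theory, very deep networks can represent very complex functions, but in practice they are hard to build and train. Residual networks make it possible to train much deeper networks than before. For a detailed treatment of residual networks, see the paper: Deep Residual Learning for Image Recognition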

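In this assignment, you will:

- Implement the basic building blocks of ResNets.
- Put these building blocks together to implement and train a state-of-the-art neural network for image classification.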

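As a neural network gets deeper, it can represent increasingly complex functions and learn features at many levels of abstraction, from low-level to high-level. Depth also brings problems, however. Beyond a certain point a deeper network does not necessarily perform better, and one major obstacle is the vanishing gradient problem.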

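In a very deep network, the gradient signal often goes to zero quickly, which makes gradient descent very slow. More specifically, during backpropagation from the last layer to the first, each step multiplies by a weight matrix, so the gradient can shrink exponentially toward zero (or, in some cases, grow exponentially and explode).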
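To make the effect concrete, here is a toy sketch that is not part of the assignment code. It models backpropagation through a stack of scalar linear layers that all share one weight. The function name `backprop_gradient_scale` and the weight values are illustrative assumptions; a real network multiplies by weight matrices and activation derivatives, but the exponential trend is the same in spirit.

```python
def backprop_gradient_scale(weight, depth):
    """Return the factor by which a gradient is scaled after flowing
    backward through `depth` scalar linear layers that share `weight`."""
    scale = 1.0
    for _ in range(depth):
        scale *= weight  # each layer multiplies the gradient by its weight
    return scale


for depth in (5, 20, 50):
    shrinking = backprop_gradient_scale(0.5, depth)
    growing = backprop_gradient_scale(1.5, depth)
    print(f"depth={depth:2d}  w=0.5 -> {shrinking:.3e}   w=1.5 -> {growing:.3e}")
```

With a weight below 1 the scale collapses toward zero as depth grows, and with a weight above 1 it blows up, which matches the two cases described above.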

You will now address this problem by building a residual network.

II. Code

# Note: these imports appear to target standalone Keras 2.x on a TensorFlow 1.x backend.
import numpy as np
from keras import layers
from keras.layers import (Input, Add, Dense, Activation, ZeroPadding2D, BatchNormalization,
                          Flatten, Conv2D, AveragePooling2D, MaxPooling2D, GlobalMaxPooling2D)
from keras.models import Model, load_model
from keras.preprocessing import image
from keras.utils import layer_utils
from keras.utils.data_utils import get_file
from keras.applications.imagenet_utils import preprocess_input
from IPython.display import SVG
from keras.utils.vis_utils import model_to_dot
from keras.utils import plot_model
from resnets_utils import *  # provides load_dataset and convert_to_one_hot
from keras.initializers import glorot_uniform
import scipy.misc
from matplotlib.pyplot import imshow
import os

os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'  # reduce TensorFlow log output

import keras.backend as K

K.set_image_data_format('channels_last')  # tensors are (batch, height, width, channels)
K.set_learning_phase(1)                   # 1 = training mode

X_train_orig, Y_train_orig, X_test_orig, Y_test_orig, classes = load_dataset()

# Scale pixel values to [0, 1]
X_train = X_train_orig / 255.
X_test = X_test_orig / 255.

# One-hot encode the labels (6 classes)
Y_train = convert_to_one_hot(Y_train_orig, 6).T
Y_test = convert_to_one_hot(Y_test_orig, 6).T

# print("number of training examples = " + str(X_train.shape[0]))
# print("number of test examples = " + str(X_test.shape[0]))
# print("X_train shape: " + str(X_train.shape))
# print("Y_train shape: " + str(Y_train.shape))
# print("X_test shape: " + str(X_test.shape))
# print("Y_test shape: " + str(Y_test.shape))

def identity_block(X, f, filters, stage, block):
    """Residual block whose shortcut is the unchanged input.

    The main path must preserve the shape of X, so F3 has to equal the
    number of input channels.
    """
    conv_name_base = 'res' + str(stage) + block + '_branch'
    bn_name_base = 'bn' + str(stage) + block + '_branch'

    F1, F2, F3 = filters

    # Save the input; it is added back to the main path at the end
    X_shortcut = X

    # First component of main path: 1x1 convolution
    X = Conv2D(filters=F1, kernel_size=(1, 1), strides=(1, 1), padding='valid', name=conv_name_base + '2a',
               kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2a')(X)
    X = Activation('relu')(X)

    # Second component of main path: f x f convolution
    X = Conv2D(filters=F2, kernel_size=(f, f), strides=(1, 1), padding='same', name=conv_name_base + '2b',
               kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2b')(X)
    X = Activation('relu')(X)

    # Third component of main path: 1x1 convolution, no activation yet
    X = Conv2D(filters=F3, kernel_size=(1, 1), strides=(1, 1), padding='valid', name=conv_name_base + '2c',
               kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2c')(X)

    # Final step: add the shortcut to the main path, then apply ReLU
    X = Add()([X, X_shortcut])
    X = Activation('relu')(X)

    return X

# Optional sanity check for identity_block (TensorFlow 1.x; needs `import tensorflow as tf`):
# tf.reset_default_graph()
# with tf.Session() as test:
#     np.random.seed(1)
#     A_prev = tf.placeholder("float", [3, 4, 4, 6])
#     X = np.random.randn(3, 4, 4, 6)
#     A = identity_block(A_prev, f = 2, filters = [2, 4, 6], stage = 1, block = 'a')
#     test.run(tf.global_variables_initializer())
#     out = test.run([A], feed_dict={A_prev: X, K.learning_phase(): 0})
#     print("out = " + str(out[0][1][1][0]))
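The structure of `identity_block` can be illustrated without Keras. The sketch below is a toy on plain Python lists, not the assignment's implementation: `toy_identity_block`, `relu`, and `zero_main_path` are hypothetical names, and the "main path" is any function that returns a list of the same length. It shows that the shortcut is added before the final ReLU, and that when the main path outputs zeros the block simply passes non-negative inputs through.

```python
def relu(values):
    """Element-wise ReLU on a list of floats."""
    return [max(0.0, v) for v in values]


def toy_identity_block(x, main_path):
    """Compute relu(main_path(x) + x); the shortcut is the unchanged input."""
    fx = main_path(x)
    if len(fx) != len(x):
        # Mirrors the Keras constraint: Add() needs matching shapes
        raise ValueError("identity shortcut needs matching shapes")
    return relu([a + b for a, b in zip(fx, x)])


def zero_main_path(x):
    """A main path that contributes nothing (all weights zero)."""
    return [0.0 for _ in x]


x = [0.5, 2.0, 0.0, 1.25]
print(toy_identity_block(x, zero_main_path))
```

The shape check corresponds to the requirement in `identity_block` that `F3` equal the number of input channels, since otherwise `Add()` could not combine the two paths.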

def convolutional_block(X, f, filters, stage, block, s=2):
    """Residual block whose shortcut passes through a 1x1 convolution.

    The shortcut convolution uses stride s and F3 filters so that its output
    matches a main path that changes the spatial size or channel count.
    """
    conv_name_base = 'res' + str(stage) + block + '_branch'
    bn_name_base = 'bn' + str(stage) + block + '_branch'

    F1, F2, F3 = filters

    # Save the input for the shortcut path
    X_shortcut = X

    # First component of main path: 1x1 convolution with stride s
    X = Conv2D(filters=F1, kernel_size=(1, 1), strides=(s, s), padding='valid', name=conv_name_base + '2a',
               kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2a')(X)
    X = Activation('relu')(X)

    # Second component of main path: f x f convolution
    X = Conv2D(filters=F2, kernel_size=(f, f), strides=(1, 1), padding='same', name=conv_name_base + '2b',
               kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2b')(X)
    X = Activation('relu')(X)

    # Third component of main path: 1x1 convolution, no activation yet
    X = Conv2D(filters=F3, kernel_size=(1, 1), strides=(1, 1), padding='valid', name=conv_name_base + '2c',
               kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2c')(X)

    # Shortcut path: 1x1 convolution with stride s, then batch normalization
    X_shortcut = Conv2D(filters=F3, kernel_size=(1, 1), strides=(s, s), padding='valid', name=conv_name_base + '1',
                        kernel_initializer=glorot_uniform(seed=0))(X_shortcut)
    X_shortcut = BatchNormalization(axis=3, name=bn_name_base + '1')(X_shortcut)

    # Final step: add the shortcut to the main path, then apply ReLU
    X = Add()([X, X_shortcut])
    X = Activation('relu')(X)

    return X

# Optional sanity check for convolutional_block (TensorFlow 1.x; needs `import tensorflow as tf`):
# tf.reset_default_graph()
# with tf.Session() as test:
#     np.random.seed(1)
#     A_prev = tf.placeholder("float", [3, 4, 4, 6])
#     X = np.random.randn(3, 4, 4, 6)
#     A = convolutional_block(A_prev, f=2, filters=[2, 4, 6], stage=1, block='a')
#     test.run(tf.global_variables_initializer())
#     out = test.run([A], feed_dict={A_prev: X, K.learning_phase(): 0})
#     print("out = " + str(out[0][1][1][0]))
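The reason `convolutional_block` needs a convolution on the shortcut is that its main path changes the tensor shape. The following sketch checks the spatial arithmetic only, using the standard output-size rules for `'valid'` and `'same'` padding. The helper names `conv_output_size` and `convolutional_block_shapes` are hypothetical, and channel counts are ignored here.

```python
def conv_output_size(n, kernel, stride, padding="valid"):
    """Spatial output size of a convolution along one axis."""
    if padding == "same":
        return -(-n // stride)          # ceil(n / stride)
    return (n - kernel) // stride + 1   # 'valid': no padding


def convolutional_block_shapes(n, f, s):
    """Return (main path size, shortcut size) for an input of size n."""
    main = conv_output_size(n, 1, s, "valid")      # 1x1 conv, stride s
    main = conv_output_size(main, f, 1, "same")    # f x f conv, stride 1
    main = conv_output_size(main, 1, 1, "valid")   # 1x1 conv, stride 1
    shortcut = conv_output_size(n, 1, s, "valid")  # 1x1 conv, stride s
    return main, shortcut


print(convolutional_block_shapes(15, f=3, s=2))  # 15 x 15 input
print(convolutional_block_shapes(4, f=2, s=2))   # sizes from the commented test
```

Both paths end at the same spatial size for any stride `s`, which is what allows `Add()` to combine them.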

def ResNet50(input_shape=(64, 64, 3), classes=6):
    """Build the ResNet50 model for inputs of shape input_shape."""
    # Define the input as a tensor with shape input_shape
    X_input = Input(input_shape)

    # Zero-padding
    X = ZeroPadding2D((3, 3))(X_input)

    # Stage 1
    X = Conv2D(64, (7, 7), strides=(2, 2), name='conv1', kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name='bn_conv1')(X)
    X = Activation('relu')(X)
    X = MaxPooling2D((3, 3), strides=(2, 2))(X)

    # Stage 2
    X = convolutional_block(X, f=3, filters=[64, 64, 256], stage=2, block='a', s=1)
    X = identity_block(X, 3, [64, 64, 256], stage=2, block='b')
    X = identity_block(X, 3, [64, 64, 256], stage=2, block='c')

    # Stage 3
    X = convolutional_block(X, f=3, filters=[128, 128, 512], stage=3, block='a', s=2)
    X = identity_block(X, 3, [128, 128, 512], stage=3, block='b')
    X = identity_block(X, 3, [128, 128, 512], stage=3, block='c')
    X = identity_block(X, 3, [128, 128, 512], stage=3, block='d')

    # Stage 4
    X = convolutional_block(X, f=3, filters=[256, 256, 1024], stage=4, block='a', s=2)
    X = identity_block(X, 3, [256, 256, 1024], stage=4, block='b')
    X = identity_block(X, 3, [256, 256, 1024], stage=4, block='c')
    X = identity_block(X, 3, [256, 256, 1024], stage=4, block='d')
    X = identity_block(X, 3, [256, 256, 1024], stage=4, block='e')
    X = identity_block(X, 3, [256, 256, 1024], stage=4, block='f')

    # Stage 5
    X = convolutional_block(X, f=3, filters=[512, 512, 2048], stage=5, block='a', s=2)
    X = identity_block(X, 3, [512, 512, 2048], stage=5, block='b')
    X = identity_block(X, 3, [512, 512, 2048], stage=5, block='c')

    # Average pooling
    X = AveragePooling2D((2, 2), strides=(2, 2))(X)

    # Output layer
    X = Flatten()(X)
    X = Dense(classes, activation='softmax', name='fc' + str(classes), kernel_initializer=glorot_uniform(seed=0))(X)

    # Create model
    model = Model(inputs=X_input, outputs=X, name='ResNet50')

    return model

model = ResNet50(input_shape=(64, 64, 3), classes=6)
model.compile(optimizer='adam', loss='categorical_crossentropy', metrics=['accuracy'])

# Train for 2 epochs, then evaluate on the test set
model.fit(X_train, Y_train, epochs=2, batch_size=32)
preds = model.evaluate(X_test, Y_test)
print("Loss = " + str(preds[0]))
print("Test Accuracy = " + str(preds[1]))
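As a rough check on the architecture defined in `ResNet50`, the sketch below traces the spatial size of a 64 x 64 input through each stage and counts the weighted layers. It is plain arithmetic rather than Keras code; `valid_output_size` and `resnet50_spatial_trace` are hypothetical helper names, and the layer count follows the usual convention of ignoring the shortcut convolutions.

```python
def valid_output_size(n, window, stride):
    """Output size of a 'valid' convolution or pooling window along one axis."""
    return (n - window) // stride + 1


def resnet50_spatial_trace(size=64):
    """Return (layer description, spatial size) pairs for a square input."""
    trace = [("input", size)]
    size += 2 * 3                         # ZeroPadding2D((3, 3))
    trace.append(("zero padding", size))
    size = valid_output_size(size, 7, 2)  # conv1: 7x7, stride 2
    trace.append(("stage 1 conv", size))
    size = valid_output_size(size, 3, 2)  # MaxPooling2D((3, 3), strides=(2, 2))
    trace.append(("stage 1 max pool", size))
    # Each stage opens with a convolutional block whose 1x1 convs use stride s;
    # the identity blocks that follow leave the size unchanged.
    for stage, s in ((2, 1), (3, 2), (4, 2), (5, 2)):
        size = valid_output_size(size, 1, s)
        trace.append((f"stage {stage}", size))
    size = valid_output_size(size, 2, 2)  # AveragePooling2D((2, 2), strides=(2, 2))
    trace.append(("average pool", size))
    return trace


trace = resnet50_spatial_trace(64)
for name, size in trace:
    print(f"{name:18s} {size} x {size}")

final_size = trace[-1][1]
flattened_features = final_size * final_size * 2048  # 2048 = F3 of stage 5
blocks_per_stage = [3, 4, 6, 3]                      # stages 2 to 5
weighted_layers = 1 + 3 * sum(blocks_per_stage) + 1  # conv1 + main-path convs + Dense
print("flattened features:", flattened_features)
print("weighted layers:", weighted_layers)
```

Under these assumptions the `Flatten` layer feeds 2048 features into the final `Dense` layer, and the count of 50 weighted layers is where the name ResNet50 comes from.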

III. Summary

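ResNet50 is a powerful model for image classification. We hope you can apply what you have learned here to your own classification problems and reach good accuracy. Congratulations on implementing a solid image classification system.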

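The key points to take away from this assignment are:

- Deeper is not always better for a neural network: as depth increases, vanishing gradients make the network harder to train.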

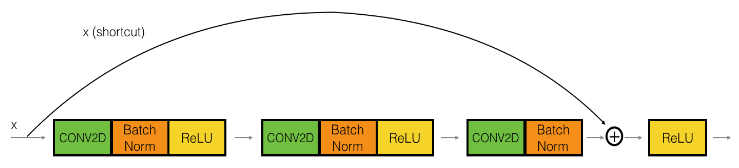

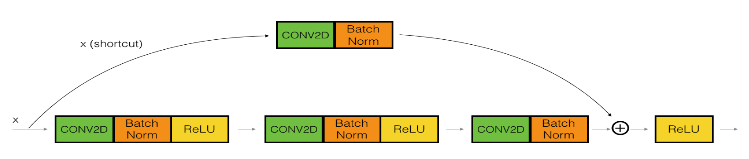

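- Skip connections help address the vanishing gradient problem.

- A residual network is built mainly from two kinds of block: the identity block and the convolutional block.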
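Why skip connections help can be seen in a scalar toy model, offered here as an illustration under simplifying assumptions rather than as an analysis of the network above. A plain linear layer `y = w * x` has derivative `w`, while a residual layer `y = x + w * x` has derivative `1 + w`, so the identity term keeps the gradient from collapsing when the weights are small. The function names and the weight value 0.01 are made up for this example.

```python
def plain_chain_gradient(weights):
    """d(output)/d(input) for a stack of layers y = w * x."""
    grad = 1.0
    for w in weights:
        grad *= w
    return grad


def residual_chain_gradient(weights):
    """d(output)/d(input) for a stack of layers y = x + w * x."""
    grad = 1.0
    for w in weights:
        grad *= 1.0 + w  # the shortcut contributes the constant 1
    return grad


weights = [0.01] * 50  # 50 layers with small weights
print("plain:   ", plain_chain_gradient(weights))
print("residual:", residual_chain_gradient(weights))
```

In this toy the plain chain's gradient is vanishingly small, whereas the residual chain's gradient stays on the order of 1.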