Sigmoid函数:

(

)

在每个特征上都乘以一个回归系数,然后把所有结果值相加,将这个总和代入Sigmoid函数中,进而得到一个范围在0~1直接的数值。(1类:大于0.5; 0类:小于0.5)

梯度上升法

梯度上升法基于的思想是:要找到函数的最大值,最好是沿着该函数的梯度方向探寻

- 梯度上升的迭代公式: ( 为步长)

- 梯度下降的迭代公式: ( 为步长)

Logistic回归梯度上升优化算法:

from numpy import *

def loadDataSet():

dataMat = []

labelMat = []

fr = open('testSet.txt')

for line in fr.readlines():

lineArr = line.strip().split()

dataMat.append([1.0, float(lineArr[0]), float(lineArr[1])])

labelMat.append(int(lineArr[2]))

return dataMat,labelMat

def sigmoid(inX):

return 1.0/(1+exp(-inX))

def gradAscent(dataMatIn, classLabels):

dataMatrix = mat(dataMatIn)

labelMat = mat(classLabels).transpose()#转置矩阵

m,n = shape(dataMatrix)

alpha = 0.001

maxCycles = 500#迭代次数上限

weights = ones((n,1))

for k in range(maxCycles):

h = sigmoid(dataMatrix * weights)

error = (labelMat - h)

weights = weights + alpha * dataMatrix.transpose() * error

return weights

def plotBestFit(weights):

import matplotlib.pyplot as plt

dataMat,labelMat = loadDataSet()

dataArr = array(dataMat)

n = shape(dataArr)[0]#行

xcord1 = []

ycord1 = []

xcord2 = []

ycord2 = []

for i in range(n):

if int(labelMat[i]) == 1:

xcord1.append(dataArr[i,1])

ycord1.append(dataArr[i,2])

else:

xcord2.append(dataArr[i,1])

ycord2.append(dataArr[i,2])

fig = plt.figure()

ax = fig.add_subplot(111)

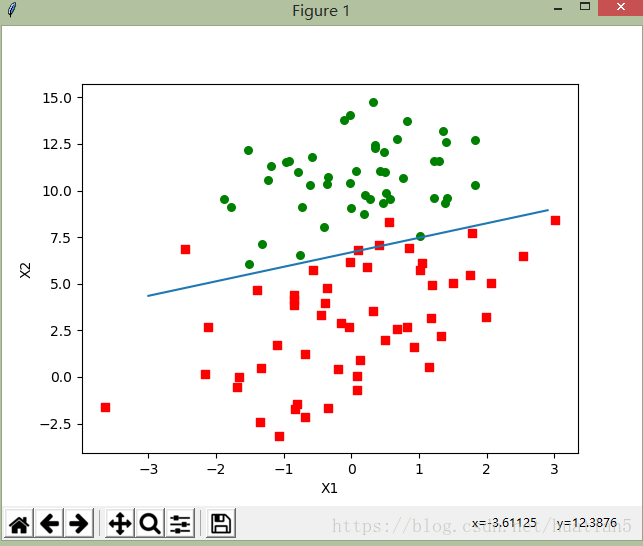

ax.scatter(xcord1, ycord1, s=30, c='red', marker='s')

ax.scatter(xcord2, ycord2, s=30,c='green')

x = arange(-3.0, 3.0, 0.1)

y = (-weights[0] - weights[1] * x) / weights[2]

ax.plot(x,y)

plt.xlabel('X1')

plt.ylabel('X2')

plt.show()

if __name__ == '__main__':

dataArr,labelMat = loadDataSet()

w = gradAscent(dataArr,labelMat)

plotBestFit(w.getA())输出结果:

随机梯度上升

随机梯度上升算法:

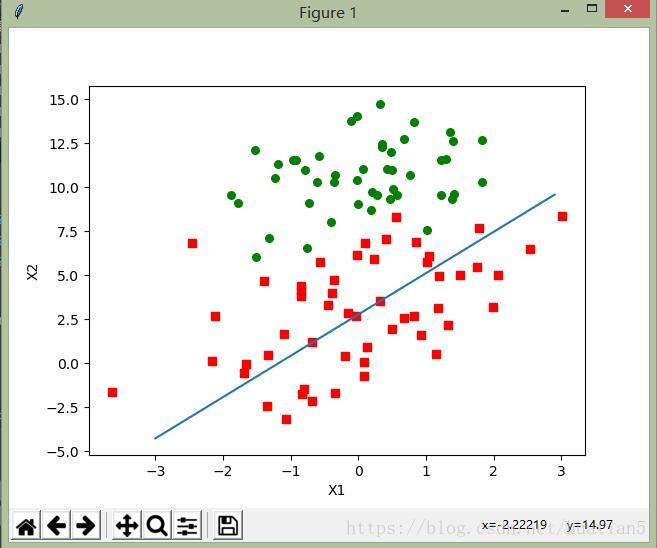

def stocGradAscent0(dataMatrix, classLabels):

m,n = shape(dataMatrix)

alpha = 0.01

weights = ones(n)

for i in range(m):

h = sigmoid(sum(dataMatrix[i]*weights))

error = classLabels[i] - h

weights = weights + alpha * error * dataMatrix[i]

return weights输出结果: