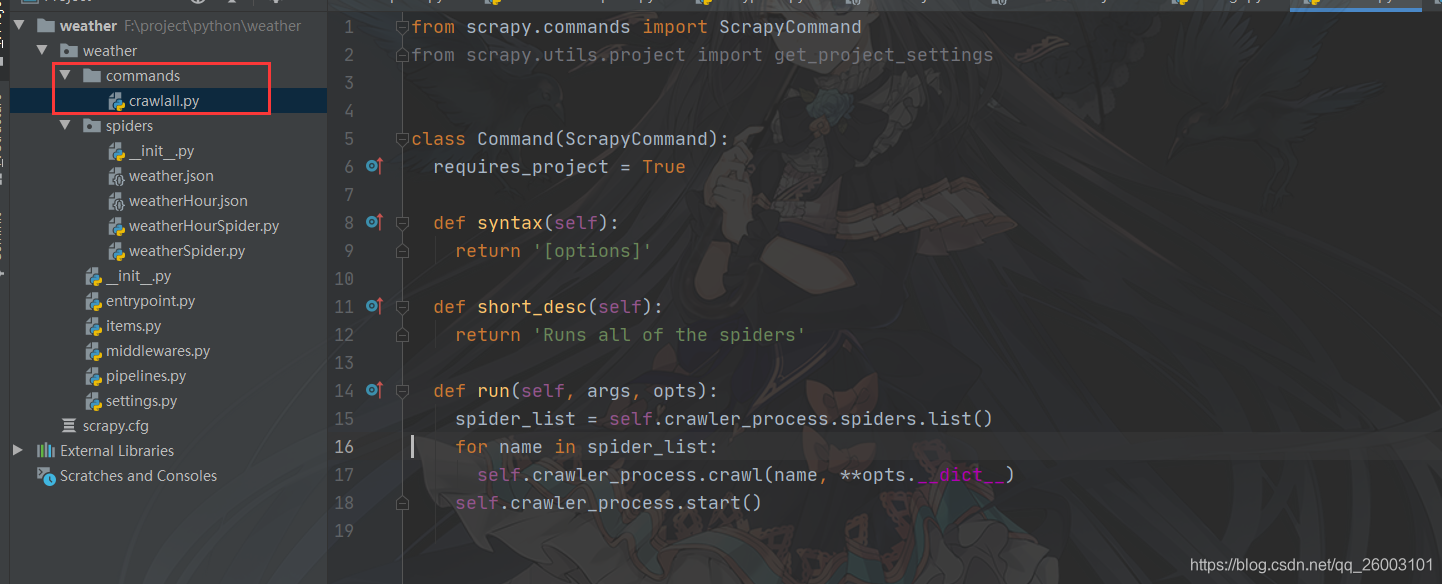

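Create a `commands` directory inside the project package and add a file named `crawlall.py` to it (the directory typically also needs an empty `__init__.py` so that it can be imported as a package):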

    from scrapy.commands import ScrapyCommand


    class Command(ScrapyCommand):
        # The command only works inside a Scrapy project.
        requires_project = True

        def syntax(self):
            return '[options]'

        def short_desc(self):
            return 'Runs all of the spiders'

        def run(self, args, opts):
            # Collect the names of every spider registered in the project.
            spider_list = self.crawler_process.spider_loader.list()
            for name in spider_list:
                # Schedule each spider, passing the parsed options as spider arguments.
                self.crawler_process.crawl(name, **opts.__dict__)
            # Start crawling; this blocks until all scheduled spiders have finished.
            self.crawler_process.start()
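The `**opts.__dict__` expression in `run` may look opaque, so here is a minimal sketch of what it does. It assumes the parsed options arrive as an `argparse.Namespace`. The function `fake_crawl` is a hypothetical stand-in for `crawler_process.crawl`, and the option values are illustrative only.

```python
from argparse import Namespace


def fake_crawl(spider_name, **spider_kwargs):
    """Hypothetical stand-in for crawler_process.crawl(); returns what it received."""
    return spider_name, spider_kwargs


# Illustrative parsed options; a real run carries whatever options the command defines.
opts = Namespace(logfile=None, loglevel="INFO")

# Every attribute of the namespace is unpacked into a keyword argument.
name, kwargs = fake_crawl("example_spider", **opts.__dict__)
print(name, kwargs)
```

Every command-line option therefore reaches each spider as a keyword argument, which is worth keeping in mind if a spider's `__init__` validates its arguments strictly.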

Add the following setting to the project's settings file (`settings.py`):

    COMMANDS_MODULE = "weather.commands"
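The dotted path `weather.commands` corresponds to a `commands` package inside the `weather` project package. As a rough sketch of that layout, the hypothetical helper below, `scaffold_commands_package`, creates empty placeholder files in a throwaway directory. It assumes the conventional package layout with an `__init__.py`; in a real project the files would live next to `settings.py`.

```python
import tempfile
from pathlib import Path


def scaffold_commands_package(project_pkg: Path) -> list:
    """Create commands/__init__.py and commands/crawlall.py under project_pkg."""
    commands_dir = project_pkg / "commands"
    commands_dir.mkdir(parents=True, exist_ok=True)
    created = []
    for filename in ("__init__.py", "crawlall.py"):
        path = commands_dir / filename
        path.touch(exist_ok=True)  # empty placeholder; existing content is left alone
        # Record the path relative to the directory that contains the package.
        created.append(path.relative_to(project_pkg.parent).as_posix())
    return created


# Demonstration in a temporary directory, using the package name from the setting.
with tempfile.TemporaryDirectory() as tmp:
    created_files = scaffold_commands_package(Path(tmp) / "weather")
    print(created_files)
```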

Run the command from within the Scrapy project:

    [root@AlexWong /]# scrapy crawlall

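Alternatively, run it from a local entry-point script, `entrypoint.py`:

    # Entry-point script
    from scrapy import cmdline

    # Equivalent to typing `scrapy crawlall` on the command line.
    cmdline.execute(['scrapy', 'crawlall'])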
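If the entry-point script should also forward extra command-line arguments (for example `-s LOG_LEVEL=INFO`), the argument list can be built before it is handed to `cmdline.execute`. The helper `build_argv` below is a hypothetical name introduced for illustration. The Scrapy call itself is left as a comment so that the sketch runs without a project.

```python
import sys


def build_argv(extra_args):
    """Return the argv list for `scrapy crawlall`, followed by any extra arguments."""
    return ["scrapy", "crawlall", *extra_args]


# Forward whatever was passed to this script after its own name.
argv = build_argv(sys.argv[1:])
print(argv)

# Inside a Scrapy project, the list would then be passed on as:
#   from scrapy import cmdline
#   cmdline.execute(argv)
```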