Multilayer Perceptron (MLP)

I. Basics of the multilayer perceptron

Hidden layer

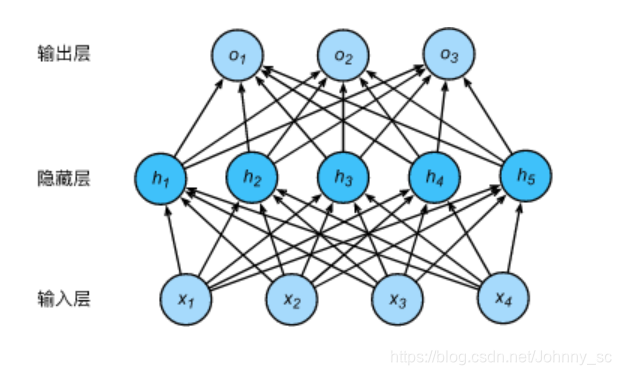

The figure below shows a multilayer perceptron with one hidden layer that contains 5 hidden units.

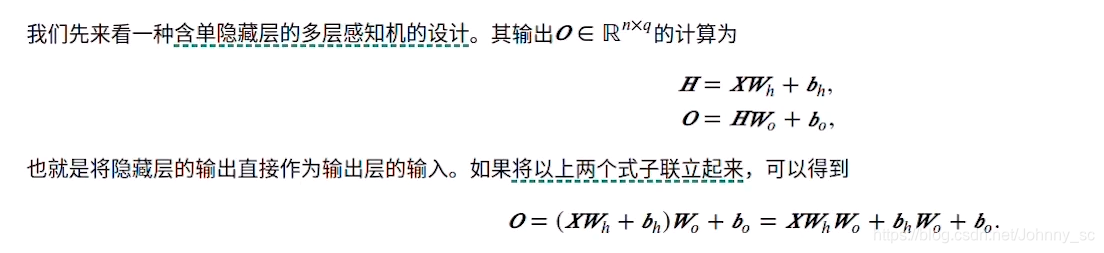

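Formulas for an MLP with a single hidden layer. Take a minibatch $\boldsymbol{X} \in \mathbb{R}^{n \times d}$ ($n$ samples, $d$ inputs), $h$ hidden units and $q$ outputs, with hidden-layer weights $\boldsymbol{W}_h \in \mathbb{R}^{d \times h}$ and bias $\boldsymbol{b}_h \in \mathbb{R}^{1 \times h}$, and output-layer weights $\boldsymbol{W}_o \in \mathbb{R}^{h \times q}$ and bias $\boldsymbol{b}_o \in \mathbb{R}^{1 \times q}$:

$$
\begin{aligned}
\boldsymbol{H} &= \boldsymbol{X} \boldsymbol{W}_h + \boldsymbol{b}_h,\\
\boldsymbol{O} &= \boldsymbol{H} \boldsymbol{W}_o + \boldsymbol{b}_o.
\end{aligned}
$$

Substituting the first equation into the second gives

$$
\boldsymbol{O} = (\boldsymbol{X} \boldsymbol{W}_h + \boldsymbol{b}_h)\boldsymbol{W}_o + \boldsymbol{b}_o = \boldsymbol{X} \boldsymbol{W}_h \boldsymbol{W}_o + \boldsymbol{b}_h \boldsymbol{W}_o + \boldsymbol{b}_o.
$$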

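The combined equation shows that, although the network now has a hidden layer, it is still equivalent to a single-layer network: the product of two weight matrices is just another weight matrix, and the two biases likewise merge into a single bias. No matter how many such hidden layers are stacked, this design remains equivalent to a single-layer network that has only an output layer. Therefore, to apply a nonlinear transformation to the hidden layer's output, we need to introduce an activation function.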

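Why an MLP needs activation functions:

Stacking several linear layers without activation functions gives no more expressive power than a single linear layer. Applying an activation function to the hidden layer's output is what makes the overall transformation nonlinear.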
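The collapse described above can be checked numerically. The stdlib-only sketch below uses arbitrarily chosen toy matrices and two hypothetical helpers (`matmul`, `add_bias`) written just for this illustration. It computes a two-layer network without activation, then a single layer whose weight is `Wh @ Wo` and whose bias is `bh @ Wo + bo`, and the two outputs agree.

```python
# Pure-Python check: two stacked linear layers (no activation) equal one linear layer.

def matmul(A, B):
    """Multiply matrix A (n x k) by matrix B (k x m); both are lists of rows."""
    return [[sum(A[i][t] * B[t][j] for t in range(len(B))) for j in range(len(B[0]))]
            for i in range(len(A))]

def add_bias(A, b):
    """Add the row vector b to every row of A (broadcast over the batch)."""
    return [[a + bj for a, bj in zip(row, b)] for row in A]

# Toy sizes: 2 samples, 3 inputs, 2 hidden units, 2 outputs (arbitrary values).
X = [[1.0, 2.0, -1.0],
     [0.5, -3.0, 2.0]]
Wh = [[0.2, -0.5],
      [1.0, 0.3],
      [-0.7, 0.8]]
bh = [0.1, -0.2]
Wo = [[1.5, -1.0],
      [0.4, 2.0]]
bo = [0.3, 0.0]

# Two layers, no activation: O = (X Wh + bh) Wo + bo
O_two_layers = add_bias(matmul(add_bias(matmul(X, Wh), bh), Wo), bo)

# Equivalent single layer: weight W = Wh Wo, bias b = bh Wo + bo
W = matmul(Wh, Wo)
b = add_bias(matmul([bh], Wo), bo)[0]
O_one_layer = add_bias(matmul(X, W), b)

print(O_two_layers)
print(O_one_layer)  # same values up to floating-point rounding
```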

II. Image classification with an MLP, implemented from scratch

```python
import torch
import numpy as np
import sys
sys.path.append("/home/kesci/input")  # make the d2lzh1981 helper module importable
import d2lzh1981 as d2l

print(torch.__version__)
```

Load the dataset

```python
batch_size = 256
train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size, root='/home/kesci/input/FashionMNIST2065')
```

Define the model parameters

```python
num_inputs, num_outputs, num_hiddens = 784, 10, 256

# Weights drawn from a normal distribution with mean 0 and std 0.01; biases start at zero
W1 = torch.tensor(np.random.normal(0, 0.01, (num_inputs, num_hiddens)), dtype=torch.float)
b1 = torch.zeros(num_hiddens, dtype=torch.float)
W2 = torch.tensor(np.random.normal(0, 0.01, (num_hiddens, num_outputs)), dtype=torch.float)
b2 = torch.zeros(num_outputs, dtype=torch.float)

params = [W1, b1, W2, b2]
for param in params:
    param.requires_grad_(requires_grad=True)  # track gradients so the parameters can be trained
```
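For a sense of scale, the shapes above give 784 × 256 + 256 + 256 × 10 + 10 learnable values. The stdlib-only snippet below does that arithmetic from the same shapes; in PyTorch the same total could be obtained with `sum(p.numel() for p in params)`.

```python
# Count the learnable parameters of the from-scratch MLP (shapes copied from the code above).
num_inputs, num_outputs, num_hiddens = 784, 10, 256

shapes = {
    "W1": (num_inputs, num_hiddens),   # 784 x 256
    "b1": (num_hiddens,),              # 256
    "W2": (num_hiddens, num_outputs),  # 256 x 10
    "b2": (num_outputs,),              # 10
}

def numel(shape):
    """Number of elements in a tensor of the given shape."""
    n = 1
    for d in shape:
        n *= d
    return n

counts = {name: numel(s) for name, s in shapes.items()}
total = sum(counts.values())
print(counts)  # {'W1': 200704, 'b1': 256, 'W2': 2560, 'b2': 10}
print(total)   # 203530
```

Almost all of the parameters sit in `W1`, because every one of the 784 pixels connects to every hidden unit.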

Define the activation function

```python
# ReLU activation: values greater than 0 are kept, values less than 0 become 0
def relu(X):
    return torch.max(input=X, other=torch.tensor(0.0))
```
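To see what `relu` does element by element, and why it breaks the linearity discussed earlier, here is a stdlib-only sketch that works on Python lists instead of tensors. The list-based `relu` is a hypothetical stand-in for the tensor version above.

```python
# Stdlib-only ReLU on a list, mirroring torch.max(X, 0.0) element by element.
def relu(xs):
    """Return max(x, 0.0) for every element of the list xs."""
    return [max(x, 0.0) for x in xs]

print(relu([-2.0, -0.5, 0.0, 1.5, 3.0]))  # [0.0, 0.0, 0.0, 1.5, 3.0]

# ReLU is not linear: relu(a + b) generally differs from relu(a) + relu(b).
a, b = -1.0, 2.0
lhs = relu([a + b])[0]             # relu(1.0) = 1.0
rhs = relu([a])[0] + relu([b])[0]  # 0.0 + 2.0 = 2.0
print(lhs, rhs)  # 1.0 2.0
```

Because `lhs != rhs`, a ReLU placed between two linear layers prevents them from merging into one matrix product.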

Define the network

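```python
def net(X):
    X = X.view((-1, num_inputs))  # flatten each image into a row vector of length num_inputs
    H = relu(torch.matmul(X, W1) + b1)  # multiply X by the weights W1, add the bias b1, then apply ReLU to get H
    return torch.matmul(H, W2) + b2  # a second linear step (matrix product plus bias) gives the output
```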
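The shape changes inside `net` can be traced without PyTorch. This sketch assumes each batch from `load_data_fashion_mnist` has shape `(batch_size, 1, 28, 28)`; `view_shape` and `matmul_shape` are hypothetical helpers that only compute shapes, not values.

```python
# Stdlib-only shape trace of net(X) for one Fashion-MNIST batch.
# Assumption: image batches arrive with shape (batch, 1, 28, 28).
from math import prod

batch_size, num_inputs, num_hiddens, num_outputs = 256, 784, 256, 10

def view_shape(shape, cols):
    """Shape after X.view((-1, cols)): the -1 is inferred from the total element count."""
    total = prod(shape)
    assert total % cols == 0, "element count must be divisible by cols"
    return (total // cols, cols)

def matmul_shape(a, b):
    """Shape of torch.matmul for 2-D operands a=(n, k) and b=(k, m)."""
    assert a[1] == b[0], "inner dimensions must match"
    return (a[0], b[1])

x_shape = (batch_size, 1, 28, 28)
flat = view_shape(x_shape, num_inputs)                  # (256, 784)
hidden = matmul_shape(flat, (num_inputs, num_hiddens))  # (256, 256); adding b1 and ReLU keep this shape
out = matmul_shape(hidden, (num_hiddens, num_outputs))  # (256, 10); one score per class
print(flat, hidden, out)
```

Because the `-1` in `view` is inferred from the element count, a smaller final batch flattens the same way: `view_shape((96, 1, 28, 28), 784)` gives `(96, 784)`.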

Define the loss function

```python
loss = torch.nn.CrossEntropyLoss()
```
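`net` returns raw scores (logits) without a softmax. That is intended: `nn.CrossEntropyLoss` applies log-softmax internally and, with its default `reduction='mean'`, averages the negative log-likelihood of the true class over the batch. A stdlib-only sketch of that computation, using a hypothetical `cross_entropy` helper:

```python
# Stdlib-only sketch of softmax cross-entropy on logits, averaged over the batch.
import math

def cross_entropy(logits, labels):
    """logits: list of rows of raw scores; labels: list of true class indices."""
    total = 0.0
    for row, y in zip(logits, labels):
        m = max(row)  # subtract the max for numerical stability (log-sum-exp trick)
        log_sum_exp = m + math.log(sum(math.exp(v - m) for v in row))
        total += log_sum_exp - row[y]  # equals -log(softmax(row)[y])
    return total / len(labels)

print(cross_entropy([[0.0, 0.0]], [0]))  # ln 2, about 0.6931
print(cross_entropy([[2.0, 0.0, -1.0], [0.5, 0.5, 3.0]], [0, 2]))
```

Equal scores for two classes give a loss of ln 2; the loss shrinks toward 0 as the true class's score dominates.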

Training

```python
num_epochs, lr = 5, 100.0

# For reference, the training loop that d2l.train_ch3 runs:
# def train_ch3(net, train_iter, test_iter, loss, num_epochs, batch_size,
#               params=None, lr=None, optimizer=None):
#     for epoch in range(num_epochs):
#         train_l_sum, train_acc_sum, n = 0.0, 0.0, 0
#         for X, y in train_iter:
#             y_hat = net(X)
#             l = loss(y_hat, y).sum()
#
#             # zero the gradients
#             if optimizer is not None:
#                 optimizer.zero_grad()
#             elif params is not None and params[0].grad is not None:
#                 for param in params:
#                     param.grad.data.zero_()
#
#             l.backward()
#             if optimizer is None:
#                 d2l.sgd(params, lr, batch_size)
#             else:
#                 optimizer.step()  # used in the "concise implementation of softmax regression" section
#
#             train_l_sum += l.item()
#             train_acc_sum += (y_hat.argmax(dim=1) == y).sum().item()
#             n += y.shape[0]
#         test_acc = d2l.evaluate_accuracy(test_iter, net)
#         print('epoch %d, loss %.4f, train acc %.3f, test acc %.3f'
#               % (epoch + 1, train_l_sum / n, train_acc_sum / n, test_acc))

d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, batch_size, params, lr)
```
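The `lr = 100.0` above looks far larger than the `lr=0.5` used with `torch.optim.SGD` in the concise implementation. A likely explanation, assuming `d2lzh1981` copies the `sgd` helper from d2lzh_pytorch, is that `d2l.sgd` updates each parameter as `param.data -= lr * param.grad / batch_size`, even though `CrossEntropyLoss` has already averaged the loss over the batch. The effective step size is then `lr / batch_size`. The hypothetical `sgd_step` below reproduces that assumed rule on plain floats.

```python
# Hypothetical sketch of the assumed d2l.sgd update rule: p -= lr * grad / batch_size.
def sgd_step(params, grads, lr, batch_size):
    """Return updated parameters; params and grads are flat lists of floats."""
    return [p - lr * g / batch_size for p, g in zip(params, grads)]

lr, batch_size = 100.0, 256
# The loss is already a batch mean, so the extra division shrinks every step.
effective_lr = lr / batch_size
print(effective_lr)  # 0.390625

# A gradient of 0.5 moves a parameter by effective_lr * 0.5 = 0.1953125.
print(sgd_step([1.0], [0.5], lr, batch_size))  # [0.8046875]
```

Under this assumption the effective learning rate (about 0.39) is in the same range as the 0.5 used later with `torch.optim.SGD`.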

III. Concise implementation with PyTorch

```python
import torch
from torch import nn
from torch.nn import init
import numpy as np
import sys
sys.path.append("/home/kesci/input")  # make the d2lzh1981 helper module importable
import d2lzh1981 as d2l

print(torch.__version__)
```

Initialize the model and its parameters

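```python
num_inputs, num_outputs, num_hiddens = 784, 10, 256

net = nn.Sequential(
    d2l.FlattenLayer(),                   # flatten each image into a vector of length num_inputs
    nn.Linear(num_inputs, num_hiddens),   # hidden layer
    nn.ReLU(),                            # activation function
    nn.Linear(num_hiddens, num_outputs),  # output layer
)

# Initialize every parameter (weights and biases) from a normal distribution with std 0.01
for param in net.parameters():
    init.normal_(param, mean=0, std=0.01)
```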

Training

```python
batch_size = 256
train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size, root='/home/kesci/input/FashionMNIST2065')
loss = torch.nn.CrossEntropyLoss()

optimizer = torch.optim.SGD(net.parameters(), lr=0.5)

num_epochs = 5
d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, batch_size, None, None, optimizer)
```

Quiz questions I got wrong:

Multilayer perceptron

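Answer: a 256×256 image has 256 × 256 = 65536 elements in total. Each of them is connected to every one of the 1000 hidden units, giving 65536 × 1000 weights; each hidden unit is in turn connected to each of the 10 output classes, giving 1000 × 10 weights. Adding the two:

65536 × 1000 + 1000 × 10 = 65546000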
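A quick check of the arithmetic above. The answer counts only the weights; the snippet also prints the total if the 1000 + 10 biases were included, purely for comparison.

```python
# Weight count for the quiz network: 256x256 input, 1000 hidden units, 10 classes.
input_size = 256 * 256  # 65536 input values per image
num_hiddens, num_classes = 1000, 10

weights = input_size * num_hiddens + num_hiddens * num_classes
biases = num_hiddens + num_classes  # not counted in the quiz answer

print(weights)           # 65546000
print(weights + biases)  # 65547010
```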