```python
import torch
import torch.utils.data as Data
import torch.nn.functional as F
import matplotlib.pyplot as plt

torch.manual_seed(1)    # reproducible

LR = 0.01
BATCH_SIZE = 32
EPOCH = 12

# fake dataset: y = x^2 plus Gaussian noise with standard deviation 0.1
x = torch.unsqueeze(torch.linspace(-1, 1, 100), dim=1)   # shape (100, 1)
y = x.pow(2) + 0.1 * torch.normal(torch.zeros(*x.size()))

# plot dataset
# plt.scatter(x.data.numpy(), y.data.numpy())
# plt.show()

# use the DataLoader introduced in the previous section to serve the data in mini-batches
torch_dataset = Data.TensorDataset(x, y)
loader = Data.DataLoader(dataset=torch_dataset, batch_size=BATCH_SIZE,
                         shuffle=True, num_workers=2)
```
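This sketch shows what `loader` actually yields. It rebuilds the same 100-sample dataset and records the shape of every mini-batch in one epoch. It assumes `num_workers=0` (single-process loading) so that it runs anywhere, including an interactive session. With `shuffle=True` the order of the samples changes every epoch, but the batch sizes do not.

```python
import torch
import torch.utils.data as Data

torch.manual_seed(1)

# same fake dataset as above: 100 points of y = x^2 plus noise
x = torch.unsqueeze(torch.linspace(-1, 1, 100), dim=1)   # shape (100, 1)
y = x.pow(2) + 0.1 * torch.normal(torch.zeros(*x.size()))

loader = Data.DataLoader(
    dataset=Data.TensorDataset(x, y),
    batch_size=32,
    shuffle=True,
    num_workers=0,   # assumption: single-process loading for this demo
)

# one pass over the loader = one epoch; record the shape of each input batch
shapes = [tuple(b_x.shape) for b_x, b_y in loader]
print(shapes)       # [(32, 1), (32, 1), (32, 1), (4, 1)]
print(len(loader))  # 4 batches per epoch
```

Because 100 = 32 + 32 + 32 + 4, each epoch performs 4 optimizer steps. Twelve epochs therefore record 48 losses per optimizer, which is the range of the `Steps` axis in the final plot.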

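Each optimizer trains its own neural network. To compare the optimizers, we create a separate network for each of them, but all four networks are built from the same `Net` class.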
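Each `Net()` call draws its own random initial weights, so the four networks do not start from exactly the same point. If you want the comparison to isolate the effect of the optimizer, one option (not part of the original script) is to build a single template network and give every optimizer a deep copy of it. The sketch below makes that assumption. It redefines a class with the same structure as `Net` so that it runs on its own, and it uses a hypothetical helper, `same_weights`.

```python
import copy
import torch
import torch.nn.functional as F

torch.manual_seed(1)

class Net(torch.nn.Module):
    # same 1 -> 20 -> 1 structure as the Net class of this script
    def __init__(self):
        super().__init__()
        self.hidden = torch.nn.Linear(1, 20)
        self.predict = torch.nn.Linear(20, 1)

    def forward(self, x):
        return self.predict(F.relu(self.hidden(x)))

# four independent Net() calls: four different random initializations
independent = [Net() for _ in range(4)]

# one template, deep-copied: identical starting weights, but separate tensors,
# so each optimizer still updates only its own copy
template = Net()
shared_start = [copy.deepcopy(template) for _ in range(4)]

def same_weights(a, b):
    # hypothetical helper: True if every parameter of a equals the matching one of b
    return all(torch.equal(p, q) for p, q in zip(a.parameters(), b.parameters()))

print(same_weights(independent[0], independent[1]))    # False
print(same_weights(shared_start[0], shared_start[1]))  # True
```

To use this in the script, you would replace the four `Net()` constructor calls with copies of one template. The optimizers and the training loop stay unchanged.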

```python
# the default network structure
class Net(torch.nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.hidden = torch.nn.Linear(1, 20)    # hidden layer
        self.predict = torch.nn.Linear(20, 1)   # output layer

    def forward(self, x):
        x = F.relu(self.hidden(x))   # activation function for hidden layer
        x = self.predict(x)          # linear output
        return x
```
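As a quick sanity check on the `Net` structure, the sketch below counts the trainable parameters and runs one forward pass.

- The hidden layer has 1×20 weights plus 20 biases, which is 40 parameters.
- The output layer has 20×1 weights plus 1 bias, which is 21 parameters.
- That makes 61 parameters in total.

A batch of shape `(N, 1)` comes out with shape `(N, 1)`. The class is copied here so that the snippet is self-contained.

```python
import torch
import torch.nn.functional as F

class Net(torch.nn.Module):
    # copy of the Net class above so this snippet runs on its own
    def __init__(self):
        super().__init__()
        self.hidden = torch.nn.Linear(1, 20)    # hidden layer
        self.predict = torch.nn.Linear(20, 1)   # output layer

    def forward(self, x):
        x = F.relu(self.hidden(x))   # ReLU on the hidden layer
        return self.predict(x)       # linear output, no activation

net = Net()
n_params = sum(p.numel() for p in net.parameters() if p.requires_grad)
print(n_params)          # 61 = (1*20 + 20) + (20*1 + 1)

out = net(torch.zeros(32, 1))   # a fake batch of 32 one-dimensional inputs
print(tuple(out.shape))         # (32, 1)
```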

```python
# the main guard matters because num_workers=2 starts worker processes,
# which re-import this file on platforms such as Windows
if __name__ == '__main__':
    # create one network per optimizer
    net_SGD = Net()
    net_Momentum = Net()
    net_RMSprop = Net()
    net_Adam = Net()
    nets = [net_SGD, net_Momentum, net_RMSprop, net_Adam]

    # create an optimizer for each of the four networks above
    opt_SGD = torch.optim.SGD(net_SGD.parameters(), lr=LR)
    opt_Momentum = torch.optim.SGD(net_Momentum.parameters(), lr=LR, momentum=0.8)
    opt_RMSprop = torch.optim.RMSprop(net_RMSprop.parameters(), lr=LR, alpha=0.9)
    opt_Adam = torch.optim.Adam(net_Adam.parameters(), lr=LR, betas=(0.9, 0.99))
    optimizers = [opt_SGD, opt_Momentum, opt_RMSprop, opt_Adam]

    loss_func = torch.nn.MSELoss()   # the same loss function for every network
    losses_his = [[], [], [], []]    # record loss, one history per optimizer

    # next: train the networks and plot their losses
    for epoch in range(EPOCH):
        print('Epoch: ', epoch)
        for step, (b_x, b_y) in enumerate(loader):   # for each training step
            for net, opt, l_his in zip(nets, optimizers, losses_his):
                output = net(b_x)               # get output for every net
                loss = loss_func(output, b_y)   # compute loss for every net
                opt.zero_grad()                 # clear gradients from the previous step
                loss.backward()                 # backpropagation, compute gradients
                opt.step()                      # apply gradients
                l_his.append(loss.item())       # record the loss as a Python float
```
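The four optimizers differ only in how `opt.step()` turns a gradient into a parameter update. The sketch below writes those update rules out by hand for a single scalar weight, using the same hyperparameters as above. It follows the update formulas in the PyTorch documentation for three optimizers:

- `SGD`, with `dampening=0` and `nesterov=False`.
- `RMSprop`, with `centered=False` and `momentum=0`.
- `Adam`, with `amsgrad=False` and no weight decay.

The toy loss f(w) = w², its gradient 2w, and the `run_*` helper names are illustrative assumptions, not part of the original script.

```python
import math

LR = 0.01
EPS = 1e-8   # default eps of torch.optim.RMSprop and torch.optim.Adam

def grad(w):
    # gradient of the toy loss f(w) = w ** 2
    return 2.0 * w

def run_sgd(w, steps, momentum=0.0):
    buf = None   # velocity buffer, only used when momentum > 0
    for _ in range(steps):
        g = grad(w)
        if momentum:
            # first step copies the gradient; later steps decay the old velocity
            buf = g if buf is None else momentum * buf + g
            g = buf
        w -= LR * g
    return w

def run_rmsprop(w, steps, alpha=0.9):
    v = 0.0   # running average of squared gradients
    for _ in range(steps):
        g = grad(w)
        v = alpha * v + (1 - alpha) * g * g
        w -= LR * g / (math.sqrt(v) + EPS)
    return w

def run_adam(w, steps, betas=(0.9, 0.99)):
    b1, b2 = betas
    m, v = 0.0, 0.0   # first and second moment estimates
    for t in range(1, steps + 1):
        g = grad(w)
        m = b1 * m + (1 - b1) * g
        v = b2 * v + (1 - b2) * g * g
        m_hat = m / (1 - b1 ** t)   # bias correction for the zero initialization
        v_hat = v / (1 - b2 ** t)
        w -= LR * m_hat / (math.sqrt(v_hat) + EPS)
    return w

for name, run in [("SGD", run_sgd),
                  ("Momentum", lambda w, n: run_sgd(w, n, momentum=0.8)),
                  ("RMSprop", run_rmsprop),
                  ("Adam", run_adam)]:
    print(f"{name:9s} w after 50 steps: {run(1.0, 50):.4f}")
```

The sketch makes one design difference visible. RMSprop and Adam divide by a running root-mean-square of the gradient, so scaling every gradient by a constant leaves their step (almost) unchanged. Plain SGD and Momentum, by contrast, take steps proportional to the gradient itself.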

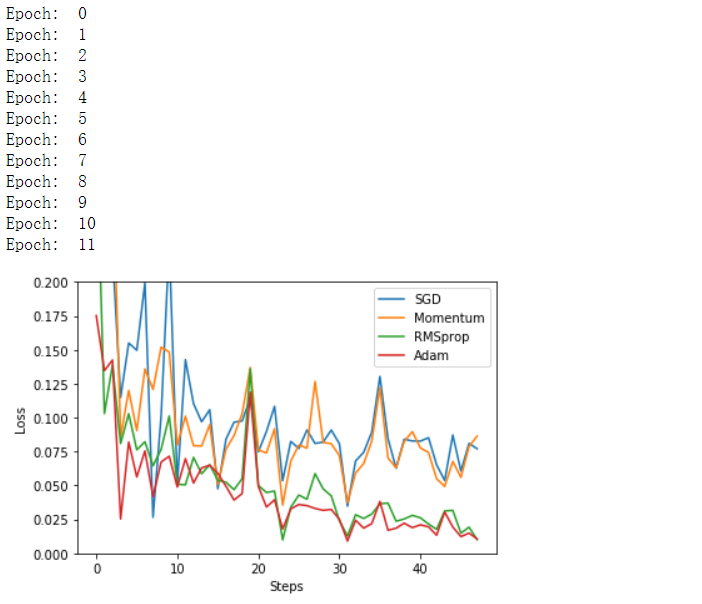

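```python
    # (continues inside the if __name__ == '__main__': block)
    # plot the loss history of every optimizer
    labels = ['SGD', 'Momentum', 'RMSprop', 'Adam']
    for i, l_his in enumerate(losses_his):
        plt.plot(l_his, label=labels[i])
    plt.legend(loc='best')
    plt.xlabel('Steps')
    plt.ylabel('Loss')
    plt.ylim((0, 0.2))
    plt.show()
```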
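The plot gives a visual comparison. If you also want one number per optimizer, the hypothetical helper `summarize_losses` below averages each optimizer's recorded losses over the last epoch. This smooths out some of the mini-batch noise visible in the curves. The sketch makes two assumptions:

- Each history list holds one loss value per training step, as the training loop records them.
- An epoch has `steps_per_epoch` steps.

The numbers in the usage lines are made up for illustration and are not results of the script.

```python
def summarize_losses(losses_his, labels, steps_per_epoch):
    """Hypothetical helper: mean loss over the last epoch for each optimizer."""
    summary = {}
    for label, l_his in zip(labels, losses_his):
        # float() also accepts 0-d NumPy arrays, e.g. from loss.data.numpy()
        last_epoch = [float(v) for v in l_his[-steps_per_epoch:]]
        summary[label] = sum(last_epoch) / len(last_epoch)
    return summary

# usage with made-up numbers (not results from the script above)
demo_his = [[0.30, 0.20, 0.10, 0.05, 0.04, 0.03, 0.02, 0.01],
            [0.40, 0.30, 0.20, 0.10, 0.08, 0.06, 0.04, 0.02]]
summary = summarize_losses(demo_his, ["A", "B"], steps_per_epoch=4)
for label, value in sorted(summary.items(), key=lambda kv: kv[1]):
    print(f"{label}: {value:.4f}")
```

Inside the script, you could call it after training as `summarize_losses(losses_his, labels, steps_per_epoch=len(loader))`.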