Study notes written with reference to the "Dive into Deep Learning" (《动手学深度学习》) course on the Boyu learning platform.

Original link: https://www.boyuai.com/elites/course/cZu18YmweLv10OeV/video/qC-4p–OiYRK9l3eHKAju

Thanks to the Boyu platform, Datawhale, Heywhale (和鲸), and AWS for giving us this free learning opportunity!

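Overall impressions: Boyu's courses are well made and very systematic. Each higher-level course comes with an introduction to the prerequisite knowledge it relies on, so they suit learners with a weaker foundation, like me. If that describes you too, Boyu's other courses are worth a look: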

Mathematical foundations: https://www.boyuai.com/elites/course/D91JM0bv72Zop1D3

Machine learning foundations: https://www.boyuai.com/elites/course/5ICEBwpbHVwwnK3C

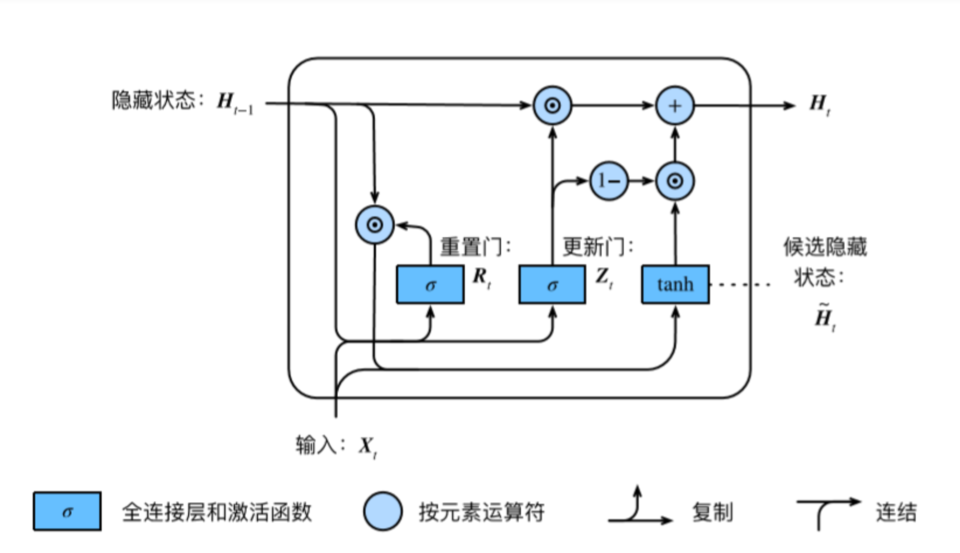

GRU

Problem with RNNs: gradients easily vanish or explode during backpropagation through time (BPTT).
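To make the vanishing and exploding behavior concrete, here is a minimal sketch. It assumes a scalar linear recurrence h_t = w * h_{t-1}, which is a deliberate simplification of a real RNN; the function name `gradient_through_time` is made up for illustration.

```python
def gradient_through_time(w, num_steps):
    """Return d h_T / d h_0 for the scalar linear recurrence h_t = w * h_{t-1}."""
    grad = 1.0
    for _ in range(num_steps):
        grad *= w  # chain rule: every time step contributes one factor of w
    return grad


vanishing = gradient_through_time(0.5, 50)  # |w| < 1: gradient shrinks toward zero
exploding = gradient_through_time(1.5, 50)  # |w| > 1: gradient grows very quickly
print(vanishing, exploding)
```

In a real RNN the factor is a Jacobian matrix rather than a scalar, but the same repeated multiplication is what makes long-range gradients unstable.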

Gated recurrent neural networks: capture dependencies between time steps that are far apart in a time series.

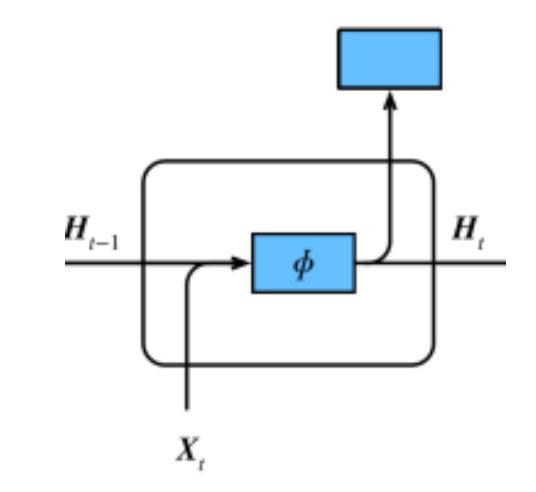

RNN:

$$
H_t = \phi(X_t W_{xh} + H_{t-1} W_{hh} + b_h)
$$
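As a rough illustration of the RNN update, the following NumPy sketch computes one time step. It assumes the activation ϕ is tanh; the shapes (batch size `n`, input size `d`, hidden size `h`) and the random initialization are arbitrary choices for the example.

```python
import numpy as np


def rnn_step(X_t, H_prev, W_xh, W_hh, b_h):
    """One vanilla RNN step: H_t = tanh(X_t W_xh + H_{t-1} W_hh + b_h)."""
    return np.tanh(X_t @ W_xh + H_prev @ W_hh + b_h)


rng = np.random.default_rng(0)
n, d, h = 2, 3, 4                           # batch size, input size, hidden size
X_t = rng.normal(size=(n, d))               # input at the current time step
H_prev = np.zeros((n, h))                   # hidden state from the previous step
W_xh = rng.normal(scale=0.1, size=(d, h))   # input-to-hidden weights
W_hh = rng.normal(scale=0.1, size=(h, h))   # hidden-to-hidden weights
b_h = np.zeros(h)                           # hidden bias

H_t = rnn_step(X_t, H_prev, W_xh, W_hh, b_h)
print(H_t.shape)
```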

GRU:

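$$
\begin{aligned}
R_t &= \sigma(X_t W_{xr} + H_{t-1} W_{hr} + b_r) \\
Z_t &= \sigma(X_t W_{xz} + H_{t-1} W_{hz} + b_z) \\
\tilde{H}_t &= \tanh(X_t W_{xh} + (R_t \odot H_{t-1}) W_{hh} + b_h) \\
H_t &= Z_t \odot H_{t-1} + (1 - Z_t) \odot \tilde{H}_t
\end{aligned}
$$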

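• The reset gate helps capture short-term dependencies in a time series (both gates have size h, the same as the hidden state);

• **The update gate helps capture long-term dependencies in a time series.**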
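The GRU update can be sketched in NumPy as below. This is only an illustrative sketch: the parameter dictionary layout and the helper names `init_gru_params` and `gru_step` are assumptions made for this example, not part of the course code.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def init_gru_params(d, h, rng):
    """Hypothetical helper: small random weights and zero biases for a GRU."""
    p = {}
    for gate in ("r", "z", "h"):
        p["W_x" + gate] = rng.normal(scale=0.1, size=(d, h))
        p["W_h" + gate] = rng.normal(scale=0.1, size=(h, h))
        p["b_" + gate] = np.zeros(h)
    return p


def gru_step(X_t, H_prev, p):
    """One GRU step following the reset-gate / update-gate equations."""
    R = sigmoid(X_t @ p["W_xr"] + H_prev @ p["W_hr"] + p["b_r"])   # reset gate
    Z = sigmoid(X_t @ p["W_xz"] + H_prev @ p["W_hz"] + p["b_z"])   # update gate
    # Candidate state: the reset gate scales how much old state is used.
    H_tilde = np.tanh(X_t @ p["W_xh"] + (R * H_prev) @ p["W_hh"] + p["b_h"])
    # Update gate interpolates between the old state and the candidate.
    return Z * H_prev + (1.0 - Z) * H_tilde


rng = np.random.default_rng(0)
n, d, h = 2, 3, 4
params = init_gru_params(d, h, rng)
X_t = rng.normal(size=(n, d))
H_prev = rng.normal(size=(n, h))
H_t = gru_step(X_t, H_prev, params)
print(H_t.shape)
```

When the update gate Z_t is close to 1, the old hidden state is copied forward almost unchanged, which is how the GRU can carry information across many time steps.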

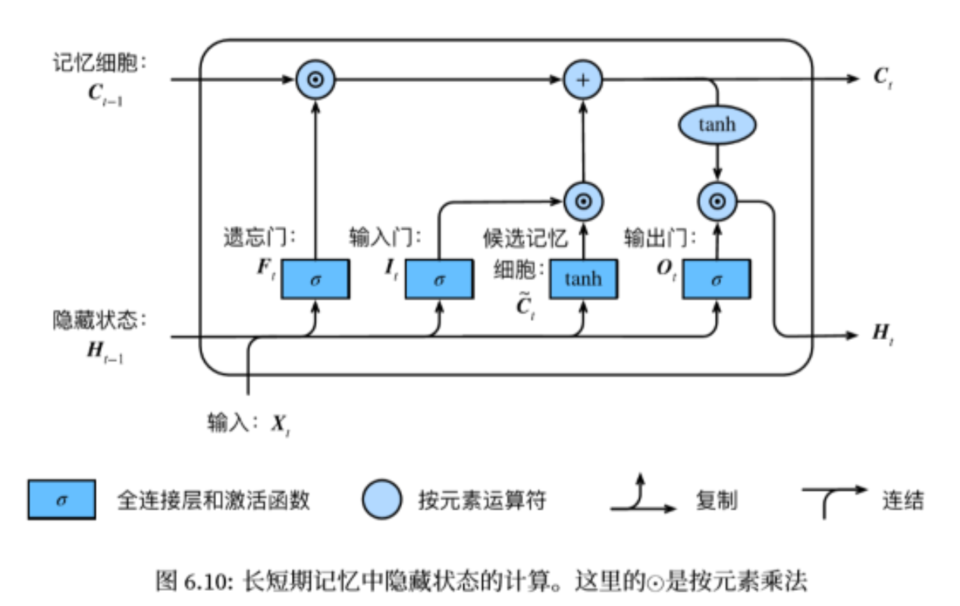

LSTM

**Long short-term memory (LSTM)**:

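Forget gate: controls how much of the previous time step's memory cell is kept

Input gate: controls how much of the current time step's input is written

Output gate: controls what flows from the memory cell to the hidden state

Memory cell: a special kind of hidden state that carries the flow of information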

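$$
\begin{aligned}
I_t &= \sigma(X_t W_{xi} + H_{t-1} W_{hi} + b_i) \\
F_t &= \sigma(X_t W_{xf} + H_{t-1} W_{hf} + b_f) \\
O_t &= \sigma(X_t W_{xo} + H_{t-1} W_{ho} + b_o) \\
\tilde{C}_t &= \tanh(X_t W_{xc} + H_{t-1} W_{hc} + b_c) \\
C_t &= F_t \odot C_{t-1} + I_t \odot \tilde{C}_t \\
H_t &= O_t \odot \tanh(C_t)
\end{aligned}
$$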
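A single LSTM step might look like the following NumPy sketch. The parameter dictionary and the helper names `init_lstm_params` and `lstm_step` are assumptions made for illustration only.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def init_lstm_params(d, h, rng):
    """Hypothetical helper: small random weights and zero biases for an LSTM."""
    p = {}
    for gate in ("i", "f", "o", "c"):
        p["W_x" + gate] = rng.normal(scale=0.1, size=(d, h))
        p["W_h" + gate] = rng.normal(scale=0.1, size=(h, h))
        p["b_" + gate] = np.zeros(h)
    return p


def lstm_step(X_t, H_prev, C_prev, p):
    """One LSTM step: returns the new hidden state and the new memory cell."""
    I = sigmoid(X_t @ p["W_xi"] + H_prev @ p["W_hi"] + p["b_i"])   # input gate
    F = sigmoid(X_t @ p["W_xf"] + H_prev @ p["W_hf"] + p["b_f"])   # forget gate
    O = sigmoid(X_t @ p["W_xo"] + H_prev @ p["W_ho"] + p["b_o"])   # output gate
    C_tilde = np.tanh(X_t @ p["W_xc"] + H_prev @ p["W_hc"] + p["b_c"])  # candidate cell
    C = F * C_prev + I * C_tilde   # keep part of the old cell, write part of the candidate
    H = O * np.tanh(C)             # expose part of the cell as the hidden state
    return H, C


rng = np.random.default_rng(0)
n, d, h = 2, 3, 4
params = init_lstm_params(d, h, rng)
X_t = rng.normal(size=(n, d))
H_prev = np.zeros((n, h))
C_prev = np.zeros((n, h))
H_t, C_t = lstm_step(X_t, H_prev, C_prev, params)
print(H_t.shape, C_t.shape)
```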

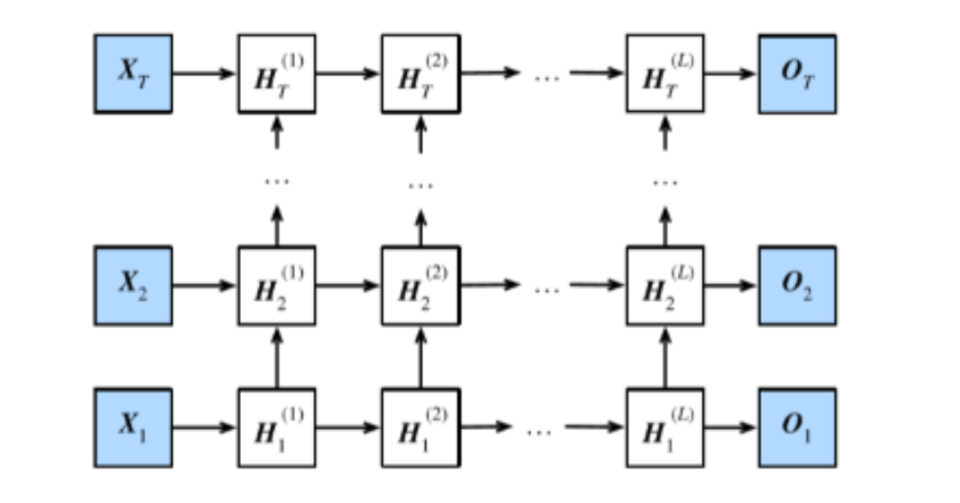

Deep recurrent neural networks

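$$
\begin{aligned}
H_t^{(1)} &= \phi(X_t W_{xh}^{(1)} + H_{t-1}^{(1)} W_{hh}^{(1)} + b_h^{(1)}) \\
H_t^{(\ell)} &= \phi(H_t^{(\ell-1)} W_{xh}^{(\ell)} + H_{t-1}^{(\ell)} W_{hh}^{(\ell)} + b_h^{(\ell)}) \\
O_t &= H_t^{(L)} W_{hq} + b_q
\end{aligned}
$$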
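The stacking of layers can be sketched as below: the hidden state of layer ℓ−1 is the input to layer ℓ, and the output is read from the top layer. This sketch assumes ϕ is tanh; the function name `deep_rnn_step` and the list-of-tuples parameter layout are choices made for this example.

```python
import numpy as np


def deep_rnn_step(X_t, H_prev_layers, layers, W_hq, b_q):
    """One time step of an L-layer RNN.

    layers is a list of (W_xh, W_hh, b_h) tuples, one per layer;
    H_prev_layers holds each layer's hidden state from the previous step.
    """
    layer_input = X_t
    new_states = []
    for (W_xh, W_hh, b_h), H_prev in zip(layers, H_prev_layers):
        H = np.tanh(layer_input @ W_xh + H_prev @ W_hh + b_h)
        new_states.append(H)
        layer_input = H                 # this layer's state feeds the next layer
    O_t = layer_input @ W_hq + b_q      # output computed from the top layer only
    return O_t, new_states


rng = np.random.default_rng(0)
n, d, h, q, num_layers = 2, 3, 4, 5, 3
layers = []
for layer_idx in range(num_layers):
    in_size = d if layer_idx == 0 else h   # only the first layer sees X_t
    layers.append((rng.normal(scale=0.1, size=(in_size, h)),
                   rng.normal(scale=0.1, size=(h, h)),
                   np.zeros(h)))
W_hq = rng.normal(scale=0.1, size=(h, q))
b_q = np.zeros(q)

X_t = rng.normal(size=(n, d))
H_prev_layers = [np.zeros((n, h)) for _ in range(num_layers)]
O_t, new_states = deep_rnn_step(X_t, H_prev_layers, layers, W_hq, b_q)
print(O_t.shape, len(new_states))
```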

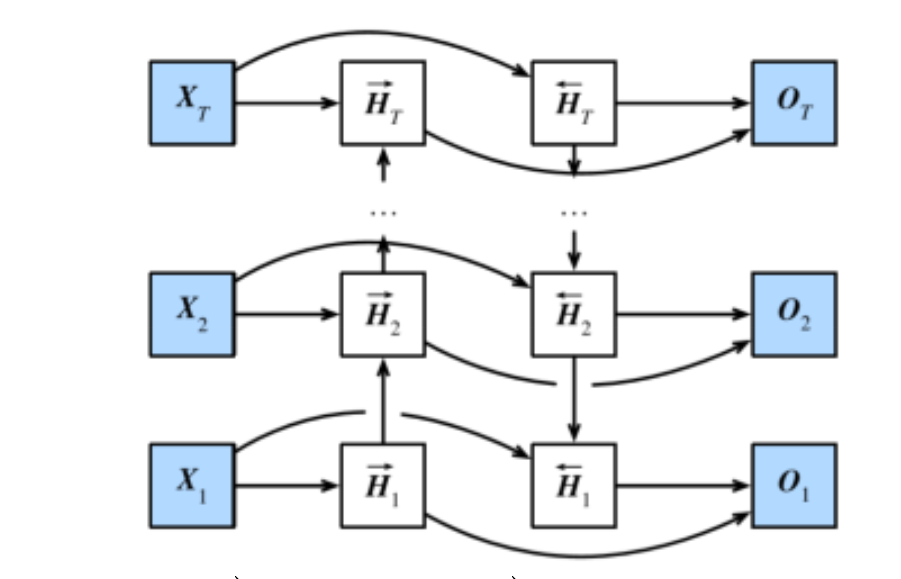

Bidirectional recurrent neural networks

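$$
\begin{aligned}
\overrightarrow{H}_t &= \phi(X_t W_{xh}^{(f)} + \overrightarrow{H}_{t-1} W_{hh}^{(f)} + b_h^{(f)}) \\
\overleftarrow{H}_t &= \phi(X_t W_{xh}^{(b)} + \overleftarrow{H}_{t+1} W_{hh}^{(b)} + b_h^{(b)}) \\
H_t &= (\overrightarrow{H}_t, \overleftarrow{H}_t) \\
O_t &= H_t W_{hq} + b_q
\end{aligned}
$$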

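Because the current word can be estimated from both the preceding and the following words, the estimate is more accurate.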
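The two directions can be sketched in NumPy as follows. This sketch assumes ϕ is tanh and that both directions start from a zero state; the function name `birnn_forward` and the tuple layout of the parameters are assumptions for this example.

```python
import numpy as np


def birnn_forward(X, fwd, bwd, W_hq, b_q):
    """Run a bidirectional RNN over X of shape (T, n, d).

    fwd and bwd are (W_xh, W_hh, b_h) tuples for the two directions.
    Returns outputs of shape (T, n, q).
    """
    T, n, _ = X.shape
    h = fwd[1].shape[0]
    H_f = np.zeros((n, h))
    H_b = np.zeros((n, h))
    fwd_states = [None] * T
    bwd_states = [None] * T
    for t in range(T):                       # forward direction: uses H_{t-1}
        H_f = np.tanh(X[t] @ fwd[0] + H_f @ fwd[1] + fwd[2])
        fwd_states[t] = H_f
    for t in reversed(range(T)):             # backward direction: uses H_{t+1}
        H_b = np.tanh(X[t] @ bwd[0] + H_b @ bwd[1] + bwd[2])
        bwd_states[t] = H_b
    outputs = []
    for t in range(T):
        # Concatenate both directions along the feature axis: shape (n, 2h).
        H_t = np.concatenate([fwd_states[t], bwd_states[t]], axis=1)
        outputs.append(H_t @ W_hq + b_q)
    return np.stack(outputs)


rng = np.random.default_rng(0)
T, n, d, h, q = 3, 2, 3, 4, 5


def make_direction():
    return (rng.normal(scale=0.5, size=(d, h)),
            rng.normal(scale=0.5, size=(h, h)),
            np.zeros(h))


fwd, bwd = make_direction(), make_direction()
W_hq = rng.normal(scale=0.5, size=(2 * h, q))   # 2h rows: both directions are concatenated
b_q = np.zeros(q)
X = rng.normal(size=(T, n, d))
outputs = birnn_forward(X, fwd, bwd, W_hq, b_q)
print(outputs.shape)
```

Because of the backward pass, changing the last input also changes the output at the first time step, which a purely forward RNN cannot do.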