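MNIST dataset

70,000 images in total

Each image is a 28×28-pixel handwritten digit, white on black. Black background pixels are stored as 0, and the white strokes as floats between 0 and 1. The closer a value is to 1, the whiter the pixel.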
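A minimal sketch of that format, assuming a raw image arrives as a 28×28 nested Python list of 8-bit gray values (0 to 255). The helper name `to_input_vector` is hypothetical. It flattens the grid row by row into the 784 values the network takes as input and scales each value into [0, 1]. `input_data.read_data_sets` already returns MNIST images in this flattened, scaled form, so the sketch only shows what that representation means.

```python
def to_input_vector(grid):
    """Flatten a 28x28 grid of 0-255 ints into 784 floats in [0, 1] (row-major)."""
    assert len(grid) == 28 and all(len(row) == 28 for row in grid)
    return [pixel / 255.0 for row in grid for pixel in row]


# Usage: a black image with a single fully white pixel at row 1, column 2.
image = [[0] * 28 for _ in range(28)]
image[1][2] = 255
vec = to_input_vector(image)
print(len(vec))          # 784
print(vec[1 * 28 + 2])   # 1.0
```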

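The modular "boilerplate" for building a neural network

Handwritten digit recognition with accuracy output

Forward propagation: mnist_forward.py

Backward propagation (training): mnist_backward.py

Testing and accuracy output: mnist_test.py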

mnist_forward.py
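The network in mnist_forward.py computes y1 = relu(x·W1 + b1) and then y = y1·W2 + b2, returning y as logits with no softmax. The pure-Python sketch below reproduces that data flow without TensorFlow, using tiny hypothetical sizes (3 inputs, 2 hidden nodes, 2 outputs instead of 784, 500 and 10). It also computes the penalty that `tf.contrib.layers.l2_regularizer(scale)` adds to the `'losses'` collection for one weight matrix, which is `scale * sum(w**2) / 2`. The helper names are illustrative only.

```python
def matmul(a, b):
    """Multiply an (n x k) matrix by a (k x m) matrix, both given as nested lists."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def add_bias(m, bias):
    """Add the bias vector to every row (the broadcast done by `+ b` in TensorFlow)."""
    return [[v + bj for v, bj in zip(row, bias)] for row in m]


def relu(m):
    return [[max(0.0, v) for v in row] for row in m]


def forward(x, w1, b1, w2, b2):
    y1 = relu(add_bias(matmul(x, w1), b1))  # hidden layer with ReLU
    return add_bias(matmul(y1, w2), b2)     # output layer: logits, no activation


def l2_penalty(w, scale):
    """scale * sum(w**2) / 2, the term l2_regularizer contributes for one matrix."""
    return scale * sum(v * v for row in w for v in row) / 2


x = [[1.0, 2.0, 3.0]]                       # one sample with 3 inputs
w1 = [[1.0, -1.0], [0.0, 1.0], [1.0, 0.0]]  # 3 x 2
b1 = [0.0, -5.0]
w2 = [[1.0, 0.0], [0.0, 1.0]]               # 2 x 2
b2 = [0.5, 0.5]
print(forward(x, w1, b1, w2, b2))  # [[4.5, 0.5]]
```

Here the second hidden unit receives 1 + (-5) = -4 and is zeroed by the ReLU, so only the first hidden unit reaches the output layer.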

```python
import tensorflow as tf  # TensorFlow 1.x API (tf.contrib, tf.placeholder)

INPUT_NODE = 784   # input nodes: each 28*28 image has 784 pixels, each a float between 0 and 1
OUTPUT_NODE = 10   # 10 outputs; each scores the digit with that index (softmax in the loss turns them into probabilities)
LAYER1_NODE = 500  # number of hidden-layer nodes


def get_weight(shape, regularizer):
    w = tf.Variable(tf.truncated_normal(shape, stddev=0.1))
    if regularizer is not None:
        # add this weight's L2 penalty to the 'losses' collection
        tf.add_to_collection('losses', tf.contrib.layers.l2_regularizer(regularizer)(w))
    return w


def get_bias(shape):
    b = tf.Variable(tf.zeros(shape))
    return b


# build the network: describe the data flow from input to output
def forward(x, regularizer):
    w1 = get_weight([INPUT_NODE, LAYER1_NODE], regularizer)
    b1 = get_bias([LAYER1_NODE])
    y1 = tf.nn.relu(tf.matmul(x, w1) + b1)

    w2 = get_weight([LAYER1_NODE, OUTPUT_NODE], regularizer)
    b2 = get_bias([OUTPUT_NODE])
    y = tf.matmul(y1, w2) + b2  # logits; no softmax here
    return y
```

mnist_backward.py

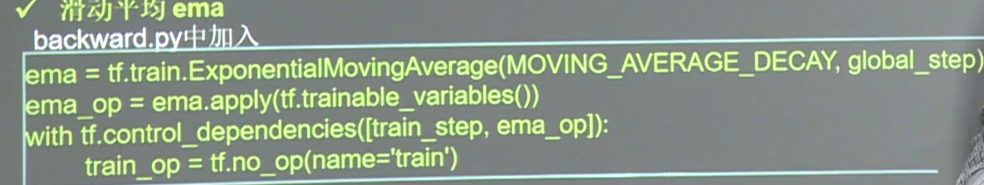
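Three pieces of backward() can be checked by hand. A pure-Python sketch for a single sample, with no TensorFlow and illustrative function names (these are not TensorFlow APIs): `tf.nn.sparse_softmax_cross_entropy_with_logits` computes -log(softmax(logits)[label]) from a class index, which is why the one-hot `y_` is converted with `tf.argmax(y_, 1)`; `tf.train.exponential_decay(..., staircase=True)` gives base * decay_rate ** floor(step / decay_steps); and `tf.train.ExponentialMovingAverage` with `num_updates` (here `global_step`) updates shadow = d * shadow + (1 - d) * value with d = min(decay, (1 + step) / (10 + step)). The usage assumes `mnist.train.num_examples` is 55,000, the default training split of `input_data.read_data_sets`, so decay_steps = 55000 / 200 = 275 and the learning rate drops once per epoch.

```python
import math


def sparse_softmax_cross_entropy(logits, label):
    """-log(softmax(logits)[label]), computed stably by subtracting the max logit."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(v - m) for v in logits))
    return log_sum_exp - logits[label]


def staircase_decay(base, step, decay_steps, decay_rate):
    """Learning rate produced by exponential_decay with staircase=True."""
    return base * decay_rate ** math.floor(step / decay_steps)


def ema_update(shadow, value, decay, num_updates):
    """One moving-average step when num_updates (global_step) is supplied."""
    d = min(decay, (1 + num_updates) / (10 + num_updates))
    return d * shadow + (1 - d) * value


# Two equal logits: each class has probability 0.5, so the loss is log(2).
print(sparse_softmax_cross_entropy([0.0, 0.0], 1))    # about 0.693
print(staircase_decay(0.1, 274, 55000 / 200, 0.99))  # 0.1 (still in the first epoch)
print(staircase_decay(0.1, 275, 55000 / 200, 0.99))  # about 0.099
# Early in training d is small (0.1 at step 0), so the shadow value moves quickly.
print(ema_update(0.0, 1.0, 0.99, 0))                  # 0.9
```

The min(...) term is why the moving average tracks the weights closely at the start of training and only settles to the configured 0.99 decay after roughly a thousand steps.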

```python
import os

import tensorflow as tf  # TensorFlow 1.x API
from tensorflow.examples.tutorials.mnist import input_data

import mnist_forward

BATCH_SIZE = 200              # number of images fed to the network per step
LEARNING_RATE_BASE = 0.1      # initial learning rate
LEARNING_RATE_DECAY = 0.99    # learning-rate decay rate
REGULARIZER = 0.0001          # regularization coefficient
STEPS = 50000                 # total number of training steps
MOVING_AVERAGE_DECAY = 0.99   # moving-average decay rate
MODEL_SAVE_PATH = "./model/"  # directory where the model is saved
MODEL_NAME = "mnist_model"    # file name prefix of the saved model


def backward(mnist):
    x = tf.placeholder(tf.float32, [None, mnist_forward.INPUT_NODE])
    y_ = tf.placeholder(tf.float32, [None, mnist_forward.OUTPUT_NODE])
    y = mnist_forward.forward(x, REGULARIZER)
    global_step = tf.Variable(0, trainable=False)

    # loss = mean cross-entropy + the L2 penalties collected in forward()
    ce = tf.nn.sparse_softmax_cross_entropy_with_logits(
        logits=y, labels=tf.argmax(y_, 1))
    cem = tf.reduce_mean(ce)
    loss = cem + tf.add_n(tf.get_collection('losses'))

    # exponentially decaying learning rate, lowered once per epoch
    learning_rate = tf.train.exponential_decay(
        LEARNING_RATE_BASE,
        global_step,
        mnist.train.num_examples / BATCH_SIZE,
        LEARNING_RATE_DECAY,
        staircase=True)

    # define the training step
    train_step = tf.train.GradientDescentOptimizer(
        learning_rate).minimize(loss, global_step=global_step)

    # define the moving average over all trainable variables
    ema = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)
    ema_op = ema.apply(tf.trainable_variables())
    with tf.control_dependencies([train_step, ema_op]):
        train_op = tf.no_op(name='train')

    saver = tf.train.Saver()
    os.makedirs(MODEL_SAVE_PATH, exist_ok=True)  # Saver.save needs the directory to exist

    with tf.Session() as sess:
        init_op = tf.global_variables_initializer()
        sess.run(init_op)

        for i in range(STEPS):
            xs, ys = mnist.train.next_batch(BATCH_SIZE)
            _, loss_value, step = sess.run(
                [train_op, loss, global_step], feed_dict={x: xs, y_: ys})
            if i % 1000 == 0:
                print("After %d training step(s), loss on training batch is %g." % (step, loss_value))
                saver.save(sess, os.path.join(MODEL_SAVE_PATH, MODEL_NAME), global_step=global_step)


def main():
    mnist = input_data.read_data_sets("./data/", one_hot=True)
    backward(mnist)


if __name__ == '__main__':
    main()
```

mnist_test.py

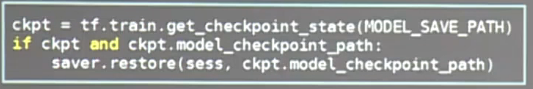
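mnist_test.py counts a prediction as correct when the index of the largest of the 10 outputs equals the index of the 1 in the one-hot label, averages those hits to get the accuracy, and recovers the step count from the checkpoint file name, which `saver.save(..., global_step=global_step)` writes as `<MODEL_NAME>-<step>`. A pure-Python sketch of both; the function names are hypothetical and the sample path merely follows that naming scheme.

```python
def argmax(values):
    """Index of the largest value (first one on ties)."""
    return max(range(len(values)), key=lambda i: values[i])


def accuracy(logits, one_hot_labels):
    """Fraction of rows whose predicted class equals the labelled class."""
    hits = sum(argmax(y) == argmax(t) for y, t in zip(logits, one_hot_labels))
    return hits / len(logits)


def step_from_checkpoint_path(path):
    """Same parsing as mnist_test.py: last path component, text after the last '-'."""
    return path.split('/')[-1].split('-')[-1]


preds = [[0.1, 2.0, 0.3], [1.5, 0.2, 0.1], [0.0, 0.1, 0.9], [0.3, 0.2, 0.1]]
labels = [[0, 1, 0], [1, 0, 0], [0, 1, 0], [1, 0, 0]]
print(accuracy(preds, labels))                                 # 0.75
print(step_from_checkpoint_path("./model/mnist_model-49001"))  # 49001
```

Note that the parsed step is a string, which is why the test script prints it with `%s` rather than `%d`.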

```python
# coding:utf-8
import time

import tensorflow as tf  # TensorFlow 1.x API
from tensorflow.examples.tutorials.mnist import input_data

import mnist_forward
import mnist_backward

TEST_INTERVAL_SECS = 5  # run the test loop every 5 seconds


def test(mnist):
    with tf.Graph().as_default() as g:  # tf.Graph() rebuilds the computation graph
        x = tf.placeholder(tf.float32, [None, mnist_forward.INPUT_NODE])
        y_ = tf.placeholder(tf.float32, [None, mnist_forward.OUTPUT_NODE])
        y = mnist_forward.forward(x, None)  # no regularization at test time

        # load the moving-average (shadow) values into the variables
        ema = tf.train.ExponentialMovingAverage(mnist_backward.MOVING_AVERAGE_DECAY)
        ema_restore = ema.variables_to_restore()
        saver = tf.train.Saver(ema_restore)

        correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

        while True:
            with tf.Session() as sess:
                ckpt = tf.train.get_checkpoint_state(mnist_backward.MODEL_SAVE_PATH)
                # check whether a model has been saved; if so, restore it into this session
                if ckpt and ckpt.model_checkpoint_path:
                    saver.restore(sess, ckpt.model_checkpoint_path)
                    # recover global_step from the checkpoint file name
                    global_step = ckpt.model_checkpoint_path.split('/')[-1].split('-')[-1]
                    # compute the accuracy on the test set
                    accuracy_score = sess.run(accuracy, feed_dict={x: mnist.test.images, y_: mnist.test.labels})
                    print("After %s training step(s), test accuracy = %g" % (global_step, accuracy_score))
                else:
                    print('No checkpoint file found')
                    return
            time.sleep(TEST_INTERVAL_SECS)


def main():
    mnist = input_data.read_data_sets("./data/", one_hot=True)
    test(mnist)


if __name__ == '__main__':
    main()
```

Fully connected network in practice

How to resume training from a checkpoint
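The idea is to look for the latest saved checkpoint when training starts and, if one exists, load it over the freshly initialized variables instead of starting from zero. One way to do this in the TF 1.x backward() code, using the same calls mnist_test.py already uses, is to call `tf.train.get_checkpoint_state(MODEL_SAVE_PATH)` right after `sess.run(init_op)` and, when `ckpt and ckpt.model_checkpoint_path` is true, `saver.restore(sess, ckpt.model_checkpoint_path)`. The restored `global_step` (and with it the learning-rate schedule) then continues from the saved value, although the loop counter `i` still starts at 0. The pure-Python sketch below shows the same resume pattern without TensorFlow; the JSON checkpoint files named `model-<step>.json` and the helpers `latest_checkpoint` and `train` are hypothetical stand-ins.

```python
import json
import os
import tempfile


def latest_checkpoint(ckpt_dir):
    """Path of the newest 'model-<step>.json' file in ckpt_dir, or None if there is none."""
    if not os.path.isdir(ckpt_dir):
        return None
    steps = [int(name[len("model-"):-len(".json")])
             for name in os.listdir(ckpt_dir)
             if name.startswith("model-") and name.endswith(".json")]
    if not steps:
        return None
    return os.path.join(ckpt_dir, "model-%d.json" % max(steps))


def train(ckpt_dir, total_steps, save_every=10):
    state = {"step": 0, "w": 0.0}         # "initialize the variables"
    path = latest_checkpoint(ckpt_dir)
    if path is not None:                  # resume: overwrite the fresh state
        with open(path) as f:
            state = json.load(f)
    os.makedirs(ckpt_dir, exist_ok=True)
    while state["step"] < total_steps:
        state["w"] += 1.0                 # stand-in for one optimizer update
        state["step"] += 1
        if state["step"] % save_every == 0:
            with open(os.path.join(ckpt_dir, "model-%d.json" % state["step"]), "w") as f:
                json.dump(state, f)
    return state


with tempfile.TemporaryDirectory() as d:
    first = train(d, total_steps=25)   # saves at steps 10 and 20, stops at 25
    second = train(d, total_steps=40)  # resumes from step 20; steps 21 to 25 were never saved
    print(first["step"], second["step"], second["w"])  # 25 40 40.0
```

As with the TensorFlow version, anything done after the last save is lost, so the save interval trades disk writes against how much work an interruption can cost.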

mnist_app.py
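pre_pic() first inverts every pixel (255 - v), because the model was trained on white digits on a black background while photographed handwriting is usually dark ink on white paper. It then binarizes with a threshold of 50 so that faint background noise becomes pure black. The same per-pixel rule as a pure-Python sketch on a hypothetical row of 8-bit gray values (the function name is illustrative):

```python
def invert_and_binarize(pixels, threshold=50):
    """Per pixel: invert (255 - v), then map to 0 if below threshold, else to 255."""
    out = []
    for v in pixels:
        v = 255 - v
        out.append(0 if v < threshold else 255)
    return out


# white paper (255), light-gray smudge (230), dark pen stroke (20)
print(invert_and_binarize([255, 230, 20]))  # [0, 0, 255]
```

The smudge ends up black because after inversion it is only 25, below the threshold; pre_pic() then divides the 0/255 values by 255 to get the 0.0/1.0 inputs the network expects.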

```python
# coding:utf-8
import numpy as np
import tensorflow as tf  # TensorFlow 1.x API
from PIL import Image

import mnist_backward
import mnist_forward


def restore_model(testPicArr):
    # rebuild the computation graph
    with tf.Graph().as_default() as tg:
        x = tf.placeholder(tf.float32, [None, mnist_forward.INPUT_NODE])
        y = mnist_forward.forward(x, None)
        preValue = tf.argmax(y, 1)  # index of the largest output = predicted digit

        variable_averages = tf.train.ExponentialMovingAverage(
            mnist_backward.MOVING_AVERAGE_DECAY)
        variables_to_restore = variable_averages.variables_to_restore()
        saver = tf.train.Saver(variables_to_restore)

        with tf.Session() as sess:
            ckpt = tf.train.get_checkpoint_state(mnist_backward.MODEL_SAVE_PATH)
            if ckpt and ckpt.model_checkpoint_path:
                saver.restore(sess, ckpt.model_checkpoint_path)
                preValue = sess.run(preValue, feed_dict={x: testPicArr})
                return preValue
            else:
                print("No checkpoint file found")
                return -1


def pre_pic(picName):
    img = Image.open(picName)
    # Image.ANTIALIAS was removed in Pillow 10; LANCZOS is the same filter
    reIm = img.resize((28, 28), Image.LANCZOS)
    im_arr = np.array(reIm.convert('L'))
    threshold = 50
    # The model expects white digits on a black background, but our input images
    # are black digits on white, so every pixel is inverted.
    # Each pixel is then binarized to pure black or pure white, which filters out
    # noise in the handwritten image and keeps its main features.
    for i in range(28):
        for j in range(28):
            im_arr[i][j] = 255 - im_arr[i][j]
            if im_arr[i][j] < threshold:
                im_arr[i][j] = 0
            else:
                im_arr[i][j] = 255

    nm_arr = im_arr.reshape([1, 784])
    nm_arr = nm_arr.astype(np.float32)
    img_ready = np.multiply(nm_arr, 1.0 / 255.0)  # scale to [0, 1]
    return img_ready


def application():
    readin = input("input the number of test pictures:")  # how many images to recognize
    testNum = int(readin)
    for i in range(testNum):
        # path and file name of the image to recognize
        testPic = input("the path of test picture:")
        testPicArr = pre_pic(testPic)  # convert the image to the format the model expects
        preValue = restore_model(testPicArr)
        print("The prediction number is:", preValue)


def main():
    application()


if __name__ == '__main__':
    main()
```

Making a dataset

See the video for the code.
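The scripts above read MNIST with `one_hot=True`, so each label is a length-10 vector with a 1 at the digit's index. When building your own dataset, the labels need the same format. A minimal sketch, assuming a hypothetical label file in which each line is `<image file name> <digit>`; this file format and the helper names are assumptions for illustration, not necessarily what the video uses.

```python
def one_hot(digit, num_classes=10):
    """Length-10 vector with 1.0 at the digit's index."""
    vec = [0.0] * num_classes
    vec[digit] = 1.0
    return vec


def parse_label_lines(lines):
    """Turn 'name digit' lines into (name, one-hot label) pairs, skipping blank lines."""
    pairs = []
    for line in lines:
        parts = line.split()
        if not parts:
            continue
        name, digit = parts[0], int(parts[1])
        pairs.append((name, one_hot(digit)))
    return pairs


pairs = parse_label_lines(["0_5.png 5\n", "\n", "1_0.png 0\n"])
print(pairs[0][0], pairs[0][1].index(1.0))  # 0_5.png 5
print(len(pairs))                           # 2
```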

AI Practice: TensorFlow Notes (4): Fully Connected Network Basics and Practice


Reposted from blog.csdn.net/hxxjxw/article/details/100042013
