内容来自mooc人工智能实践第五讲

一、MNIST数据集一些用到的基础函数语法

############ warm up ! ############

# 导入imput_data模块

from tensorflow.examples.tutorials.mnist import input_data

# 加载数据集,以读热码的形式存取

mnist = input_data.read_data_sets('./data/', one_hot = True)

# 打印训练集、验证集、测试集所含有的样本数

print("train data size:", mnist.train.num_examples)

print("validation data size:", mnist.validation.num_examples)

print("test data size:", mnist.test.num_examples)

# 查看训练集中指定编号的标签或图片数据

mnist.train.labels[0]

mnist.train.images[0]

# 将训练集中一定batchsize的数据和标签赋给左边的变量

xs, ys = minist.train.next_batch(BATCH_SIZE)

# 打印形状

print("xs shape: ", xs.shape)

print("ys shape: ", ys.shape)

# 从集合中取全部变量,生成一个列表

tf.get_collection("")

# 列表内对应元素相加

tf.add_n([])

# 将x转化为指定类型

tf.cast(x, dtype)

# 对比两个矩阵或者向量的每个元素,对应元素相等时依次返回True,否则False

A = [[1,3,4,5,6]]

B = [[1,3,4,3,2]]

with tf.Session() as sess:

print(sess.run(tf.equal(A, B)))

# 求均值

# 若不指定第二个参数,则在所有元素中求平均值

# 若第二个参数0,则在第一维元素上取平均值

# 若第二个参数1,则在第二维元素上求平均值

tf.reduce_mean(x, axis)

# 返回axis指定的维度中,列表x最大值对应的索引号

tf.argmax(x, axis)

# 拼接路径

import os

os.path.join("home", "name") # 返回home/name

# 按指定拆分符,对字符串切片,返回分割后的列表(字符串)

# 用于从一个文件中读取global step的值

'./mode/mnist_model-1001'.split('/')[-1].split('-')[-1] # 返回1001

# 用于复现已经定义好了的神经网络

with tf.Gragh().as_default() as g: # 其内定义的节点在计算图g中

###### 模型的保存 ######

# 反向传播中,一般每隔一定轮数把神经网络模型保存下来

# 保存三个文件

# 1.当前图结构的.meta文件

# 2.当前参数名的.index文件

# 3.当前参数的.data文件

saver = tf.train.Saver() # 实例化saver对象

with tf.Session() as sess: # 在with结构中循环一定轮数时,保存模型到当前会话

for i in range(STEPS):

if i % 轮数 == 0: # 拼接成./MODEL_SAVE_PATH/MODEL_NAME-global_step路径

saver.save(sess, os.path.join(MODEL_SAVE_PATH, MODEL_NAME), global_step = global_step)

# 将神经网络模型中的所有参数等信息保存到指定路径中,并在存放网络模型的文件夹名称中注明保存模型时的训练轮数

# 测试网络效果时,需要将训练好的神经网络模型加载

with tf.Session() as sess:

ckpt = tf.train.get_checkpoint_state(存储路径)

if ckpt and ckpt.model_checkpoint_path: #若ckpt和保存的模型在指定路径中存在

saver.restore(sess, ckpt.model_checkpoint_path) #则将保存的神经网络模型加载到当前会话中

# 加载模型中参数的滑动平均值

# 保存模型时,若模型中采用了滑动平均,则参数的滑动平均值会保存在相应文件中

ema = tf.train.ExponentialMovingAverage(滑动平均基数)

ema_restore = ema.variables_to_restore()

# 实例化可以还原滑动平均值的saver对象

saver = tf.train.Saver(ema_restore)

# 神经网络模型准确率的评估方法

# y 表示在一组batch_size大小的数据上,神经网络模型的预测结果

# y.shape = [batch_size, 10]

# 判断预测记过张量和实际标签张量的每个维度是否相等

correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))

# 将布尔值转化为实数型

accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

二、测试过程test.py及主函数

######## test.py ##########

def test(mnist):

with tf.Gragh().as_default() as g:

#占位

x = tf.placeholder(dtype, shape)

y_= tf.placeholder(dtype, shape)

# 前向传播,预测结果y

y = mnist_forward.forward(x, None)

# 实例化可以还原滑动平均的saver

ema = tf.train.ExponentialMovingAverage(滑动衰减率)

ema_restore = ema.variables_to_restore()

# 实例化可以还原滑动平均值的saver对象

saver = tf.train.Saver(ema_restore)

# 计算正确率

correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))

accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

while True:

with tf.Session() as sess:

# 加载训练好的模型

ckpt = tf.train.get_checkpoint_state(存储路径)

#若ckpt和保存的模型在指定路径中存在

if ckpt and ckpt.model_checkpoint_path:

# 恢复会话

saver.restore(sess, ckpt.model_checkpoint_path)

# 恢复轮数

global_ste = ckpt.model_checkpoint_path.split('/')[-1].split('-')[-1]

# 计算准确率

accuracy_score = sess.run(accuracy, feed_dict = {x:测试数据, y_:测试数据标签})

# 打印提示

print("after %s training steps, test_accuracy = %g" %(global_step, accuracy_score))

#如果没有模型

else:

print("no checkpoint file found")

return

######## main function #############

def main():

mnist = input_data.read_data_sets('./data/', one_hot = True)

# 调用定义好的测试函数

test(mnist)

if __name__ == '__main__':

main()

三、完整代码

- ①

mnist_forward.py

# mnist_forward.py

# coding: utf-8

import tensorflow as tf

INPUT_NODE = 784

OUTPUT_NODE = 10

LAYER1_NODE = 500

# 给w赋初值,并把w的正则化损失加到总损失中

def get_weight(shape, regularizer):

w = tf.Variable(tf.truncated_normal(shape, stddev = 0.1))

if regularizer != None: tf.add_to_collection('losses', tf.contrib.layers.l2_regularizer(regularizer)(w))

return w

# 给b赋初值

def get_bias(shape):

b = tf.Variable(tf.zeros(shape))

return b

def forward(x, regularizer):

w1 = get_weight([INPUT_NODE, LAYER1_NODE], regularizer)

b1 = get_bias([LAYER1_NODE])

y1 = tf.nn.relu(tf.matmul(x, w1) + b1)

w2 = get_weight([LAYER1_NODE, OUTPUT_NODE], regularizer)

b2 = get_bias([OUTPUT_NODE])

y = tf.matmul(y1, w2) + b2 #输出层不通过激活函数

return y

- ②

mnist_backward.py

# mnist_backward.py

# coding: utf-8

import tensorflow as tf

# 导入imput_data模块

from tensorflow.examples.tutorials.mnist import input_data

import mnist_forward

import os

# 定义超参数

BATCH_SIZE = 200

LEARNING_RATE_BASE = 0.1 #初始学习率

LEARNING_RATE_DECAY = 0.99 # 学习率衰减率

REGULARIZER = 0.0001 # 正则化参数

STEPS = 50000 #训练轮数

MOVING_AVERAGE_DECAY = 0.99

MODEL_SAVE_PATH = "./model/"

MODEL_NAME = "mnist_model"

def backward(mnist):

# placeholder占位

x = tf.placeholder(tf.float32, shape = (None, mnist_forward.INPUT_NODE))

y_ = tf.placeholder(tf.float32, shape = (None, mnist_forward.OUTPUT_NODE))

# 前向传播推测输出y

y = mnist_forward.forward(x, REGULARIZER)

# 定义global_step轮数计数器,定义为不可训练

global_step = tf.Variable(0, trainable = False)

# 包含正则化的损失函数

# 交叉熵

ce = tf.nn.sparse_softmax_cross_entropy_with_logits(logits = y, labels = tf.argmax(y_, 1))

cem = tf.reduce_mean(ce)

# 使用正则化时的损失函数

loss = cem + tf.add_n(tf.get_collection('losses'))

# 定义指数衰减学习率

learning_rate = tf.train.exponential_decay(

LEARNING_RATE_BASE,

global_step,

mnist.train.num_examples/BATCH_SIZE,

LEARNING_RATE_DECAY,

staircase = True)

# 定义反向传播方法:包含正则化

train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step = global_step)

# 定义滑动平均时,加上:

ema = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)

ema_op = ema.apply(tf.trainable_variables())

with tf.control_dependencies([train_step, ema_op]):

train_op = tf.no_op(name = 'train')

# 实例化saver

saver = tf.train.Saver()

# 训练过程

with tf.Session() as sess:

# 初始化所有参数

init_op = tf.global_variables_initializer()

sess.run(init_op)

# 循环迭代

for i in range(STEPS):

# 将训练集中一定batchsize的数据和标签赋给左边的变量

xs, ys = mnist.train.next_batch(BATCH_SIZE)

# 喂入神经网络,执行训练过程train_step

_, loss_value, step = sess.run([train_op, loss, global_step], feed_dict = {x: xs, y_: ys})

if i % 1000 == 0: # 拼接成./MODEL_SAVE_PATH/MODEL_NAME-global_step路径

# 打印提示

print("after %d steps, loss on traing batch is %g" %(step, loss_value))

saver.save(sess, os.path.join(MODEL_SAVE_PATH, MODEL_NAME), global_step = global_step)

def main():

mnist = input_data.read_data_sets('./data/', one_hot = True)

# 调用定义好的测试函数

backward(mnist)

# 判断python运行文件是否为主文件,如果是,则执行

if __name__ == '__main__':

main()

- ③

mnist_test.py

# coding:utf-8

# mnist_test.py

# 延时

import time

import tensorflow as tf

# 导入imput_data模块

from tensorflow.examples.tutorials.mnist import input_data

import mnist_forward

import mnist_backward

import os

os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2' #hide warnings

# 程序循环间隔时间5秒

TEST_INTERVAL_SECS = 5

def test(mnist):

# 用于复现已经定义好了的神经网络

with tf.Graph().as_default() as g: # 其内定义的节点在计算图g中

# placeholder占位

x = tf.placeholder(tf.float32, shape=(None, mnist_forward.INPUT_NODE))

y_ = tf.placeholder(tf.float32, shape=(None, mnist_forward.OUTPUT_NODE))

# 前向传播推测输出y

y = mnist_forward.forward(x, None)

# 实例化带滑动平均的saver对象

# 这样,所有参数在会话中被加载时,会被复制为各自的滑动平均值

ema = tf.train.ExponentialMovingAverage(mnist_backward.MOVING_AVERAGE_DECAY)

ema_restore = ema.variables_to_restore()

saver = tf.train.Saver(ema_restore)

# 计算正确率

correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))

accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

while True:

with tf.Session() as sess:

# 加载训练好的模型,也即把滑动平均值赋给各个参数

ckpt = tf.train.get_checkpoint_state(mnist_backward.MODEL_SAVE_PATH)

#若ckpt和保存的模型在指定路径中存在

if ckpt and ckpt.model_checkpoint_path:

# 恢复会话

saver.restore(sess, ckpt.model_checkpoint_path)

# 恢复轮数

global_step = ckpt.model_checkpoint_path.split('/')[-1].split('-')[-1]

# 计算准确率

accuracy_score = sess.run(accuracy, feed_dict={x: mnist.test.images, y_: mnist.test.labels})

# 打印提示

print("after %s training steps, test accuracy = %g" % (global_step, accuracy_score))

#如果没有模型

else:

print("no checkpoint file found")

return

time.sleep(TEST_INTERVAL_SECS)

def main():

mnist = input_data.read_data_sets('./data/', one_hot=True)

# 调用定义好的测试函数

test(mnist)

if __name__ == '__main__':

main()

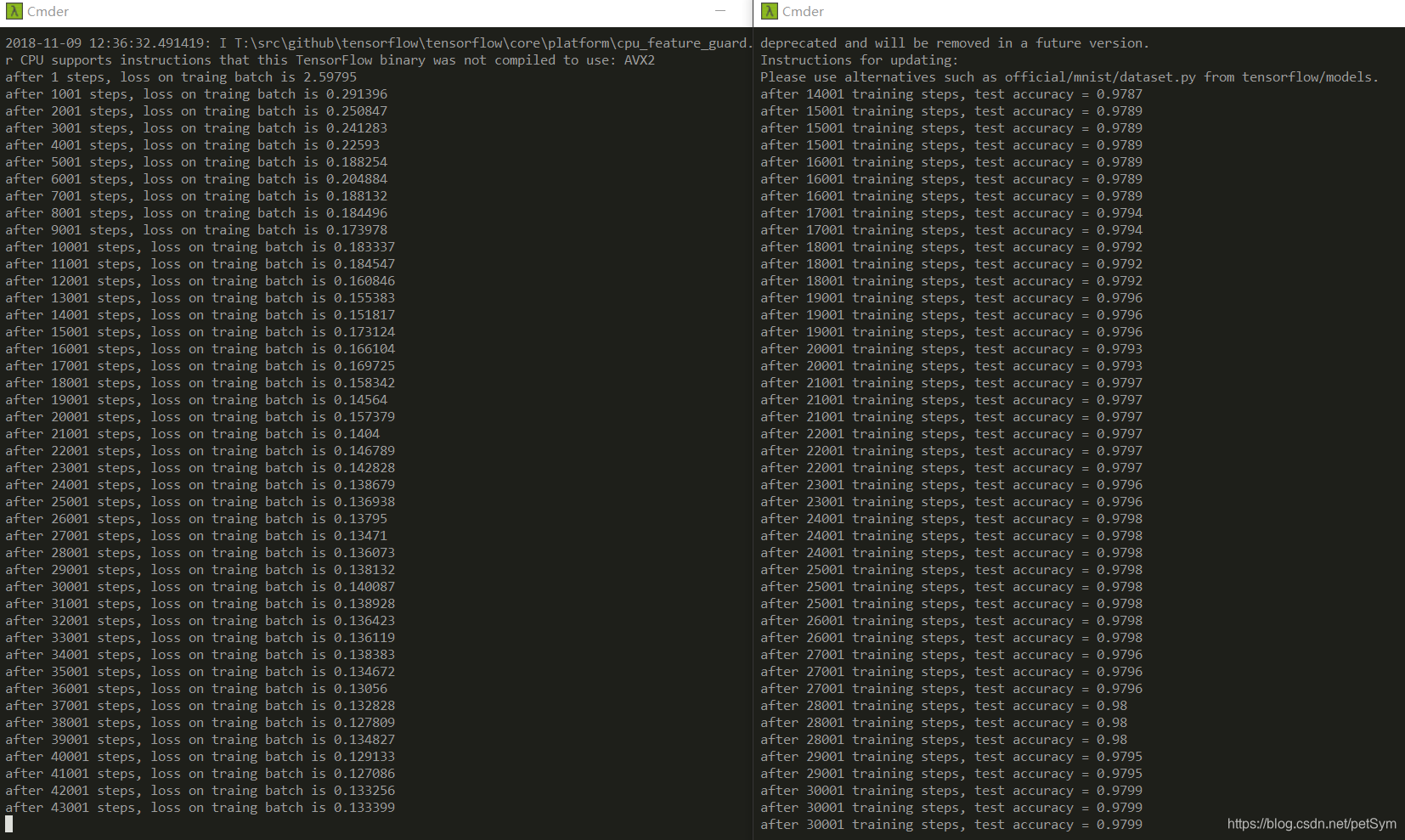

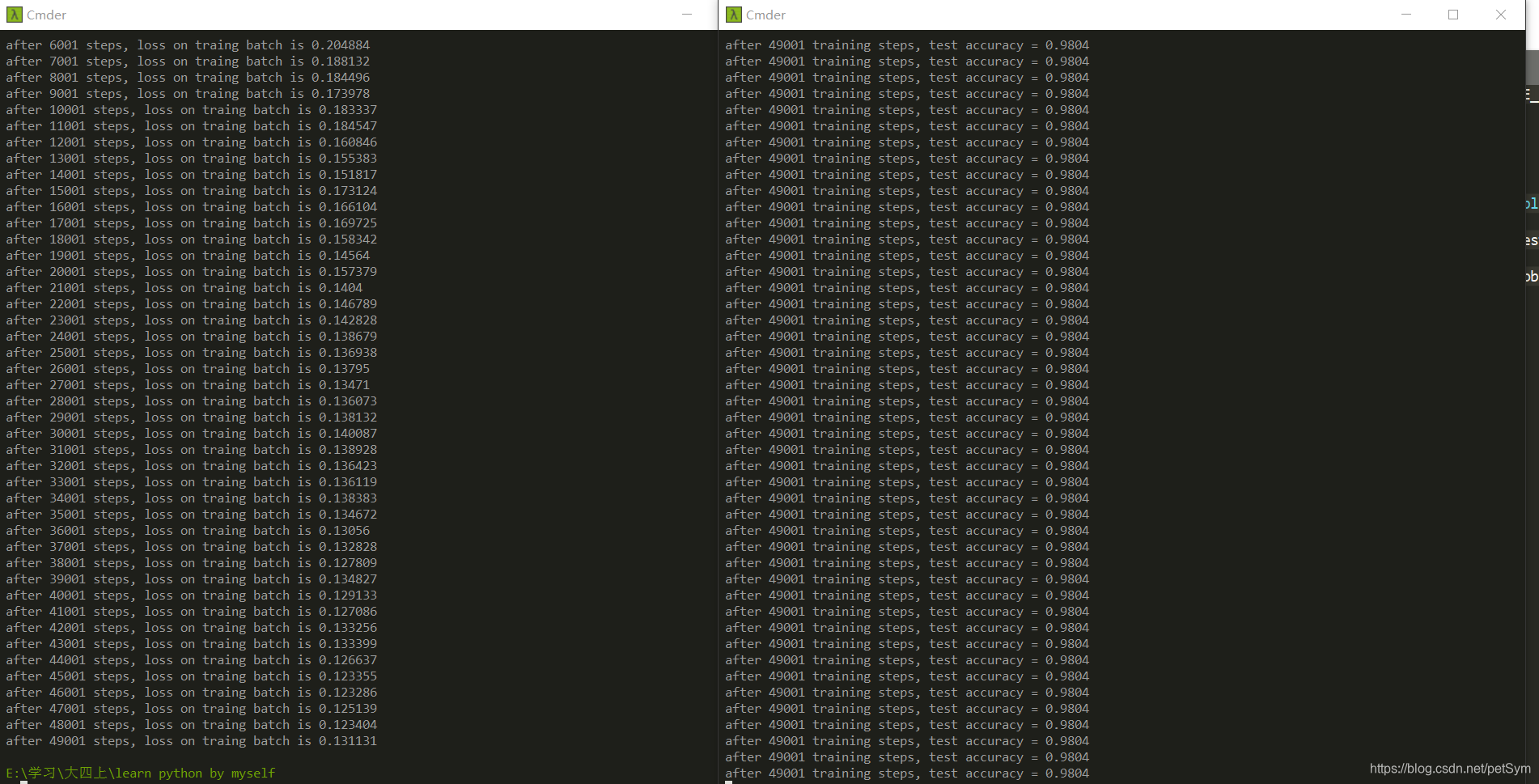

从终端运行结果可以看出,随着训练轮数的增加,网络模型的损失函数值不断降低,并且在测试集上的准确率在不断提升,有较好的泛化能力。

从上图结果可以看出,最终迭代后准确率基本稳定不变了。