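Copyright notice: You are welcome to repost and share this article; please credit the source when reposting, and leave a comment if you need to contact me. https://blog.csdn.net/crazyice521/article/details/53289207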

1. Introduction to Logistic Regression

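Logistic Regression is a classification model in machine learning. The algorithm is simple and efficient, so it is used very widely in practice. This article examines the model mainly through code written with the TensorFlow framework. Most readers have already studied this model, and it is fairly simple, so I will only introduce it briefly.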

2. Solving the Model

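The general procedure for solving a regression problem is:

① Choose a hypothesis function.

② Construct a loss function.

③ Solve for the regression parameters that minimize the loss function.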
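To make the three steps concrete, here is a minimal pure-Python sketch of binary logistic regression. It is not the TensorFlow code from this article. It uses a tiny, made-up one-dimensional dataset, and the names `xs`, `ys`, `hypothesis`, `loss` and `train` are hypothetical, chosen only for illustration. The sketch assumes the standard choices for each step: the hypothesis $h(x) = g(wx + b)$ with the sigmoid $g(z) = 1/(1+e^{-z})$, the average cross-entropy (log-loss) as the loss function, and batch gradient descent to minimize it.

```python
import math

# Hypothetical toy dataset: the label is 1 when x > 0, else 0.
xs = [-3.0, -2.0, -1.0, -0.5, 0.5, 1.0, 2.0, 3.0]
ys = [0, 0, 0, 0, 1, 1, 1, 1]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

# Step 1: hypothesis h(x) = sigmoid(w * x + b)
def hypothesis(w, b, x):
    return sigmoid(w * x + b)

# Step 2: loss = average binary cross-entropy over the dataset
def loss(w, b):
    total = 0.0
    for x, y in zip(xs, ys):
        h = hypothesis(w, b, x)
        total += -(y * math.log(h) + (1 - y) * math.log(1 - h))
    return total / len(xs)

# Step 3: minimize the loss with batch gradient descent
def train(learning_rate=0.5, steps=1000):
    w, b = 0.0, 0.0
    m = len(xs)
    for _ in range(steps):
        # Gradients of the log-loss: dJ/dw = mean((h - y) * x), dJ/db = mean(h - y)
        grad_w = sum((hypothesis(w, b, x) - y) * x for x, y in zip(xs, ys)) / m
        grad_b = sum(hypothesis(w, b, x) - y for x, y in zip(xs, ys)) / m
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

w, b = train()
print("w=%.3f b=%.3f loss=%.4f" % (w, b, loss(w, b)))
```

This toy data is perfectly separable, so the loss has no finite minimizer. As training continues, `w` keeps growing slowly, which is why a fixed number of steps (or regularization) is used in practice.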

Sigmoid function

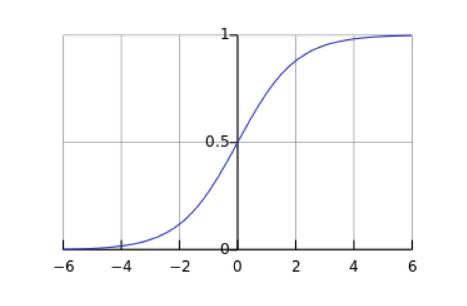

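Before introducing the logistic regression model, we first introduce the sigmoid function, whose mathematical form is:

$$g(z) = \frac{1}{1 + e^{-z}}$$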

The corresponding curve is shown in the figure below:

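As the figure shows, the sigmoid function is an S-shaped curve. Its values lie between 0 and 1, and strictly in the open interval (0, 1) because it never actually reaches either bound. Away from 0, it quickly approaches 0 as z → −∞ and 1 as z → +∞.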
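A quick numerical check of these properties follows as a small pure-Python sketch. Here `sigmoid` is our own helper, not a library function. The script prints g(z) at a few sample points. From the output you can see that g(0) = 0.5 exactly, that values approach 0 for large negative z and 1 for large positive z, and that the curve is symmetric: g(−z) = 1 − g(z).

```python
import math

def sigmoid(z):
    """Logistic (sigmoid) function g(z) = 1 / (1 + e^(-z))."""
    return 1.0 / (1.0 + math.exp(-z))

# Sample the S-shaped curve at a few points
for z in [-10, -5, -1, 0, 1, 5, 10]:
    print("g(%3d) = %.6f" % (z, sigmoid(z)))

# Symmetry check: g(-z) should equal 1 - g(z)
print(abs(sigmoid(-2.0) - (1.0 - sigmoid(2.0))) < 1e-12)
```

Mathematically g(z) never reaches 1. In double-precision floating point, however, `sigmoid(40)` already rounds to exactly 1.0. This is worth keeping in mind whenever the logarithm of a predicted probability is taken.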

3. Code

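```python
from __future__ import print_function  # same print() behavior on Python 2 and 3

import tensorflow as tf  # requires TensorFlow 1.x (placeholder/Session API)
from tensorflow.examples.tutorials.mnist import input_data

# Download (if needed) and load MNIST; labels are one-hot vectors of length 10
mnist = input_data.read_data_sets("MNIST_data/", one_hot=True)

# Hyperparameters
learning_rate = 0.01
training_epoch = 25
batch_size = 100
display_step = 1

# Inputs: flattened 28x28 images and one-hot labels
x = tf.placeholder(tf.float32, [None, 784])
y = tf.placeholder(tf.float32, [None, 10])

# Model parameters: weights and biases
W = tf.Variable(tf.zeros([784, 10]))
b = tf.Variable(tf.zeros([10]))

# Predicted class probabilities (softmax generalizes sigmoid to 10 classes)
pred = tf.nn.softmax(tf.matmul(x, W) + b)

# Loss: mean cross-entropy between the one-hot labels and the predictions
cost = tf.reduce_mean(-tf.reduce_sum(y * tf.log(pred), axis=1))

# Optimizer: plain gradient descent on the loss
optimizer = tf.train.GradientDescentOptimizer(learning_rate).minimize(cost)

# Op that initializes all variables
init = tf.global_variables_initializer()

with tf.Session() as sess:
    sess.run(init)

    for epoch in range(training_epoch):
        avg_cost = 0.
        total_batch = int(mnist.train.num_examples / batch_size)
        for i in range(total_batch):
            batch_xs, batch_ys = mnist.train.next_batch(batch_size)
            # Run one optimization step and fetch the loss on this batch
            _, c = sess.run([optimizer, cost], feed_dict={x: batch_xs, y: batch_ys})
            avg_cost += c / total_batch
        if (epoch + 1) % display_step == 0:
            print("Epoch:", '%04d' % (epoch + 1), "cost=", "{:.9f}".format(avg_cost))

    print("Optimization Finished!")

    # Test model: correct when the most probable class matches the label
    correct_prediction = tf.equal(tf.argmax(pred, 1), tf.argmax(y, 1))
    # Calculate accuracy on the first 3000 test examples
    accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
    print("Accuracy:", accuracy.eval({x: mnist.test.images[:3000], y: mnist.test.labels[:3000]}))
```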
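MNIST has 10 classes, so the code uses softmax rather than sigmoid. Softmax regression is the multi-class generalization of logistic regression. The pure-Python sketch below checks two facts. The helpers `sigmoid`, `softmax` and `cross_entropy` are our own illustrations, not TensorFlow functions.

1. With two classes and logits [z, 0], softmax assigns class 0 the same probability as sigmoid(z).
2. The per-example loss is the cross-entropy −Σ y·log(pred), matching the `cost` expression in the code.

```python
import math

def sigmoid(z):
    """Logistic function g(z) = 1 / (1 + e^(-z))."""
    return 1.0 / (1.0 + math.exp(-z))

def softmax(logits):
    """Turn a list of logits into probabilities that sum to 1."""
    # Subtracting the largest logit avoids overflow in exp(); the result is unchanged.
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(y_onehot, probs):
    """Per-example loss -sum(y * log(pred)) for a one-hot label."""
    # Terms with y == 0 contribute nothing, so skip them (this also avoids 0 * log(0)).
    return -sum(y * math.log(p) for y, p in zip(y_onehot, probs) if y > 0)

# Two classes with logits [z, 0]: class-0 probability equals sigmoid(z)
for z in [-3.0, 0.0, 2.5]:
    print(z, softmax([z, 0.0])[0], sigmoid(z))

# Three-class example with a one-hot label for class 1
probs = softmax([1.0, 2.0, 0.5])
print(probs, cross_entropy([0, 1, 0], probs))
```

In TensorFlow 1.x, the same loss can be computed directly from the raw logits `tf.matmul(x, W) + b` with `tf.nn.softmax_cross_entropy_with_logits(labels=y, logits=logits)`. This form is numerically more stable than applying `tf.log` to softmax outputs that may have rounded to 0.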