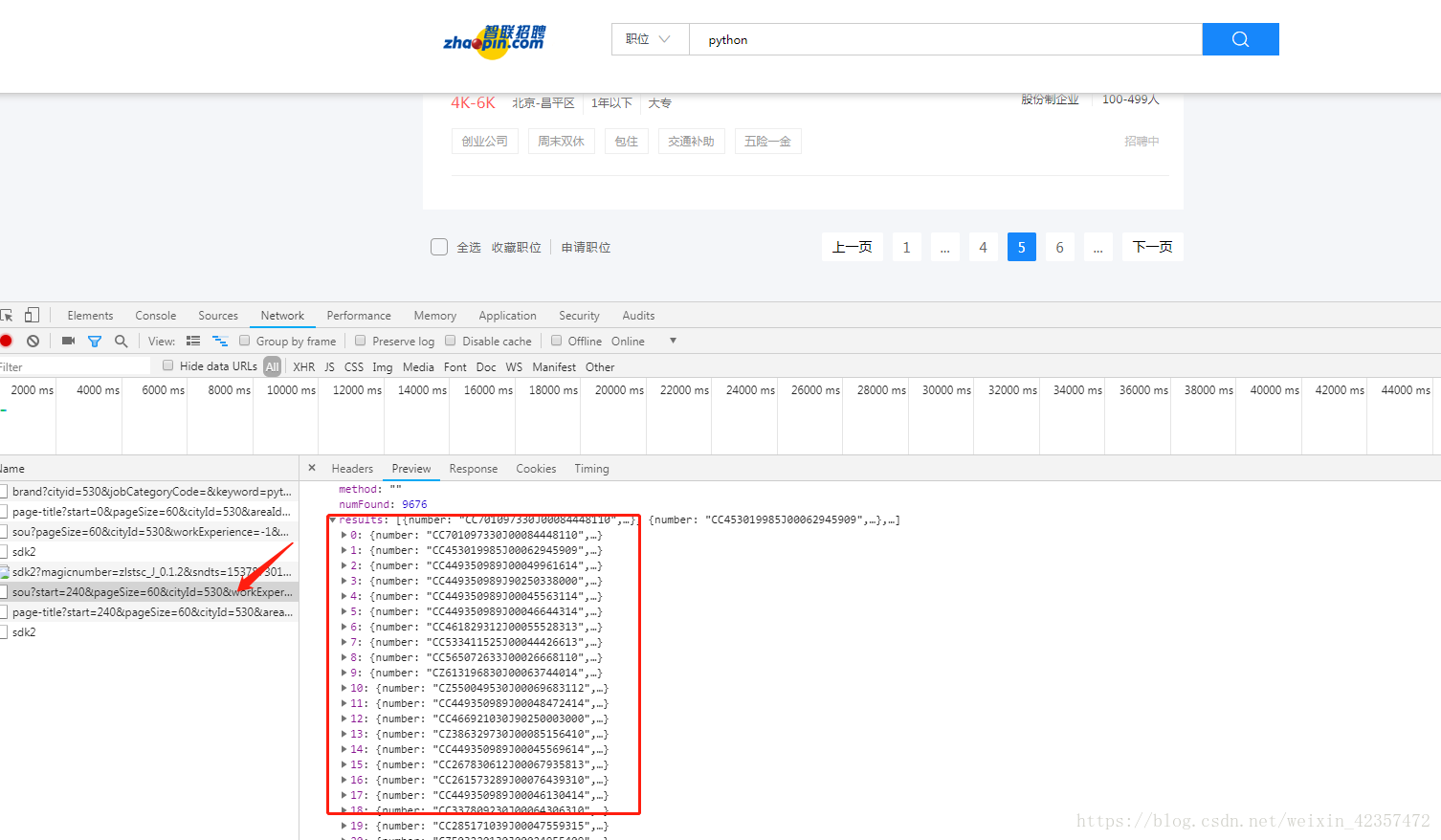

# First, analyze the target site: the job listings come from a JSON API, and each company's details need a second request to its company page
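The search request is one long hand-written URL. Below is a minimal sketch of generating it per page with `urllib.parse.urlencode`. It assumes the parameters keep the meaning they appear to have in the original URL (`start` is the result offset, `pageSize` is 60, `cityId=530`, `kw` is the keyword). `build_search_url` is a hypothetical helper, and `lastUrlQuery` is left out on the unverified assumption that the API does not need it.

```python
from urllib.parse import urlencode

# Endpoint copied from the original URL; whether it still responds is not verified.
API_BASE = "https://fe-api.zhaopin.com/c/i/sou"
PAGE_SIZE = 60


def build_search_url(page: int, keyword: str = "python", city_id: int = 530) -> str:
    """Return the JSON search URL for a zero-based page number (hypothetical helper).

    Assumption: the API does not require the `lastUrlQuery` parameter,
    so it is omitted here.
    """
    params = {
        "start": page * PAGE_SIZE,  # offset of the first result on this page
        "pageSize": PAGE_SIZE,
        "cityId": city_id,
        "workExperience": -1,
        "education": -1,
        "companyType": -1,
        "employmentType": -1,
        "jobWelfareTag": -1,
        "kw": keyword,
        "kt": 3,
    }
    return API_BASE + "?" + urlencode(params)


for n in range(3):
    print(build_search_url(n))
```

Building the query from a dict keeps the page offset arithmetic (`page * 60`) in one place instead of inside a format string.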

# 1. Scraping and saving directly with requests

## Points to note:

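- Company pages do not all share the same layout, so check which layout a page uses before parsing it (the code below only parses the standard layout and skips the others).

- When saving to a CSV file, open it with `newline=''`; otherwise blank lines appear between the rows.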
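A small self-contained check of what `newline=''` does, using only the standard `csv` module (the file location and rows are made up). The `excel` dialect already ends every row with `\r\n`; opening the file with `newline=''` stops Python's text layer from translating the `\n` a second time. Without it, Windows writes `\r\r\n`, which Excel displays as an empty row between records.

```python
import csv
import os
import tempfile

rows = [["No.", "Company"], [1, "Example Co"]]  # hypothetical sample rows

path = os.path.join(tempfile.mkdtemp(), "demo.csv")

# newline="" disables newline translation, so the csv module's own
# "\r\n" row terminator is written exactly once per row.
with open(path, "w", encoding="utf-8", newline="") as f:
    csv.writer(f, dialect="excel").writerows(rows)

with open(path, "rb") as f:
    raw = f.read()

print(raw.count(b"\r\n"))  # 2: one terminator per row
print(b"\r\r\n" in raw)    # False: no doubled terminator, so no blank rows
```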

```python
import csv

import requests
from lxml import etree

# Search API for Python jobs; `start` is the offset of the first result (page n * 60).
URL = ("https://fe-api.zhaopin.com/c/i/sou?start={}&pageSize=60&cityId=530"
       "&workExperience=-1&education=-1&companyType=-1&employmentType=-1"
       "&jobWelfareTag=-1&kw=python&kt=3"
       "&lastUrlQuery=%7B%22p%22:2,%22pageSize%22:%2260%22,%22jl%22:%22530%22,"
       "%22kw%22:%22python%22,%22kt%22:%223%22%7D")

rows = []

for n in range(0, 1):  # first page only; raise the upper bound to fetch more pages
    response = requests.get(URL.format(n * 60)).json()

    # Iterate over the results actually returned instead of assuming exactly 60.
    for i, result in enumerate(response["data"]["results"], start=1):
        page = result["company"]["url"]

        # Company pages with longer URLs use a different layout; skip them.
        if len(page) >= 48:
            continue

        a = etree.HTML(requests.get(page).text)
        # xpath() returns a list of text nodes; join them into one CSV cell.
        dizi = " ".join(a.xpath('//table[@class="comTinyDes"]//span[@class="comAddress"]/text()')).strip()
        jianjie = a.xpath('string(//div[@class="part2"]//div)').strip()

        gongsi = result["company"]["name"]
        guimo = result["company"]["size"]["name"]
        xinchou = result["salary"]

        rows.append([i, gongsi, page, guimo, xinchou, dizi, jianjie])
        print(i, gongsi, page, guimo, xinchou, dizi, jianjie, sep="\n")
        print("*" * 50)

# newline="" keeps csv.writer from producing blank lines between rows.
with open("aa.csv", "w", encoding="utf-8", newline="") as f:
    k = csv.writer(f, dialect="excel")
    k.writerow(["No.", "Company", "URL", "Size", "Salary", "Address", "Description"])
    k.writerows(rows)
```
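If some results lack a field, direct indexing such as `result["company"]["size"]["name"]` raises `KeyError` and stops the whole run. A sketch of flattening one JSON result into a CSV row that tolerates missing keys and an empty address list; `result_to_row` is a hypothetical helper, and the sample JSON is invented and trimmed to the keys the script reads (`company.name`, `company.url`, `company.size.name`, `salary`).

```python
import json


def result_to_row(index, result, address, intro):
    """Flatten one entry of data.results into a CSV row (hypothetical helper)."""
    company = result.get("company", {})
    return [
        index,
        company.get("name", ""),
        company.get("url", ""),
        company.get("size", {}).get("name", ""),
        result.get("salary", ""),
        " ".join(s.strip() for s in address),  # XPath text nodes -> one cell
        intro,
    ]


# Invented sample response, trimmed to the keys used above.
sample = json.loads("""
{"data": {"results": [
  {"company": {"name": "Example Co",
               "url": "https://company.zhaopin.com/X.htm",
               "size": {"name": "100-499"}},
   "salary": "10K-20K"}
]}}
""")

for i, result in enumerate(sample["data"]["results"], start=1):
    print(result_to_row(i, result, ["  Addr 1 ", "Addr 2"], "Intro"))
```

Using `.get()` with defaults trades strictness for robustness: a missing field becomes an empty cell instead of an exception.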

# 2. Scraping with the Scrapy framework

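## Points to note:

- Each record combines data from two responses (the JSON API and the company page), so both must end up in the same item. Pass the item from the first callback to the second with `meta`.

Example (from https://www.zhihu.com/question/54773510); `ItemClass`, `url` and `value` are placeholders: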

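```python
def parse(self, response):
    item = ItemClass()
    # Hand the partially filled item to the next callback through meta.
    yield Request(url, meta={'item': item}, callback=self.parse_item)

def parse_item(self, response):
    # Retrieve the same item object and finish filling it in.
    item = response.meta['item']
    item['field'] = value
    yield item
```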

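Author: 何健

Link: https://www.zhihu.com/question/54773510/answer/141177867

Source: Zhihu

Copyright belongs to the author. For commercial reproduction, contact the author for permission; for non-commercial reproduction, please credit the source.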
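`meta` stores a reference to the item, not a copy, so every request needs its own item object. If one item is created before the loop and reused, in practice the loop usually finishes before any company page has been downloaded, and every callback then sees the last company's data. A plain-Python simulation of that timing (no Scrapy needed; `schedule` is a hypothetical stand-in for yielding requests and running their callbacks later):

```python
# Callbacks run after the loop has finished, which is the usual situation
# when Scrapy downloads the second-level pages asynchronously.

def schedule(companies, fresh_item_per_request):
    pending = []  # stands in for the yielded Requests
    item = {}
    for name in companies:
        if fresh_item_per_request:
            item = {}  # a new object for every request
        item["gongsi"] = name
        pending.append({"meta": {"key": item}})
    # Later, each "callback" reads its item back out of meta.
    return [req["meta"]["key"]["gongsi"] for req in pending]


companies = ["A", "B", "C"]
print(schedule(companies, fresh_item_per_request=False))  # ['C', 'C', 'C']
print(schedule(companies, fresh_item_per_request=True))   # ['A', 'B', 'C']
```

So create the item inside the loop, once per request.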

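- Fixing the blank-line problem when exporting to CSV with `scrapy crawl zhilian -o aaa.csv`

One workaround is to patch Scrapy's source file, here `D:\Python36\Lib\site-packages\scrapy\exporters.py` (the location depends on your Python installation), and add the line `newline="",` to the `io.TextIOWrapper` call in `CsvItemExporter.__init__`. Recent Scrapy releases already pass `newline=""` there, so check your installed version first: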

```python
class CsvItemExporter(BaseItemExporter):

    def __init__(self, file, include_headers_line=True, join_multivalued=',', **kwargs):
        ...
        self.stream = io.TextIOWrapper(
            file,
            newline="",  # newly added
            line_buffering=False,
            write_through=True,
            encoding=self.encoding
        ) if six.PY3 else file
```

(Source: CSDN blog post by 范翻番樊, https://blog.csdn.net/u011361138/article/details/79912895)
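Instead of editing files under `site-packages` (which an upgrade overwrites), a hedged alternative is a small item pipeline that writes the CSV itself with `newline=''`. Scrapy calls `open_spider`, `process_item` and `close_spider` on each class listed in the `ITEM_PIPELINES` setting. The class name `CsvWriterPipeline`, the output path and the column list are assumptions of this sketch, not part of the original project.

```python
import csv


class CsvWriterPipeline:
    """Hypothetical item pipeline that writes items with the csv module.

    Scrapy calls open_spider / process_item / close_spider on pipelines
    listed in ITEM_PIPELINES; the class itself needs no Scrapy import.
    """

    # Assumed column order, matching the item fields used by the spider.
    fields = ["gongsi", "guimo", "xinchou", "dizi", "jianjie"]

    def __init__(self, path="aaa.csv"):
        self.path = path
        self.file = None
        self.writer = None

    def open_spider(self, spider):
        # newline="" prevents the extra "\r" that shows up as blank rows.
        self.file = open(self.path, "w", encoding="utf-8", newline="")
        self.writer = csv.writer(self.file, dialect="excel")
        self.writer.writerow(self.fields)

    def process_item(self, item, spider):
        row = []
        for field in self.fields:
            value = item.get(field, "")
            if isinstance(value, list):  # e.g. the address list from .extract()
                value = ",".join(value)
            row.append(value)
        self.writer.writerow(row)
        return item  # hand the item on to any later pipelines

    def close_spider(self, spider):
        self.file.close()
```

To try it, put the class in `zhilianzp/pipelines.py` (assumed location), add `ITEM_PIPELINES = {"zhilianzp.pipelines.CsvWriterPipeline": 300}` to `settings.py`, and run `scrapy crawl zhilian` without `-o`.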

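- Fixing "wrong path" import errors when importing from `items` in a Scrapy project

This is an IDE issue rather than a code issue: PyCharm does not automatically add the project directory to its source path.

The fix:

1) Right-click your Scrapy project folder.

2) Choose Mark Directory as.

3) Choose Sources Root.

4) If the folder turns blue, it worked.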

# Finally, the Scrapy spider that scrapes Zhilian Zhaopin:

```python
# -*- coding: utf-8 -*-
import json

import scrapy

from zhilianzp.items import ZhilianzpItem


class ZhilianSpider(scrapy.Spider):
    name = 'zhilian'

    def start_requests(self):
        url = "https://fe-api.zhaopin.com/c/i/sou?start=0&pageSize=60&cityId=530&workExperience=-1&education=-1&companyType=-1&employmentType=-1&jobWelfareTag=-1&kw=python&kt=3&lastUrlQuery=%7B%22p%22:2,%22pageSize%22:%2260%22,%22jl%22:%22530%22,%22kw%22:%22python%22,%22kt%22:%223%22%7D"
        yield scrapy.Request(url=url, callback=self.parse)

    def parse(self, response):
        content = json.loads(response.text)
        # Iterate over the results actually returned instead of assuming 60.
        for result in content["data"]["results"]:
            page = result["company"]["url"]
            # Company pages with longer URLs use a different layout; skip them.
            if len(page) >= 48:
                continue
            # New item per request: meta holds a reference, so one shared item
            # would be overwritten before the callbacks run.
            item = ZhilianzpItem()
            item["gongsi"] = result["company"]["name"]
            item["guimo"] = result["company"]["size"]["name"]
            item["xinchou"] = result["salary"]
            # dont_filter=True: one company can appear in several job results, and
            # the duplicate filter would otherwise drop those requests and their items.
            yield scrapy.Request(page, meta={"key": item}, callback=self.next_parse,
                                 dont_filter=True)

    def next_parse(self, response):
        # Continue filling in the item passed from parse().
        item = response.meta['key']
        item["dizi"] = response.xpath('//table[@class="comTinyDes"]//span[@class="comAddress"]/text()').extract()
        item["jianjie"] = response.xpath('string(//div[@class="part2"]//div)').extract_first(default="").strip()
        yield item
```