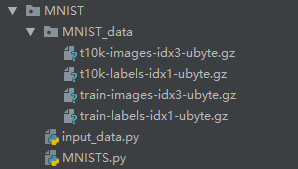

0. The file layout is shown in the figure above.

1. The CNN is implemented below, with comments explaining the code.

import tensorflow as tf

import input_data

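# Convolutional network

mnist = input_data.read_data_sets('MNIST_data', one_hot=True)  # load the MNIST data with one-hot labels

sess = tf.InteractiveSession()  # create the session

x = tf.placeholder("float", shape=[None, 784])  # input placeholder fed during training: None leaves the batch size open, 784 = 28*28 flattened pixels per image

y_ = tf.placeholder("float", shape=[None, 10])  # label placeholder: there are ten digit classes, so each one-hot label has length 10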

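def weight_variable(shape):  # weights; shape is supplied by the caller
    # tf.truncated_normal(shape, mean, stddev): shape is the shape of the generated tensor, mean (default 0) and stddev are the mean and standard deviation; values more than two standard deviations from the mean are redrawn
    initial = tf.truncated_normal(shape, stddev=0.1)
    # The result is zero-mean noise with standard deviation 0.1. Small nonzero weights break symmetry and help prevent zero gradients; with all-zero weights every unit in a ReLU layer would produce the same output.
    return tf.Variable(initial)  # variable whose initial value is the sampled tensor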
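
To make the truncated normal initialization concrete, here is a minimal pure-Python sketch of the same idea: draw from a normal distribution and redraw anything more than two standard deviations from the mean. The helper name `truncated_normal_samples` and the fixed seed are assumptions for illustration; this is not how TensorFlow implements it internally.

```python
import random

def truncated_normal_samples(n, mean=0.0, stddev=0.1, seed=0):
    """Draw n samples from N(mean, stddev^2), redrawing any sample that
    lies more than two standard deviations from the mean."""
    rng = random.Random(seed)  # fixed seed only so the sketch is repeatable
    samples = []
    while len(samples) < n:
        value = rng.gauss(mean, stddev)
        if abs(value - mean) <= 2.0 * stddev:
            samples.append(value)
    return samples

# As many values as a [5, 5, 1, 32] weight tensor holds.
weights = truncated_normal_samples(5 * 5 * 1 * 32)
print(len(weights))
print(all(abs(w) <= 0.2 for w in weights))
```

With `stddev=0.1`, every sampled weight lies in the interval [-0.2, 0.2], so no weight starts out large.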

def bias_variable(shape):  # biases
    initial = tf.constant(0.1, shape=shape)  # every bias starts at the constant 0.1, slightly positive so the ReLU units are active at the start
    return tf.Variable(initial)

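def conv2d(x, W):  # convolution
    # strides=[1, stride, stride, 1]: the image has two spatial dimensions, and the middle two values are the steps along height and width. padding is 'VALID' or 'SAME'; with 'SAME' the input is zero-padded so the kernel can sit on the image border.
    return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')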
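
The difference between the two padding modes is easiest to see in the output size. The sketch below applies the output-size rules that TensorFlow documents for 'SAME' and 'VALID' to one spatial dimension; the helper name `conv_output_size` is hypothetical and exists only for this illustration.

```python
import math

def conv_output_size(input_size, kernel_size, stride, padding):
    """Output length along one spatial dimension of a convolution."""
    if padding == 'SAME':
        # Zero padding is added so only the stride shrinks the output.
        return math.ceil(input_size / stride)
    if padding == 'VALID':
        # No padding: the kernel must fit entirely inside the input.
        return math.ceil((input_size - kernel_size + 1) / stride)
    raise ValueError("padding must be 'SAME' or 'VALID'")

same_size = conv_output_size(28, 5, 1, 'SAME')    # 28
valid_size = conv_output_size(28, 5, 1, 'VALID')  # 24
print(same_size, valid_size)
```

With a 5x5 kernel and stride 1, 'SAME' keeps a 28x28 image at 28x28, while 'VALID' would shrink it to 24x24.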

def max_pool_2x2(x):  # pooling
    # ksize is the pooling window given as a 4-D list, normally [1, height, width, 1]; the batch and channel entries are 1 because we do not pool across those dimensions
    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')
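
As a small illustration of what 2x2 max pooling with stride 2 does to one channel, here is a sketch on a plain nested list. The helper `pool_2x2` and the sample values are made up for this example, and the sketch assumes even height and width, so no padding is needed.

```python
def pool_2x2(image):
    """2x2 max pooling with stride 2 on a 2-D list (even height and width)."""
    pooled = []
    for r in range(0, len(image), 2):
        row = []
        for c in range(0, len(image[0]), 2):
            window = [image[r][c], image[r][c + 1],
                      image[r + 1][c], image[r + 1][c + 1]]
            row.append(max(window))  # keep the largest value in each window
        pooled.append(row)
    return pooled

image = [[1, 3, 2, 0],
         [4, 2, 1, 5],
         [0, 1, 9, 8],
         [2, 6, 7, 3]]
pooled = pool_2x2(image)
print(pooled)  # [[4, 5], [6, 9]]
```

Each spatial dimension is halved, which is why the 28x28 feature maps become 14x14 after the first pooling layer.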

### First convolutional layer

W_conv1 = weight_variable([5, 5, 1, 32])  # shape argument: 5x5 kernel, 1 input channel, 32 output feature channels

b_conv1 = bias_variable([32])  # shape argument: one bias per output channel, so b has length 32 and every entry starts at 0.1

x_image = tf.reshape(x, [-1,28,28,1])  # [-1,28,28,1]: -1 lets the batch size be inferred; each image is 28x28 pixels with depth 1

h_conv1 = tf.nn.relu(conv2d(x_image, W_conv1) + b_conv1)  # convolution plus bias, then the ReLU activation

h_pool1 = max_pool_2x2(h_conv1)  # pooling: 28x28 becomes 14x14

### Second convolutional layer

W_conv2 = weight_variable([5, 5, 32, 64])  # 5x5 kernel, 32 input channels, 64 output channels

b_conv2 = bias_variable([64])

h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2) + b_conv2)

h_pool2 = max_pool_2x2(h_conv2)

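### Densely connected layer

W_fc1 = weight_variable([7 * 7 * 64, 1024])  # after two 2x2 poolings the image is 7x7 with 64 channels; map it to 1024 units

b_fc1 = bias_variable([1024])

h_pool2_flat = tf.reshape(h_pool2, [-1, 7*7*64])  # flatten the last pooling layer into a matrix with 7*7*64 columns, one row per image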
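
The 7*7*64 figure follows from the layer shapes: 'SAME' convolutions with stride 1 keep the spatial size, and each 2x2 pooling with stride 2 halves it. The sketch below retraces that arithmetic; `same_output_size` is a hypothetical helper, not part of TensorFlow.

```python
import math

def same_output_size(size, stride):
    """Spatial size after a 'SAME'-padded convolution or pooling step."""
    return math.ceil(size / stride)

size = 28                          # input image is 28x28
size = same_output_size(size, 1)   # conv1, stride 1 -> 28
size = same_output_size(size, 2)   # pool1, stride 2 -> 14
size = same_output_size(size, 1)   # conv2, stride 1 -> 14
size = same_output_size(size, 2)   # pool2, stride 2 -> 7

flat_features = size * size * 64   # 64 channels after conv2
fc1_params = flat_features * 1024 + 1024  # weights plus biases of the dense layer
print(size, flat_features, fc1_params)  # 7 3136 3212288
```

The dense layer therefore holds about 3.2 million parameters, far more than the two convolutional layers combined.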

h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)

###Dropout

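keep_prob = tf.placeholder("float")  # probability of keeping each unit, fed at run time so dropout can be switched off for evaluation

h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob)  # kept units are scaled by 1/keep_prob, the rest are set to 0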
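
A minimal sketch of the dropout rule, assuming the documented behavior of `tf.nn.dropout` with a `keep_prob` argument: each element is kept with probability `keep_prob` and scaled by `1 / keep_prob`, otherwise it becomes 0. The function `dropout_sketch` and its explicit random generator are illustrative assumptions.

```python
import random

def dropout_sketch(values, keep_prob, rng):
    """Keep each value with probability keep_prob and rescale it by
    1 / keep_prob; dropped values become 0."""
    return [v / keep_prob if rng.random() < keep_prob else 0.0
            for v in values]

rng = random.Random(0)  # fixed seed only so the sketch is repeatable
activations = [1.0] * 10000

dropped = dropout_sketch(activations, 0.5, rng)
mean_after = sum(dropped) / len(dropped)
print(sorted(set(dropped)))   # the only possible values are 0.0 and 2.0
print(round(mean_after, 1))   # close to the original mean of 1.0

unchanged = dropout_sketch(activations, 1.0, rng)  # keep_prob 1.0 disables dropout
```

The rescaling keeps the expected activation unchanged, so the same weights can be used with `keep_prob: 1.0` at evaluation time.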

### Output layer

W_fc2 = weight_variable([1024, 10])

b_fc2 = bias_variable([10])

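### Train and evaluate the model

y_conv=tf.nn.softmax(tf.matmul(h_fc1_drop, W_fc2) + b_fc2)  # class probabilities from the softmax output layer

cross_entropy = -tf.reduce_sum(y_*tf.log(y_conv))  # cross-entropy between labels and predictions, summed over the batch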
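
The loss above takes the log of the softmax output directly, which can break down when a predicted probability underflows to 0. The following pure-Python sketch shows the same cross-entropy for a single example, together with a log-sum-exp variant that works on the logits instead. The function names are assumptions for illustration; in TensorFlow 1.x, `tf.nn.softmax_cross_entropy_with_logits` is the built-in that fuses the two steps.

```python
import math

def softmax(logits):
    """Softmax with the usual max-subtraction to avoid overflow."""
    shift = max(logits)
    exps = [math.exp(z - shift) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy_from_probs(one_hot, probs):
    """-sum(y * log(p)), the form used in the listing above."""
    return -sum(t * math.log(p) for t, p in zip(one_hot, probs))

def cross_entropy_from_logits(one_hot, logits):
    """Same loss computed from logits via log-sum-exp, so no log(0)."""
    shift = max(logits)
    log_sum = shift + math.log(sum(math.exp(z - shift) for z in logits))
    return -sum(t * (z - log_sum) for t, z in zip(one_hot, logits))

label = [1.0, 0.0, 0.0]
logits = [2.0, 1.0, 0.1]
loss_probs = cross_entropy_from_probs(label, softmax(logits))
loss_logits = cross_entropy_from_logits(label, logits)
print(abs(loss_probs - loss_logits) < 1e-9)  # both forms agree here

# With an extreme logit the true-class probability underflows to 0.0,
# so the probability-based form would need log(0); the logit form stays finite.
extreme_loss = cross_entropy_from_logits([1.0, 0.0], [0.0, 1000.0])
print(extreme_loss)
```

For ordinary logits the two forms give the same value; they differ only in how they behave at the numerical extremes.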

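train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)  # Adam optimizer with learning rate 1e-4

correct_prediction = tf.equal(tf.argmax(y_conv,1), tf.argmax(y_,1))  # True where the most probable class equals the labeled digit

accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))  # cast booleans to floats and average: the fraction of correct predictions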
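
The accuracy computation can be sketched without TensorFlow: take the argmax of each prediction and each one-hot label, compare them, and average the matches. The helper names and the four sample rows below are made up for illustration.

```python
def argmax(values):
    """Index of the largest entry (the first one if there are ties)."""
    return max(range(len(values)), key=lambda i: values[i])

def accuracy_sketch(predictions, labels):
    """Fraction of rows whose predicted class equals the labeled class."""
    matches = [float(argmax(p) == argmax(t))
               for p, t in zip(predictions, labels)]
    return sum(matches) / len(matches)

predictions = [[0.1, 0.7, 0.2],
               [0.8, 0.1, 0.1],
               [0.3, 0.3, 0.4],
               [0.2, 0.5, 0.3]]
labels = [[0, 1, 0],
          [1, 0, 0],
          [0, 1, 0],
          [0, 0, 1]]
result = accuracy_sketch(predictions, labels)
print(result)  # 0.5: the first two rows match, the last two do not
```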

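sess.run(tf.initialize_all_variables())  # initialize all variables; later TensorFlow 1.x releases deprecate this name in favor of tf.global_variables_initializer()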

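for i in range(20000):  # 20000 training steps
    batch = mnist.train.next_batch(50)  # next mini-batch of 50 images and labels
    if i%100 == 0:  # every 100 steps, report accuracy on the current batch
        train_accuracy = accuracy.eval(feed_dict={x:batch[0], y_: batch[1], keep_prob: 1.0})  # keep_prob 1.0 turns dropout off for evaluation
        print("step %d, training accuracy %g"%(i, train_accuracy))
        # print(sess.run(y))
    train_step.run(feed_dict={x: batch[0], y_: batch[1], keep_prob: 0.5})  # one training step, keeping each unit with probability 0.5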

print("test accuracy %g"%accuracy.eval(feed_dict={x: mnist.test.images, y_: mnist.test.labels, keep_prob: 1.0}))