在之前写的NO.8文章中介绍了应用Selenium模拟浏览器的操作实现抓取JavaScript动态渲染的页面,俗称可见及可爬。最近在研究Scrapy框架,因此尝试Scrapy框架对接Selenium实现淘宝的爬取功能。

一、分析网页

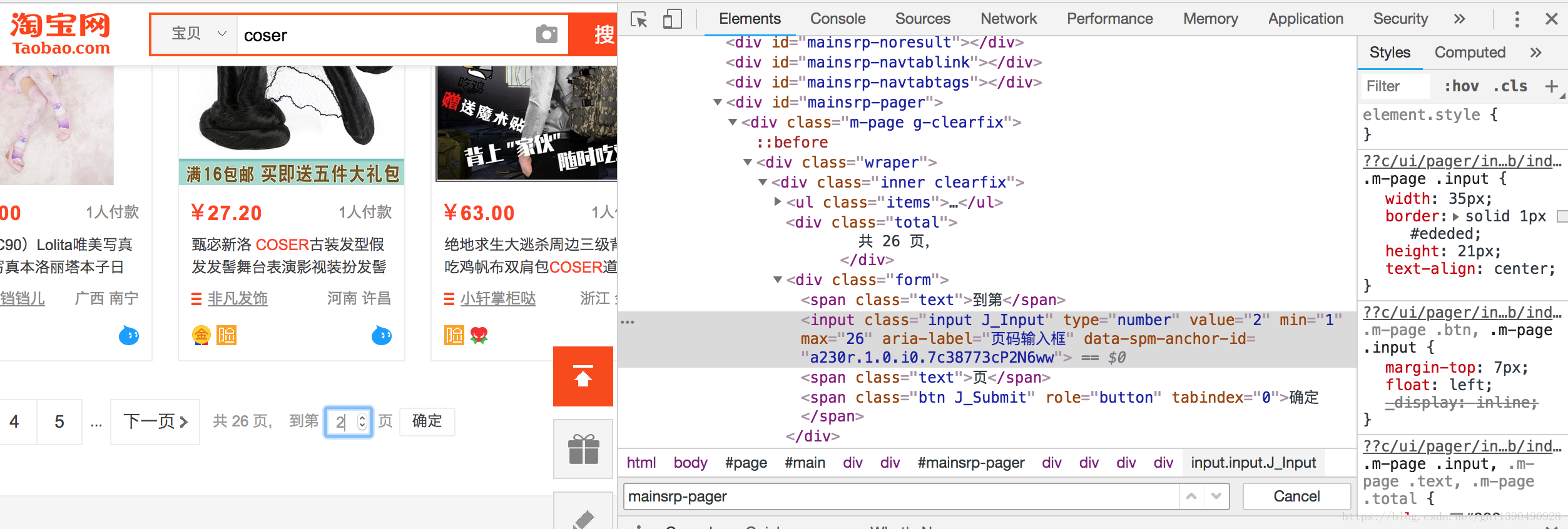

在Elements选项卡下查看网页源码,通过页码输入框切换页码:

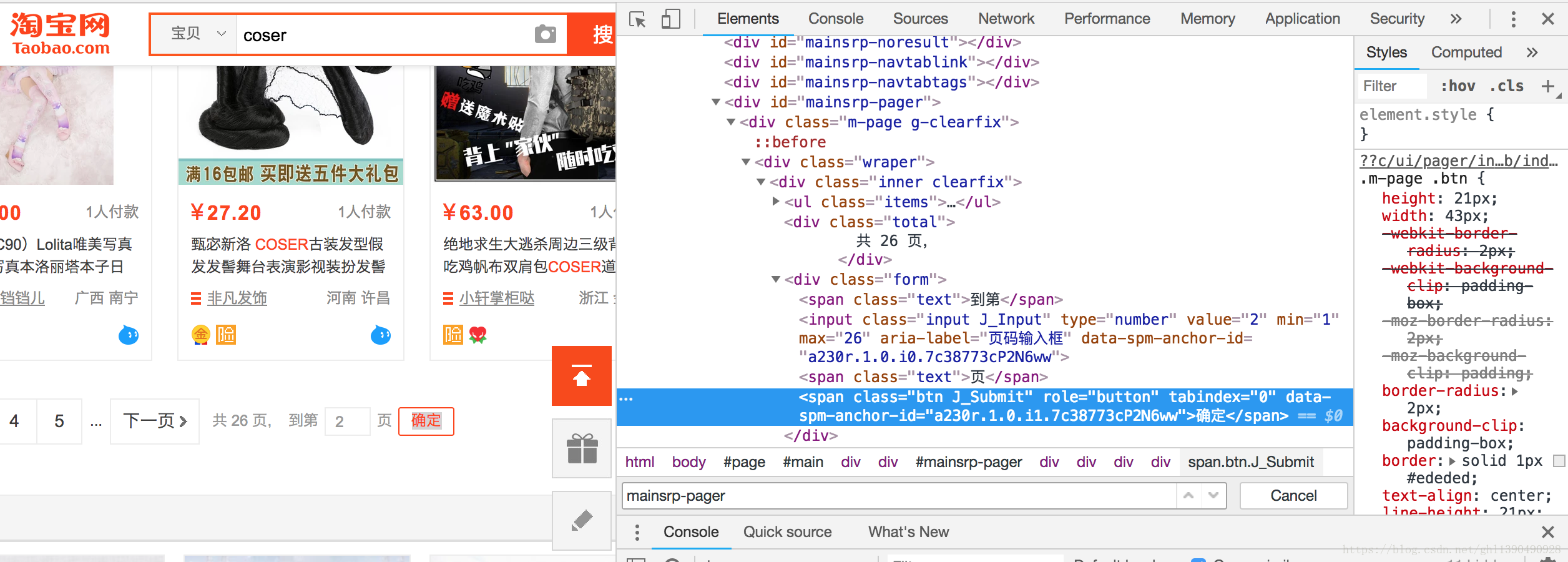

点击确定按钮进行提交:

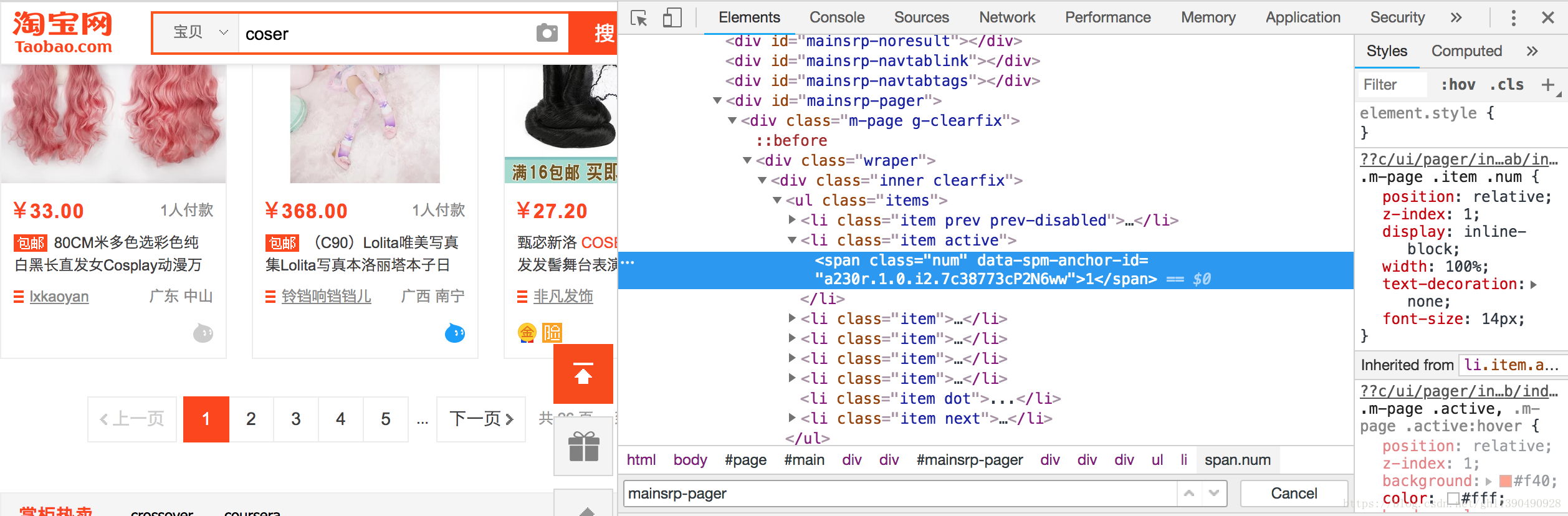

查看第一页内容:

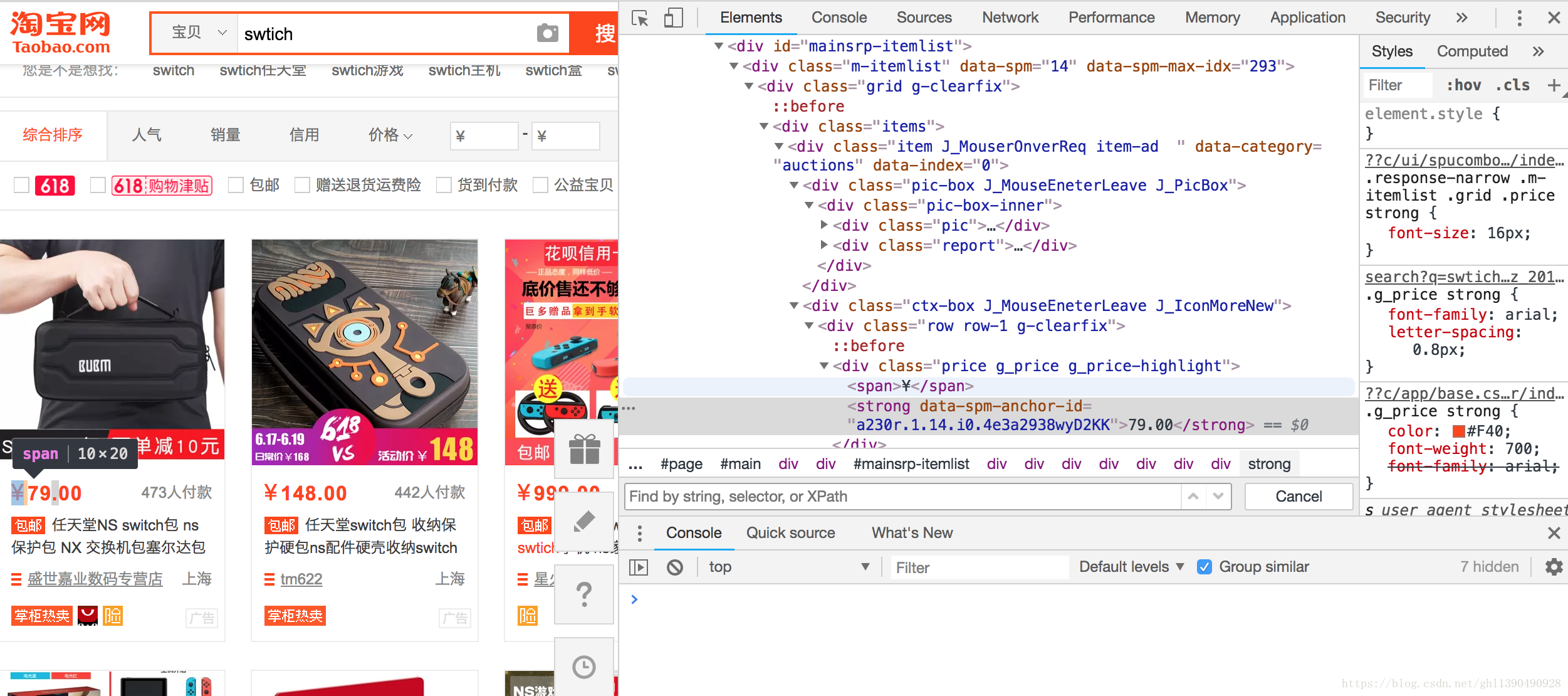

单个商品概览:

二、新建项目

scrapy startproject scrapyseleniumtest新建一个Spider

scrapy genspider taobao www.taobao.com三、定义Item对象(可以理解成初始化)

新建名为ProductItem的对象,建立与MongoDB的链接,将提取信息存放在名为products的Collections集合中。其中包含六个字段。

扫描二维码关注公众号,回复:

2602717 查看本文章

# -*- coding: utf-8 -*-

#Items.py

# Define here the models for your scraped items

#

# See documentation in:

# http://doc.scrapy.org/en/latest/topics/items.html

from scrapy import Item, Field

class ProductItem(Item):

collection = 'products'

image = Field() #图片链接

price = Field() #价格

deal = Field() #成交量

title = Field() #名称

shop = Field() #店铺

location = Field() #店铺所在地四、从调度器取出一个个URL链接构造出Request请求传给下载器,下载器把资源下载下来封装成Response

# -*- coding: utf-8 -*-

#中间件

from selenium import webdriver

from selenium.common.exceptions import TimeoutException

from selenium.webdriver.common.by import By

from selenium.webdriver.support.ui import WebDriverWait

from selenium.webdriver.support import expected_conditions as EC

from scrapy.http import HtmlResponse

from logging import getLogger

class SeleniumMiddleware():

def __init__(self, timeout=None, service_args=[]):

self.logger = getLogger(__name__)

self.timeout = timeout

self.browser = webdriver.PhantomJS(service_args=service_args)

self.browser.set_window_size(1400, 700)

self.browser.set_page_load_timeout(self.timeout)

self.wait = WebDriverWait(self.browser, self.timeout)

def __del__(self):

self.browser.close()

def process_request(self, request, spider):

"""

用PhantomJS抓取页面

:param request: Request对象

:param spider: Spider对象

:return: HtmlResponse

"""

self.logger.debug('PhantomJS is Starting')

page = request.meta.get('page', 1) #通过Request的meta属性获取当前需要爬取的页码

try:

self.browser.get(request.url) #调用PhantomJS对象的get()方法访问Request的对应的URL,相当于从Request对象里获取请求链接,然后再用PhantomJS加载,而不再使用Scrapy里的Downloader

if page > 1:

input = self.wait.until(

EC.presence_of_element_located((By.CSS_SELECTOR, '#mainsrp-pager div.form > input'))) #选择页码框

submit = self.wait.until(

EC.element_to_be_clickable((By.CSS_SELECTOR, '#mainsrp-pager div.form > span.btn.J_Submit'))) #确定按钮

input.clear()

input.send_keys(page)

submit.click()

self.wait.until(

EC.text_to_be_present_in_element((By.CSS_SELECTOR, '#mainsrp-pager li.item.active > span'), str(page))) #第一页

self.wait.until(EC.presence_of_element_located((By.CSS_SELECTOR, '.m-itemlist .items .item'))) #单个商品概览

return HtmlResponse(url=request.url, body=self.browser.page_source, request=request, encoding='utf-8',

status=200)#调用page_source属性获取页码的源代码,用它来直接构造并返回一个HtmlResponse对象,构造这个对象需要传入url,body等多个参数

except TimeoutException:

return HtmlResponse(url=request.url, status=500, request=request) #返回一个HtmlResponse对象。利用PhantomJS代替Scrapy完成页面的加载,最后将Response返回,回传给Spider内的回调函数进行解析

@classmethod

def from_crawler(cls, crawler):

return cls(timeout=crawler.settings.get('SELENIUM_TIMEOUT'),

service_args=crawler.settings.get('PHANTOMJS_SERVICE_ARGS'))五、设计Spider解析页面

通过第四步骤直接返回了一个HtmlResponse对象,它是Response的子类,返回之后会迭代调用每个Downloader Middleware的process_response()方法,进而将每个商品信息转化成HtmlResponse对象回传给Spider内的回到函数进行解析。

# -*- coding: utf-8 -*-#回调函数

from scrapy import Request, Spider

from urllib.parse import quote

from scrapyseleniumtest.items import ProductItem

class TaobaoSpider(Spider):

name = 'taobao'

allowed_domains = ['www.taobao.com']

base_url = 'https://s.taobao.com/search?q='

def start_requests(self):

for keyword in self.settings.get('KEYWORDS'):

for page in range(1, self.settings.get('MAX_PAGE') + 1):

url = self.base_url + quote(keyword)

yield Request(url=url, callback=self.parse, meta={'page': page}, dont_filter=True)

#对Downloader Middleware回传的Response对象进行解析,以下是实现该功能的回调函数

def parse(self, response):

products = response.xpath(

'//div[@id="mainsrp-itemlist"]//div[@class="items"][1]//div[contains(@class, "item")]')

for product in products:

item = ProductItem()

item['price'] = ''.join(product.xpath('.//div[contains(@class, "price")]//text()').extract()).strip()

item['title'] = ''.join(product.xpath('.//div[contains(@class, "title")]//text()').extract()).strip()

item['shop'] = ''.join(product.xpath('.//div[contains(@class, "shop")]//text()').extract()).strip()

item['image'] = ''.join(product.xpath('.//div[@class="pic"]//img[contains(@class, "img")]/@data-src').extract()).strip()

item['deal'] = product.xpath('.//div[contains(@class, "deal-cnt")]//text()').extract_first()

item['location'] = product.xpath('.//div[contains(@class, "location")]//text()').extract_first()

yield item六、存储结果

实现一个 Item Pipeline,将结果保存到MongoDB。

# -*- coding: utf-8 -*-

# Define your item pipelines here

#

# Don't forget to add your pipeline to the ITEM_PIPELINES setting

# See: http://doc.scrapy.org/en/latest/topics/item-pipeline.html

#当Spider解析完Response之后,Item会传递到Item Pipeline做数据清洗和存储操作

import pymongo

class MongoPipeline(object):

def __init__(self, mongo_uri, mongo_db):

self.mongo_uri = mongo_uri

self.mongo_db = mongo_db

@classmethod

def from_crawler(cls, crawler):

return cls(mongo_uri=crawler.settings.get('MONGO_URI'), mongo_db=crawler.settings.get('MONGO_DB'))

def open_spider(self, spider):

self.client = pymongo.MongoClient(self.mongo_uri)

self.db = self.client[self.mongo_db]

def process_item(self, item, spider):

self.db[item.collection].insert(dict(item))

return item

def close_spider(self, spider):

self.client.close()七、附加settings.py设置

# -*- coding: utf-8 -*-

# Scrapy settings for scrapyseleniumtest project

#

# For simplicity, this file contains only settings considered important or

# commonly used. You can find more settings consulting the documentation:

#

# http://doc.scrapy.org/en/latest/topics/settings.html

# http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html

# http://scrapy.readthedocs.org/en/latest/topics/spider-middleware.html

BOT_NAME = 'scrapyseleniumtest'

SPIDER_MODULES = ['scrapyseleniumtest.spiders']

NEWSPIDER_MODULE = 'scrapyseleniumtest.spiders'

# Crawl responsibly by identifying yourself (and your website) on the user-agent

# USER_AGENT = 'scrapyseleniumtest (+http://www.yourdomain.com)'

# Obey robots.txt rules

ROBOTSTXT_OBEY = False

# Configure maximum concurrent requests performed by Scrapy (default: 16)

# CONCURRENT_REQUESTS = 32

# Configure a delay for requests for the same website (default: 0)

# See http://scrapy.readthedocs.org/en/latest/topics/settings.html#download-delay

# See also autothrottle settings and docs

# DOWNLOAD_DELAY = 3

# The download delay setting will honor only one of:

# CONCURRENT_REQUESTS_PER_DOMAIN = 16

# CONCURRENT_REQUESTS_PER_IP = 16

# Disable cookies (enabled by default)

# COOKIES_ENABLED = False

# Disable Telnet Console (enabled by default)

# TELNETCONSOLE_ENABLED = False

# Override the default request headers:

# DEFAULT_REQUEST_HEADERS = {

# 'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',

# 'Accept-Language': 'en',

# }

# Enable or disable spider middlewares

# See http://scrapy.readthedocs.org/en/latest/topics/spider-middleware.html

# SPIDER_MIDDLEWARES = {

# 'scrapyseleniumtest.middlewares.ScrapyseleniumtestSpiderMiddleware': 543,

# }

# Enable or disable downloader middlewares

# See http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html

DOWNLOADER_MIDDLEWARES = {

'scrapyseleniumtest.middlewares.SeleniumMiddleware': 543,

}

# Enable or disable extensions

# See http://scrapy.readthedocs.org/en/latest/topics/extensions.html

# EXTENSIONS = {

# 'scrapy.extensions.telnet.TelnetConsole': None,

# }

# Configure item pipelines

# See http://scrapy.readthedocs.org/en/latest/topics/item-pipeline.html

ITEM_PIPELINES = {

'scrapyseleniumtest.pipelines.MongoPipeline': 300,

}

# Enable and configure the AutoThrottle extension (disabled by default)

# See http://doc.scrapy.org/en/latest/topics/autothrottle.html

# AUTOTHROTTLE_ENABLED = True

# The initial download delay

# AUTOTHROTTLE_START_DELAY = 5

# The maximum download delay to be set in case of high latencies

# AUTOTHROTTLE_MAX_DELAY = 60

# The average number of requests Scrapy should be sending in parallel to

# each remote server

# AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0

# Enable showing throttling stats for every response received:

# AUTOTHROTTLE_DEBUG = False

# Enable and configure HTTP caching (disabled by default)

# See http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings

# HTTPCACHE_ENABLED = True

# HTTPCACHE_EXPIRATION_SECS = 0

# HTTPCACHE_DIR = 'httpcache'

# HTTPCACHE_IGNORE_HTTP_CODES = []

# HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'

KEYWORDS = ['coser']

MAX_PAGE = 100

SELENIUM_TIMEOUT = 20

PHANTOMJS_SERVICE_ARGS = ['--load-images=false', '--disk-cache=true']

MONGO_URI = 'localhost'

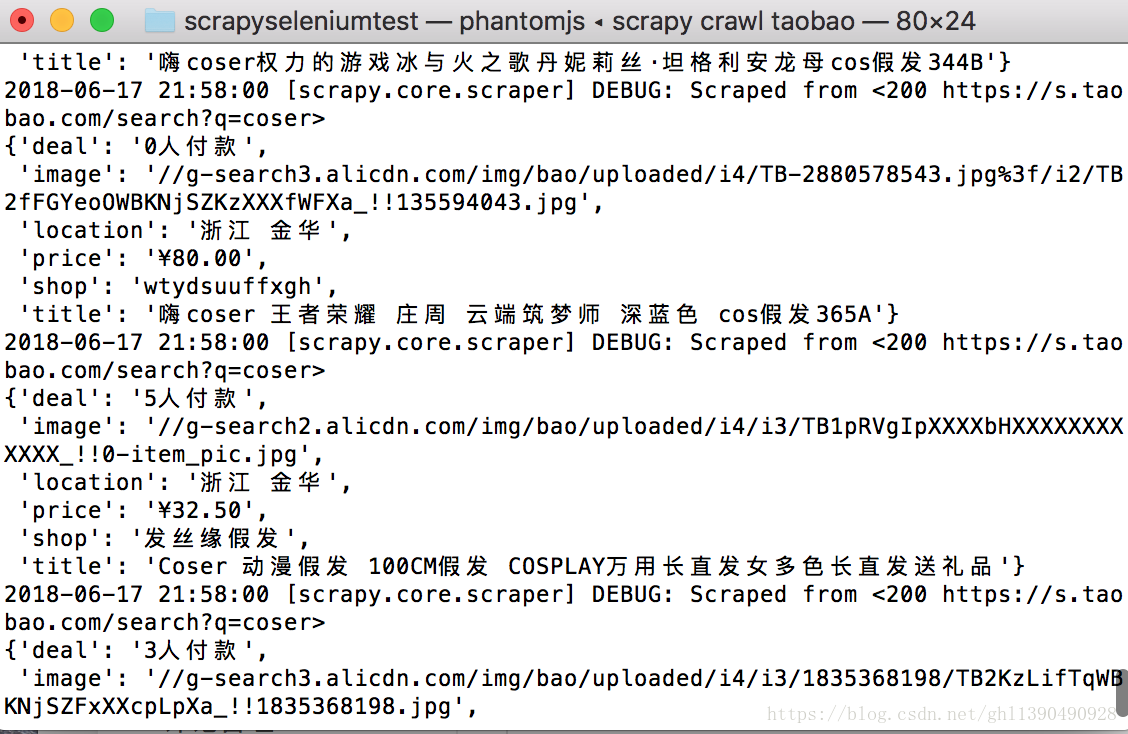

MONGO_DB = 'taobao'八、效果展示

命令行进入项目目录:

scrapy crawl taobao