Paper: Deep Residual Learning for Image Recognition

Translation: http://blog.csdn.net/wspba/article/details/57074389

Commentary blog post: https://blog.csdn.net/wspba/article/details/57074389

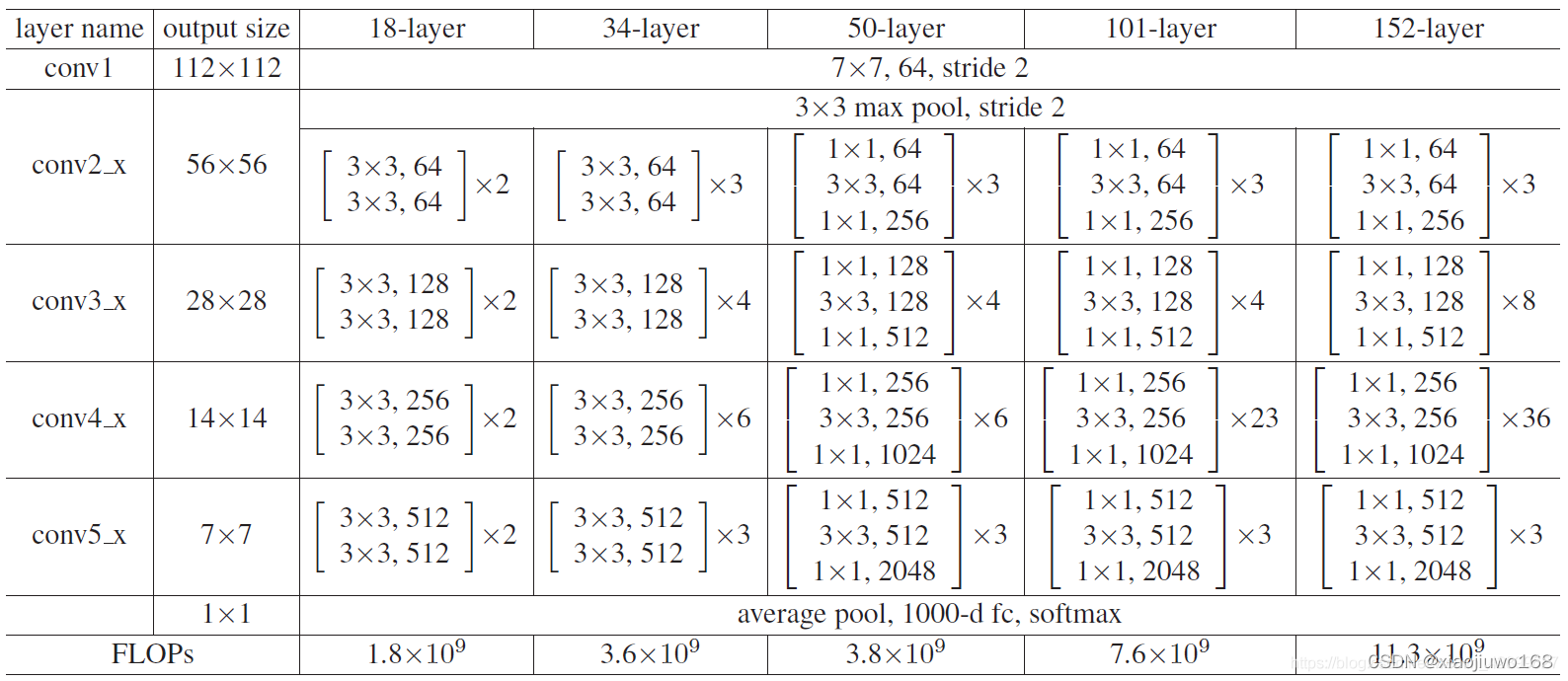

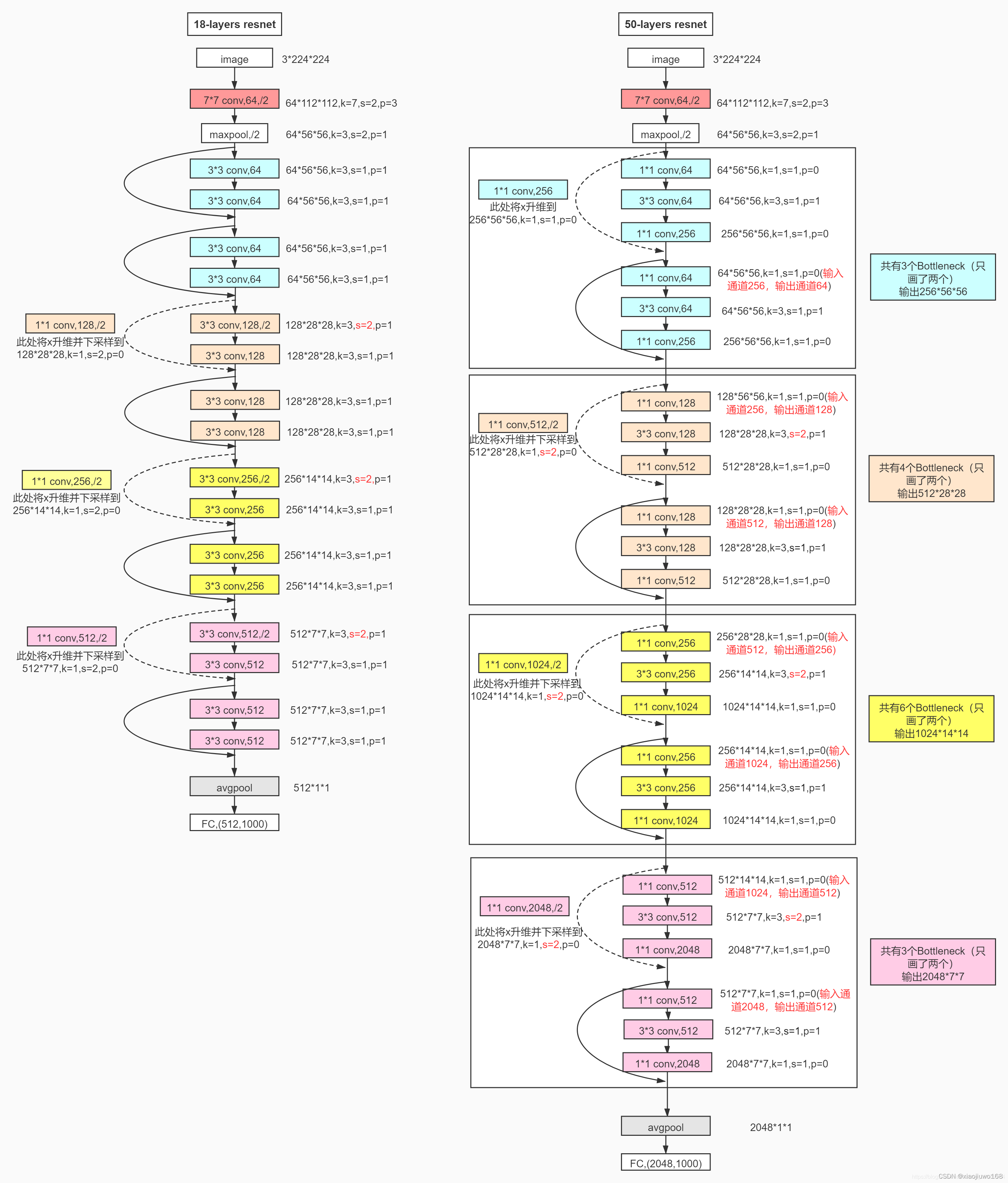

Introduction to the ResNet network:

Taking the ResNet-18 architecture as an example:

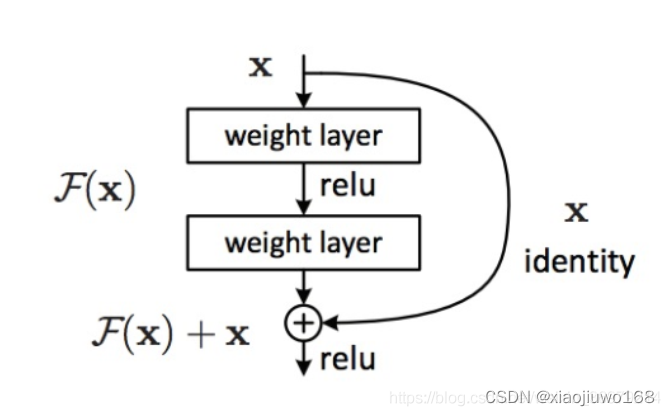

Basic block:
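For reference, the residual mapping defined in the paper can be written as the two equations shown here. The symbols follow the paper's notation and are not names from the code in this post: $\mathcal{F}$ is the stack of convolution and batch-norm layers inside a block, $\{W_i\}$ are its weights, and $W_s$ is a projection applied to the shortcut when the input and output shapes differ.

$$
\mathbf{y} = \mathcal{F}(\mathbf{x}, \{W_i\}) + \mathbf{x}
$$

$$
\mathbf{y} = \mathcal{F}(\mathbf{x}, \{W_i\}) + W_s \mathbf{x}
$$

In both cases a ReLU is applied after the addition. The first form corresponds to `RestNetBasicBlock` (identity shortcut), and the second to `RestNetDownBlock`, where the 1x1 convolution plus batch norm in `extra` plays the role of $W_s$.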

Code implementation:

```python
import torch
import torch.nn as nn
from torch.nn import functional as F


class RestNetBasicBlock(nn.Module):
    """Residual block with an identity shortcut (input and output shapes match)."""

    def __init__(self, in_channels, out_channels, stride):
        super(RestNetBasicBlock, self).__init__()
        self.conv1 = nn.Conv2d(in_channels, out_channels, kernel_size=3, stride=stride, padding=1)
        self.bn1 = nn.BatchNorm2d(out_channels)
        self.conv2 = nn.Conv2d(out_channels, out_channels, kernel_size=3, stride=stride, padding=1)
        self.bn2 = nn.BatchNorm2d(out_channels)

    def forward(self, x):
        output = self.conv1(x)
        output = F.relu(self.bn1(output))
        output = self.conv2(output)
        output = self.bn2(output)
        # Identity shortcut: only valid when stride == 1 and in_channels == out_channels
        return F.relu(x + output)


class RestNetDownBlock(nn.Module):
    """Residual block that downsamples; the shortcut is a 1x1 conv projection."""

    def __init__(self, in_channels, out_channels, stride):
        super(RestNetDownBlock, self).__init__()
        # stride is a pair: stride[0] for conv1 and the shortcut, stride[1] for conv2
        self.conv1 = nn.Conv2d(in_channels, out_channels, kernel_size=3, stride=stride[0], padding=1)
        self.bn1 = nn.BatchNorm2d(out_channels)
        self.conv2 = nn.Conv2d(out_channels, out_channels, kernel_size=3, stride=stride[1], padding=1)
        self.bn2 = nn.BatchNorm2d(out_channels)
        # Projection shortcut: matches the channel count and resolution of the main path
        self.extra = nn.Sequential(
            nn.Conv2d(in_channels, out_channels, kernel_size=1, stride=stride[0], padding=0),
            nn.BatchNorm2d(out_channels)
        )

    def forward(self, x):
        extra_x = self.extra(x)
        output = self.conv1(x)
        out = F.relu(self.bn1(output))
        out = self.conv2(out)
        out = self.bn2(out)
        return F.relu(extra_x + out)


class RestNet18(nn.Module):
    def __init__(self):
        super(RestNet18, self).__init__()
        self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3)
        self.bn1 = nn.BatchNorm2d(64)
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)

        self.layer1 = nn.Sequential(RestNetBasicBlock(64, 64, 1),
                                    RestNetBasicBlock(64, 64, 1))
        self.layer2 = nn.Sequential(RestNetDownBlock(64, 128, [2, 1]),
                                    RestNetBasicBlock(128, 128, 1))
        self.layer3 = nn.Sequential(RestNetDownBlock(128, 256, [2, 1]),
                                    RestNetBasicBlock(256, 256, 1))
        self.layer4 = nn.Sequential(RestNetDownBlock(256, 512, [2, 1]),
                                    RestNetBasicBlock(512, 512, 1))

        self.avgpool = nn.AdaptiveAvgPool2d(output_size=(1, 1))
        self.fc = nn.Linear(512, 10)  # 10 output classes for CIFAR-10

    def forward(self, x):
        # Stem: 7x7 conv -> BN -> ReLU -> max-pool
        out = self.conv1(x)
        out = F.relu(self.bn1(out))
        out = self.maxpool(out)
        out = self.layer1(out)
        out = self.layer2(out)
        out = self.layer3(out)
        out = self.layer4(out)
        out = self.avgpool(out)
        # Flatten [b, 512, 1, 1] to [b, 512] for the classifier
        out = out.reshape(x.shape[0], -1)
        out = self.fc(out)
        return out
```
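To see how the feature-map resolution shrinks through the network, the layer hyperparameters above can be plugged into the standard convolution output-size formula, `floor((n + 2p - k) / s) + 1`. The sketch below is plain Python and does not depend on PyTorch; `conv_out` and `trace_resnet18` are hypothetical helper names introduced only for illustration. It assumes the max-pool layer defined in `__init__` is applied after `conv1`; pass `use_maxpool=False` to trace a forward pass that skips it.

```python
def conv_out(size, kernel, stride, padding):
    """Output side length of a conv or pooling layer (dilation 1, floor rounding)."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_resnet18(size=32, use_maxpool=True):
    """Trace the spatial side length of the feature map through each stage."""
    shapes = {}
    # conv1: 7x7 kernel, stride 2, padding 3
    size = conv_out(size, kernel=7, stride=2, padding=3)
    shapes["conv1"] = size
    if use_maxpool:
        # maxpool: 3x3 kernel, stride 2, padding 1
        size = conv_out(size, kernel=3, stride=2, padding=1)
        shapes["maxpool"] = size
    # layer1: all convolutions use stride 1 with padding 1, so the size is unchanged
    shapes["layer1"] = size
    # layer2..layer4: the first 3x3 conv of each stage uses stride 2
    for name in ("layer2", "layer3", "layer4"):
        size = conv_out(size, kernel=3, stride=2, padding=1)
        shapes[name] = size
    return shapes


if __name__ == "__main__":
    print(trace_resnet18(32))   # CIFAR-10 sized input
    print(trace_resnet18(224))  # ImageNet sized input
```

For a 32x32 CIFAR-10 image the side length reaches 1 by `layer4` when the max-pool is applied, so the adaptive average pool has nothing left to average. For a 224x224 input the final feature map is 7x7.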

The network is used to classify the CIFAR-10 dataset.

Dataset:

Official site: CIFAR-10 DATASET

Test code:

```python
import torch
from torch import nn, optim
import torchvision.transforms as transforms
from torchvision import datasets
from torch.utils.data import DataLoader

from restnet18.restnet18 import RestNet18


# Run the experiment on the CIFAR-10 dataset
def main():
    batchsz = 128

    cifar_train = datasets.CIFAR10('cifar', True, transform=transforms.Compose([
        transforms.Resize((32, 32)),
        transforms.ToTensor(),
        # ImageNet channel statistics
        transforms.Normalize(mean=[0.485, 0.456, 0.406],
                             std=[0.229, 0.224, 0.225])
    ]), download=True)
    cifar_train = DataLoader(cifar_train, batch_size=batchsz, shuffle=True)

    cifar_test = datasets.CIFAR10('cifar', False, transform=transforms.Compose([
        transforms.Resize((32, 32)),
        transforms.ToTensor(),
        transforms.Normalize(mean=[0.485, 0.456, 0.406],
                             std=[0.229, 0.224, 0.225])
    ]), download=True)
    # Evaluation does not need shuffling
    cifar_test = DataLoader(cifar_test, batch_size=batchsz, shuffle=False)

    # Peek at one batch to check the tensor shapes
    x, label = next(iter(cifar_train))
    print('x:', x.shape, 'label:', label.shape)

    # Fall back to the CPU when no GPU is available
    device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
    # model = Lenet5().to(device)
    model = RestNet18().to(device)

    criteon = nn.CrossEntropyLoss().to(device)
    optimizer = optim.Adam(model.parameters(), lr=1e-3)
    print(model)

    for epoch in range(1000):

        model.train()
        for batchidx, (x, label) in enumerate(cifar_train):
            # x: [b, 3, 32, 32]
            # label: [b]
            x, label = x.to(device), label.to(device)

            logits = model(x)
            # logits: [b, 10]
            # label: [b]
            # loss: tensor scalar
            loss = criteon(logits, label)

            # backprop
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()

        # Loss of the last batch in this epoch
        print(epoch, 'loss:', loss.item())

        model.eval()
        with torch.no_grad():
            # test
            total_correct = 0
            total_num = 0
            for x, label in cifar_test:
                # x: [b, 3, 32, 32]
                # label: [b]
                x, label = x.to(device), label.to(device)

                # [b, 10]
                logits = model(x)
                # [b]
                pred = logits.argmax(dim=1)
                # [b] vs [b] => scalar tensor
                correct = torch.eq(pred, label).float().sum().item()
                total_correct += correct
                total_num += x.size(0)
                # print(correct)

            acc = total_correct / total_num
            print(epoch, 'test acc:', acc)


if __name__ == '__main__':
    main()
```
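As a rough guide to what one epoch of this loop involves, the number of optimizer steps follows from the dataset size and the batch size. CIFAR-10 has 50,000 training images and 10,000 test images, and `DataLoader` keeps the final partial batch by default (`drop_last=False`). The sketch below is plain Python; `batches_per_epoch` and `last_batch_size` are hypothetical helper names used only for this illustration.

```python
import math


def batches_per_epoch(num_samples, batch_size, drop_last=False):
    """Number of batches a loader yields per pass over the data."""
    if drop_last:
        return num_samples // batch_size
    return math.ceil(num_samples / batch_size)


def last_batch_size(num_samples, batch_size):
    """Size of the final batch when the partial batch is kept."""
    remainder = num_samples % batch_size
    return remainder if remainder else batch_size


if __name__ == "__main__":
    batchsz = 128
    print("train batches:", batches_per_epoch(50_000, batchsz))
    print("test batches:", batches_per_epoch(10_000, batchsz))
    print("last train batch size:", last_batch_size(50_000, batchsz))
```

With a batch size of 128 this gives 391 training batches and 79 test batches per epoch. The loss printed at the end of each epoch comes from the final training batch, which holds only 80 images, so it is a noisier signal than an average over the whole epoch.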

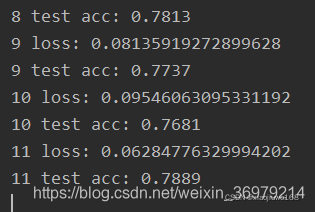

Results: