jsoup爬虫

1、导入pom依赖

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd"> <modelVersion>4.0.0</modelVersion> <groupId>com.javaxl</groupId> <artifactId>T226_jsoup</artifactId> <version>0.0.1-SNAPSHOT</version> <packaging>jar</packaging> <name>T226_jsoup</name> <url>http://maven.apache.org</url> <properties> <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding> </properties> <dependencies> <!-- jdbc驱动包 --> <dependency> <groupId>mysql</groupId> <artifactId>mysql-connector-java</artifactId> <version>5.1.44</version> </dependency> <!-- 添加Httpclient支持 --> <dependency> <groupId>org.apache.httpcomponents</groupId> <artifactId>httpclient</artifactId> <version>4.5.2</version> </dependency> <!-- 添加jsoup支持 --> <dependency> <groupId>org.jsoup</groupId> <artifactId>jsoup</artifactId> <version>1.10.1</version> </dependency> <!-- 添加日志支持 --> <dependency> <groupId>log4j</groupId> <artifactId>log4j</artifactId> <version>1.2.16</version> </dependency> <!-- 添加ehcache支持 --> <dependency> <groupId>net.sf.ehcache</groupId> <artifactId>ehcache</artifactId> <version>2.10.3</version> </dependency> <!-- 添加commons io支持 --> <dependency> <groupId>commons-io</groupId> <artifactId>commons-io</artifactId> <version>2.5</version> </dependency> <dependency> <groupId>com.alibaba</groupId> <artifactId>fastjson</artifactId> <version>1.2.47</version> </dependency> </dependencies> </project>

2、网站爬取--BlogCrawlerStarter

1 package com.javaxl.crawler; 2 3 import java.io.File; 4 import java.io.IOException; 5 import java.sql.Connection; 6 import java.sql.PreparedStatement; 7 import java.sql.SQLException; 8 import java.util.HashMap; 9 import java.util.List; 10 import java.util.Map; 11 import java.util.UUID; 12 13 import org.apache.commons.io.FileUtils; 14 import org.apache.http.HttpEntity; 15 import org.apache.http.client.ClientProtocolException; 16 import org.apache.http.client.config.RequestConfig; 17 import org.apache.http.client.methods.CloseableHttpResponse; 18 import org.apache.http.client.methods.HttpGet; 19 import org.apache.http.impl.client.CloseableHttpClient; 20 import org.apache.http.impl.client.HttpClients; 21 import org.apache.http.util.EntityUtils; 22 import org.apache.log4j.Logger; 23 import org.jsoup.Jsoup; 24 import org.jsoup.nodes.Document; 25 import org.jsoup.nodes.Element; 26 import org.jsoup.select.Elements; 27 28 import com.javaxl.util.DateUtil; 29 import com.javaxl.util.DbUtil; 30 import com.javaxl.util.PropertiesUtil; 31 32 import net.sf.ehcache.Cache; 33 import net.sf.ehcache.CacheManager; 34 import net.sf.ehcache.Status; 35 36 /** 37 * @author Administrator 38 * 39 */ 40 public class BlogCrawlerStarter { 41 42 private static Logger logger = Logger.getLogger(BlogCrawlerStarter.class); 43 // https://www.csdn.net/nav/newarticles 44 private static String HOMEURL = "https://www.cnblogs.com/"; 45 private static CloseableHttpClient httpClient; 46 private static Connection con; 47 private static CacheManager cacheManager; 48 private static Cache cache; 49 50 /** 51 * httpclient解析首页,获取首页内容 52 */ 53 public static void parseHomePage() { 54 logger.info("开始爬取首页:" + HOMEURL); 55 56 cacheManager = CacheManager.create(PropertiesUtil.getValue("ehcacheXmlPath")); 57 cache = cacheManager.getCache("cnblog"); 58 59 httpClient = HttpClients.createDefault(); 60 HttpGet httpGet = new HttpGet(HOMEURL); 61 RequestConfig config = RequestConfig.custom().setConnectTimeout(5000).setSocketTimeout(8000).build(); 62 httpGet.setConfig(config); 63 CloseableHttpResponse response = null; 64 try { 65 response = httpClient.execute(httpGet); 66 if (response == null) { 67 logger.info(HOMEURL + ":爬取无响应"); 68 return; 69 } 70 71 if (response.getStatusLine().getStatusCode() == 200) { 72 HttpEntity entity = response.getEntity(); 73 String homePageContent = EntityUtils.toString(entity, "utf-8"); 74 // System.out.println(homePageContent); 75 parseHomePageContent(homePageContent); 76 } 77 78 } catch (ClientProtocolException e) { 79 logger.error(HOMEURL + "-ClientProtocolException", e); 80 } catch (IOException e) { 81 logger.error(HOMEURL + "-IOException", e); 82 } finally { 83 try { 84 if (response != null) { 85 response.close(); 86 } 87 88 if (httpClient != null) { 89 httpClient.close(); 90 } 91 } catch (IOException e) { 92 logger.error(HOMEURL + "-IOException", e); 93 } 94 } 95 96 if(cache.getStatus() == Status.STATUS_ALIVE) { 97 cache.flush(); 98 } 99 cacheManager.shutdown(); 100 logger.info("结束爬取首页:" + HOMEURL); 101 102 } 103 104 /** 105 * 通过网络爬虫框架jsoup,解析网页类容,获取想要数据(博客的连接) 106 * 107 * @param homePageContent 108 */ 109 private static void parseHomePageContent(String homePageContent) { 110 Document doc = Jsoup.parse(homePageContent); 111 //#feedlist_id .list_con .title h2 a 112 Elements aEles = doc.select("#post_list .post_item .post_item_body h3 a"); 113 for (Element aEle : aEles) { 114 // 这个是首页中的博客列表中的单个链接URL 115 String blogUrl = aEle.attr("href"); 116 if (null == blogUrl || "".equals(blogUrl)) { 117 logger.info("该博客未内容,不再爬取插入数据库!"); 118 continue; 119 } 120 if(cache.get(blogUrl) != null) { 121 logger.info("该数据已经被爬取到数据库中,数据库不再收录!"); 122 continue; 123 } 124 // System.out.println("************************"+blogUrl+"****************************"); 125 126 parseBlogUrl(blogUrl); 127 } 128 } 129 130 /** 131 * 通过博客地址获取博客的标题,以及博客的类容 132 * 133 * @param blogUrl 134 */ 135 private static void parseBlogUrl(String blogUrl) { 136 137 logger.info("开始爬取博客网页:" + blogUrl); 138 httpClient = HttpClients.createDefault(); 139 HttpGet httpGet = new HttpGet(blogUrl); 140 RequestConfig config = RequestConfig.custom().setConnectTimeout(5000).setSocketTimeout(8000).build(); 141 httpGet.setConfig(config); 142 CloseableHttpResponse response = null; 143 try { 144 response = httpClient.execute(httpGet); 145 if (response == null) { 146 logger.info(blogUrl + ":爬取无响应"); 147 return; 148 } 149 150 if (response.getStatusLine().getStatusCode() == 200) { 151 HttpEntity entity = response.getEntity(); 152 String blogContent = EntityUtils.toString(entity, "utf-8"); 153 parseBlogContent(blogContent, blogUrl); 154 } 155 156 } catch (ClientProtocolException e) { 157 logger.error(blogUrl + "-ClientProtocolException", e); 158 } catch (IOException e) { 159 logger.error(blogUrl + "-IOException", e); 160 } finally { 161 try { 162 if (response != null) { 163 response.close(); 164 } 165 } catch (IOException e) { 166 logger.error(blogUrl + "-IOException", e); 167 } 168 } 169 170 logger.info("结束爬取博客网页:" + HOMEURL); 171 172 } 173 174 /** 175 * 解析博客类容,获取博客中标题以及所有内容 176 * 177 * @param blogContent 178 */ 179 private static void parseBlogContent(String blogContent, String link) { 180 Document doc = Jsoup.parse(blogContent); 181 if(!link.contains("ansion2014")) { 182 System.out.println(blogContent); 183 } 184 Elements titleEles = doc 185 //#mainBox main .blog-content-box .article-header-box .article-header .article-title-box h1 186 .select("#topics .post h1 a"); 187 System.out.println("123"); 188 System.out.println(titleEles.toString()); 189 System.out.println("123"); 190 if (titleEles.size() == 0) { 191 logger.info("博客标题为空,不插入数据库!"); 192 return; 193 } 194 String title = titleEles.get(0).html(); 195 196 Elements blogContentEles = doc.select("#cnblogs_post_body "); 197 if (blogContentEles.size() == 0) { 198 logger.info("博客内容为空,不插入数据库!"); 199 return; 200 } 201 String blogContentBody = blogContentEles.get(0).html(); 202 203 // Elements imgEles = doc.select("img"); 204 // List<String> imgUrlList = new LinkedList<String>(); 205 // if(imgEles.size() > 0) { 206 // for (Element imgEle : imgEles) { 207 // imgUrlList.add(imgEle.attr("src")); 208 // } 209 // } 210 // 211 // if(imgUrlList.size() > 0) { 212 // Map<String, String> replaceUrlMap = downloadImgList(imgUrlList); 213 // blogContent = replaceContent(blogContent,replaceUrlMap); 214 // } 215 216 String sql = "insert into `t_jsoup_article` values(null,?,?,null,now(),0,0,null,?,0,null)"; 217 try { 218 PreparedStatement pst = con.prepareStatement(sql); 219 pst.setObject(1, title); 220 pst.setObject(2, blogContentBody); 221 pst.setObject(3, link); 222 if(pst.executeUpdate() == 0) { 223 logger.info("爬取博客信息插入数据库失败"); 224 }else { 225 cache.put(new net.sf.ehcache.Element(link, link)); 226 logger.info("爬取博客信息插入数据库成功"); 227 } 228 } catch (SQLException e) { 229 logger.error("数据异常-SQLException:",e); 230 } 231 } 232 233 /** 234 * 将别人博客内容进行加工,将原有图片地址换成本地的图片地址 235 * @param blogContent 236 * @param replaceUrlMap 237 * @return 238 */ 239 private static String replaceContent(String blogContent, Map<String, String> replaceUrlMap) { 240 for(Map.Entry<String, String> entry: replaceUrlMap.entrySet()) { 241 blogContent = blogContent.replace(entry.getKey(), entry.getValue()); 242 } 243 return blogContent; 244 } 245 246 /** 247 * 别人服务器图片本地化 248 * @param imgUrlList 249 * @return 250 */ 251 private static Map<String, String> downloadImgList(List<String> imgUrlList) { 252 Map<String, String> replaceMap = new HashMap<String, String>(); 253 for (String imgUrl : imgUrlList) { 254 CloseableHttpClient httpClient = HttpClients.createDefault(); 255 HttpGet httpGet = new HttpGet(imgUrl); 256 RequestConfig config = RequestConfig.custom().setConnectTimeout(5000).setSocketTimeout(8000).build(); 257 httpGet.setConfig(config); 258 CloseableHttpResponse response = null; 259 try { 260 response = httpClient.execute(httpGet); 261 if (response == null) { 262 logger.info(HOMEURL + ":爬取无响应"); 263 }else { 264 if (response.getStatusLine().getStatusCode() == 200) { 265 HttpEntity entity = response.getEntity(); 266 String blogImagesPath = PropertiesUtil.getValue("blogImages"); 267 String dateDir = DateUtil.getCurrentDatePath(); 268 String uuid = UUID.randomUUID().toString(); 269 String subfix = entity.getContentType().getValue().split("/")[1]; 270 String fileName = blogImagesPath + dateDir + "/" + uuid + "." + subfix; 271 272 FileUtils.copyInputStreamToFile(entity.getContent(), new File(fileName)); 273 replaceMap.put(imgUrl, fileName); 274 } 275 } 276 } catch (ClientProtocolException e) { 277 logger.error(imgUrl + "-ClientProtocolException", e); 278 } catch (IOException e) { 279 logger.error(imgUrl + "-IOException", e); 280 } catch (Exception e) { 281 logger.error(imgUrl + "-Exception", e); 282 } finally { 283 try { 284 if (response != null) { 285 response.close(); 286 } 287 } catch (IOException e) { 288 logger.error(imgUrl + "-IOException", e); 289 } 290 } 291 292 } 293 return replaceMap; 294 } 295 296 public static void start() { 297 while(true) { 298 DbUtil dbUtil = new DbUtil(); 299 try { 300 con = dbUtil.getCon(); 301 parseHomePage(); 302 } catch (Exception e) { 303 logger.error("数据库连接势失败!"); 304 } finally { 305 try { 306 if (con != null) { 307 con.close(); 308 } 309 } catch (SQLException e) { 310 logger.error("数据关闭异常-SQLException:",e); 311 } 312 } 313 try { 314 Thread.sleep(1000*60); 315 } catch (InterruptedException e) { 316 logger.error("主线程休眠异常-InterruptedException:",e); 317 } 318 } 319 } 320 321 public static void main(String[] args) { 322 start(); 323 } 324 }

博客园首页信息图片

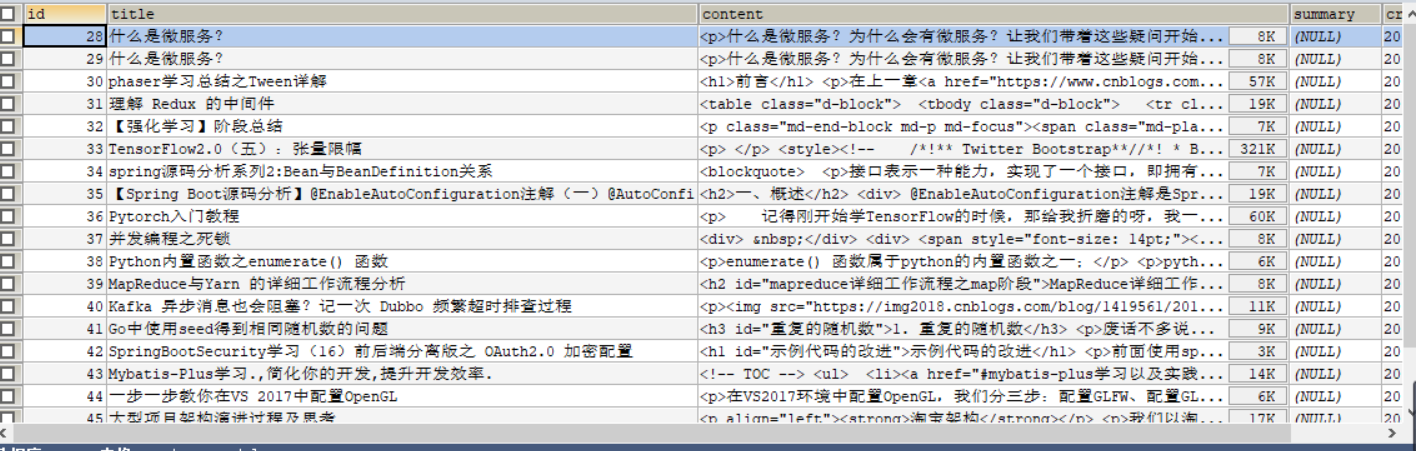

爬取到的数据

3、简单图片爬取 --DownloadImg

package com.javaxl.crawler; import java.io.File; import java.io.IOException; import java.util.UUID; import org.apache.commons.io.FileUtils; import org.apache.http.HttpEntity; import org.apache.http.client.ClientProtocolException; import org.apache.http.client.config.RequestConfig; import org.apache.http.client.methods.CloseableHttpResponse; import org.apache.http.client.methods.HttpGet; import org.apache.http.impl.client.CloseableHttpClient; import org.apache.http.impl.client.HttpClients; import org.apache.log4j.Logger; import com.javaxl.util.DateUtil; import com.javaxl.util.PropertiesUtil; public class DownloadImg { private static Logger logger = Logger.getLogger(DownloadImg.class); private static String URL = "http://photocdn.sohu.com/20120625/Img346436473.jpg"; public static void main(String[] args) { logger.info("开始爬取首页:" + URL); CloseableHttpClient httpClient = HttpClients.createDefault(); HttpGet httpGet = new HttpGet(URL); RequestConfig config = RequestConfig.custom().setConnectTimeout(5000).setSocketTimeout(8000).build(); httpGet.setConfig(config); CloseableHttpResponse response = null; try { response = httpClient.execute(httpGet); if (response == null) { logger.info("连接超时!!!"); } else { HttpEntity entity = response.getEntity(); String imgPath = PropertiesUtil.getValue("blogImages"); String dateDir = DateUtil.getCurrentDatePath(); String uuid = UUID.randomUUID().toString(); String subfix = entity.getContentType().getValue().split("/")[1]; String localFile = imgPath+dateDir+"/"+uuid+"."+subfix; // System.out.println(localFile); FileUtils.copyInputStreamToFile(entity.getContent(), new File(localFile)); } } catch (ClientProtocolException e) { logger.error(URL+"-ClientProtocolException", e); } catch (IOException e) { logger.error(URL+"-IOException", e); } catch (Exception e) { logger.error(URL+"-Exception", e); } finally { try { if (response != null) { response.close(); } if(httpClient != null) { httpClient.close(); } } catch (IOException e) { logger.error(URL+"-IOException", e); } } logger.info("结束首页爬取:" + URL); } }

爬取图片样式

爬取结果