Receptive Field Block Net for Accurate and Fast Object Detection

Introduction

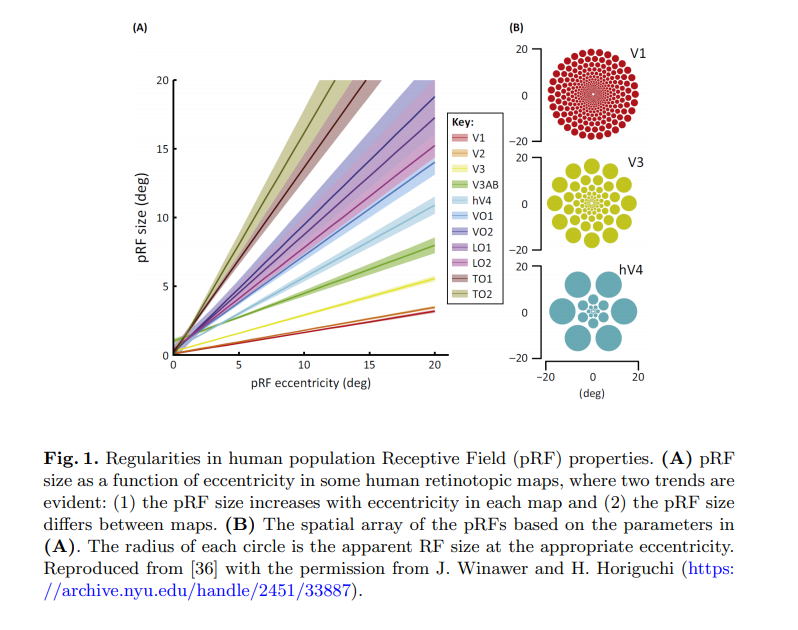

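This paper builds on SSD and proposes the RFB Module, using prior knowledge from neuroscience to explain the resulting improvement. In essence, it designs a new structure that enlarges the receptive field. The paper points out a property of receptive fields in the human retina: the farther a region is from the center of gaze, the larger its receptive field, and the closer it is to the center, the smaller its receptive field. The RFB Module is designed to mimic this property of human vision.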

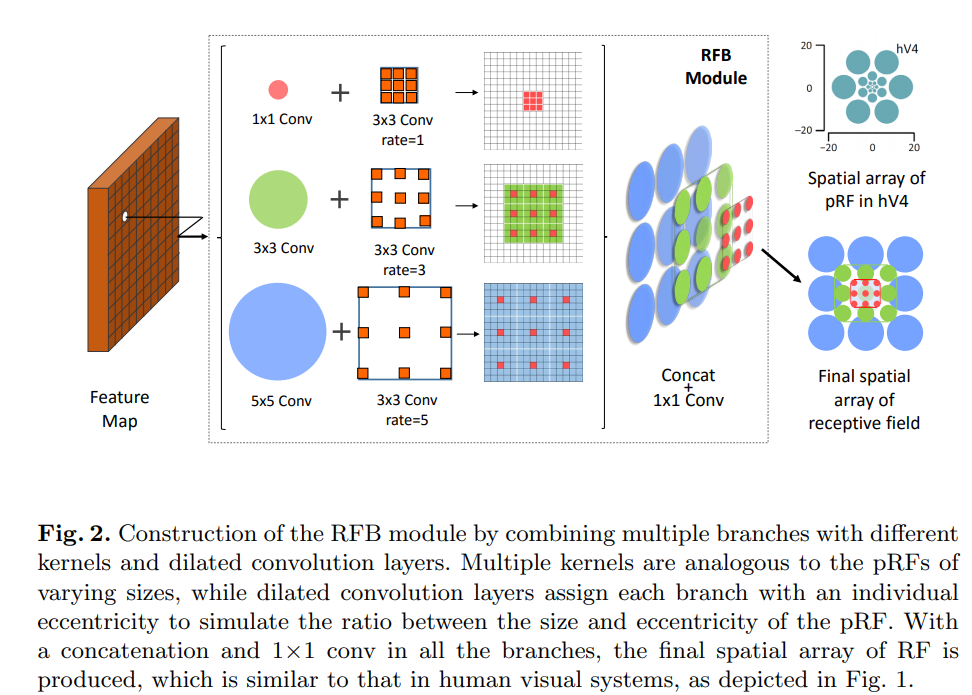

RFB Module

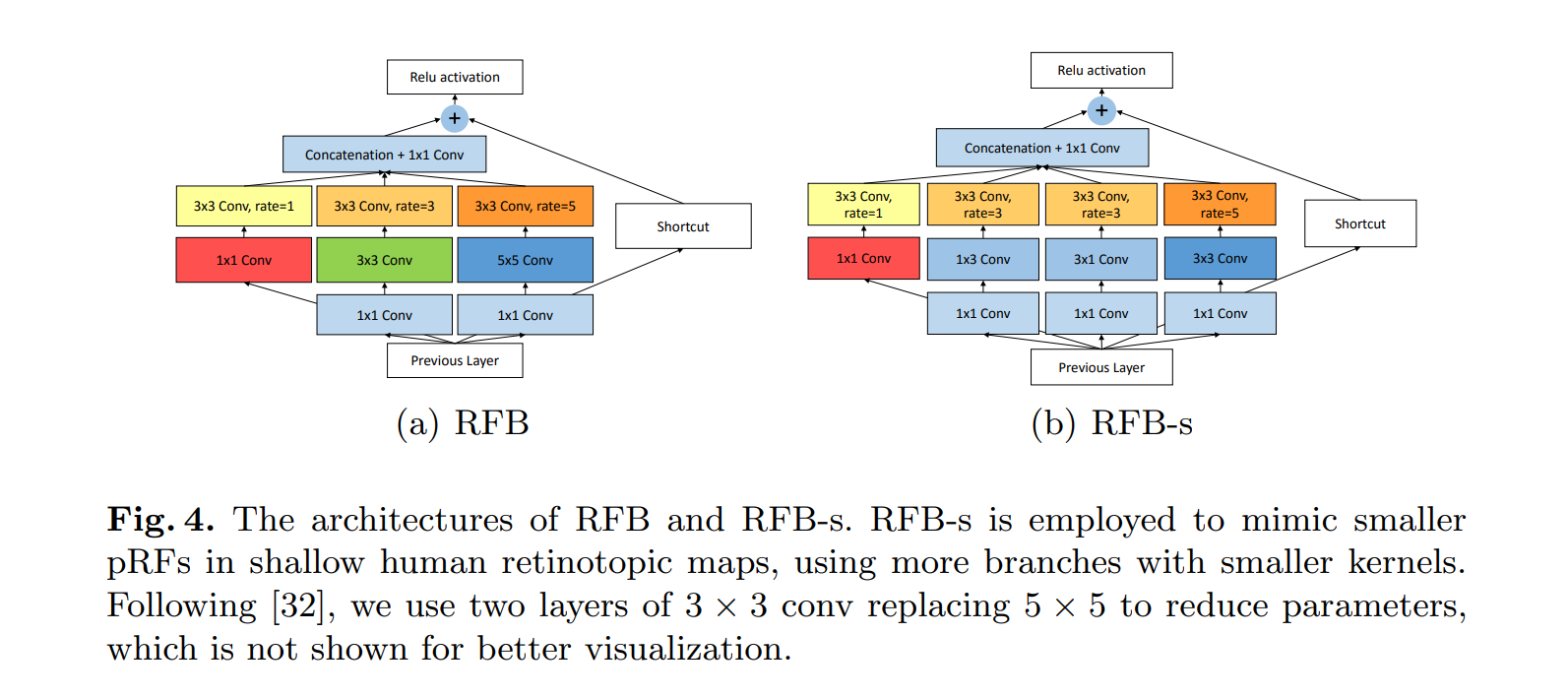

The structure of the module is shown in the figure.

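Why use dilated convolution?

To enlarge the receptive field, the intuitive options are to stack more layers, to use larger kernels, or to apply pooling before the convolution. Stacking more layers adds parameters, which rules out lightweight models; larger kernels add parameters as well; pooling adds no parameters but discards information, which hurts what later layers can use. So the authors naturally turn to dilated convolution, which enlarges the receptive field without increasing the parameter count: a k×k kernel with dilation d covers d(k-1)+1 pixels per side while keeping the same k×k weights.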
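The trade-off above can be checked with a little arithmetic. The sketch below is illustrative only: the helper names and the channel width of 64 are assumptions, not taken from the paper. It compares a dilated 3×3 kernel with a dense kernel that covers the same area.

```python
def effective_kernel_size(kernel_size: int, dilation: int) -> int:
    """Spatial extent covered by a dilated kernel along one axis."""
    return dilation * (kernel_size - 1) + 1


def conv_param_count(in_channels: int, out_channels: int, kernel_size: int) -> int:
    """Weights plus biases of a square 2D convolution.

    Dilation does not appear here because it spreads the taps apart
    without adding any.
    """
    return in_channels * out_channels * kernel_size * kernel_size + out_channels


channels = 64  # assumed channel width, chosen only for illustration

for dilation in (1, 3, 5):
    extent = effective_kernel_size(3, dilation)
    dilated = conv_param_count(channels, channels, 3)
    dense = conv_param_count(channels, channels, extent)
    print(f"3x3 with dilation {dilation}: covers {extent}x{extent}, "
          f"{dilated} params vs {dense} for a dense {extent}x{extent} kernel")
```

With dilation 3 the 3×3 kernel spans 7×7 pixels and with dilation 5 it spans 11×11, yet the parameter count stays that of a plain 3×3 convolution.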

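Why use a multi-branch structure?

The goal is to capture information at several receptive field sizes. As mentioned earlier, in human vision the receptive field varies with distance from the center of the visual field. With a multi-branch structure, each branch captures one receptive field size, and concatenating the branch outputs fuses them, which mimics that behavior. The authors also provide a figure to illustrate this.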
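To make "one receptive field per branch" concrete, here is a minimal sketch that computes the receptive field of each branch. The branch layouts are taken from the RFBModule reproduction code in this post, not from the paper's official implementation, and the helper name is hypothetical.

```python
def stacked_receptive_field(layers):
    """Receptive field (one axis) of a stack of stride-1 convolutions.

    `layers` is a list of (kernel_size, dilation) pairs; each layer
    widens the field by dilation * (kernel_size - 1).
    """
    field = 1
    for kernel_size, dilation in layers:
        field += dilation * (kernel_size - 1)
    return field


# Branch layouts assumed from the RFBModule code in this post.
rfb_branches = {
    "branch 1": [(1, 1), (3, 1)],          # 1x1 -> 3x3, dilation 1
    "branch 2": [(1, 1), (3, 1), (3, 3)],  # 1x1 -> 3x3 -> 3x3, dilation 3
    "branch 3": [(1, 1), (5, 1), (3, 5)],  # 1x1 -> 5x5 -> 3x3, dilation 5
}

for name, layers in rfb_branches.items():
    print(name, stacked_receptive_field(layers))
```

Under these assumptions the three branches see 3, 9, and 15 pixels per side, so the concatenated output mixes small, medium, and large receptive fields at every position.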

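Why propose two versions of RFB?

The structure on the left is the original RFB. The one on the right (RFB-s) replaces the 3×3 conv with two branches, one 1×3 and one 3×1. This reduces the parameter count and adds smaller receptive fields, which again mimics the human visual system by capturing finer receptive fields. In the reproduction code, RFB-s also uses a 3×3 conv where RFB uses a 5×5 conv.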
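Both effects of the 1×3 and 3×1 branches can be sketched numerically. The branch layouts below are assumed from the RFBsModule reproduction code in this post, the channel width of 64 is illustrative, and the helper names are hypothetical.

```python
def receptive_field_2d(layers):
    """(height, width) receptive field of a stack of stride-1 convolutions.

    `layers` holds ((kernel_h, kernel_w), dilation) entries.
    """
    height = width = 1
    for (kernel_h, kernel_w), dilation in layers:
        height += dilation * (kernel_h - 1)
        width += dilation * (kernel_w - 1)
    return height, width


def weight_count(channels, kernel_h, kernel_w):
    """Weights (bias excluded) of a conv with equal input and output channels."""
    return channels * channels * kernel_h * kernel_w


# Branch layouts assumed from the RFBsModule code in this post.
rfb_s_branches = {
    "1x1 -> 3x3 (d=1)": [((1, 1), 1), ((3, 3), 1)],
    "1x1 -> 1x3 -> 3x3 (d=3)": [((1, 1), 1), ((1, 3), 1), ((3, 3), 3)],
    "1x1 -> 3x1 -> 3x3 (d=3)": [((1, 1), 1), ((3, 1), 1), ((3, 3), 3)],
    "1x1 -> 3x3 -> 3x3 (d=5)": [((1, 1), 1), ((3, 3), 1), ((3, 3), 5)],
}

for name, layers in rfb_s_branches.items():
    print(name, receptive_field_2d(layers))

channels = 64  # assumed width, for illustration only
print("3x3 weights:", weight_count(channels, 3, 3))
print("1x3 weights:", weight_count(channels, 1, 3))
```

The 1×3 and 3×1 branches give 7×9 and 9×7 receptive fields, both smaller than the 9×9 field of the corresponding RFB branch, and each asymmetric conv needs a third of the weights of a 3×3 conv.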

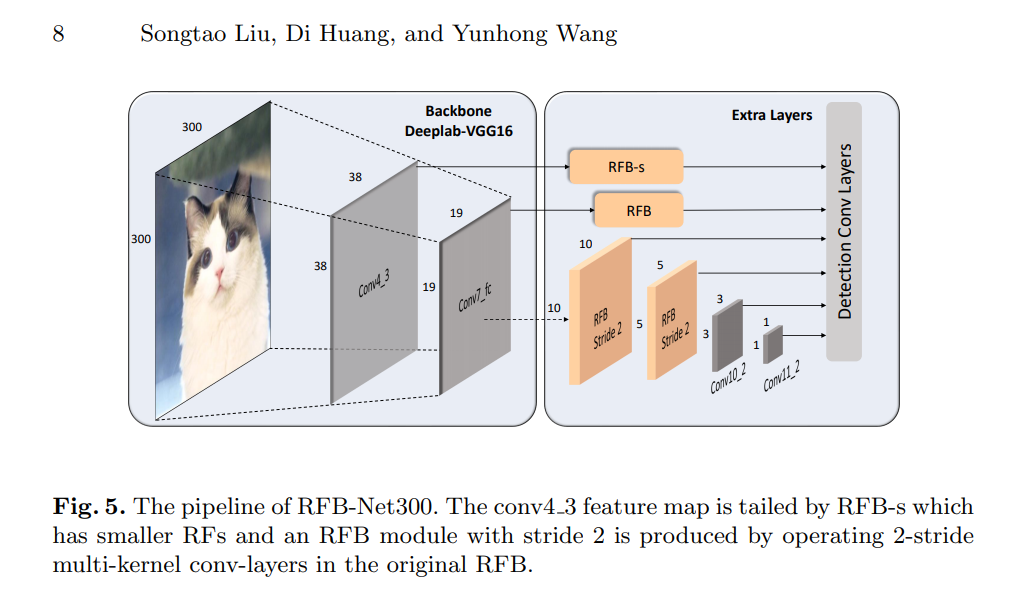

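Network architecture

The overall network structure is shown in the figure and is easy to follow.

The front part is a VGG16 backbone (the reproduction code uses the 13-conv-layer VGG16 layout, not VGG19), and six prediction branches are taken from intermediate layers. It is fairly straightforward, so there is not much to note.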
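As a rough check on the six prediction branches, the sketch below traces the feature-map size through the network for a 300×300 input. The layer settings are assumptions read off the reproduction code in this post (floor-mode 2×2 pooling, stride-2 RFB modules built from 3×3 convs with padding 1, and two final unpadded 3×3 convs); the official implementation may differ.

```python
def conv_output_size(size, kernel_size, stride=1, padding=0, dilation=1):
    """Output length of a conv or pooling layer along one axis (floor mode)."""
    return (size + 2 * padding - dilation * (kernel_size - 1) - 1) // stride + 1


size = 300
# Three 2x2 max-pools precede the first branch; the padded 3x3 convs
# in between keep the spatial size unchanged.
for _ in range(3):
    size = conv_output_size(size, 2, stride=2)
sizes = [size]

# One more 2x2 max-pool before the second branch.
size = conv_output_size(size, 2, stride=2)
sizes.append(size)

# Two stride-2 RFB modules (3x3 convs with padding 1).
for _ in range(2):
    size = conv_output_size(size, 3, stride=2, padding=1)
    sizes.append(size)

# Two plain 3x3 convs without padding.
for _ in range(2):
    size = conv_output_size(size, 3)
    sizes.append(size)

total_positions = sum(s * s for s in sizes)
print(sizes)            # [37, 18, 9, 5, 3, 1]
print(total_positions)  # 1809
```

Under these assumptions the branches produce 37×37, 18×18, 9×9, 5×5, 3×3, and 1×1 maps, for 1809 spatial positions in total.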

Code reproduction

```python
import torch
import torch.nn as nn
from torchsummary import summary  # third-party package, used only for the layer summary


class RFBModule(nn.Module):
    """Simplified RFB block: three dilated branches plus a 1x1 shortcut."""

    def __init__(self, out, stride=1):
        super().__init__()
        # Branch 1: 1x1 -> 3x3 (dilation 1), the smallest receptive field.
        self.s1 = nn.Sequential(
            nn.Conv2d(out, out, kernel_size=1),
            nn.Conv2d(out, out, kernel_size=3, dilation=1, padding=1, stride=stride),
        )
        # Branch 2: 1x1 -> 3x3 -> 3x3 (dilation 3).
        self.s2 = nn.Sequential(
            nn.Conv2d(out, out, kernel_size=1),
            nn.Conv2d(out, out, kernel_size=3, padding=1),
            nn.Conv2d(out, out, kernel_size=3, dilation=3, padding=3, stride=stride),
        )
        # Branch 3: 1x1 -> 5x5 -> 3x3 (dilation 5), the largest receptive field.
        self.s3 = nn.Sequential(
            nn.Conv2d(out, out, kernel_size=1),
            nn.Conv2d(out, out, kernel_size=5, padding=2),
            nn.Conv2d(out, out, kernel_size=3, dilation=5, padding=5, stride=stride),
        )
        # The shortcut uses the same stride so its shape matches the branches.
        self.shortcut = nn.Conv2d(out, out, kernel_size=1, stride=stride)
        # Fuse the concatenated branches back to `out` channels.
        self.conv1x1 = nn.Conv2d(out * 3, out, kernel_size=1)

    def forward(self, x):
        s1 = self.s1(x)
        s2 = self.s2(x)
        s3 = self.s3(x)
        mix = torch.cat([s1, s2, s3], dim=1)  # concatenate along channels
        mix = self.conv1x1(mix)
        shortcut = self.shortcut(x)
        return mix + shortcut
```

```python
class RFBsModule(nn.Module):
    """Simplified RFB-s block: adds 1x3 and 3x1 branches for smaller receptive fields."""

    def __init__(self, out, stride=1):
        super().__init__()
        # Branch 1: 1x1 -> 3x3 (dilation 1).
        self.s1 = nn.Sequential(
            nn.Conv2d(out, out, kernel_size=1),
            nn.Conv2d(out, out, kernel_size=3, dilation=1, padding=1, stride=stride),
        )
        # Branch 2: 1x1 -> 1x3 -> 3x3 (dilation 3).
        self.s2 = nn.Sequential(
            nn.Conv2d(out, out, kernel_size=1),
            nn.Conv2d(out, out, kernel_size=(1, 3), padding=(0, 1)),
            nn.Conv2d(out, out, kernel_size=3, dilation=3, padding=3, stride=stride),
        )
        # Branch 3: 1x1 -> 3x1 -> 3x3 (dilation 3).
        self.s3 = nn.Sequential(
            nn.Conv2d(out, out, kernel_size=1),
            nn.Conv2d(out, out, kernel_size=(3, 1), padding=(1, 0)),
            nn.Conv2d(out, out, kernel_size=3, dilation=3, padding=3, stride=stride),
        )
        # Branch 4: 1x1 -> 3x3 -> 3x3 (dilation 5). The unpadded 3x3 shrinks the
        # map by 2; padding=6 (rather than 5) on the dilated conv restores it.
        self.s4 = nn.Sequential(
            nn.Conv2d(out, out, kernel_size=1),
            nn.Conv2d(out, out, kernel_size=3),
            nn.Conv2d(out, out, kernel_size=3, dilation=5, stride=stride, padding=6),
        )
        # The shortcut uses the same stride so its shape matches the branches.
        self.shortcut = nn.Conv2d(out, out, kernel_size=1, stride=stride)
        # Fuse the four concatenated branches back to `out` channels.
        self.conv1x1 = nn.Conv2d(out * 4, out, kernel_size=1)

    def forward(self, x):
        s1 = self.s1(x)
        s2 = self.s2(x)
        s3 = self.s3(x)
        s4 = self.s4(x)
        mix = torch.cat([s1, s2, s3, s4], dim=1)  # concatenate along channels
        mix = self.conv1x1(mix)
        shortcut = self.shortcut(x)
        return mix + shortcut
```

```python
class RFBNet(nn.Module):
    """VGG16-style backbone with six detection branches.

    Each branch output is flattened to (batch, positions, 64) and the
    branches are concatenated along the positions axis.
    """

    def __init__(self):
        super().__init__()
        # VGG16 layers up to conv4_3 (three max-pools: 300 -> 37).
        self.feature_1 = nn.Sequential(
            nn.Conv2d(3, 64, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(64, 64, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=2, stride=2),
            nn.Conv2d(64, 128, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(128, 128, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=2, stride=2),
            nn.Conv2d(128, 256, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(256, 256, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(256, 256, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=2, stride=2),
            nn.Conv2d(256, 512, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(512, 512, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(512, 512, kernel_size=3, padding=1),
            nn.ReLU(),
        )
        # VGG16 conv5 block (one more max-pool: 37 -> 18).
        self.feature_2 = nn.Sequential(
            nn.MaxPool2d(kernel_size=2, stride=2),
            nn.Conv2d(512, 512, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(512, 512, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv2d(512, 512, kernel_size=3, padding=1),
            nn.ReLU(),
        )
        # 1x1 convs that reduce 512 channels to 64 before the RFB modules.
        self.pre = nn.Conv2d(512, 64, kernel_size=1)
        self.fc = nn.Conv2d(512, 64, kernel_size=1)

        self.det1 = RFBsModule(out=64, stride=1)  # on conv4_3 features
        self.det2 = RFBModule(out=64, stride=1)
        self.det3 = RFBModule(out=64, stride=2)
        self.det4 = RFBModule(out=64, stride=2)
        self.det5 = nn.Conv2d(64, 64, kernel_size=3)  # unpadded: shrinks the map by 2
        self.det6 = nn.Conv2d(64, 64, kernel_size=3)

    def forward(self, x):
        batch = x.size(0)
        x = self.feature_1(x)
        det1 = self.det1(self.fc(x))
        x = self.feature_2(x)
        x = self.pre(x)
        det2 = self.det2(x)
        det3 = self.det3(det2)
        det4 = self.det4(det3)
        det5 = self.det5(det4)
        det6 = self.det6(det5)
        # Move channels last, then flatten every map to (batch, H*W, 64).
        dets = [det1, det2, det3, det4, det5, det6]
        dets = [d.permute(0, 2, 3, 1).contiguous().view(batch, -1, 64) for d in dets]
        return torch.cat(dets, dim=1)
```

```python
if __name__ == "__main__":
    net = RFBNet()
    x = torch.randn(2, 3, 300, 300)
    summary(net, (3, 300, 300), device="cpu")
    print(net(x).size())  # torch.Size([2, 1809, 64])
```