Contents

- Test environment: hadoop-2.6.4 + zookeeper-3.4.5 + hbase-0.99.2

- Installation: hbase-0.99.2

- Dynamically adding master and slave nodes

1. Test environment

1.1 Three nodes:

- 192.168.2.181 hbase1 (master node)

- 192.168.2.182 hbase2 (slave node)

- 192.168.2.183 hbase3 (slave node)

1.2 Install the JDK: jdk1.7

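1.3 Configure SSH: passwordless login from hbase1 to hbase2 and from hbase1 to hbase3 (start-dfs.sh also connects to hbase1 itself over SSH, so login from hbase1 to hbase1 should be passwordless too)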
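Before going further, passwordless login can be verified from hbase1 with a quick scripted check. The sketch below is a hypothetical helper (the names `ssh_check_cmd` and `check_passwordless` are not part of any tool); it assumes the OpenSSH client, whose `BatchMode=yes` option makes ssh fail instead of prompting for a password, so a zero exit status means key-based login works.

```python
import subprocess
from typing import Callable, Dict, List


def ssh_check_cmd(host: str) -> List[str]:
    """Build an ssh command that runs `true` on `host` and never prompts.

    BatchMode=yes makes ssh give up instead of asking for a password, and
    ConnectTimeout stops it from hanging on an unreachable host.
    """
    return ["ssh", "-o", "BatchMode=yes", "-o", "ConnectTimeout=5", host, "true"]


def _run(cmd: List[str]) -> int:
    """Run a command with no stdin and return its exit status."""
    return subprocess.run(cmd, stdin=subprocess.DEVNULL).returncode


def check_passwordless(hosts: List[str],
                       run: Callable[[List[str]], int] = _run) -> Dict[str, bool]:
    """Map each host to True if passwordless ssh to it succeeded."""
    return {host: run(ssh_check_cmd(host)) == 0 for host in hosts}
```

Run on hbase1, `check_passwordless(["hbase1", "hbase2", "hbase3"])` should report True for every host; a False entry means that host still asks for a password or is unreachable. The `run` parameter only exists so the check can be exercised without a real cluster.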

1.4 The relevant hadoop-2.6.4 configuration files are as follows:

hadoop-env.sh

    # The java implementation to use.
    export JAVA_HOME=/home/hadoop/apps/jdk1.7.0_45

core-site.xml

    <configuration>
      <property><name>fs.defaultFS</name><value>hdfs://hbase1:9000</value></property>
      <property><name>hadoop.tmp.dir</name><value>/home/hadoop/apps/hdpdata/</value></property>
    </configuration>

hdfs-site.xml

    <configuration>
      <property><name>dfs.namenode.name.dir</name><value>/home/hadoop/data/name</value></property>
      <property><name>dfs.datanode.data.dir</name><value>/home/hadoop/data/data</value></property>
      <property><name>dfs.replication</name><value>3</value></property>
      <property><name>dfs.secondary.http.address</name><value>hbase1:50090</value></property>
    </configuration>

mapred-site.xml

    <configuration>
      <property><name>mapreduce.framework.name</name><value>yarn</value></property>
    </configuration>

yarn-site.xml

    <configuration>
      <property><name>yarn.resourcemanager.hostname</name><value>hbase1</value></property>
      <property><name>yarn.nodemanager.aux-services</name><value>mapreduce_shuffle</value></property>
    </configuration>

slaves

    hbase2
    hbase3
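Every *-site.xml file above has the same `<configuration>`/`<property>`/`<name>`/`<value>` layout, so the files can also be generated from a plain mapping instead of being typed by hand. This is a minimal sketch using only Python's standard `xml.etree.ElementTree`. `render_site_xml` is a hypothetical name, and the sample values are the core-site.xml settings listed above.

```python
import xml.etree.ElementTree as ET
from typing import Dict


def render_site_xml(props: Dict[str, str]) -> str:
    """Render a Hadoop-style *-site.xml document from name -> value pairs."""
    root = ET.Element("configuration")
    for name, value in props.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name   # special characters are escaped by ElementTree
        ET.SubElement(prop, "value").text = value
    return '<?xml version="1.0"?>\n' + ET.tostring(root, encoding="unicode")


# core-site.xml settings from section 1.4
CORE_SITE = {
    "fs.defaultFS": "hdfs://hbase1:9000",
    "hadoop.tmp.dir": "/home/hadoop/apps/hdpdata/",
}
```

Writing the result with `pathlib.Path("core-site.xml").write_text(render_site_xml(CORE_SITE))` gives a file Hadoop parses the same way. The output is not pretty-printed, which does not matter to the parser.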

1.5 zookeeper-3.4.5

1.5.1 Extract

tar -zxvf zookeeper-3.4.5.tar.gz -C /home/hadoop/app/

1.5.2 Edit the configuration

cd /home/hadoop/app/zookeeper-3.4.5/conf/

cp zoo_sample.cfg zoo.cfg

vim zoo.cfg

Change: dataDir=/home/hadoop/app/zookeeper-3.4.5/tmp

Append at the end:

server.1=hbase1:2888:3888

server.2=hbase2:2888:3888

server.3=hbase3:2888:3888

Save and exit.
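In each `server.N=host:2888:3888` line, 2888 is the port followers use to connect to the leader and 3888 is the port used for leader election. N is the server ID, which must also be written to that host's `myid` file. Deriving both from a single host list keeps them consistent. This is a small sketch, and the function `zk_ensemble` is hypothetical:

```python
from typing import Dict, List, Tuple


def zk_ensemble(hosts: List[str], peer_port: int = 2888,
                election_port: int = 3888) -> Tuple[List[str], Dict[str, int]]:
    """Return the server.N lines for zoo.cfg and the myid value for each host.

    IDs are assigned 1, 2, 3, ... in list order, which reproduces
    server.1=hbase1, server.2=hbase2, server.3=hbase3 from this setup.
    """
    lines: List[str] = []
    myids: Dict[str, int] = {}
    for n, host in enumerate(hosts, start=1):
        lines.append(f"server.{n}={host}:{peer_port}:{election_port}")
        myids[host] = n
    return lines, myids
```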

Then create the tmp directory and write this node's ID (1, matching server.1=hbase1) into myid:

mkdir /home/hadoop/app/zookeeper-3.4.5/tmp

echo 1 > /home/hadoop/app/zookeeper-3.4.5/tmp/myid

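1.5.3 Configure the slave nodes:

Copy the configured ZooKeeper to the other nodes. First make sure the target directory /home/hadoop/app exists on hbase2 and hbase3, e.g. with mkdir -p /home/hadoop/app: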

scp -r /home/hadoop/app/zookeeper-3.4.5/ hbase2:/home/hadoop/app/

scp -r /home/hadoop/app/zookeeper-3.4.5/ hbase3:/home/hadoop/app/

On hbase2:

echo 2 > /home/hadoop/app/zookeeper-3.4.5/tmp/myid

On hbase3:

echo 3 > /home/hadoop/app/zookeeper-3.4.5/tmp/myid
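A common mistake at this step is a `myid` value that does not match the host's `server.N` line. When that happens, the node does not take its intended place in the ensemble. The following hypothetical check (names are illustrative) parses the text of zoo.cfg and compares it with the contents of a node's `myid` file:

```python
from typing import Dict


def parse_servers(zoo_cfg: str) -> Dict[int, str]:
    """Map each server ID in zoo.cfg to its hostname (server.N=host:port:port)."""
    servers: Dict[int, str] = {}
    for raw in zoo_cfg.splitlines():
        line = raw.strip()
        if not line.startswith("server.") or "=" not in line:
            continue  # skip comments, blank lines and other settings
        key, value = line.split("=", 1)
        servers[int(key[len("server."):])] = value.split(":", 1)[0]
    return servers


def myid_matches(zoo_cfg: str, host: str, myid_text: str) -> bool:
    """True if the myid file contents equal the N of host's server.N line."""
    return parse_servers(zoo_cfg).get(int(myid_text.strip())) == host
```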

2. Installation: hbase-0.99.2

Extract it in /home/hadoop (HBASE_HOME below is /home/hadoop/hbase):

tar -zxvf hbase-0.99.2-bin.tar.gz

mv hbase-0.99.2 hbase

Set the environment variables (as root):

su - root

vi /etc/profile

Add the following:

    export HBASE_HOME=/home/hadoop/hbase
    export PATH=$PATH:$HBASE_HOME/bin

Then run:

source /etc/profile

su - hadoop
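After `source /etc/profile` (and in every later login shell), `$HBASE_HOME/bin` should be on `PATH`. That is what lets later commands such as `start-hbase.sh` run without a full path. This is a hypothetical check, not an HBase tool, that inspects an environment mapping. By default it uses the current process environment:

```python
import os
from typing import Mapping


def hbase_bin_on_path(env: Mapping[str, str] = os.environ) -> bool:
    """True if HBASE_HOME is set and $HBASE_HOME/bin is one of the PATH entries."""
    home = env.get("HBASE_HOME")
    if not home:
        return False
    bin_dir = os.path.normpath(os.path.join(home, "bin"))
    entries = env.get("PATH", "").split(os.pathsep)
    # Normalise each entry so '/home/hadoop/hbase/bin/' also matches; skip empty entries.
    return any(os.path.normpath(p) == bin_dir for p in entries if p)
```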

Edit the configuration files:

hbase-env.sh

    # The java implementation to use. Java 1.7+ required.
    export JAVA_HOME=/home/hadoop/apps/jdk1.7.0_45

    # Extra Java CLASSPATH elements. Optional.
    export JAVA_CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

    # Tell HBase whether it should manage its own instance of Zookeeper or not.
    export HBASE_MANAGES_ZK=false

HBASE_MANAGES_ZK is false because HBase uses the separate ZooKeeper ensemble installed in 1.5 rather than starting its own.

hbase-site.xml

    <configuration>
      <property><name>hbase.rootdir</name><value>hdfs://hbase1:9000/hbase</value></property>
      <property><name>hbase.cluster.distributed</name><value>true</value></property>
      <property><name>hbase.zookeeper.quorum</name><value>hbase1,hbase2,hbase3</value></property>
      <property><name>hbase.master.maxclockskew</name><value>180000</value></property>
    </configuration>

hbase.master.maxclockskew is in milliseconds. It raises the clock difference the master tolerates between itself and a RegionServer to 3 minutes.
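Two settings in hbase-site.xml must agree with other files. `hbase.rootdir` has to live under Hadoop's `fs.defaultFS` (`hdfs://hbase1:9000` in core-site.xml). Also, because HBASE_MANAGES_ZK=false, every host in `hbase.zookeeper.quorum` should be a `server.N` host from zoo.cfg. The sketch below cross-checks these using the standard library XML parser. The function names are hypothetical, and a `host:port` quorum entry is reduced to its host before comparison.

```python
import xml.etree.ElementTree as ET
from typing import Dict, Iterable, List


def read_site_xml(text: str) -> Dict[str, str]:
    """Parse a Hadoop/HBase *-site.xml string into a {name: value} dict."""
    root = ET.fromstring(text)
    return {prop.findtext("name", "").strip(): prop.findtext("value", "").strip()
            for prop in root.iter("property")}


def check_hbase_site(hbase_site: str, core_site: str, zk_hosts: Iterable[str]) -> List[str]:
    """Return a list of problems; an empty list means the files are consistent."""
    hbase = read_site_xml(hbase_site)
    core = read_site_xml(core_site)
    problems: List[str] = []

    default_fs = core.get("fs.defaultFS", "").rstrip("/")
    rootdir = hbase.get("hbase.rootdir", "")
    if not default_fs or not rootdir.startswith(default_fs + "/"):
        problems.append(f"hbase.rootdir {rootdir!r} is not under fs.defaultFS {default_fs!r}")

    if hbase.get("hbase.cluster.distributed") != "true":
        problems.append("hbase.cluster.distributed is not true")

    # Quorum entries may be 'host' or 'host:port'; compare host names only.
    quorum = {entry.strip().split(":")[0]
              for entry in hbase.get("hbase.zookeeper.quorum", "").split(",")
              if entry.strip()}
    unknown = sorted(quorum - set(zk_hosts))
    if unknown:
        problems.append(f"quorum hosts missing from zoo.cfg: {unknown}")
    return problems
```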

regionservers

    hbase2
    hbase3

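Copy Hadoop's core-site.xml and hdfs-site.xml into HBase's conf directory. This lets HBase's HDFS client use the same HDFS settings, such as dfs.replication: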

cp /home/hadoop/hadoop/etc/hadoop/hdfs-site.xml /home/hadoop/hbase/conf

cp /home/hadoop/hadoop/etc/hadoop/core-site.xml /home/hadoop/hbase/conf

Send the hbase directory to the slave nodes hbase2 and hbase3:

su - hadoop

scp -r /home/hadoop/hbase hbase2:/home/hadoop

scp -r /home/hadoop/hbase hbase3:/home/hadoop
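If more slave nodes are added later, the same copy can be driven from a host list. This hypothetical helper (names are illustrative) prints the scp commands as a dry run by default. It executes them only when `run=True`, stopping at the first failed copy, and it relies on the passwordless SSH set up in 1.3.

```python
import subprocess
from typing import List


def scp_commands(src: str, hosts: List[str], dest: str) -> List[List[str]]:
    """Build one `scp -r src host:dest` command per target host."""
    return [["scp", "-r", src, f"{host}:{dest}"] for host in hosts]


def distribute(src: str, hosts: List[str], dest: str, run: bool = False) -> List[List[str]]:
    """Print (dry run) or execute the copy to every host and return the commands."""
    cmds = scp_commands(src, hosts, dest)
    for cmd in cmds:
        print(" ".join(cmd))
        if run:
            subprocess.run(cmd, check=True)  # raises CalledProcessError if a copy fails
    return cmds
```

For example, `distribute("/home/hadoop/hbase", ["hbase2", "hbase3"], "/home/hadoop", run=True)` repeats the two scp commands above.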

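Start the processes in this order: ZooKeeper first, then Hadoop, and finally HBase.

su - hadoop

On each of hbase1, hbase2 and hbase3, start ZooKeeper:

/home/hadoop/app/zookeeper-3.4.5/bin/zkServer.sh start

On hbase1, start HDFS and YARN:

/home/hadoop/hadoop/sbin/start-dfs.sh

/home/hadoop/hadoop/sbin/start-yarn.sh

On hbase1, start HBase:

start-hbase.sh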
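HBase is started last and uses the external ZooKeeper ensemble, so it is worth confirming that every ZooKeeper server answers before running start-hbase.sh. A running ZooKeeper 3.4.x server replies `imok` to the four-letter command `ruok` on its client port (2181 in the default zoo.cfg). Note that `imok` shows the process is up, not that it has joined the quorum; `zkServer.sh status` on each node is a similar manual check. The sketch below sends `ruok` over a plain socket, and the function names are hypothetical.

```python
import socket
from typing import Dict, Iterable


def zk_ruok(host: str, port: int = 2181, timeout: float = 3.0) -> bool:
    """Return True if the ZooKeeper server at host:port answers 'ruok' with 'imok'."""
    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            sock.sendall(b"ruok")
            chunks = []
            while True:  # the server closes the connection after replying
                data = sock.recv(64)
                if not data:
                    break
                chunks.append(data)
    except OSError:  # connection refused, timeout, unknown host, ...
        return False
    return b"".join(chunks) == b"imok"


def zk_ready(hosts: Iterable[str], port: int = 2181) -> Dict[str, bool]:
    """Run the ruok check against every host of the ensemble."""
    return {host: zk_ruok(host, port) for host in hosts}
```

For this cluster, `zk_ready(["hbase1", "hbase2", "hbase3"])` should report True for all three hosts before start-hbase.sh is run.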

查看hbase1 中进程 jps

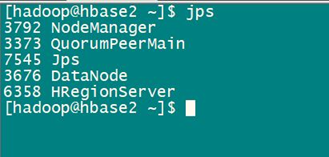

查看hbase2 中进程 jps

查看hbase3 中进程 jps
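The expected process names below are derived from the configuration in this article, not from the screenshot. QuorumPeerMain (the ZooKeeper server) should run on all three nodes. NameNode, SecondaryNameNode, ResourceManager and HMaster belong on hbase1. DataNode, NodeManager and HRegionServer belong on hbase2 and hbase3, the hosts listed in slaves and regionservers. ResourceManager and NodeManager appear only if YARN was started as well. This is a hypothetical helper that compares one node's jps output against that list:

```python
from typing import Set

# Expected daemons per host, derived from the slaves, regionservers,
# yarn-site.xml and hdfs-site.xml settings in this article.
EXPECTED = {
    "hbase1": {"QuorumPeerMain", "NameNode", "SecondaryNameNode", "ResourceManager", "HMaster"},
    "hbase2": {"QuorumPeerMain", "DataNode", "NodeManager", "HRegionServer"},
    "hbase3": {"QuorumPeerMain", "DataNode", "NodeManager", "HRegionServer"},
}


def missing_daemons(jps_output: str, host: str) -> Set[str]:
    """Return the daemons expected on `host` that are absent from its jps output."""
    running = set()
    for line in jps_output.splitlines():
        parts = line.split()
        if len(parts) >= 2:  # each line is '<pid> <MainClassName>'
            running.add(parts[1])
    return EXPECTED[host] - running
```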

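3. Dynamically adding master and slave nodes

Add a master node: start a backup HMaster so that HBase runs with two masters. This can be run on any node, including a slave node. The argument 2 is a port offset, which keeps the backup master's ports from clashing with other HBase processes on the same host: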

$ local-master-backup.sh start 2
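`local-master-backup.sh` starts one backup HMaster on the local machine for each offset it is given, and adds that offset to the master's ports. The sketch below assumes the 16000-range defaults of the 0.99/1.0 line: 16000 for hbase.master.port and 16010 for hbase.master.info.port. Older releases used 60000 and 60010, so check the script shipped with your HBase version. `backup_master_ports` is a hypothetical illustration of the arithmetic, not part of HBase.

```python
from typing import Dict

# ASSUMED defaults for the HBase 0.99/1.0 line; verify against the
# local-master-backup.sh in your own distribution before relying on them.
BASE_PORTS = {"hbase.master.port": 16000, "hbase.master.info.port": 16010}


def backup_master_ports(offset: int, base: Dict[str, int] = BASE_PORTS) -> Dict[str, int]:
    """Ports used by a backup master started with `local-master-backup.sh start <offset>`."""
    if offset < 0:
        raise ValueError("offset must be non-negative")
    return {name: port + offset for name, port in base.items()}
```

Under these assumptions, the backup master started with offset 2 would serve its web UI on port 16012 of the node where the script was run.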

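Add a slave node: start an HBase RegionServer dynamically. Run this on the node being added, which needs the same HBase installation and configuration: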

$ hbase-daemon.sh start regionserver
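hbase-daemon.sh only starts the RegionServer on the node where it is run. For start-hbase.sh and stop-hbase.sh on hbase1 to manage the new node from then on, its hostname should also be added to conf/regionservers, which holds one hostname per line. This is a hypothetical helper that appends a host to the file's contents only when it is not already listed:

```python
def add_regionserver(regionservers_text: str, host: str) -> str:
    """Return conf/regionservers content with `host` appended if it is not already listed."""
    host = host.strip()
    lines = regionservers_text.splitlines()
    if host not in {line.strip() for line in lines}:
        lines.append(host)
    return "\n".join(lines) + "\n"
```

With `p = pathlib.Path("/home/hadoop/hbase/conf/regionservers")`, running `p.write_text(add_regionserver(p.read_text(), "hbase4"))` would register a new node. Here, hbase4 is a placeholder hostname.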