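Four metrics are commonly used to evaluate a linear regression model: mean squared error (MSE), root mean squared error (RMSE), mean absolute error (MAE), and R squared. R squared is the one sklearn uses, and it is also the one most often recommended.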
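In scikit-learn, the `score` method of a regressor returns R squared. A minimal sketch of that behavior, assuming synthetic data made up purely for illustration (the slope, intercept, and noise level are arbitrary choices):

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.metrics import r2_score

# Synthetic data (an assumption for illustration): y = 3x + 4 plus Gaussian noise.
rng = np.random.default_rng(0)
X = rng.uniform(0, 10, size=(100, 1))
y = 3.0 * X[:, 0] + 4.0 + rng.normal(0, 1.0, size=100)

reg = LinearRegression().fit(X, y)

# score() on a regressor reports R squared for the data it is given.
score = reg.score(X, y)
r2 = r2_score(y, reg.predict(X))
print(score, r2)  # the two values agree
```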

1. Mean squared error (MSE)

The smaller the MSE, the more closely the model's predictions match the observed data.

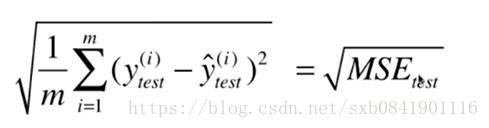

2. Root mean squared error (RMSE)

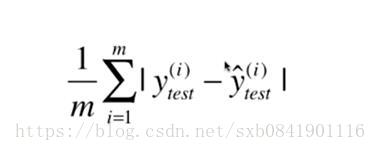

3. Mean absolute error (MAE)

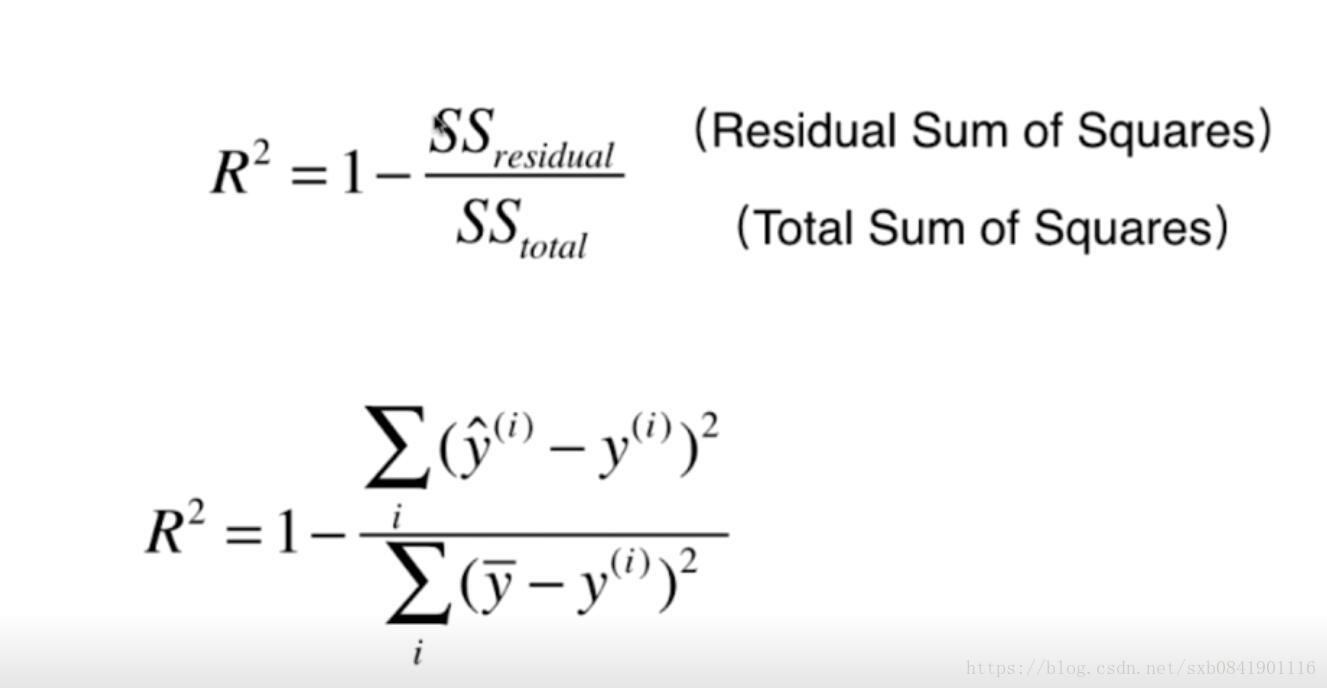
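A small worked example may make the three error metrics concrete. The arrays here are made up for illustration; the arithmetic is shown in the comments.

```python
import numpy as np

# Hypothetical true values and predictions, chosen so the arithmetic is easy to follow.
y_true = np.array([3.0, 5.0, 7.0, 9.0])
y_pred = np.array([2.0, 5.0, 8.0, 12.0])

errors = y_true - y_pred        # [1, 0, -1, -3]
mse = np.mean(errors ** 2)      # (1 + 0 + 1 + 9) / 4 = 2.75
rmse = np.sqrt(mse)             # square root of the MSE, in the same units as y
mae = np.mean(np.abs(errors))   # (1 + 0 + 1 + 3) / 4 = 1.25

print(mse, rmse, mae)
```

The MSE is expressed in squared units of y, whereas RMSE and MAE are in the same units as y, which makes them easier to interpret directly.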

4. R squared (R²)
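R squared compares the model's squared error with that of a baseline that always predicts the mean of y: R² = 1 - SS_res / SS_tot, which is the same as 1 - MSE / Var(y) when the population variance is used. A minimal sketch, assuming NumPy; the helper name `r2` and the sample array are only illustrative.

```python
import numpy as np

def r2(y_true, y_pred):
    """R squared as 1 - SS_res / SS_tot (hypothetical helper for illustration)."""
    ss_res = np.sum((y_true - y_pred) ** 2)           # residual sum of squares
    ss_tot = np.sum((y_true - np.mean(y_true)) ** 2)  # total sum of squares
    return 1.0 - ss_res / ss_tot

y_true = np.array([1.0, 2.0, 3.0, 4.0, 5.0])

perfect = r2(y_true, y_true)                                 # perfect predictions
baseline = r2(y_true, np.full_like(y_true, y_true.mean()))   # always predict the mean
worse = r2(y_true, y_true[::-1])                             # worse than the mean baseline

# Equivalent form: np.var defaults to the population variance (ddof=0).
y_pred = y_true[::-1]
alt = 1.0 - np.mean((y_true - y_pred) ** 2) / np.var(y_true)

print(perfect, baseline, worse, alt)
```

A value of 1 means perfect predictions, 0 means the model does no better than predicting the mean, and a negative value means it does worse than that baseline.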

Code for the four metrics:

    # Contents of the metrics module that the evaluation script imports
    import numpy as np
    from math import sqrt


    def mean_squared_error(y_true, y_predict):
        """Compute the MSE between y_true and y_predict."""
        assert len(y_true) == len(y_predict), \
            "the size of y_true must be equal to the size of y_predict"
        return np.sum((y_true - y_predict) ** 2) / len(y_true)


    def root_mean_squared_error(y_true, y_predict):
        """Compute the RMSE between y_true and y_predict."""
        return sqrt(mean_squared_error(y_true, y_predict))


    def mean_absolute_error(y_true, y_predict):
        """Compute the MAE between y_true and y_predict."""
        assert len(y_true) == len(y_predict), \
            "the size of y_true must be equal to the size of y_predict"
        return np.sum(np.absolute(y_true - y_predict)) / len(y_true)


    def r2_score(y_true, y_predict):
        """Compute R squared between y_true and y_predict."""
        # np.var is the population variance, so this equals 1 - SS_res / SS_tot
        return 1 - mean_squared_error(y_true, y_predict) / np.var(y_true)

    # Evaluation script
    import numpy as np

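    import matplotlib.pyplot as plt
    # load_boston was removed in scikit-learn 1.2, so this script needs an older version
    from sklearn.datasets import load_boston
    # model_selection, simplelinerregression and metrics appear to be the author's
    # own local modules, not part of sklearn
    from model_selection import train_test_split
    from simplelinerregression import SimpleLineRegession2
    from metrics import *

    boston = load_boston()
    x = boston.data[:, 5]   # a single feature: column 5 (RM, average rooms per dwelling)
    y = boston.target

    # keep only samples whose target is below 50.0 (the dataset caps prices at 50.0)
    x = x[y < 50.0]
    y = y[y < 50.0]

    x_train, x_test, y_train, y_test = train_test_split(x, y)

    reg = SimpleLineRegession2()
    reg.fit(x_train, y_train)

    # evaluate on the held-out test set
    y_predict = reg.predict(x_test)
    print('MAE:', mean_absolute_error(y_test, y_predict))
    print('RMSE:', root_mean_squared_error(y_test, y_predict))
    print('MSE:', mean_squared_error(y_test, y_predict))
    print('R2:', r2_score(y_test, y_predict))

    # plot the data and the fitted line
    plt.scatter(x, y)
    plt.plot(x, reg.predict(x), color="red")
    plt.show()

The script above uses the Boston housing dataset and fits the model on a single feature.

Scores for each metric:

MAE: 4.341835956360974

RMSE: 6.2500402273

MSE: 39.063002842901284

R2: 0.47025653581866034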
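As a sanity check, hand-written formulas like these can be compared with the reference implementations in `sklearn.metrics`. A minimal sketch on synthetic data (the arrays are made up for illustration and are not the Boston results above; the alias `skm` is just a local name):

```python
import numpy as np
from sklearn import metrics as skm

# Synthetic targets and noisy predictions (assumed values, for illustration only).
rng = np.random.default_rng(42)
y_true = rng.normal(20.0, 5.0, size=200)
y_pred = y_true + rng.normal(0.0, 2.0, size=200)

# Same formulas as the hand-written metric functions.
mse = np.sum((y_true - y_pred) ** 2) / len(y_true)
rmse = np.sqrt(mse)
mae = np.sum(np.absolute(y_true - y_pred)) / len(y_true)
r2 = 1 - mse / np.var(y_true)

# Reference values from scikit-learn.
sk_mse = skm.mean_squared_error(y_true, y_pred)
sk_rmse = np.sqrt(sk_mse)
sk_mae = skm.mean_absolute_error(y_true, y_pred)
sk_r2 = skm.r2_score(y_true, y_pred)

print(np.isclose(mse, sk_mse), np.isclose(rmse, sk_rmse),
      np.isclose(mae, sk_mae), np.isclose(r2, sk_r2))
```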

Reference:

https://blog.csdn.net/qq_37279279/article/details/81041470?utm_source=blogxgwz1
