Copyright notice: all content is original. When reposting, please cite the source at the top: https://blog.csdn.net/SELECT_BIN/article/details/83275585

Import the library. If you are using Maven, add the dependency:

<dependency>
    <groupId>commons-httpclient</groupId>
    <artifactId>commons-httpclient</artifactId>
    <version>3.1</version>
</dependency>

Java code:

package com.ai.rai.group.system;

import java.io.*;
import java.net.MalformedURLException;
import java.net.URL;
import java.net.URLConnection;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

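/**
 * @version 1.0
 * @ClassName RetrivePage
 * @Description Downloads a page and saves the https links found in it to a file
 * @Author 74981
 * @Date 2018/10/19 14:32
 */
public class RetrivePage {

    // Proxy server setup (disabled). It assumes a commons-httpclient HttpClient
    // field named httpClient, which this class does not declare.
    // static {
    //     // Set the proxy server's IP address and port
    //     httpClient.getHostConfiguration().setProxy("10.21.67.39", 8088);
    // }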

    public static void downloadPage(String path) {
        // URL-matching rule. Inside the brackets, + and ? are literal characters,
        // so the class matches word characters, '+', '.', '?' and '/'.
        String regex = "https://[\\w+\\.?/?]+\\.[A-Za-z]+";
        Pattern p = Pattern.compile(regex);
        try {
            URL url = new URL(path); // the page to crawl
            URLConnection urlconn = url.openConnection();
            // try-with-resources closes both streams and avoids the
            // NullPointerException that close() on a never-opened stream would throw.
            // The extracted links are written to SiteURL.txt on drive D.
            try (PrintWriter pw = new PrintWriter(new FileWriter("D:/SiteURL.txt"), true);
                 BufferedReader br = new BufferedReader(
                         new InputStreamReader(urlconn.getInputStream()))) {
                String buf;
                while ((buf = br.readLine()) != null) {
                    Matcher buf_m = p.matcher(buf);
                    // write every match found on this line
                    while (buf_m.find()) {
                        pw.println(buf_m.group());
                    }
                }
            }
            System.out.println("Crawl succeeded ^_^");
        } catch (MalformedURLException e) {
            e.printStackTrace();
        } catch (IOException e) {
            e.printStackTrace();
        }
    }

    /**
     * Test code
     */
    public static void main(String[] args) {
        // Fetch this blogger's home page and write out the links found in it
        try {
            RetrivePage.downloadPage("https://blog.csdn.net/SELECT_BIN");
        } catch (Exception e) {
            e.printStackTrace();
        }
    }

}
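The disabled proxy block in the class targets commons-httpclient, while the download itself goes through `java.net.URLConnection`. If a proxy is needed, one option is the JDK's own `java.net.Proxy`, sketched below. This is only an illustration, not the original setup: the class name `ProxyConnectionDemo` and the helpers `httpProxy` and `openViaProxy` are hypothetical, and the address and port are simply the ones from the commented-out code.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Proxy;
import java.net.URL;
import java.net.URLConnection;

class ProxyConnectionDemo {

    // Hypothetical helper: describe an HTTP proxy. Nothing is connected yet.
    static Proxy httpProxy(String host, int port) {
        return new Proxy(Proxy.Type.HTTP, new InetSocketAddress(host, port));
    }

    // Hypothetical helper: create a connection object that will go through the proxy.
    // The actual network connection is only made later, e.g. by getInputStream().
    static URLConnection openViaProxy(String path, Proxy proxy) throws IOException {
        return new URL(path).openConnection(proxy);
    }

    public static void main(String[] args) throws IOException {
        // Address and port taken from the commented-out proxy block
        Proxy proxy = httpProxy("10.21.67.39", 8088);
        URLConnection conn = openViaProxy("https://blog.csdn.net/SELECT_BIN", proxy);
        System.out.println(proxy.type() + " -> " + conn.getURL());
    }
}
```

In `downloadPage`, this would amount to replacing `url.openConnection()` with `url.openConnection(proxy)`; the rest of the method would stay the same.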

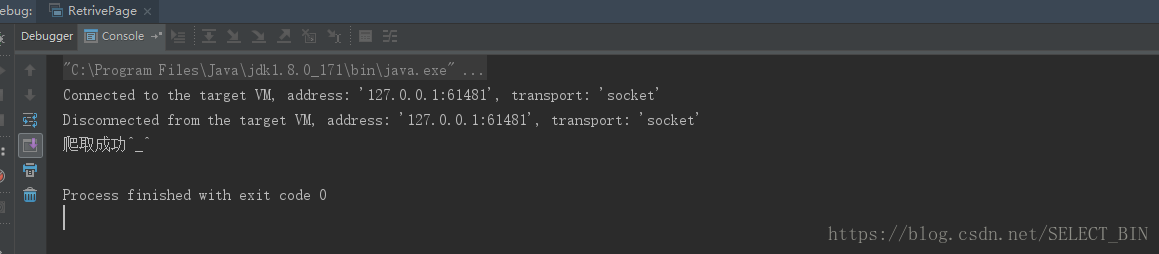

Console output:

Output file:
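To get a feel for what kind of lines the pattern writes to the file, the matching step can be tried on its own. The sketch below reuses the same regular expression on a few made-up HTML lines; these are hypothetical samples, not the real contents of SiteURL.txt, and the class name `UrlRegexDemo` and the helper `extractLinks` are likewise introduced only for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

class UrlRegexDemo {

    // Same pattern as in downloadPage
    static final Pattern LINK = Pattern.compile("https://[\\w+\\.?/?]+\\.[A-Za-z]+");

    // Hypothetical helper: collect every match of the pattern in one line of text
    static List<String> extractLinks(String line) {
        List<String> links = new ArrayList<>();
        Matcher m = LINK.matcher(line);
        while (m.find()) {
            links.add(m.group());
        }
        return links;
    }

    public static void main(String[] args) {
        String[] samples = {
            // ends in ".html", so the whole link is kept
            "<a href=\"https://example.com/index.html\">home</a>",
            // no dot after the host name, so the match stops at ".net"
            "<a href=\"https://blog.csdn.net/SELECT_BIN/article/details/83275585\">post</a>",
            // plain http is not matched at all
            "<a href=\"http://example.com/page.html\">plain http</a>"
        };
        for (String s : samples) {
            System.out.println(extractLinks(s));
        }
    }
}
```

Because the pattern must end with a dot followed by letters, a link whose path contains no further dot is cut back to its host name (the second sample yields `https://blog.csdn.net`), and `http://` links are skipped. If full paths are wanted, the pattern would need to be loosened.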