1. 配置文件如下:

[hadoop@langzi01 conf]$ cat tail-hdfs.conf 内容如下:

#Name the components on this agent

a1.sources = r1

a1.sinks = k1

a1.channels = c1

#describe/configure the source

a1.sources.r1.type = exec

a1.sources.r1.command = tail -F /home/hadoop/log/test.log

a1.sources.r1.channels = c1

#describe the sink

a1.sinks.k1.type = hdfs

a1.sinks.k1.channel = c1

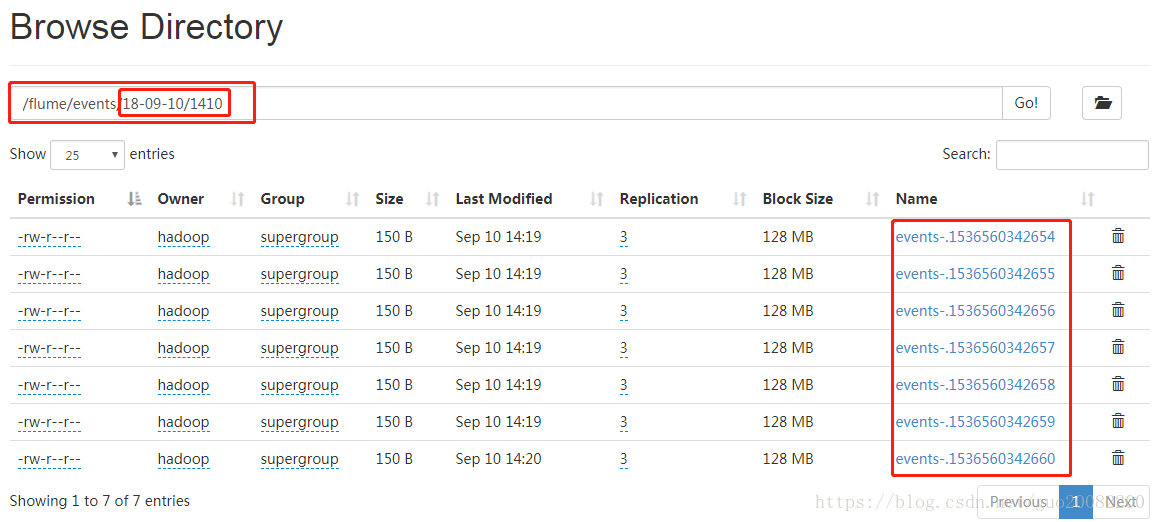

a1.sinks.k1.hdfs.path = /flume/events/%y-%m-%d/%H%M

a1.sinks.k1.hdfs.filePrefix = events-

a1.sinks.k1.hdfs.round = true

a1.sinks.k1.hdfs.roundValue = 10

a1.sinks.k1.hdfs.roundUnit = minute

a1.sinks.k1.rollInterval = 3

a1.sinks.k1.rollSize = 200

a1.sinks.k1.rollCount = 10

a1.sinks.k1.batchSize = 5

a1.sinks.k1.hdfs.useLocalTimeStamp = true

#生成的文件类型,默认是Sequencefile,可用DataStream,则为普通文本

a1.sinks.k1.hdfs.fileType = DataStream

#Use a channel which buffers events in memory

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity= 100

#bind the source and sink to thw channel

a1.sourcesr1.channels = c1

a1.sinks.k1.channel = c12. 创建/home/hadoop/log/test.log

[hadoop@langzi01 log]$ touch test.log

#向文件里面追加数据,1s追加一次

[hadoop@langzi01 log]$ while true

> do

> echo aaaaaaaaaaaaaa >> test.log

> sleep 1

> done

3. 启动flume(保证hdfs已经处于工作状态)

[hadoop@langzi01 conf]$ ../bin/flume-ng agent --conf conf --conf-file tail-hdfs.conf --name a1即可。