Requirement

Monitor the Hive log in real time and upload it to HDFS.

Environment setup

Copy commons-configuration2-2.1.1.jar, hadoop-auth-3.1.2.jar, hadoop-common-3.1.2.jar, hadoop-hdfs-3.1.2.jar, commons-io-2.5.jar, and htrace-core4-4.1.0-incubating.jar into the /usr/local/apache-flume-1.9.0/lib directory.
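The copy step can be scripted. The following is a minimal sketch, not a definitive procedure: the Hadoop install location (HADOOP_HOME, defaulting here to /usr/local/hadoop-3.1.2) is an assumption that does not appear in this article, and the helper name copy_hadoop_jars is hypothetical. It searches the Hadoop tree for each jar by name rather than hard-coding subdirectories.

```shell
# Sketch: copy the jars the Flume HDFS sink needs into Flume's lib directory.
# ASSUMPTION: HADOOP_HOME is a guess at the Hadoop install path; adjust it.
HADOOP_HOME="${HADOOP_HOME:-/usr/local/hadoop-3.1.2}"
# Flume lib path as given in the article.
FLUME_LIB="${FLUME_LIB:-/usr/local/apache-flume-1.9.0/lib}"

# Jar names exactly as listed in the article.
JARS="commons-configuration2-2.1.1.jar
hadoop-auth-3.1.2.jar
hadoop-common-3.1.2.jar
hadoop-hdfs-3.1.2.jar
commons-io-2.5.jar
htrace-core4-4.1.0-incubating.jar"

# copy_hadoop_jars SRC_ROOT DEST_DIR (hypothetical helper)
# Looks for each jar anywhere under SRC_ROOT and copies the first match.
copy_hadoop_jars() {
  src_root="$1"
  dest="$2"
  for jar in $JARS; do
    found="$(find "$src_root" -type f -name "$jar" 2>/dev/null | head -n 1)"
    if [ -n "$found" ]; then
      cp "$found" "$dest/" && echo "copied $jar"
    else
      echo "missing $jar under $src_root" >&2
    fi
  done
}

# Run only when both directories exist, so the sketch is harmless elsewhere.
if [ -d "$HADOOP_HOME" ] && [ -d "$FLUME_LIB" ]; then
  copy_hadoop_jars "$HADOOP_HOME" "$FLUME_LIB"
fi
```

Any jar reported as missing has to be located by hand; the version numbers must match the Hadoop release that is actually installed.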

Implementation

Step 1: In the Flume directory, create a folder named job, then create the configuration file flume-telnet-logger.conf inside it:

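    # Name the components on this agent
    a2.sources = r2
    a2.sinks = k2
    a2.channels = c2

    # Describe/configure the source: follow the Hive log with tail -F
    a2.sources.r2.type = exec
    a2.sources.r2.command = tail -F /usr/local/apache-hive-3.1.1/logs/hive.log
    a2.sources.r2.shell = /bin/bash -c

    # Describe the sink
    a2.sinks.k2.type = hdfs
    # Target directory: one folder per day, one subfolder per hour
    a2.sinks.k2.hdfs.path = hdfs://hcmaster:8020/flume/%Y%m%d/%H
    # Prefix for the uploaded file names
    a2.sinks.k2.hdfs.filePrefix = logs-
    # Round the timestamp down to the hour
    a2.sinks.k2.hdfs.round = true
    a2.sinks.k2.hdfs.roundValue = 1
    a2.sinks.k2.hdfs.roundUnit = hour
    # Use the agent's local time instead of a timestamp header on the event
    a2.sinks.k2.hdfs.useLocalTimeStamp = true
    # Number of events written to a file before it is flushed to HDFS
    a2.sinks.k2.hdfs.batchSize = 100
    # Write plain, uncompressed event data
    a2.sinks.k2.hdfs.fileType = DataStream
    # Roll to a new file every 600 seconds
    a2.sinks.k2.hdfs.rollInterval = 600
    # Roll when the file reaches this many bytes (just under 128 MB)
    a2.sinks.k2.hdfs.rollSize = 134217700
    # 0 = never roll based on the number of events
    a2.sinks.k2.hdfs.rollCount = 0
    a2.sinks.k2.hdfs.minBlockReplicas = 1

    # Use a channel which buffers events in memory
    a2.channels.c2.type = memory
    a2.channels.c2.capacity = 1000
    a2.channels.c2.transactionCapacity = 100

    # Bind the source and sink to the channel
    a2.sources.r2.channels = c2
    a2.sinks.k2.channel = c2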

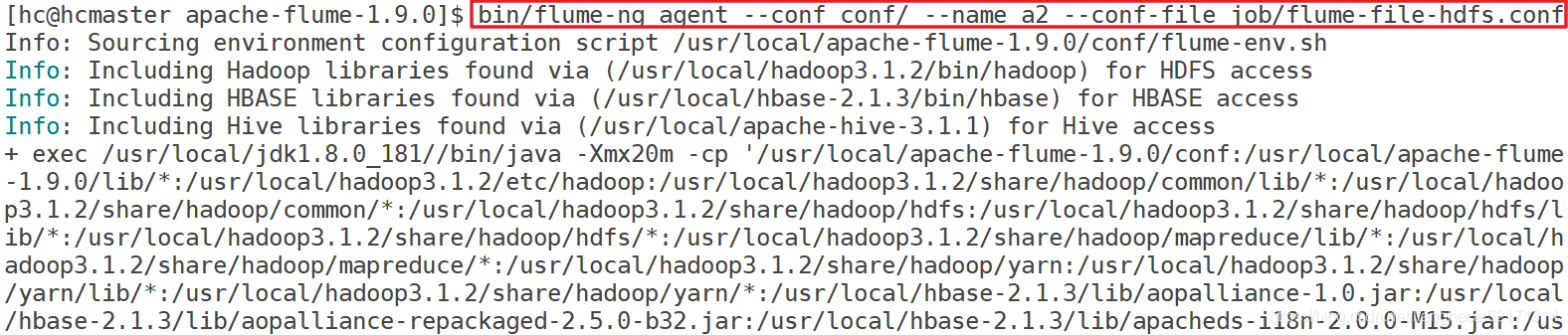
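To make the sink settings more concrete, here is a small sketch that previews the HDFS directory the sink would write to at the current moment and checks the rollSize arithmetic. It relies on the fact that the %Y, %m, %d and %H escapes in hdfs.path have the same meaning as the corresponding date(1) format codes. The variable names are illustrative only.

```shell
# Sketch: preview the directory that hdfs.path resolves to right now.
# useLocalTimeStamp = true means the agent's local clock is used,
# so the local `date` command gives the same year/month/day/hour values.
BASE="hdfs://hcmaster:8020/flume"
TARGET_DIR="$BASE/$(date +%Y%m%d/%H)"
echo "current target directory: $TARGET_DIR"

# rollSize is expressed in bytes; compare it with 128 MiB.
ROLL_SIZE=134217700
MIB_128=$((128 * 1024 * 1024))
echo "rollSize is $((MIB_128 - ROLL_SIZE)) bytes below 128 MiB ($MIB_128)"
# prints: rollSize is 28 bytes below 128 MiB (134217728)
```

Keeping rollSize slightly under 128 MiB, the default HDFS block size, is presumably meant to keep each rolled file within a single block; the article itself does not state the reason.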

Step 2: Start Flume

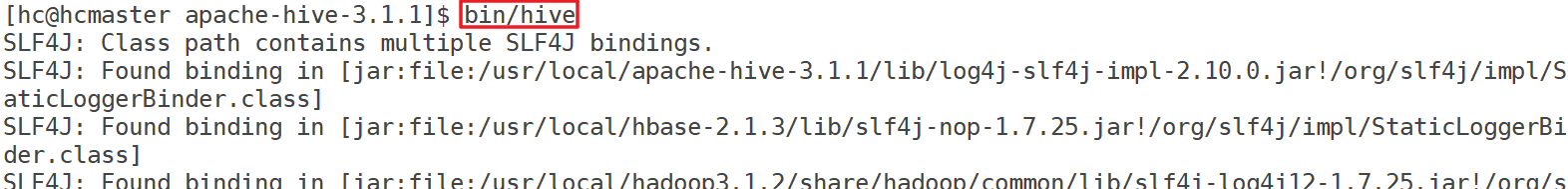
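For readers who cannot see the screenshot, a start command along these lines should work. This is a sketch under assumptions: FLUME_HOME is inferred from the lib path used earlier, and the command is assumed to be run from the Flume directory so that the relative paths conf/ and job/ resolve. The agent name passed with --name has to match the a2 prefix used in the configuration file.

```shell
# Sketch: start agent a2 with the configuration file from step 1.
# ASSUMPTION: FLUME_HOME is inferred from the lib path in the article.
FLUME_HOME="${FLUME_HOME:-/usr/local/apache-flume-1.9.0}"
AGENT_NAME="a2"                          # must match the a2.* keys in the config
CONF_FILE="job/flume-telnet-logger.conf" # file created in step 1

START_CMD="bin/flume-ng agent --conf conf/ --name $AGENT_NAME --conf-file $CONF_FILE"
echo "cd $FLUME_HOME && $START_CMD"

# Launch only if Flume is actually installed at that location.
# The agent runs in the foreground until it is interrupted.
if [ -x "$FLUME_HOME/bin/flume-ng" ]; then
  cd "$FLUME_HOME" && $START_CMD
fi
```

Appending -Dflume.root.logger=INFO,console to the command prints the agent's own log to the terminal, which helps when the sink fails to load because of a missing jar.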

Step 3: Start HDFS and Hive

In the Hive shell that opens, run a few arbitrary commands so that Hive writes some log entries.

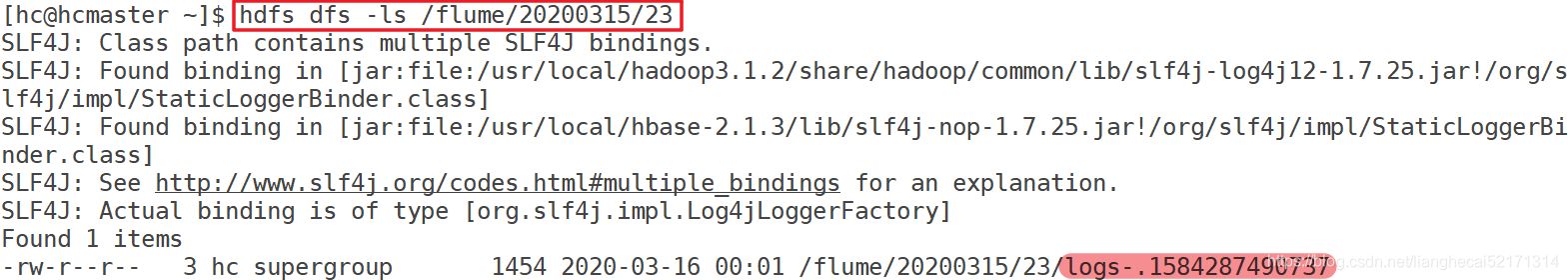
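One way to confirm that Hive really wrote to the file Flume is tailing is to compare the log's line count before and after running a command. The sketch below assumes that start-dfs.sh and hive are on the PATH, which the article does not state; the helper log_line_count is hypothetical, and the query is just an arbitrary example.

```shell
# Sketch: start HDFS, run one Hive statement, and check that hive.log grew.
# Log path taken from the exec source command in the Flume config.
HIVE_LOG="/usr/local/apache-hive-3.1.1/logs/hive.log"

# log_line_count FILE (hypothetical helper): line count, or 0 if FILE is absent.
log_line_count() {
  if [ -f "$1" ]; then
    wc -l < "$1" | tr -d ' '
  else
    echo 0
  fi
}

BEFORE="$(log_line_count "$HIVE_LOG")"

# ASSUMPTION: both commands are on the PATH; skipped otherwise.
if command -v start-dfs.sh >/dev/null 2>&1; then
  start-dfs.sh
fi
if command -v hive >/dev/null 2>&1; then
  hive -e 'show databases;'
fi

AFTER="$(log_line_count "$HIVE_LOG")"
echo "hive.log lines: before=$BEFORE after=$AFTER"
```

If the count stays at 0, Hive may be logging to a different directory than the one configured in the exec source, and the tail -F path would need to be adjusted.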

Step 4: Check HDFS:

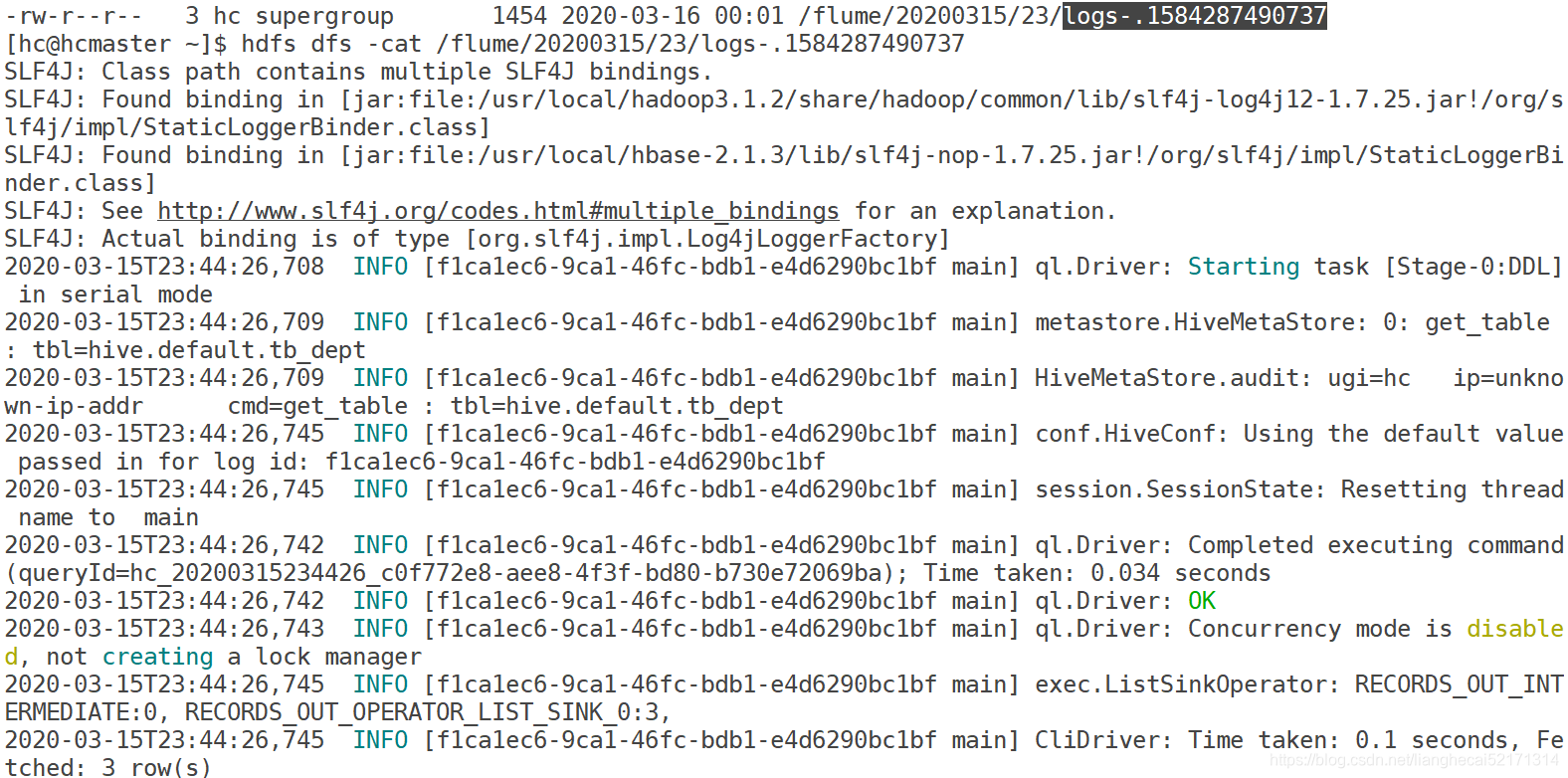
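The same check can be done from the command line. This sketch lists everything under /flume recursively, which is the root of the hdfs.path configured in step 1; it assumes the hdfs command is on the PATH.

```shell
# Sketch: list the directories and files the sink has created under /flume.
FLUME_ROOT="/flume"   # root of hdfs.path from the Flume config
LIST_CMD="hdfs dfs -ls -R $FLUME_ROOT"
echo "$LIST_CMD"

# ASSUMPTION: the hdfs CLI is on the PATH; skipped otherwise.
if command -v hdfs >/dev/null 2>&1; then
  $LIST_CMD
fi
```

A file that the sink is still writing to normally carries a .tmp suffix until it is rolled, so a freshly started agent may show only a .tmp file at first.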

View logs-.1584287490737: