Custom source operator

import org.apache.flink.streaming.api.functions.source.SourceFunction

import java.util.Calendar

import scala.util.Random

/**

* DATE:2022/10/4 0:03

* AUTHOR:GX

*/

case class Event(user:String,url:String,timestamp:Long)

// Passed to env.addSource

class ClickSource extends SourceFunction[Event]{

// With ParallelSourceFunction[Event], the operator's parallelism can be set above 1

// With SourceFunction[Event], the parallelism must be 1

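// Flag that controls the emit loop; @volatile because cancel() is called from a different thread than run()

@volatile var running = true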

override def run(sourceContext: SourceFunction.SourceContext[Event]): Unit = {

// Random number generator

val random = new Random()

// Candidate values that the random picks are drawn from

val users = Array("Mary","Alice","Bob","Cary","Leborn")

val urls = Array("./home","./cart","./fav","./prod?id=1","./prod?id=2","./prod?id=3")

// Use the flag as the loop condition and keep emitting data until cancelled

while (running) {

// Generate a random event

val event = Event(users(random.nextInt(users.length)),

urls(random.nextInt(urls.length)),

Calendar.getInstance.getTimeInMillis)

// Call collect on the source context to send the event downstream

sourceContext.collect(event)

// Emit one record per second

Thread.sleep(1000)

}

}

override def cancel(): Unit = running = false

}
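The comments in `ClickSource` note that a plain `SourceFunction` is limited to parallelism 1. A parallel variant might look like the minimal sketch below, assuming the same Flink 1.x streaming API used above. The class name `ParallelClickSource` is hypothetical, the user and URL lists are shortened for brevity, and `Event` is repeated only so that the block stands on its own.

```scala
import org.apache.flink.streaming.api.functions.source.{ParallelSourceFunction, SourceFunction}

import scala.util.Random

// Same shape as the Event case class above; omit this line if Event is already in scope
case class Event(user: String, url: String, timestamp: Long)

// Hypothetical parallel counterpart of ClickSource.
// Each parallel subtask runs its own copy of run().
class ParallelClickSource extends ParallelSourceFunction[Event] {

  // cancel() is called from a different thread than run(), hence @volatile
  @volatile private var running = true

  override def run(ctx: SourceFunction.SourceContext[Event]): Unit = {
    val random = new Random()
    val users = Array("Mary", "Alice", "Bob")
    val urls = Array("./home", "./cart", "./fav")

    while (running) {
      val event = Event(
        users(random.nextInt(users.length)),
        urls(random.nextInt(urls.length)),
        System.currentTimeMillis())
      ctx.collect(event)
      Thread.sleep(1000)
    }
  }

  override def cancel(): Unit = running = false
}
```

With a source like this, `env.addSource(new ParallelClickSource).setParallelism(2)` should be accepted, and each of the two subtasks would then emit its own stream of random events.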

Reading the data in Flink

import org.apache.flink.streaming.api.scala.{StreamExecutionEnvironment, createTypeInformation}

/**

* DATE:2022/10/4 0:21

* AUTHOR:GX

*/

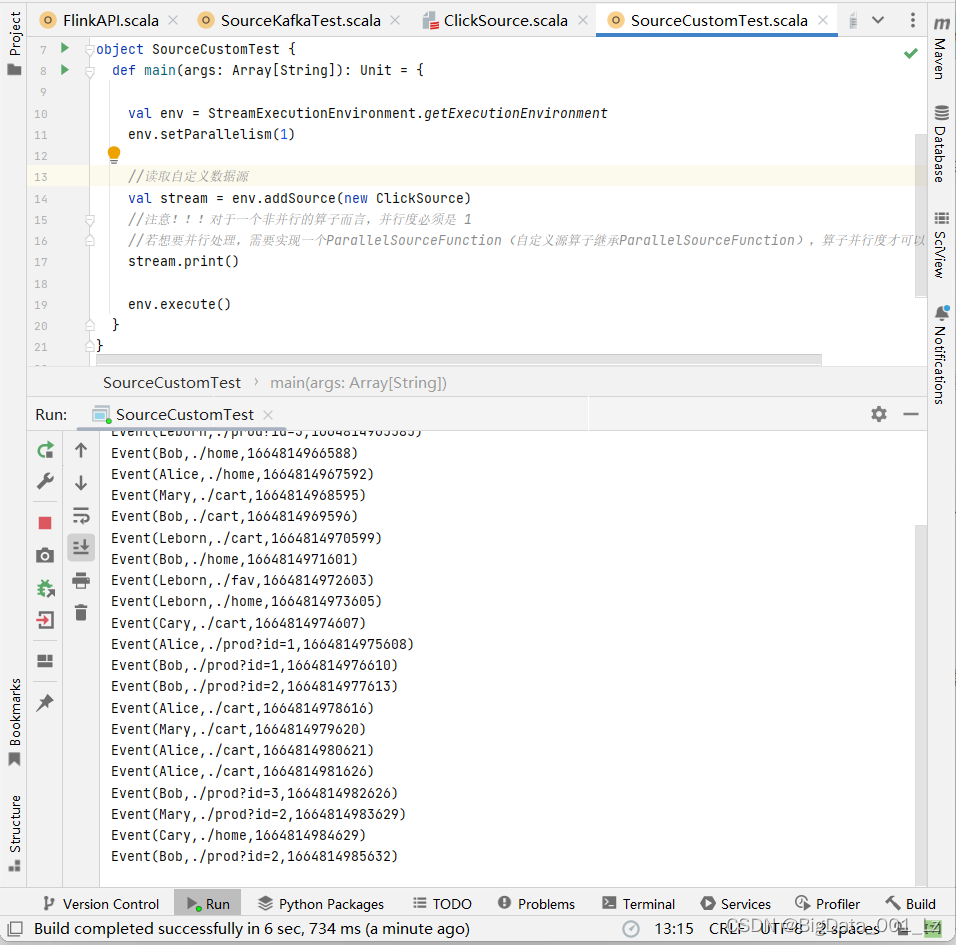

object SourceCustomTest {

def main(args: Array[String]): Unit = {

val env = StreamExecutionEnvironment.getExecutionEnvironment

env.setParallelism(1)

// Read from the custom source

val stream = env.addSource(new ClickSource)

// Note: a non-parallel source operator must have parallelism 1

// For parallel processing, the custom source has to implement ParallelSourceFunction; only then can the operator's parallelism be set above 1

stream.print()

env.execute()

}

}
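The note in the comments above (a non-parallel source must have parallelism 1) can be checked directly. The sketch below is a rough illustration, assuming the Flink 1.x Scala API: `NonParallelSourceCheck` and `TickSource` are hypothetical names introduced only for this check, and the expectation that Flink raises an `IllegalArgumentException` is based on the 1.x releases I am aware of, so it may differ in other versions.

```scala
import org.apache.flink.streaming.api.functions.source.SourceFunction
import org.apache.flink.streaming.api.scala.{StreamExecutionEnvironment, createTypeInformation}

import scala.util.Try

object NonParallelSourceCheck {

  // Hypothetical minimal non-parallel source, used only for this check
  class TickSource extends SourceFunction[Long] {
    @volatile private var running = true

    override def run(ctx: SourceFunction.SourceContext[Long]): Unit = {
      var n = 0L
      while (running) {
        ctx.collect(n)
        n += 1
        Thread.sleep(1000)
      }
    }

    override def cancel(): Unit = running = false
  }

  // Returns true if setting parallelism 2 on a plain SourceFunction is rejected
  // with an IllegalArgumentException while the job graph is being built
  def rejectsParallelism(): Boolean = {
    val env = StreamExecutionEnvironment.getExecutionEnvironment
    Try(env.addSource(new TickSource).setParallelism(2)).failed.toOption
      .exists(_.isInstanceOf[IllegalArgumentException])
  }

  def main(args: Array[String]): Unit =
    println(s"parallelism 2 rejected: ${rejectsParallelism()}")
}
```

The job is never executed here; the check happens when the source is added to the environment, which is why no `env.execute()` call is needed.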