
In production we regularly run into cases where an input source has to be split into several streams according to some criterion.

Note: the methods described here can only be used on streams (DataStream).

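Splitting methods

1. Splitting with filter (not recommended)

Define a filter() function: a record is kept if the function returns true and dropped otherwise. For splitting, applying filter several times to the same stream does produce a separate stream for each kind of record you need.

filter example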

public static void main(String[] args) throws Exception {

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

//获取数据源

List data = new ArrayList<Tuple3<Integer,Integer,Integer>>();

data.add(new Tuple3<>(0,1,0));

data.add(new Tuple3<>(0,1,1));

data.add(new Tuple3<>(0,2,2));

data.add(new Tuple3<>(0,1,3));

data.add(new Tuple3<>(1,2,5));

data.add(new Tuple3<>(1,2,9));

data.add(new Tuple3<>(1,2,11));

data.add(new Tuple3<>(1,2,13));

DataStreamSource<Tuple3<Integer,Integer,Integer>> items = env.fromCollection(data);

SingleOutputStreamOperator<Tuple3<Integer, Integer, Integer>> zeroStream = items.filter((FilterFunction<Tuple3<Integer, Integer, Integer>>) value -> value.f0 == 0);

SingleOutputStreamOperator<Tuple3<Integer, Integer, Integer>> oneStream = items.filter((FilterFunction<Tuple3<Integer, Integer, Integer>>) value -> value.f0 == 1);

zeroStream.print();

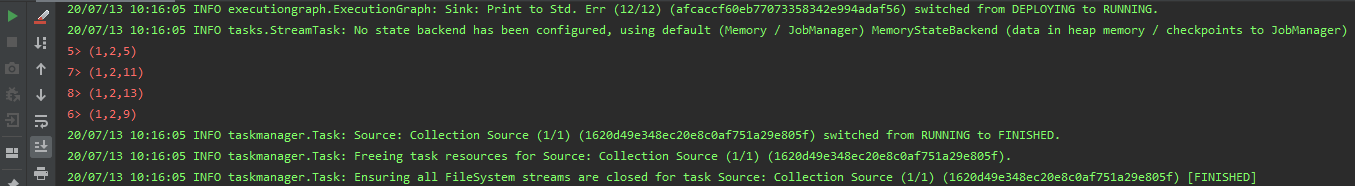

oneStream.printToErr();

//打印结果

String jobName = "user defined streaming source";

env.execute(jobName);

}

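Drawback of filter

To obtain the streams we need, every filter has to process the entire original stream, so each record is evaluated once per filter. That quietly wastes cluster resources.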
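To make that cost concrete, here is a minimal plain-Java sketch. It is not Flink code: the class `FilterCostSketch` and its methods are hypothetical and only model the idea. It counts predicate evaluations when two independent filters each see all eight sample records, and contrasts that with visiting each record once and routing it to a bucket.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.IntPredicate;

// Plain-Java model of the cost argument; this is not Flink code.
public class FilterCostSketch {

    // The f0 values of the eight sample tuples used in the examples.
    static final int[] KEYS = {0, 0, 0, 0, 1, 1, 1, 1};

    // Runs every filter over every record, the way independent filters do,
    // and returns how many predicate evaluations that took.
    static int evaluationsWithFilters(IntPredicate... filters) {
        int evaluations = 0;
        for (IntPredicate filter : filters) {
            for (int key : KEYS) {
                filter.test(key);
                evaluations++;
            }
        }
        return evaluations;
    }

    // Visits every record exactly once and routes it to the bucket for its key.
    static Map<Integer, List<Integer>> routeOnce() {
        Map<Integer, List<Integer>> buckets = new HashMap<>();
        for (int key : KEYS) {
            buckets.computeIfAbsent(key, k -> new ArrayList<>()).add(key);
        }
        return buckets;
    }

    public static void main(String[] args) {
        int evaluations = evaluationsWithFilters(k -> k == 0, k -> k == 1);
        System.out.println("evaluations with two filters: " + evaluations);
        System.out.println("records visited when routing once: " + KEYS.length);
    }
}
```

With two filters over eight records the sketch counts 16 evaluations, while single-pass routing touches each of the eight records once. The count grows with the number of output streams in the filter approach but stays fixed when routing. This models only the per-record work, not how Flink actually schedules or chains operators.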

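2. Splitting with split (deprecated)

split is another method Flink provides for splitting a stream. Define an OutputSelector in the split operator and override its select method to tag each record, then call select() on the returned SplitStream to pick out the records carrying a given tag.

split example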

import org.apache.flink.api.java.tuple.Tuple3;
import org.apache.flink.streaming.api.collector.selector.OutputSelector;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.SplitStream;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import java.util.ArrayList;
import java.util.List;

public class SideOutDemo {
    public static void main(String[] args) throws Exception {
        final StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

        // Build the data source
        List<Tuple3<Integer, Integer, Integer>> data = new ArrayList<>();
        data.add(new Tuple3<>(0, 1, 0));
        data.add(new Tuple3<>(0, 1, 1));
        data.add(new Tuple3<>(0, 2, 2));
        data.add(new Tuple3<>(0, 1, 3));
        data.add(new Tuple3<>(1, 2, 5));
        data.add(new Tuple3<>(1, 2, 9));
        data.add(new Tuple3<>(1, 2, 11));
        data.add(new Tuple3<>(1, 2, 13));
        final DataStreamSource<Tuple3<Integer, Integer, Integer>> streamSource = env.fromCollection(data);

        // split is deprecated; the selector tags each record by its f0 value
        final SplitStream<Tuple3<Integer, Integer, Integer>> split = streamSource.split(new OutputSelector<Tuple3<Integer, Integer, Integer>>() {
            @Override
            public Iterable<String> select(Tuple3<Integer, Integer, Integer> value) {
                final ArrayList<String> tags = new ArrayList<>();
                if (value.f0 == 0) {
                    tags.add("zeroStream");
                } else if (value.f0 == 1) {
                    tags.add("oneStream");
                }
                return tags;
            }
        });

        // Select each tagged stream: zeroStream to stdout, oneStream to stderr
        split.select("zeroStream").print();
        split.select("oneStream").printToErr();

        String jobName = "user defined streaming source";
        env.execute(jobName);
    }
}

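Drawback of split

A stream that has already been split with the split operator cannot be split a second time. Trying to split the zeroStream or oneStream streams from the example above again throws an exception: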

java.lang.IllegalStateException: Consecutive multiple splits are not supported. Splits are deprecated. Please use side-outputs.

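3. Splitting with side outputs

Side outputs are the newest splitting mechanism the Flink framework provides, and the most recommended one. Steps:

- Define an OutputTag
- Call one of the following functions to split the data:
  - ProcessFunction
  - KeyedProcessFunction
  - CoProcessFunction
  - KeyedCoProcessFunction
  - ProcessWindowFunction
  - ProcessAllWindowFunction

The example here uses ProcessFunction.

Side output example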

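import org.apache.flink.api.java.tuple.Tuple3;
import org.apache.flink.streaming.api.datastream.DataStream;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.functions.ProcessFunction;
import org.apache.flink.util.Collector;
import org.apache.flink.util.OutputTag;
import java.util.ArrayList;
import java.util.List;

// The class name SideOutputDemo is illustrative; the original snippet showed only main().
public class SideOutputDemo {
    public static void main(String[] args) throws Exception {
        final StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

        // Build the data source
        List<Tuple3<Integer, Integer, Integer>> data = new ArrayList<>();
        data.add(new Tuple3<>(0, 1, 0));
        data.add(new Tuple3<>(0, 1, 1));
        data.add(new Tuple3<>(0, 2, 2));
        data.add(new Tuple3<>(0, 1, 3));
        data.add(new Tuple3<>(1, 2, 5));
        data.add(new Tuple3<>(1, 2, 9));
        data.add(new Tuple3<>(1, 2, 11));
        data.add(new Tuple3<>(1, 2, 13));
        final DataStreamSource<Tuple3<Integer, Integer, Integer>> streamSource = env.fromCollection(data);

        // Tags are created as anonymous subclasses ({}) so the generic type information is retained
        final OutputTag<Tuple3<Integer, Integer, Integer>> zeroStream = new OutputTag<Tuple3<Integer, Integer, Integer>>("zeroStream1") {};
        final OutputTag<Tuple3<Integer, Integer, Integer>> oneStream = new OutputTag<Tuple3<Integer, Integer, Integer>>("oneStream1") {};

        // Route each record to a side output by its f0 value; nothing is emitted to the main output
        final SingleOutputStreamOperator<Tuple3<Integer, Integer, Integer>> process = streamSource.process(new ProcessFunction<Tuple3<Integer, Integer, Integer>, Tuple3<Integer, Integer, Integer>>() {
            @Override
            public void processElement(Tuple3<Integer, Integer, Integer> value, Context ctx, Collector<Tuple3<Integer, Integer, Integer>> out) throws Exception {
                if (value.f0 == 0) {
                    ctx.output(zeroStream, value);
                } else if (value.f0 == 1) {
                    ctx.output(oneStream, value);
                }
            }
        });

        // Retrieve the side outputs by tag
        final DataStream<Tuple3<Integer, Integer, Integer>> zeroSideOutput = process.getSideOutput(zeroStream);
        final DataStream<Tuple3<Integer, Integer, Integer>> oneSideOutput = process.getSideOutput(oneStream);

        zeroSideOutput.print();
        oneSideOutput.printToErr();

        String jobName = "user defined streaming source";
        env.execute(jobName);
    }
}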

Example: splitting a side output a second time

import org.apache.flink.api.java.tuple.Tuple3;
import org.apache.flink.streaming.api.datastream.DataStream;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.functions.ProcessFunction;
import org.apache.flink.util.Collector;
import org.apache.flink.util.OutputTag;
import java.util.ArrayList;
import java.util.List;

// The class name SideOutputResplitDemo is illustrative; the original snippet showed only main().
public class SideOutputResplitDemo {
    public static void main(String[] args) throws Exception {
        final StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

        // Build the data source
        List<Tuple3<Integer, Integer, Integer>> data = new ArrayList<>();
        data.add(new Tuple3<>(0, 1, 0));
        data.add(new Tuple3<>(0, 1, 1));
        data.add(new Tuple3<>(0, 2, 2));
        data.add(new Tuple3<>(0, 1, 3));
        data.add(new Tuple3<>(1, 2, 5));
        data.add(new Tuple3<>(1, 2, 9));
        data.add(new Tuple3<>(1, 2, 11));
        data.add(new Tuple3<>(1, 2, 13));
        final DataStreamSource<Tuple3<Integer, Integer, Integer>> streamSource = env.fromCollection(data);

        // Tags are created as anonymous subclasses ({}) so the generic type information is retained
        final OutputTag<Tuple3<Integer, Integer, Integer>> zeroStream = new OutputTag<Tuple3<Integer, Integer, Integer>>("zeroStream1") {};
        final OutputTag<Tuple3<Integer, Integer, Integer>> oneStream = new OutputTag<Tuple3<Integer, Integer, Integer>>("oneStream1") {};

        // First-level split: route each record to a side output by its f0 value
        final SingleOutputStreamOperator<Tuple3<Integer, Integer, Integer>> process = streamSource.process(new ProcessFunction<Tuple3<Integer, Integer, Integer>, Tuple3<Integer, Integer, Integer>>() {
            @Override
            public void processElement(Tuple3<Integer, Integer, Integer> value, Context ctx, Collector<Tuple3<Integer, Integer, Integer>> out) throws Exception {
                if (value.f0 == 0) {
                    ctx.output(zeroStream, value);
                } else if (value.f0 == 1) {
                    ctx.output(oneStream, value);
                }
            }
        });

        final DataStream<Tuple3<Integer, Integer, Integer>> zeroSideOutput = process.getSideOutput(zeroStream);
        final DataStream<Tuple3<Integer, Integer, Integer>> oneSideOutput = process.getSideOutput(oneStream);

        //zeroSideOutput.print();
        //oneSideOutput.printToErr();

        // Second-level split: from zeroSideOutput, route records with f1 == 2 to another side output
        final OutputTag<Tuple3<Integer, Integer, Integer>> f1twoStream = new OutputTag<Tuple3<Integer, Integer, Integer>>("f1twoStream") {};

        final SingleOutputStreamOperator<Tuple3<Integer, Integer, Integer>> process1 = zeroSideOutput.process(new ProcessFunction<Tuple3<Integer, Integer, Integer>, Tuple3<Integer, Integer, Integer>>() {
            @Override
            public void processElement(Tuple3<Integer, Integer, Integer> value, Context ctx, Collector<Tuple3<Integer, Integer, Integer>> collector) throws Exception {
                if (value.f1 == 2) {
                    ctx.output(f1twoStream, value);
                }
            }
        });

        final DataStream<Tuple3<Integer, Integer, Integer>> sideOutput = process1.getSideOutput(f1twoStream);
        sideOutput.printToErr();

        String jobName = "user defined streaming source";
        env.execute(jobName);
    }
}