XPath Basics

# XPath Expressions

'''

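XPath vs. regular expressions:

1. XPath is efficient for structured documents: a short path expression locates a node by its position in the tag tree.

2. Regular expressions are more powerful: they can match arbitrary text patterns, not only document structure.

3. In general, prefer XPath, and fall back to regular expressions for problems XPath cannot solve.

# A brief quick-start guide; a more complete version will be added later

/ selects level by level, one step per tag

text() extracts the text inside a tag

//A selects every tag named A

//A[@B='b'] selects the A tags whose attribute B has the value b

@B extracts the value of attribute B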

<html>
  <head><title>I am the title</title></head>
  <body>
    <div class='tools'>
      <div class="newhead">
        <ul class="newhead_oprate">
          <li>
            <a target="_blank">I am the content</a>
          </li>
        </ul>
      </div>
    </div>
    <div><div class="newhead"></div></div>
  </body>
</html>

Examples:

Extract the title: /html/head/title/text() -> I am the title

Select all div tags: //div

Select the <div class='tools'> tag among the divs: //div[@class='tools']

Extract "I am the content": //ul[@class='newhead_oprate']/li/a/text()

'''
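The expressions above can be tried out without a crawler. The following is a minimal sketch that embeds its own well-formed copy of the sample page and queries it with the standard library's `xml.etree.ElementTree`. That module supports only a subset of XPath, so the `text()` and `@attribute` steps are replaced here by `.text` / `findtext()` and `.get()`; paths are also written relative to the root `<html>` element. The variable names are illustrative only.

```python
import xml.etree.ElementTree as ET

# Well-formed copy of the sample page (every tag closed so it parses as XML)
SAMPLE_PAGE = """
<html>
<head><title>I am the title</title></head>
<body>
<div class='tools'>
<div class="newhead">
<ul class="newhead_oprate">
<li>
<a target="_blank">I am the content</a>
</li>
</ul>
</div>
</div>
<div><div class="newhead"></div></div>
</body>
</html>
"""

root = ET.fromstring(SAMPLE_PAGE.strip())  # root is the <html> element

# /html/head/title/text(): walk down level by level, then take the text
title = root.findtext("head/title")

# //div: every div at any depth, in document order
all_divs = root.findall(".//div")

# //div[@class='tools']: filter by attribute value
tools_divs = root.findall(".//div[@class='tools']")

# //ul[@class='newhead_oprate']/li/a/text(): text of the link
link = root.find(".//ul[@class='newhead_oprate']/li/a")
content = link.text

# @target: value of a single attribute
target = link.get("target")
```

In a Scrapy callback the full XPath strings, including `text()` and `@attribute`, can be passed to `response.xpath(...)` directly, as the spider later in these notes does.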

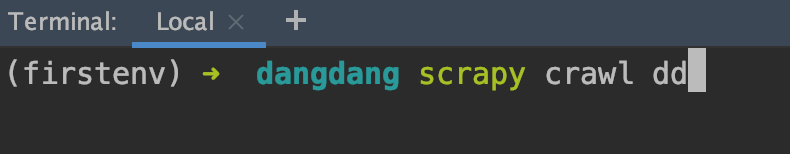

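A Simple Scrapy Crawler Example

I. Create the project

scrapy startproject dangdang

II. Create the spider

scrapy genspider dd "dangdang.com"

III. Write the code

1. Write the items file

The items file defines the fields to be stored; the scraped data is held in an Item class.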

import scrapy


class DangdangItem(scrapy.Item):
    # define the fields for your item here like:
    # name = scrapy.Field()
    title = scrapy.Field()
    link = scrapy.Field()
    comment = scrapy.Field()

2. Write the spider file

# -*- coding: utf-8 -*-
import scrapy
from scrapy.http import Request

from ..items import DangdangItem


class DdSpider(scrapy.Spider):
    name = 'dd'
    allowed_domains = ['dangdang.com']
    start_urls = ['http://search.dangdang.com/?key=%CE%C0%D2%C2&category_id=10010336&page_index=1']

    def parse(self, response):
        # Create the item container
        item = DangdangItem()
        # Extract the data; each field holds a list with one entry per matched tag
        item["title"] = response.xpath("//a[@name='itemlist-title']/@title").extract()
        item["link"] = response.xpath("//a[@name='itemlist-title']/@href").extract()
        item["comment"] = response.xpath("//a[@name='itemlist-review']/text()").extract()
        #print(item["title"])
        # Hand the item to the pipeline. Pipelines are disabled by default;
        # uncomment ITEM_PIPELINES in the settings file to enable them.
        yield item
        # Queue result pages 2 to 5; Scrapy's duplicate filter drops repeated requests
        for i in range(2, 6):
            url = 'http://search.dangdang.com/?key=%CE%C0%D2%C2&category_id=10010336&page_index=' + str(i)
            yield Request(url, callback=self.parse)
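The loop in `parse` uses `range(2, 6)`, which stops before 6, so together with the start URL the spider requests pages 1 to 5. A minimal sketch of the same URL construction as a standalone helper, so the page range can be checked without running the crawler; `BASE_URL` and `page_urls` are hypothetical names that do not appear in the spider.

```python
from typing import List

# Search URL from the spider, without the page number at the end
BASE_URL = "http://search.dangdang.com/?key=%CE%C0%D2%C2&category_id=10010336&page_index="


def page_urls(first_page: int, last_page: int) -> List[str]:
    """Return the result-page URLs for first_page..last_page, inclusive."""
    return [BASE_URL + str(page) for page in range(first_page, last_page + 1)]


# Same pages as range(2, 6) in the spider: 2, 3, 4, 5
urls = page_urls(2, 5)
```

Making the end of the range inclusive in the helper avoids the off-by-one reading of `range(2, 6)` as "pages 2 to 6".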

3. Write the pipeline file

# -*- coding: utf-8 -*-
import pymysql

# Define your item pipelines here
#
# Don't forget to add your pipeline to the ITEM_PIPELINES setting
# See: https://doc.scrapy.org/en/latest/topics/item-pipeline.html


class DangdangPipeline(object):
    def process_item(self, item, spider):
        conn = pymysql.connect(host="127.0.0.1", user="root", password="your database password", database="dd")  # , charset="utf8"
        cursor = conn.cursor()
        # Parameterized statement: the driver escapes the values, so a quote
        # in a title cannot break the statement or inject SQL
        sql = "insert into goods(title,link,comment) values (%s, %s, %s)"
        # zip stops at the shortest list, so lists of unequal length cannot raise IndexError
        for title, link, comment in zip(item["title"], item["link"], item["comment"]):
            #print(title+" : "+link+" : "+comment)
            try:
                cursor.execute(sql, (title, link, comment))
                conn.commit()
            except Exception as err:
                print(err)
        conn.close()
        return item
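The pipeline hands the values to `cursor.execute` separately from the SQL text instead of gluing them into the string. A minimal sketch of why that matters, using the standard library's `sqlite3` with an in-memory database as a stand-in for MySQL. The table schema and the sample row are assumptions made for illustration, and `sqlite3` writes placeholders as `?` where `pymysql` uses `%s`.

```python
import sqlite3

# In-memory SQLite database standing in for the MySQL "dd" database (assumption)
conn = sqlite3.connect(":memory:")
# Assumed schema: three text columns matching the pipeline's INSERT statement
conn.execute("create table goods (title text, link text, comment text)")

# Hypothetical row whose title contains a single quote
row = ("Men's hoodie", "http://example.com/item/1", "12 reviews")

# String concatenation: the quote in the title ends the SQL string early
bad_sql = ("insert into goods(title,link,comment) values ('"
           + row[0] + "','" + row[1] + "','" + row[2] + "')")
try:
    conn.execute(bad_sql)
    concat_failed = False
except sqlite3.OperationalError:
    concat_failed = True

# Parameterized statement: the driver passes the values safely
conn.execute("insert into goods(title,link,comment) values (?, ?, ?)", row)
conn.commit()

stored = conn.execute("select title, link, comment from goods").fetchall()
conn.close()
```

The concatenated statement fails on ordinary data, and with hostile data it could run unintended SQL, which is the reason to keep only the parameterized form.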

4. Modify the settings file
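The pipeline runs only after it is registered in the project's settings file. A minimal sketch of that entry, assuming the default layout generated by `scrapy startproject dangdang`, so that the pipeline's import path is `dangdang.pipelines.DangdangPipeline`. The priority 300 is the value used in Scrapy's generated template; by convention priorities lie in the 0 to 1000 range, and lower values run first.

```python
# settings.py: uncomment (or add) this entry to enable the pipeline
ITEM_PIPELINES = {
    "dangdang.pipelines.DangdangPipeline": 300,
}
```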

IV. Test