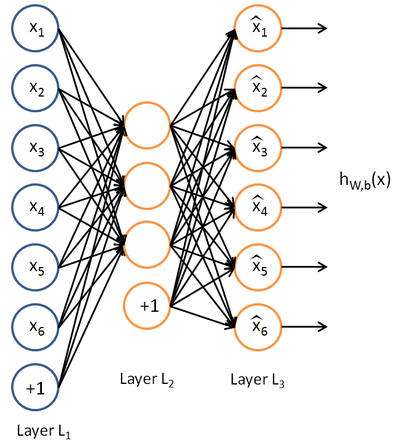

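An autoencoder is a special kind of neural network whose output target is its own input: it essentially tries to reconstruct the input at its output. Because it needs no manual labels, it can be trained in an unsupervised way.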
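To make "the target is the input" concrete, here is a minimal NumPy sketch rather than the TensorFlow code used later. It assumes a one-hidden-layer autoencoder with a tanh hidden layer and a linear output, trained by plain gradient descent on random unlabeled data. The sizes, learning rate and activation are arbitrary choices for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Unlabeled toy data: 256 samples with 8 features, no targets anywhere.
X = rng.normal(size=(256, 8))

n_input, n_hidden = 8, 3
W1 = rng.normal(scale=0.1, size=(n_input, n_hidden))   # encoder weights
b1 = np.zeros(n_hidden)
W2 = rng.normal(scale=0.1, size=(n_hidden, n_input))   # decoder weights
b2 = np.zeros(n_input)


def forward(X):
    H = np.tanh(X @ W1 + b1)   # hidden code (the learned features)
    R = H @ W2 + b2            # reconstruction, same shape as X
    return H, R


def loss(X):
    _, R = forward(X)
    # The "label" for each sample is the sample itself.
    return 0.5 * np.mean(np.sum((R - X) ** 2, axis=1))


lr = 0.05
initial = loss(X)
for step in range(500):
    H, R = forward(X)
    dR = (R - X) / len(X)              # d(loss)/d(R)
    dH = (dR @ W2.T) * (1 - H ** 2)    # backprop through tanh (uses W2 before its update)
    W2 -= lr * H.T @ dR
    b2 -= lr * dR.sum(axis=0)
    W1 -= lr * X.T @ dH
    b1 -= lr * dH.sum(axis=0)
final = loss(X)
print(f"reconstruction loss: {initial:.3f} -> {final:.3f}")
```

No label array appears anywhere: the training signal comes entirely from comparing the reconstruction `R` with `X`.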

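An autoencoder is itself a neural network. Compared with an ordinary (supervised) neural network, it differs as follows:

1. Autoencoders suit unsupervised learning: they can extract high-level features without any labels.

2. The input and the output are the same: the network is expected to distill high-level features and use them to reconstruct its own input.

3. An autoencoder with a single hidden layer is similar to principal component analysis (PCA): with linear activations and a squared-error loss, it learns the same subspace that PCA finds.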
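The PCA connection can be checked directly. The NumPy sketch below treats PCA as a linear autoencoder with tied weights: the encoder projects centered data onto the top-k principal directions and the decoder maps back with the transpose. Its reconstruction error equals the sum of the discarded squared singular values, which is the best any rank-k linear encoder/decoder pair can achieve; a linear autoencoder trained with squared error reaches the same error at its optimum, although its weights need not be orthonormal. The toy data and k = 2 are arbitrary assumptions.

```python
import numpy as np

rng = np.random.default_rng(1)
X = rng.normal(size=(200, 5)) @ rng.normal(size=(5, 5))  # correlated toy data
Xc = X - X.mean(axis=0)                                  # PCA works on centered data

k = 2
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
V_k = Vt[:k].T            # (5, k): top-k principal directions

codes = Xc @ V_k          # "encoder": linear map down to k dimensions
recon = codes @ V_k.T     # "decoder": transpose of the encoder (tied weights)

err = np.sum((Xc - recon) ** 2)
# Eckart-Young: the optimal rank-k reconstruction error is the sum of the
# squared singular values that were dropped.
print(err, np.sum(s[k:] ** 2))
```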

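Practical uses:

First use the autoencoder for unsupervised pretraining, which extracts features and initializes the weights, then train the network in a supervised way with the labeled data.

Pretraining is not the only use: the autoencoder can also be used directly for feature extraction and analysis (dimensionality reduction).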
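A minimal NumPy sketch of both uses, under made-up assumptions (random data, 10 input features reduced to 4, a synthetic binary label, tanh activations, plain gradient descent): an autoencoder is pretrained on unlabeled data, its encoder maps the labeled samples to 4-dimensional features, and the same encoder weights then initialize the first layer of a small classifier that is fine-tuned on the labels. The helper `train_autoencoder` is hypothetical, written only for this illustration.

```python
import numpy as np

rng = np.random.default_rng(2)

# Made-up data: plenty of unlabeled samples, only a few labeled ones.
X_unlab = rng.normal(size=(500, 10))
X_lab = rng.normal(size=(40, 10))
y_lab = (X_lab[:, 0] + X_lab[:, 1] > 0).astype(float)


def train_autoencoder(X, n_hidden, lr=0.05, steps=300):
    """Hypothetical helper: unsupervised pretraining by reconstructing X."""
    n_in = X.shape[1]
    W1 = rng.normal(scale=0.1, size=(n_in, n_hidden))
    b1 = np.zeros(n_hidden)
    W2 = rng.normal(scale=0.1, size=(n_hidden, n_in))
    b2 = np.zeros(n_in)
    for _ in range(steps):
        H = np.tanh(X @ W1 + b1)
        dR = (H @ W2 + b2 - X) / len(X)      # gradient of the mean squared error
        dH = (dR @ W2.T) * (1 - H ** 2)      # backprop through tanh
        W2 -= lr * H.T @ dR
        b2 -= lr * dR.sum(axis=0)
        W1 -= lr * X.T @ dH
        b1 -= lr * dH.sum(axis=0)
    return W1, b1                            # only the encoder is kept


# 1) Unsupervised pretraining: no labels are used here.
W1, b1 = train_autoencoder(X_unlab, n_hidden=4)

# 2) Feature extraction / dimensionality reduction: 10 -> 4 dimensions.
features = np.tanh(X_lab @ W1 + b1)

# 3) Supervised fine-tuning: the pretrained encoder is the first layer, a
#    logistic output layer is added, and both are trained on the labels.
w, c, lr = np.zeros(4), 0.0, 0.1
for _ in range(1000):
    H = np.tanh(X_lab @ W1 + b1)
    p = 1 / (1 + np.exp(-(H @ w + c)))       # predicted probability of class 1
    g = (p - y_lab) / len(y_lab)             # d(mean cross-entropy)/d(logit)
    dH = np.outer(g, w) * (1 - H ** 2)       # computed before w is updated
    w -= lr * H.T @ g
    c -= lr * g.sum()
    W1 -= lr * X_lab.T @ dH
    b1 -= lr * dH.sum(axis=0)

p = 1 / (1 + np.exp(-(np.tanh(X_lab @ W1 + b1) @ w + c)))
ce = -np.mean(y_lab * np.log(p) + (1 - y_lab) * np.log(1 - p))
print("fine-tuned cross-entropy:", ce)
```

Freezing `W1` and `b1` in step 3 and training only `w` and `c` would be the pure feature-extraction variant; updating them as well is what "initialize the weights, then train with labels" amounts to.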

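TensorFlow implementation:

The most representative variant is the denoising autoencoder, which corrupts its input (in the example below, with additive Gaussian noise) and learns to reconstruct the clean input.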
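The core of the denoising variant fits in a few lines of NumPy: corrupt the input, reconstruct it, and measure the error against the clean input. This sketch assumes additive Gaussian noise (the corruption used by the listing below) and accepts arbitrary `encode`/`decode` functions; other corruptions, such as randomly masking inputs, are also common. It draws independent noise for every sample, whereas the listing's `tf.random_normal((n_input,))` draws one noise vector per run and adds it to every row of the batch.

```python
import numpy as np

rng = np.random.default_rng(3)


def corrupt(x, scale):
    """Additive Gaussian noise, drawn independently for every entry of x."""
    return x + scale * rng.normal(size=x.shape)


def denoising_loss(x, encode, decode, scale=0.1):
    """0.5 * squared error between the reconstruction of the NOISY input and the CLEAN input."""
    x_noisy = corrupt(x, scale)
    recon = decode(encode(x_noisy))
    # The target is the clean x, not x_noisy: the model is pushed to learn
    # features that undo the noise instead of copying it through.
    return 0.5 * np.sum((recon - x) ** 2)


def identity(v):
    """Stand-in 'model' for the usage example; a real encoder/decoder goes here."""
    return v


x = np.ones((4, 6))
print(denoising_loss(x, identity, identity, scale=0.0))  # 0.0: no noise, exact copy
print(denoising_loss(x, identity, identity, scale=0.5))  # > 0: identity keeps the noise
```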

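1. Define a class containing:

   - the network structure, i.e. the math: the hidden-layer activation function and the reconstruction function of the output layer;

   - the loss function;

   - member functions to call: weight initialization, computing the cost and training, reconstruction, and extracting the weights.

2. Load the data, standardize it, and train iteratively.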
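Step 2 can be sketched in NumPy as follows; this is an illustration, not the listing's code. It standardizes with statistics from the training set only, then iterates over shuffled minibatches. `standard_scale` mirrors what `sklearn.preprocessing.StandardScaler` does, including leaving constant features with a scale of 1. `minibatches` is a hypothetical helper that, unlike `get_random_block_from_data` in the listing, uses every sample at most once per epoch (only the final partial batch is dropped). Random data stands in for MNIST.

```python
import numpy as np


def standard_scale(x_train, x_test):
    """Zero mean, unit variance per feature, using TRAINING statistics only."""
    mean = x_train.mean(axis=0)
    std = x_train.std(axis=0)
    std[std == 0] = 1.0          # constant features (e.g. always-0 MNIST border pixels)
    return (x_train - mean) / std, (x_test - mean) / std


def minibatches(data, batch_size, rng):
    """One epoch of shuffled minibatches; the final partial batch is dropped."""
    idx = rng.permutation(len(data))
    for start in range(0, len(data) - batch_size + 1, batch_size):
        yield data[idx[start:start + batch_size]]


rng = np.random.default_rng(4)
x_train = rng.random((1000, 784))
x_test = rng.random((200, 784))
x_train[:, 0] = 0.0              # a constant feature, like an always-black pixel
x_test[:, 0] = 0.0
x_train, x_test = standard_scale(x_train, x_test)

for epoch in range(2):
    n_seen = 0
    for batch in minibatches(x_train, 128, rng):
        n_seen += len(batch)     # a real model would run one training step here
    print(f"epoch {epoch + 1}: {n_seen} samples in full batches")
```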

Example:

```python
# This listing uses the TensorFlow 1.x API (tf.placeholder, tf.Session, tf.train.*)
# and the tensorflow.examples.tutorials MNIST loader, which TensorFlow 2 no longer ships.
import numpy as np
import sklearn.preprocessing as prep
import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data


# Xavier initialization scales the distribution automatically according to the
# number of input and output nodes of a layer.
# If the weights are initialized too small, the signal shrinks as it passes
# through each layer and stops having any effect; if they are too large, the
# signal grows layer by layer and the network diverges.
# Xavier draws weights with zero mean and variance 2 / (n_in + n_out). A uniform
# distribution U(-a, a) with a = sqrt(6 / (n_in + n_out)) satisfies this,
# since Var(U(-a, a)) = a^2 / 3 = 2 / (n_in + n_out).
def xavier_init(fan_in, fan_out, constant=1):
    low = -constant * np.sqrt(6.0 / (fan_in + fan_out))
    high = constant * np.sqrt(6.0 / (fan_in + fan_out))
    return tf.random_uniform((fan_in, fan_out),
                             minval=low, maxval=high,
                             dtype=tf.float32)


# Denoising autoencoder class: a constructor __init__() plus common member functions
class AdditiveGaussianNoiseAutoencoder(object):
    # n_input: number of input variables; n_hidden: number of hidden nodes;
    # transfer_function: hidden-layer activation; scale: Gaussian noise coefficient
    def __init__(self, n_input, n_hidden, transfer_function=tf.nn.softplus,
                 optimizer=tf.train.AdamOptimizer(), scale=0.1):
        self.n_input = n_input
        self.n_hidden = n_hidden
        self.transfer = transfer_function
        self.scale = tf.placeholder(tf.float32)
        self.training_scale = scale
        network_weights = self._initialize_weights()
        self.weights = network_weights

        # Network structure: add noise to the input, encode, then reconstruct.
        # The noise is scaled by the self.scale placeholder, fed at run time.
        # tf.random_normal((n_input,)) draws one noise vector per run and
        # broadcasts it over every sample in the batch.
        self.x = tf.placeholder(tf.float32, [None, self.n_input])
        self.hidden = self.transfer(tf.add(tf.matmul(
            self.x + self.scale * tf.random_normal((n_input,)),
            self.weights['w1']), self.weights['b1']))
        self.reconstruction = tf.add(tf.matmul(self.hidden,
                                               self.weights['w2']),
                                     self.weights['b2'])

        # Loss: squared error between the reconstruction and the clean input
        self.cost = 0.5 * tf.reduce_sum(tf.pow(tf.subtract(
            self.reconstruction, self.x), 2.0))
        self.optimizer = optimizer.minimize(self.cost)

        init = tf.global_variables_initializer()
        self.sess = tf.Session()
        self.sess.run(init)

    # Parameter initialization
    def _initialize_weights(self):
        all_weights = dict()
        all_weights['w1'] = tf.Variable(xavier_init(self.n_input, self.n_hidden))
        all_weights['b1'] = tf.Variable(tf.zeros([self.n_hidden], dtype=tf.float32))
        all_weights['w2'] = tf.Variable(tf.zeros([self.n_hidden, self.n_input],
                                                 dtype=tf.float32))
        all_weights['b2'] = tf.Variable(tf.zeros([self.n_input], dtype=tf.float32))
        return all_weights

    # Compute the cost and run one training step
    def partial_fit(self, x):
        cost, opt = self.sess.run((self.cost, self.optimizer),
                                  feed_dict={self.x: x, self.scale: self.training_scale})
        return cost

    # Compute the cost only, without training
    def calc_total_cost(self, x):
        return self.sess.run(self.cost,
                             feed_dict={self.x: x, self.scale: self.training_scale})

    # Return the hidden-layer output (the extracted high-level features)
    def transform(self, x):
        return self.sess.run(self.hidden,
                             feed_dict={self.x: x, self.scale: self.training_scale})

    # Feed hidden-layer outputs into the reconstruction layer to restore
    # the original data from the high-level features
    def generate(self, hidden=None):
        if hidden is None:
            # Sample one random code vector (a batch of size 1)
            hidden = np.random.normal(size=(1, self.n_hidden))
        return self.sess.run(self.reconstruction,
                             feed_dict={self.hidden: hidden})

    # Run the whole pipeline once: extract features, then reconstruct from them
    def reconstruct(self, x):
        return self.sess.run(self.reconstruction,
                             feed_dict={self.x: x, self.scale: self.training_scale})

    # Get the hidden-layer weights W1
    def getWeights(self):
        return self.sess.run(self.weights['w1'])

    # Get the hidden-layer biases b1
    def getBiases(self):
        return self.sess.run(self.weights['b1'])


# Use the class defined above
mnist = input_data.read_data_sets('MNIST_data', one_hot=True)


# Standardization: zero mean and unit variance, fitted on the training set only
# and then applied to both sets
def standard_scale(x_train, x_test):
    preprocessor = prep.StandardScaler().fit(x_train)
    x_train = preprocessor.transform(x_train)
    x_test = preprocessor.transform(x_test)
    return x_train, x_test


# Take a random contiguous block of batch_size samples. Samples inside one block
# are distinct, but blocks drawn in different steps may overlap, so over an
# epoch this is sampling with replacement.
def get_random_block_from_data(data, batch_size):
    start_index = np.random.randint(0, len(data) - batch_size)
    return data[start_index: (start_index + batch_size)]


x_train, x_test = standard_scale(mnist.train.images, mnist.test.images)

n_samples = int(mnist.train.num_examples)
training_epochs = 20
batch_size = 128
display_step = 1

# Create an instance of the AGN (additive Gaussian noise) autoencoder
autoencoder = AdditiveGaussianNoiseAutoencoder(
    n_input=784,
    n_hidden=200,
    transfer_function=tf.nn.softplus,
    optimizer=tf.train.AdagradOptimizer(learning_rate=0.001),
    scale=0.01)

for epoch in range(training_epochs):
    avg_cost = 0.
    total_batch = int(n_samples / batch_size)
    for i in range(total_batch):
        batch_xs = get_random_block_from_data(x_train, batch_size)
        cost = autoencoder.partial_fit(batch_xs)
        # cost is summed over one batch, so this weighting makes avg_cost
        # roughly the mean per-batch cost of the epoch
        avg_cost += cost / n_samples * batch_size

    if epoch % display_step == 0:
        print("Epoch:", '%04d' % (epoch + 1), "cost=",
              "{:.9f}".format(avg_cost))

# Compute the total cost over the whole test set
print("Total cost: " + str(autoencoder.calc_total_cost(x_test)))
```
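The variance claim behind `xavier_init` can be checked numerically. This NumPy sketch uses a hypothetical stand-in, `xavier_uniform`, for the TensorFlow function, draws a 784 × 200 matrix (the shape of `w1` in the example), and compares its empirical variance with 2 / (fan_in + fan_out).

```python
import numpy as np


def xavier_uniform(fan_in, fan_out, constant=1.0, rng=None):
    """NumPy stand-in for xavier_init: U(-a, a) with a = constant * sqrt(6 / (fan_in + fan_out))."""
    if rng is None:
        rng = np.random.default_rng()
    limit = constant * np.sqrt(6.0 / (fan_in + fan_out))
    return rng.uniform(-limit, limit, size=(fan_in, fan_out))


# Var(U(-a, a)) = a**2 / 3 = (6 / (fan_in + fan_out)) / 3 = 2 / (fan_in + fan_out)
W = xavier_uniform(784, 200, rng=np.random.default_rng(5))
print("empirical variance:", W.var())
print("2 / (fan_in + fan_out):", 2.0 / (784 + 200))
```

With about 157,000 entries the two printed numbers agree to within a fraction of a percent.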

Output:

```
Epoch: 0001 cost= 30605.952986364
Epoch: 0002 cost= 24782.857529545
Epoch: 0003 cost= 22460.788431818
Epoch: 0004 cost= 22560.084118182
Epoch: 0005 cost= 21883.631884091
Epoch: 0006 cost= 20949.763325000
Epoch: 0007 cost= 18985.162004545
Epoch: 0008 cost= 19601.635181818
Epoch: 0009 cost= 19095.008981818
Epoch: 0010 cost= 17764.048461364
Epoch: 0011 cost= 17395.307373864
Epoch: 0012 cost= 18420.430104545
Epoch: 0013 cost= 16522.635278409
Epoch: 0014 cost= 18391.607369318
Epoch: 0015 cost= 15189.384457955
Epoch: 0016 cost= 16934.368390909
Epoch: 0017 cost= 16483.074007955
Epoch: 0018 cost= 18073.844284091
Epoch: 0019 cost= 16563.650806818
Epoch: 0020 cost= 16148.637540909
Total cost: 1308946.5
```
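The printed costs are easier to compare on a per-image scale. Each `cost` is 0.5 times the squared error summed over a whole batch, and the loop weights each batch by batch_size / n_samples, so `avg_cost` is roughly the mean per-batch cost, while `Total cost` is a sum over every test image. The arithmetic below assumes the default `read_data_sets` split of 55,000 training and 10,000 test images.

```python
# Figures copied from the log above; dataset sizes assume read_data_sets'
# default split (55,000 training and 10,000 test images).
batch_size = 128
n_train = 55000
n_test = 10000
total_batch = n_train // batch_size                # 429 batches per epoch

# Each batch cost is a SUM over batch_size images, and the loop adds
# cost * batch_size / n_train, so avg_cost = mean_batch_cost * scale_factor.
scale_factor = total_batch * batch_size / n_train  # about 0.998

last_epoch_avg_cost = 16148.637540909              # the "Epoch: 0020" line
test_total_cost = 1308946.5                        # the "Total cost" line

per_sample_train = last_epoch_avg_cost / scale_factor / batch_size
per_sample_test = test_total_cost / n_test
print("train, per image:", round(per_sample_train, 1))
print("test,  per image:", round(per_sample_test, 1))
```

Both come out at roughly 126 to 131 per image, so the last training epoch and the test set are on the same scale; the test figure is large only because it sums over 10,000 images.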