Introduction to TensorBoard

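TensorBoard can visualize many kinds of data produced during training, including scalars, images, audio, the computation graph, data distributions, and histograms.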

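TensorBoard does not visualize anything on its own; it is essentially a log viewer. While running the graph in a session, you have to collect the data you want to see into summaries and write them to log files. You then start the TensorBoard server. It reads those log files and serves a web page on port 6006, where you can inspect the data in a browser.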
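The split between "write logs during training" and "read logs in a separate viewer" can be illustrated with a toy model in plain Python. A writer appends records to a log file, and a separate reader later parses that file and groups the values by tag. The TensorBoard server does roughly the same with its event files. The `ToySummaryWriter` class, the `read_scalars` function and the `events.jsonl` format are all hypothetical. Real TensorFlow summaries are stored as Event protocol buffers in TFRecord files, not as JSON.

```python
import json
import os
import tempfile
from collections import defaultdict


class ToySummaryWriter:
    """Hypothetical writer: appends one JSON record per (tag, step, value).

    It only mimics the idea behind tf.summary.FileWriter; real TensorBoard
    logs are TFRecord files of Event protocol buffers, not JSON lines.
    """

    def __init__(self, logdir):
        os.makedirs(logdir, exist_ok=True)
        self.path = os.path.join(logdir, "events.jsonl")

    def add_scalar(self, tag, value, step):
        # Append-only: the training side never reads the file back
        with open(self.path, "a", encoding="utf-8") as f:
            f.write(json.dumps({"tag": tag, "step": step, "value": value}) + "\n")


def read_scalars(logdir):
    """Hypothetical reader: the viewer side, grouping (step, value) pairs by tag."""
    series = defaultdict(list)
    with open(os.path.join(logdir, "events.jsonl"), encoding="utf-8") as f:
        for line in f:
            rec = json.loads(line)
            series[rec["tag"]].append((rec["step"], rec["value"]))
    return dict(series)


if __name__ == "__main__":
    logdir = tempfile.mkdtemp()
    writer = ToySummaryWriter(logdir)
    for step, loss in enumerate([2.3, 1.1, 0.6]):
        writer.add_scalar("loss_function", loss, step)
    print(read_scalars(logdir))
```

Because the two sides share nothing except the files on disk, the viewer can run in a separate process, or be started after training has already finished.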

Related API functions

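- tf.summary.scalar(name, tensor)  # summarizes a scalar value; outputs a Summary protobuf

- tf.summary.histogram(name, values)  # records a histogram of a tensor's values; outputs a Summary protobuf containing the histogram

- tf.summary.image(name, tensor, max_outputs=3)  # summarizes image data

- tf.summary.merge(inputs)  # merges a list of summaries into one; tf.summary.merge_all() merges every summary defined in the default graph

- tf.summary.FileWriter(logdir, graph)  # creates a writer that saves summaries (and optionally the graph) to event files in logdir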
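The plain-Python sketch below computes a simplified version of what a histogram summary records. The field names mirror TensorFlow's `HistogramProto` (`min`, `max`, `num`, `sum`, `sum_squares`, `bucket_limit`, `bucket`). However, `histogram_summary` is a hypothetical helper, and its equal-width buckets are a simplification chosen for readability. TensorFlow picks its own bucket boundaries.

```python
def histogram_summary(values, num_buckets=5):
    """Toy version of the statistics stored by a histogram summary.

    Field names follow TensorFlow's HistogramProto, but the equal-width
    buckets are an assumption; TensorFlow chooses bucket limits differently.
    """
    if not values:
        raise ValueError("need at least one value")
    lo, hi = min(values), max(values)
    # Equal-width buckets; fall back to width 1.0 when all values are identical
    width = (hi - lo) / num_buckets or 1.0
    # Right edge of each bucket
    limits = [lo + width * (i + 1) for i in range(num_buckets)]
    counts = [0] * num_buckets
    for v in values:
        # Clamp so the maximum value lands in the last bucket
        idx = min(int((v - lo) / width), num_buckets - 1)
        counts[idx] += 1
    return {
        "min": lo,
        "max": hi,
        "num": len(values),
        "sum": sum(values),
        "sum_squares": sum(v * v for v in values),
        "bucket_limit": limits,
        "bucket": counts,
    }


print(histogram_summary(list(range(10)))["bucket"])  # [2, 2, 2, 2, 2]
```

Storing `num`, `sum` and `sum_squares` alongside the bucket counts means the mean and variance can be recovered later without keeping the raw values.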

Code example

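```python
# Requires TensorFlow 1.x: tf.placeholder, tf.InteractiveSession and the bundled
# MNIST helper (tensorflow.examples.tutorials) are not available this way in TF 2.x
from tensorflow.examples.tutorials.mnist import input_data
import tensorflow as tf


def weight_variable(shape):
    # Weights drawn from a truncated normal distribution with stddev 0.1
    initial = tf.truncated_normal(shape=shape, stddev=0.1)
    return tf.Variable(initial)


def bias_variable(shape):
    # Small positive bias so ReLU units start out active
    initial = tf.constant(0.1, shape=shape)
    return tf.Variable(initial)


def conv2d(x, W):
    # Stride 1 with SAME padding keeps the spatial size unchanged
    return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding="SAME")


def max_pool_2x2(x):
    # 2x2 max pooling with stride 2 halves height and width
    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding="SAME")


mnist = input_data.read_data_sets("MNIST_data/", one_hot=True)

x = tf.placeholder(tf.float32, [None, 784])   # flattened 28x28 images
y_ = tf.placeholder(tf.float32, [None, 10])   # one-hot labels
keep_prob = tf.placeholder(tf.float32)        # dropout keep probability

x_image = tf.reshape(x, [-1, 28, 28, 1])

# Conv layer 1: 5x5 kernels, 1 -> 32 channels; 14x14x32 after pooling
W_conv1 = weight_variable([5, 5, 1, 32])
b_conv1 = bias_variable([32])
h_conv1 = tf.nn.relu(conv2d(x_image, W_conv1) + b_conv1)
h_pool1 = max_pool_2x2(h_conv1)

# Conv layer 2: 5x5 kernels, 32 -> 64 channels; 7x7x64 after pooling
W_conv2 = weight_variable([5, 5, 32, 64])
b_conv2 = bias_variable([64])
h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2) + b_conv2)
h_pool2 = max_pool_2x2(h_conv2)

# Fully connected layer with 1024 units, followed by dropout
W_fc1 = weight_variable([7 * 7 * 64, 1024])
b_fc1 = bias_variable([1024])
h_pool2_flat = tf.reshape(h_pool2, [-1, 7 * 7 * 64])
h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)
h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob=keep_prob)

# Output layer: 10 logits, one per digit
W_fc2 = weight_variable([1024, 10])
b_fc2 = bias_variable([10])
y_conv = tf.matmul(h_fc1_drop, W_fc2) + b_fc2

# Histogram summary of the logits
tf.summary.histogram('y_conv', y_conv)

cross_entropy = tf.reduce_mean(
    tf.nn.softmax_cross_entropy_with_logits(labels=y_, logits=y_conv))
train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)  # learning rate 1e-4 = 0.0001
```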

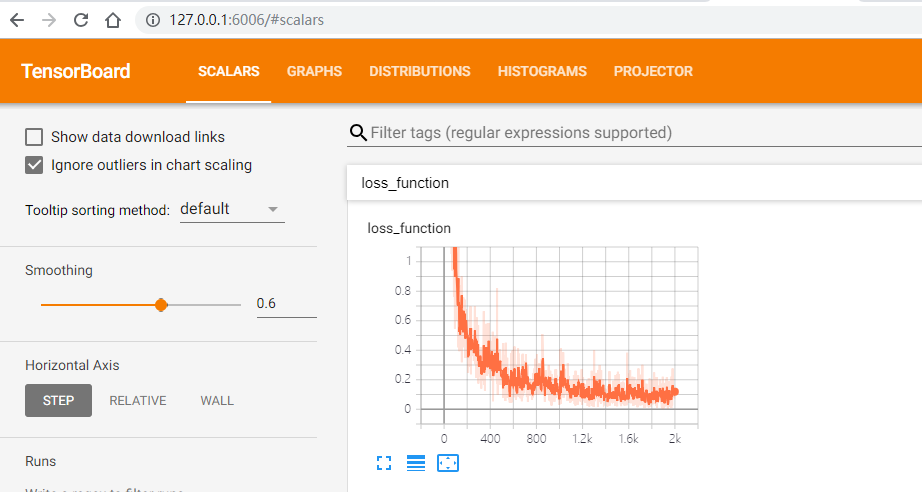

```python
# Scalar summary of the loss
tf.summary.scalar('loss_function', cross_entropy)

correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y_, 1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

sess = tf.InteractiveSession()
sess.run(tf.global_variables_initializer())

saver = tf.train.Saver(max_to_keep=1)  # keep only the most recent checkpoint
savedir = "log/"

# Merge every summary op defined above into a single op
merged_summary_op = tf.summary.merge_all()
# Write event files (including the graph) to log/mnist_with_summaries
summary_writer = tf.summary.FileWriter('log/mnist_with_summaries', sess.graph)

for i in range(2000):
    batch_x, batch_y = mnist.train.next_batch(50)
    sess.run(train_step, feed_dict={x: batch_x, y_: batch_y, keep_prob: 0.8})

    # Evaluate the merged summaries and write them to the log, tagged with step i
    summary_str = sess.run(merged_summary_op,
                           feed_dict={x: batch_x, y_: batch_y, keep_prob: 0.8})
    summary_writer.add_summary(summary_str, i)

    if i % 100 == 0:
        train_accuracy = sess.run(accuracy,
                                  feed_dict={x: batch_x, y_: batch_y, keep_prob: 1.0})
        print("step %d, training accuracy %g" % (i, train_accuracy))
        saver.save(sess, save_path=savedir + 'mnist.ckpt', global_step=i)

summary_writer.close()  # flush pending events to disk

# print("test accuracy %g" % sess.run(accuracy, feed_dict={x: mnist.test.images, y_: mnist.test.labels, keep_prob: 1.0}))
```
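The `7*7*64` used for `W_fc1` follows from the padding rule. With `padding="SAME"`, TensorFlow's output size along each axis is `ceil(input / stride)`. The stride-1 convolutions therefore leave the size unchanged, and each stride-2 pooling step halves it: 28 to 14, then 14 to 7. The helpers below are hypothetical and only reproduce this arithmetic in plain Python.

```python
import math


def same_padding_output(size, stride):
    """Output length along one axis for padding="SAME": ceil(size / stride)."""
    return math.ceil(size / stride)


def flattened_size(height=28, width=28, channels_last=64):
    """Trace the spatial size through two conv (stride 1) + max-pool (stride 2) stages."""
    h, w = height, width
    for _ in range(2):
        h = same_padding_output(h, 1)   # conv2d, strides=[1, 1, 1, 1]
        w = same_padding_output(w, 1)
        h = same_padding_output(h, 2)   # max_pool_2x2, strides=[1, 2, 2, 1]
        w = same_padding_output(w, 2)
    return h, w, h * w * channels_last


print(flattened_size())  # (7, 7, 3136)
```

For a 29x29 input, the same rule gives 29 to 15 to 8, so the hard-coded `7*7*64` would have to become `8*8*64`.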

Running TensorBoard

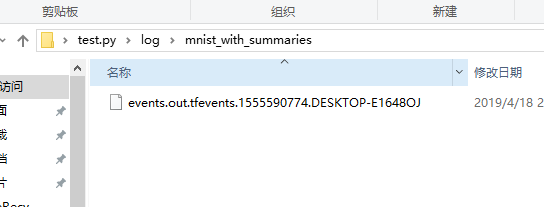

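Training generates these files in the log directory. Next, start TensorBoard from cmd, for example with `tensorboard --logdir=log/mnist_with_summaries --host 127.0.0.1`: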

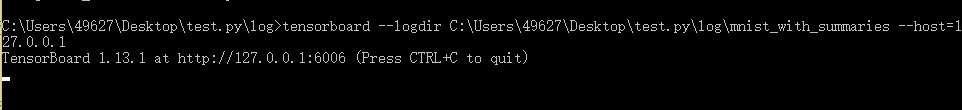

TensorBoard prints a URL once it starts. Open that URL in a browser to view the dashboards.


The `--host 127.0.0.1` option is not required. If you omit it, open http://localhost:6006 instead.