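I've been into web scraping for a while now, so it's time to build something, hehe.

Note: this post assumes some Python basics, plus familiarity with Requests, XPath syntax, and regular expressions.

1. About Requests and XPath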

Requests

Requests is an HTTP library written in Python, built on top of urllib3 and released under the Apache 2.0 open-source license.

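If you have read articles on using the urllib library, you will have noticed that urllib is quite inconvenient to work with. Requests is much more convenient and saves a lot of work. (Once you have used requests, you basically won't want to go back to urllib.) In short, requests is one of the simplest and easiest-to-use HTTP libraries in Python, and it is the recommended choice for scrapers.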
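
To make the comparison concrete, here is a minimal sketch of the same GET request written both ways. The URL, the `HEADERS` value, and the function names are placeholders invented for illustration, not part of the original scripts. Only the urllib request object is actually built when the block runs, so nothing is sent over the network.

```python
from urllib.parse import urlencode
from urllib.request import Request, urlopen

# Placeholder header for illustration only.
HEADERS = {"User-Agent": "Mozilla/5.0"}


def build_urllib_request(url, params):
    """urllib: the query string is encoded and attached by hand."""
    return Request(url + "?" + urlencode(params), headers=HEADERS)


def fetch_with_urllib(url, params, encoding="utf-8"):
    """urllib: open the request, read raw bytes, decode them yourself."""
    with urlopen(build_urllib_request(url, params), timeout=10) as resp:
        return resp.read().decode(encoding)


def fetch_with_requests(url, params, encoding="utf-8"):
    """requests: query string, headers and decoding handled in one call."""
    import requests  # third-party; imported here so the sketch loads without it

    resp = requests.get(url, params=params, headers=HEADERS, timeout=10)
    resp.encoding = encoding
    return resp.text


# Build (but do not send) a request to see what urllib needs spelled out.
req = build_urllib_request("https://example.com/search", {"q": "python"})
print(req.full_url)
```

Neither fetch function is called here; swap in a real URL to try them.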

XPath

2. Code
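
XPath is a query language for selecting nodes in an XML or HTML tree. As a rough sketch, the three expressions used by the scripts in this post can be tried on a small inline page. The HTML below and its chapter paths are made up for illustration and only imitate the structure those expressions expect.

```python
from lxml import etree  # third-party: pip install lxml

# Made-up page that imitates the structure the scripts in this post rely on.
SAMPLE = """
<html><body>
  <div class="bookname"><h1>Chapter 1</h1></div>
  <dl>
    <dd><a href="/15_15566/1.html">Chapter 1</a></dd>
    <dd><a href="/15_15566/2.html">Chapter 2</a></dd>
  </dl>
  <div id="content">First line.<br/>Second line.</div>
</body></html>
"""

tree = etree.HTML(SAMPLE)

# href attribute of every <a> that sits directly inside a <dd>
links = tree.xpath('//dd/a/@href')
# text of the <h1> inside whichever element has class="bookname"
title = tree.xpath('//*[@class="bookname"]/h1/text()')
# text nodes that are direct children of the element with id="content"
body = tree.xpath('//*[@id="content"]/text()')

print(links)
print(title)
print(body)
```

Each call returns a list, which is why the scripts index into the result with `[0]` when they want a single title.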

    # regex + requests + xpath
    import re
    import time
    import warnings

    import requests
    from lxml import etree

    # verify=False makes requests emit a warning on every call; silence it
    warnings.filterwarnings("ignore")

    headers = {"User-Agent": "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; Trident/5.0)"}


    def get_urls(URL):
        # Fetch the table-of-contents page and collect every chapter link
        Html = requests.get(URL, headers=headers, verify=False)
        Html.encoding = 'gbk'
        HTML = etree.HTML(Html.text)
        results = HTML.xpath('//dd/a/@href')
        return results


    def get_items(result):
        # Download one chapter and extract its title and body with a regex
        url = 'https://www.biquyun.com' + str(result)
        html = requests.get(url, headers=headers, verify=False)
        html.encoding = 'gbk'
        pattern = re.compile('<div.*?<h1>(.*?)</h1>.*?<div.*?content">(.*?)</div>', re.S)
        title, body = pattern.findall(html.text)[0]
        items = '\n' * 2 + title + '\n' * 2 + body
        # Strip non-breaking-space entities and line-break tags
        # ('&nbsp;' is assumed; the extracted source showed a plain space here)
        items = items.replace('&nbsp;', '').replace('<br />', '')
        return items


    def save_to_file(items):
        with open("xiaoshuo1.txt", 'a', encoding='utf-8') as file:
            file.write(items)


    def main(URL):
        results = get_urls(URL)
        for ii, result in enumerate(results, start=1):
            items = get_items(result)
            save_to_file(items)
            print(str(ii) + ' in ' + str(len(results)))
            # time.sleep(1)


    if __name__ == '__main__':
        start_1 = time.time()
        URL = 'https://www.biquyun.com/15_15566/'
        main(URL)
        print('Done!')
        end_1 = time.time()
        print('Crawl time 1:', end_1 - start_1)

Output:

    # requests + xpath
    import time
    import warnings

    import requests
    from lxml import etree

    # The pages are fetched with verify=False, so the resulting warnings are ignored
    warnings.filterwarnings("ignore")

    headers = {"User-Agent": "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; Trident/5.0)"}


    def get_urls(URL):
        # Fetch the table-of-contents page and collect every chapter link
        Html = requests.get(URL, headers=headers, verify=False)
        Html.encoding = 'gbk'
        HTML = etree.HTML(Html.text)
        results = HTML.xpath('//dd/a/@href')
        return results


    def get_items(result):
        # Download one chapter and extract its title and body with XPath
        url = 'https://www.biquyun.com' + str(result)
        html = requests.get(url, headers=headers, verify=False)
        html.encoding = 'gbk'
        html = etree.HTML(html.text)
        resultstitle = html.xpath('//*[@class="bookname"]/h1/text()')
        resultsbody = html.xpath('//*[@id="content"]/text()')
        # Join the text nodes, drop the non-breaking-space indentation, normalize blank lines
        body = ''.join(resultsbody).replace('\xa0\xa0\xa0\xa0', '').replace('\r\n\r\n', '\n\n')
        items = str(resultstitle[0]) + '\n' * 2 + body + '\n' * 2
        return items


    def save_to_file(items):
        with open("xiaoshuo2.txt", 'a', encoding='utf-8') as file:
            file.write(items)


    def main(URL):
        results = get_urls(URL)
        for ii, result in enumerate(results, start=1):
            items = get_items(result)
            save_to_file(items)
            print(str(ii) + ' in ' + str(len(results)))
            # time.sleep(1)


    if __name__ == '__main__':
        start_2 = time.time()
        URL = 'https://www.biquyun.com/15_15566/'
        main(URL)
        print('Done!')
        end_2 = time.time()
        print('Crawl time 2:', end_2 - start_2)

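Output:

P.S. The actual crawl speed depends on your machine and your network connection. Also, regex matching can sometimes take a long time, so XPath is the recommended approach.

Pitfalls when writing scrapers: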
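
One plausible reason a regex can become slow is backtracking: a pattern made of lazy wildcards spanning the whole page has to retry from many positions when the page does not match. The sketch below times the pattern from the regex script against two shorter patterns on a made-up page. `TITLE`, `BODY`, the sample HTML, and the function names are hypothetical, and the printed timings will differ from machine to machine.

```python
import re
import timeit

# Pattern from the regex script: lazy wildcards that span the whole page.
LOOSE = re.compile(r'<div.*?<h1>(.*?)</h1>.*?<div.*?content">(.*?)</div>', re.S)
# Hypothetical alternative: two short patterns, each anchored on one tag.
TITLE = re.compile(r'<h1>(.*?)</h1>', re.S)
BODY = re.compile(r'<div id="content">(.*?)</div>', re.S)


def extract_loose(page):
    match = LOOSE.search(page)
    return match.groups() if match else None


def extract_tight(page):
    title, body = TITLE.search(page), BODY.search(page)
    return (title.group(1), body.group(1)) if title and body else None


# Made-up page: a title, many unrelated <div> blocks, then the chapter body.
filler = '<div class="ad">x</div>\n' * 300
good = ('<div class="bookname"><h1>Chapter 1</h1></div>'
        + filler + '<div id="content">Hello</div>')
# Same page after an imagined layout change, so neither approach can match.
bad = good.replace('id="content"', 'id="text"')

for name, func in (("loose", extract_loose), ("tight", extract_tight)):
    seconds = timeit.timeit(lambda: func(bad), number=3)
    print(name, "on a non-matching page:", round(seconds, 4), "s")
```

Both extractors return the same result on the matching page; the gap shows up when the page does not match.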

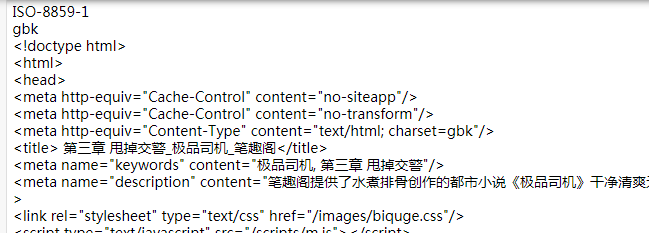

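1. Garbled Chinese characters in scraped pages

Solution: check the encoding Requests guessed against the encoding declared in the page itself. If they differ, set response.encoding to the declared one before reading response.text, as the scripts above do with 'gbk'.

    print(response.encoding)  # the encoding Requests guessed
    print(requests.utils.get_encodings_from_content(response.text)[0])  # the encoding declared in the page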
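
To see where the garbling comes from without touching the network, here is a small standard-library sketch. When the HTTP headers give a text content type but no charset, Requests falls back to ISO-8859-1, which is the wrong guess simulated below. The sample page and the `declared_charset` helper are made up for illustration and are not part of Requests.

```python
import re


def declared_charset(raw: bytes, default: str = "utf-8") -> str:
    """Return the charset named in a <meta> tag, or `default` if none is found."""
    match = re.search(rb'<meta[^>]+charset=["\']?([\w-]+)', raw, re.I)
    return match.group(1).decode("ascii").lower() if match else default


# Made-up GBK-encoded page, standing in for response.content.
raw = '<html><head><meta charset="gbk"><title>小说</title></head></html>'.encode("gbk")

garbled = raw.decode("iso-8859-1")          # decoding with the wrong guess
fixed = raw.decode(declared_charset(raw))   # decoding with the declared charset

print(declared_charset(raw))
print("小说" in garbled, "小说" in fixed)
```

With a real response, the equivalent fix is to assign the detected name to response.encoding.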
