The page we are going to crawl this time is:

http://image.so.com/z?ch=photography

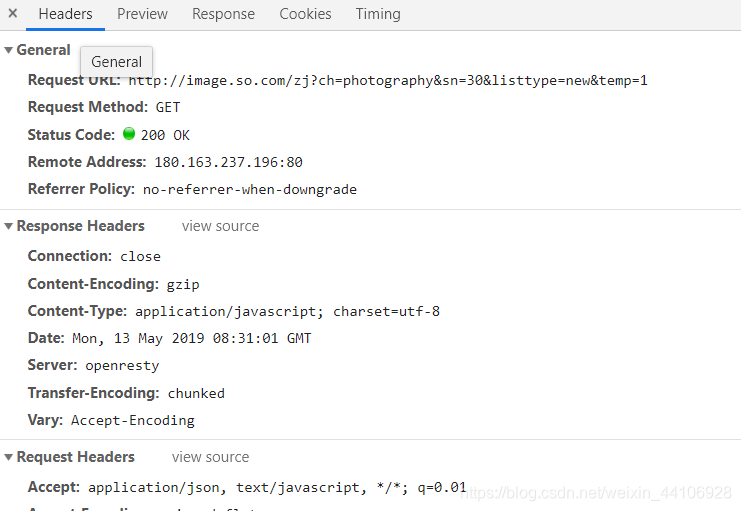

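As you scroll down, the images load one after another, so we can guess that the page fetches them via Ajax.

Open the browser's developer tools, select the XHR filter,

and inspect the requests: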

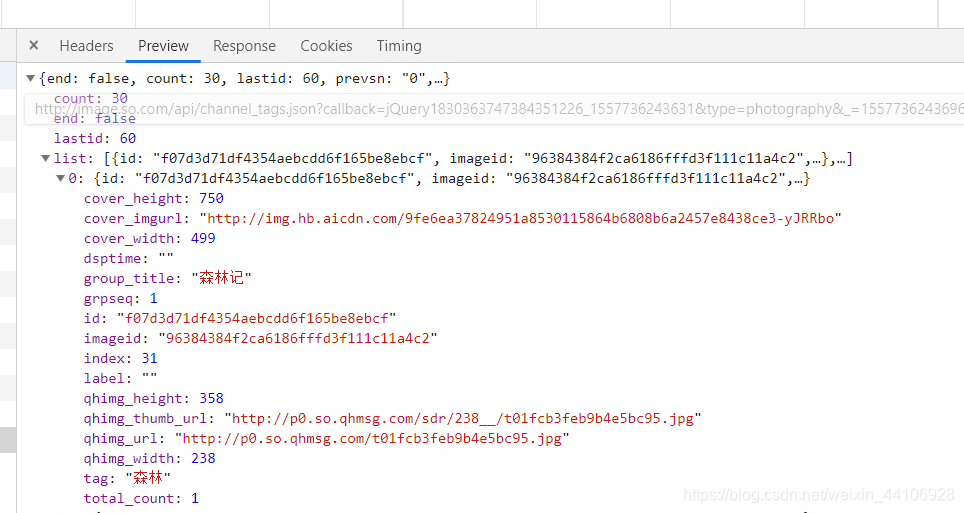

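The sn parameter of this URL grows in multiples of 30 from one request to the next, and we can use that pattern to construct the URLs ourselves.

The details of each image are all in the list field of the response.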
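As a quick check of the sn pattern before writing the spider, the listing URLs can be generated with the standard library alone. This is a minimal sketch: the endpoint and query parameters are the ones seen in the XHR request, while the helper name build_urls and the PAGE_SIZE constant are made up for illustration.

```python
from urllib.parse import urlencode

BASE_URL = 'http://images.so.com/zj?'  # endpoint seen in the XHR request
PAGE_SIZE = 30                         # sn grows by 30 per request


def build_urls(max_page):
    """Return one listing URL per page, with sn = 30, 60, ... (hypothetical helper)."""
    urls = []
    for page in range(1, max_page + 1):
        params = {'ch': 'photography', 'listtype': 'new', 'sn': PAGE_SIZE * page}
        urls.append(BASE_URL + urlencode(params))
    return urls


for url in build_urls(3):
    print(url)
```

Pasting one of the printed URLs into the browser is an easy way to confirm that the endpoint still answers with JSON before going further.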

Now let's create a new Scrapy project.

Open PowerShell in the directory where you want to create the project and run:

    scrapy startproject images360
    cd images360
    scrapy genspider images images.so.com

First, set a few variables in settings.py:

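    # Maximum number of pages to crawl
    MAX_PAGE = 5

    BOT_NAME = 'images360'
    SPIDER_MODULES = ['images360.spiders']
    NEWSPIDER_MODULE = 'images360.spiders'

    # MongoDB connection settings, read by MongoPipeline as MONGO_URI and MONGO_DB
    MONGO_URI = 'localhost'
    MONGO_DB = 'images360'

    # Raw string so the backslashes in the Windows path are kept literally
    IMAGES_STORE = r'D:\pythonProject\Spiderr\Chapter13\images360\images360\images'

    ROBOTSTXT_OBEY = False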

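We store the item data in MongoDB, which is why the two MONGO settings are there. IMAGES_STORE is the directory where the downloaded images are saved.

ROBOTSTXT_OBEY has to be set to False. The generated settings.py sets it to True, and with that value the pages cannot be crawled.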
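A note on Windows paths like the IMAGES_STORE value: in an ordinary Python string literal a backslash starts an escape sequence, so a raw string (or forward slashes) is the safer way to write such a path. The sketch below uses a made-up path, picked only because \n and \t are real escapes, to show what can go wrong.

```python
# Hypothetical path, chosen because \n and \t are real escape sequences.
plain = 'D:\new\table'    # becomes "D:" + newline + "ew" + tab + "able"
raw = r'D:\new\table'     # raw string: backslashes are kept literally
forward = 'D:/new/table'  # forward slashes need no escaping at all

print(repr(plain))
print(repr(raw))
print(plain == raw)
```

Comparing the two repr outputs makes the difference visible: only the raw string still contains the two backslashes.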

Next, define the item container in items.py:

    from scrapy import Item, Field


    class ImageItem(Item):
        collection = table = 'images'

        id = Field()
        url = Field()
        title = Field()
        thumb = Field()

Then write the spider code in images.py:

    # -*- coding: utf-8 -*-
    import json
    from urllib.parse import urlencode

    from scrapy import Request, Spider

    from images360.items import ImageItem


    class ImagesSpider(Spider):
        name = 'images'
        allowed_domains = ['images.so.com']
        start_urls = ['http://images.so.com/']

        def start_requests(self):
            # Example: http://images.so.com/zj?ch=photography&sn=30&listtype=new&temp=1
            data = {'ch': 'photography', 'listtype': 'new'}
            base_url = 'http://images.so.com/zj?'
            for page in range(1, self.settings.get('MAX_PAGE') + 1):
                # sn grows in multiples of 30, one step per page
                data['sn'] = 30 * page
                params = urlencode(data)
                url = base_url + params
                yield Request(url, self.parse)

        def parse(self, response):
            # self.logger.debug(response.text)
            result = json.loads(response.text)
            # Every entry in 'list' describes one image
            for image in result.get('list'):
                item = ImageItem()
                item['id'] = image.get('imageid')
                item['url'] = image.get('qhimg_url')
                item['title'] = image.get('group_title')
                item['thumb'] = image.get('qhimg_thumb_url')
                yield item

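These two methods take care of constructing the URLs and parsing the responses.

Each URL, once built, is wrapped in a Request and handed to the scheduler's queue.

After parsing, remember to yield each item, which makes parse a generator.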
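The parsing step can be tried out without Scrapy by running the same logic on a hand-written JSON string. This is only a sketch: the response body below is invented, and only its field names (list, imageid, qhimg_url, group_title, qhimg_thumb_url) come from the spider. The function parse_body is a hypothetical stand-in for parse that yields plain dicts instead of ImageItem objects.

```python
import json

# Invented response body; only the field names come from the spider.
sample_body = json.dumps({
    'list': [
        {'imageid': 'a1', 'qhimg_url': 'http://example.com/a1.jpg',
         'group_title': 'Sunset', 'qhimg_thumb_url': 'http://example.com/a1_t.jpg'},
        {'imageid': 'b2', 'qhimg_url': 'http://example.com/b2.jpg',
         'group_title': 'Harbor', 'qhimg_thumb_url': 'http://example.com/b2_t.jpg'},
    ]
})


def parse_body(body):
    """Yield one plain dict per image, mirroring the spider's parse()."""
    result = json.loads(body)
    # Fall back to an empty list so a body without 'list' yields nothing.
    for image in result.get('list') or []:
        yield {
            'id': image.get('imageid'),
            'url': image.get('qhimg_url'),
            'title': image.get('group_title'),
            'thumb': image.get('qhimg_thumb_url'),
        }


items = list(parse_body(sample_body))
print(len(items), items[0]['title'])
```

Because parse_body uses yield, nothing is parsed until the caller iterates over it, which is the same way Scrapy consumes the items coming out of parse.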

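The last step is processing the data. I wrote two classes here: one stores the items in MongoDB, the other uses Scrapy's built-in component to download the images.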

    import pymongo


    class MongoPipeline(object):
        def __init__(self, mongo_uri, mongo_db):
            self.mongo_uri = mongo_uri
            self.mongo_db = mongo_db

        @classmethod
        def from_crawler(cls, crawler):
            # Read the connection settings defined in settings.py
            return cls(
                mongo_uri=crawler.settings.get('MONGO_URI'),
                mongo_db=crawler.settings.get('MONGO_DB')
            )

        def open_spider(self, spider):
            self.client = pymongo.MongoClient(self.mongo_uri)
            self.db = self.client[self.mongo_db]

        def process_item(self, item, spider):
            name = 'images'
            # insert() was deprecated in PyMongo 3 and removed in PyMongo 4;
            # insert_one() is its replacement
            self.db[name].insert_one(dict(item))
            return item

        def close_spider(self, spider):
            self.client.close()

    from scrapy import Request
    from scrapy.exceptions import DropItem
    from scrapy.pipelines.images import ImagesPipeline


    class ImagePipeline(ImagesPipeline):
        def get_media_requests(self, item, info):
            yield Request(item['url'])

        def file_path(self, request, response=None, info=None):
            url = request.url
            file_name = url.split('/')[-1]
            return file_name

        def item_completed(self, results, item, info):
            image_paths = [x['path'] for ok, x in results if ok]
            if not image_paths:
                raise DropItem('Image Download Failed')
            return item

image_paths is built with a list comprehension.

It is equivalent to:

    image_paths = []
    for ok, x in results:
        if ok:
            image_paths.append(x['path'])

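results is a list of download results. Each element is a tuple holding a success flag and the download information, and checking whether the flag is True tells us whether that download succeeded.

If no download succeeded, we raise a DropItem exception.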
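To make the shape of results concrete, here is a small sketch that runs the same check outside Scrapy. The two results lists are invented sample data, the DropItem class is a local stand-in for scrapy.exceptions.DropItem, and a plain Exception stands in for the failure object that accompanies a failed download; check_downloads is a hypothetical name for the logic inside item_completed.

```python
class DropItem(Exception):
    """Local stand-in for scrapy.exceptions.DropItem so the sketch runs without Scrapy."""


def check_downloads(results, item):
    """Return the item if at least one download succeeded, otherwise raise DropItem."""
    image_paths = [info['path'] for ok, info in results if ok]
    if not image_paths:
        raise DropItem('Image Download Failed')
    return item


# Invented sample data: each entry is a (success flag, info) tuple.
ok_results = [(True, {'url': 'http://example.com/a1.jpg', 'path': 'a1.jpg'})]
failed_results = [(False, Exception('download error'))]

print(check_downloads(ok_results, {'id': 'a1'}))
try:
    check_downloads(failed_results, {'id': 'b2'})
except DropItem as exc:
    print('dropped:', exc)
```

The comprehension only touches info['path'] when the flag is True, so the failure entry is never indexed.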

Finally, add ITEM_PIPELINES to settings.py to enable the two pipelines and declare their priorities:

    ITEM_PIPELINES = {
        'images360.pipelines.MongoPipeline': 300,
        'images360.pipelines.ImagePipeline': 301,
    }
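Scrapy runs item pipelines in ascending order of these numbers, so the lower value goes first. The sketch below sorts the same dictionary by value to show the resulting order; it is an illustration of the rule, not how Scrapy is implemented internally.

```python
ITEM_PIPELINES = {
    'images360.pipelines.MongoPipeline': 300,
    'images360.pipelines.ImagePipeline': 301,
}

# Sort by priority value, lowest first, to see the execution order.
order = [path for path, priority in sorted(ITEM_PIPELINES.items(), key=lambda kv: kv[1])]
print(order)
```

With these values an item is written to MongoDB before its image download is checked. If only items with a successfully downloaded image should be stored, swapping the two numbers would be one way to get that behavior.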

Reference: Cui Qingcai (崔庆才), 《python3网络爬虫实战》