First, write the MapReduce program (a word count over text data).

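import java.io.IOException;

// Assumption: StringUtils is the Apache Commons Lang class, whose split(String, String) matches the call below
import org.apache.commons.lang.StringUtils;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

// Of the four type parameters, the first two describe the mapper's input: KEYIN is the input key type and VALUEIN is the input value type.
// The last two, KEYOUT and VALUEOUT, describe the mapper's output.
// Both map and reduce receive their input and emit their output as key-value pairs.
// By default, the framework passes the mapper the starting offset of a line in the text as the key, and the content of that line as the value.
public class WCMapper extends Mapper<LongWritable, Text, Text, LongWritable> {

    // The MapReduce framework calls this method once for every line it reads
    @Override
    protected void map(LongWritable key, Text value, Context context)
            throws IOException, InterruptedException {
        // The business logic goes in this method body; the data to process has already been passed in by the framework as the key-value parameters.
        // key is the starting offset of this line, value is the text of this line.

        // Convert the line to a String
        String line = value.toString();

        // Split the line on the chosen delimiter
        String[] words = StringUtils.split(line, " ");

        // Emit each word as a kv pair, k: the word, v: 1
        for (String word : words) {
            context.write(new Text(word), new LongWritable(1));
        }
    }
}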
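To make the input key more concrete, here is a minimal sketch in plain Java (no Hadoop involved) of how the starting byte offset of each line could be derived for a small text. The class name LineOffsets is hypothetical, and the sketch assumes UTF-8 text with "\n" line endings; Hadoop's own record reader also deals with split boundaries and other line endings, which are ignored here.

```java
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper, for illustration only: not part of Hadoop.
class LineOffsets {

    // Maps the starting byte offset of each line to the line's text, in file order.
    static Map<Long, String> offsets(String content) {
        Map<Long, String> result = new LinkedHashMap<>();
        long offset = 0;
        for (String line : content.split("\n")) {
            result.put(offset, line);
            // Advance by the encoded length of the line plus one byte for the "\n"
            offset += line.getBytes(StandardCharsets.UTF_8).length + 1;
        }
        return result;
    }

    public static void main(String[] args) {
        for (Map.Entry<Long, String> e : offsets("hello world\nhello hadoop\n").entrySet()) {
            System.out.println(e.getKey() + " -> " + e.getValue());
        }
        // prints:
        // 0 -> hello world
        // 12 -> hello hadoop
    }
}
```

Each printed pair corresponds to one call of map: the number plays the role of the LongWritable key and the text plays the role of the Text value.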

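import java.io.IOException;

import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

public class WCReducer extends Reducer<Text, LongWritable, Text, LongWritable> {

    // After the map phase finishes, the framework buffers all kv pairs and groups them by key.
    // It then calls reduce once per group, passing in <key, values{}>,
    // for example <hello,{1,1,1,1,1,1.....}>
    @Override
    protected void reduce(Text key, Iterable<LongWritable> values, Context context)
            throws IOException, InterruptedException {
        long count = 0;

        // Iterate over the values of this group and sum them up
        for (LongWritable value : values) {
            count += value.get();
        }

        // Emit the total count for this word
        context.write(key, new LongWritable(count));
    }
}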
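The interplay of the two classes above can be tried without a cluster. What follows is only a sketch that imitates the map, group and reduce steps in memory with plain Java collections; the class LocalWordCount and its methods are hypothetical and are not how Hadoop itself is implemented (there is no spilling, sorting on disk or network shuffle here).

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical in-memory imitation of the word count job, for illustration only.
class LocalWordCount {

    // "map": emit (word, 1) for every space-separated word of one line
    static List<Map.Entry<String, Long>> map(String line) {
        List<Map.Entry<String, Long>> out = new ArrayList<>();
        for (String word : line.split(" ")) {
            if (!word.isEmpty()) {
                out.add(new AbstractMap.SimpleEntry<String, Long>(word, 1L));
            }
        }
        return out;
    }

    // "shuffle": group all emitted values by key, with keys in sorted order
    static Map<String, List<Long>> shuffle(List<Map.Entry<String, Long>> pairs) {
        Map<String, List<Long>> groups = new TreeMap<>();
        for (Map.Entry<String, Long> pair : pairs) {
            groups.computeIfAbsent(pair.getKey(), k -> new ArrayList<>()).add(pair.getValue());
        }
        return groups;
    }

    // "reduce": sum the values of one group
    static long reduce(Iterable<Long> values) {
        long count = 0;
        for (long v : values) {
            count += v;
        }
        return count;
    }

    // Runs the three steps over all input lines and returns word -> count
    static Map<String, Long> run(List<String> lines) {
        List<Map.Entry<String, Long>> pairs = new ArrayList<>();
        for (String line : lines) {
            pairs.addAll(map(line));
        }
        Map<String, Long> result = new TreeMap<>();
        for (Map.Entry<String, List<Long>> group : shuffle(pairs).entrySet()) {
            result.put(group.getKey(), reduce(group.getValue()));
        }
        return result;
    }

    public static void main(String[] args) {
        System.out.println(run(Arrays.asList("hello world", "hello hadoop")));
        // prints {hadoop=1, hello=2, world=1}
    }
}
```

The group for "hello" that reaches reduce is [1, 1], which matches the <hello,{1,1,...}> shape described in the reducer's comment.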

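import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

/**
 * Describes one specific job:
 * for example, which class the job uses for the map step and which for the reduce step.
 * It can also specify the path of the data the job is to process
 * and the path where the job's output is written.
 */
public class WCRunner {

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        Job wcjob = Job.getInstance(conf);

        // Tell the job which jar contains the classes it uses
        wcjob.setJarByClass(WCRunner.class);

        // The mapper and reducer classes used by this job
        wcjob.setMapperClass(WCMapper.class);
        wcjob.setReducerClass(WCReducer.class);

        // The kv types of the reducer output
        wcjob.setOutputKeyClass(Text.class);
        wcjob.setOutputValueClass(LongWritable.class);

        // The kv types of the mapper output
        wcjob.setMapOutputKeyClass(Text.class);
        wcjob.setMapOutputValueClass(LongWritable.class);

        // Where the input data to process is stored
        FileInputFormat.setInputPaths(wcjob, new Path("hdfs://192.168.93.132:9000/wc/srcdata/"));

        // Where the results are written
        FileOutputFormat.setOutputPath(wcjob, new Path("hdfs://192.168.93.132:9000/wc/resultOutput/"));

        // Submit the job to the cluster, wait for it to finish, and report success or failure through the exit code
        System.exit(wcjob.waitForCompletion(true) ? 0 : 1);
    }
}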
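The runner hardcodes both HDFS paths, so pointing the job at other data means recompiling. One possible alternative, shown here only as a sketch, is to read the paths from the command-line arguments and fall back to the values used in this post. The class JobPaths and its field names are hypothetical and not part of Hadoop.

```java
// Hypothetical helper, for illustration only: picks the job's paths from the arguments.
class JobPaths {

    // Defaults taken from the paths used in WCRunner
    static final String DEFAULT_INPUT = "hdfs://192.168.93.132:9000/wc/srcdata/";
    static final String DEFAULT_OUTPUT = "hdfs://192.168.93.132:9000/wc/resultOutput/";

    final String input;
    final String output;

    JobPaths(String input, String output) {
        this.input = input;
        this.output = output;
    }

    // args[0] overrides the input path and args[1] the output path, when present
    static JobPaths fromArgs(String[] args) {
        String in = args.length > 0 ? args[0] : DEFAULT_INPUT;
        String out = args.length > 1 ? args[1] : DEFAULT_OUTPUT;
        return new JobPaths(in, out);
    }

    public static void main(String[] args) {
        JobPaths paths = fromArgs(args);
        System.out.println("input:  " + paths.input);
        System.out.println("output: " + paths.output);
    }
}
```

In WCRunner the two string literals could then become new Path(paths.input) and new Path(paths.output). Running the jar without extra arguments keeps the behaviour shown in this post.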

Next, package the program and submit it to the YARN cluster.

I built the project with Maven on Windows, and the jars it references were imported by hand. For packaging, add the following configuration.

<build>
  <plugins>
    <plugin>
      <artifactId>maven-compiler-plugin</artifactId>
      <version>2.3.2</version>
      <configuration>
        <source>1.8</source>
        <target>1.8</target>
        <encoding>UTF-8</encoding>
        <compilerArguments>
          <extdirs>lib</extdirs>
        </compilerArguments>
      </configuration>
    </plugin>
  </plugins>
</build>

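Without it, the jars in the lib directory you created yourself may not be found when packaging.

Create the input directory on HDFS. The output directory must not exist beforehand; the job creates it and fails if it is already there.

Upload the files to be counted to the input directory specified in the program.

Then run the packaged jar: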

hadoop jar root-1.0-SNAPSHOT.jar mr.WCRunner

[root@hadoop ~]# hadoop jar root-1.0-SNAPSHOT.jar mr.WCRunner

// First, a client connection to the ResourceManager is established

19/04/20 22:29:08 INFO client.RMProxy: Connecting to ResourceManager at hadoop/192.168.93.132:8032

19/04/20 22:29:09 WARN mapreduce.JobResourceUploader: Hadoop command-line option parsing not performed. Implement the Tool interface and execute your application with ToolRunner to remedy this.

19/04/20 22:29:10 INFO input.FileInputFormat: Total input paths to process : 1 -- total number of input paths to process

19/04/20 22:29:10 INFO mapreduce.JobSubmitter: number of splits:1 -- 1 split

19/04/20 22:29:11 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1555769992184_0001 -- the jobId

19/04/20 22:29:12 INFO impl.YarnClientImpl: Submitted application application_1555769992184_0001 -- the application is submitted

19/04/20 22:29:12 INFO mapreduce.Job: The url to track the job: http://hadoop:8088/proxy/application_1555769992184_0001/ -- tracking URL

19/04/20 22:29:12 INFO mapreduce.Job: Running job: job_1555769992184_0001 -- the job starts running

19/04/20 22:29:23 INFO mapreduce.Job: Job job_1555769992184_0001 running in uber mode : false

19/04/20 22:29:23 INFO mapreduce.Job: map 0% reduce 0%

19/04/20 22:29:30 INFO mapreduce.Job: map 100% reduce 0%

19/04/20 22:29:37 INFO mapreduce.Job: map 100% reduce 100%

19/04/20 22:29:37 INFO mapreduce.Job: Job job_1555769992184_0001 completed successfully -- the job succeeded

19/04/20 22:29:37 INFO mapreduce.Job: Counters: 49

File System Counters

FILE: Number of bytes read=268

FILE: Number of bytes written=231203

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=193

HDFS: Number of bytes written=58

HDFS: Number of read operations=6

HDFS: Number of large read operations=0

HDFS: Number of write operations=2

Job Counters

Launched map tasks=1

Launched reduce tasks=1

Data-local map tasks=1

Total time spent by all maps in occupied slots (ms)=4903

Total time spent by all reduces in occupied slots (ms)=3350

Total time spent by all map tasks (ms)=4903

Total time spent by all reduce tasks (ms)=3350

Total vcore-seconds taken by all map tasks=4903

Total vcore-seconds taken by all reduce tasks=3350

Total megabyte-seconds taken by all map tasks=5020672

Total megabyte-seconds taken by all reduce tasks=3430400

Map-Reduce Framework

Map input records=9

Map output records=18

Map output bytes=226

Map output materialized bytes=268

Input split bytes=111

Combine input records=0

Combine output records=0

Reduce input groups=9

Reduce shuffle bytes=268

Reduce input records=18

Reduce output records=9

Spilled Records=36

Shuffled Maps =1

Failed Shuffles=0

Merged Map outputs=1

GC time elapsed (ms)=320

CPU time spent (ms)=2200

Physical memory (bytes) snapshot=421101568

Virtual memory (bytes) snapshot=4199149568

Total committed heap usage (bytes)=293076992

Shuffle Errors

BAD_ID=0

CONNECTION=0

IO_ERROR=0

WRONG_LENGTH=0

WRONG_MAP=0

WRONG_REDUCE=0

File Input Format Counters

Bytes Read=82

File Output Format Counters

Bytes Written=58
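A few of the counters above can be cross-checked against each other. The sketch below is my own reading of the numbers, not something the log states: with no combiner (Combine input records=0), every map output record should arrive at the reducer, and the counters labelled megabyte-seconds appear to be the task time in milliseconds multiplied by the container memory in MB. The class CounterCheck and its method name are hypothetical.

```java
// Hypothetical consistency check over counter values copied from the job output above.
class CounterCheck {

    // Container memory in MB implied by a memory-time counter and the task time behind it
    static long impliedContainerMb(long memoryTimeCounter, long taskMillis) {
        return memoryTimeCounter / taskMillis;
    }

    public static void main(String[] args) {
        long mapOutputRecords = 18;
        long reduceInputRecords = 18;
        long reduceInputGroups = 9;
        long reduceOutputRecords = 9;

        // Without a combiner, every (word, 1) pair reaches the reducer
        System.out.println(mapOutputRecords == reduceInputRecords);   // prints true

        // One output record per group, i.e. per distinct word
        System.out.println(reduceInputGroups == reduceOutputRecords); // prints true

        // 5020672 / 4903 and 3430400 / 3350 both come out at 1024
        System.out.println(impliedContainerMb(5020672L, 4903L));      // prints 1024
        System.out.println(impliedContainerMb(3430400L, 3350L));      // prints 1024
    }
}
```

If that reading is right, both the map and the reduce container were allocated 1024 MB, and the input held 18 words in total, 9 of them distinct.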

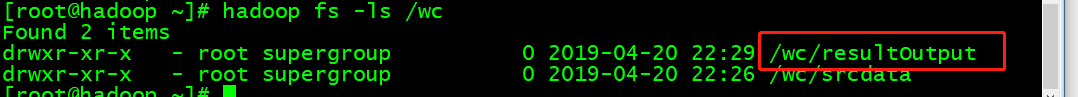

Hadoop automatically created the corresponding output directory.

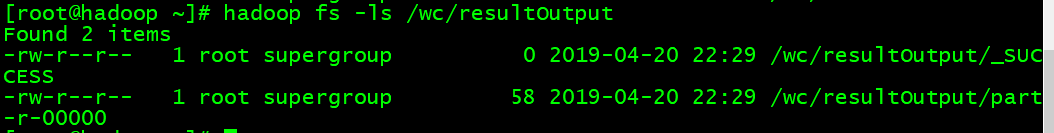

Result

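MR execution flow (this is the classic MapReduce 1 flow with a JobTracker and TaskTrackers; on YARN, as in the run above, the ResourceManager, the NodeManagers and a per-job MRAppMaster take over these roles)

(1). The client submits an MR jar to the JobClient (submitted with: hadoop jar ...)

(2). The JobClient talks to the JobTracker over RPC, which returns a location on HDFS for storing the jar and a jobId

(3). The client writes the jar to HDFS (path = the location on HDFS + jobId)

(4). The job is submitted (the job description, not the jar: it includes the jobId, the location of the jar, the configuration and so on)

(5). The JobTracker initializes the job

(6). The files to process are read from HDFS and the input splits are computed; each split corresponds to one MapperTask

(7). The TaskTrackers pick up tasks (the task descriptions) through the heartbeat mechanism

(8). The required jar, configuration files and so on are downloaded

(9). The TaskTracker starts a Java child process to run the actual task (a MapperTask or a ReducerTask)

(10). The results are written to HDFS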
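Step (6) can be illustrated with a little arithmetic. The sketch below is a simplified model of how the number of splits for a single file could be derived; it is modelled on the logic of Hadoop's FileInputFormat as I understand it (split size clamped between a minimum and a maximum, and a 1.1 slop factor for the last split), and it ignores compression, non-splittable formats and empty files. The class SplitCalc is hypothetical, and the 128 MB block size is an assumption that may not match your cluster.

```java
// Hypothetical, simplified model of input split counting for a single file.
class SplitCalc {

    // The last split may be up to 10% larger than the split size before a new one is started
    static final double SPLIT_SLOP = 1.1;

    // Split size is the block size, clamped to [minSize, maxSize]
    static long computeSplitSize(long blockSize, long minSize, long maxSize) {
        return Math.max(minSize, Math.min(maxSize, blockSize));
    }

    // Number of splits (and therefore map tasks) for one file of the given length
    static int countSplits(long fileLength, long splitSize) {
        if (fileLength <= 0 || splitSize <= 0) {
            throw new IllegalArgumentException("this sketch expects positive sizes");
        }
        int splits = 0;
        long remaining = fileLength;
        while ((double) remaining / splitSize > SPLIT_SLOP) {
            splits++;
            remaining -= splitSize;
        }
        if (remaining != 0) {
            splits++; // the tail of the file becomes the final split
        }
        return splits;
    }

    public static void main(String[] args) {
        long mb = 1024L * 1024L;
        // Assumption: 128 MB blocks, no custom minimum or maximum split size
        long splitSize = computeSplitSize(128 * mb, 1L, Long.MAX_VALUE);

        System.out.println(countSplits(82L, splitSize));      // prints 1
        System.out.println(countSplits(140 * mb, splitSize)); // prints 1
        System.out.println(countSplits(300 * mb, splitSize)); // prints 3
    }
}
```

The first call uses 82 bytes, the Bytes Read value from the run above, and gives a single split, which agrees with the "number of splits:1" line in the log. The 140 MB case stays at one split because it is within the 1.1 slop of a 128 MB split.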