版权声明:欢迎分享转载 我可能会失败,但我不会一直失败 https://blog.csdn.net/u012637358/article/details/88706803

1、准备环境

- jdk1.8

- zookeeper集群

- hadoop集群

- ssh免密

1.1节点规划

| IP | hostname | 节点规划 |

|---|---|---|

| 192.168.4.14 | node1.sdp.cn | master |

| 192.168.4.15 | node2.sdp.cn | standby |

| 192.168.4.16 | node3.sdp.cn | worker |

| 192.168.4.17 | node4.sdp.cn | worker |

| 192.168.4.18 | node5.sdp.cn | worker |

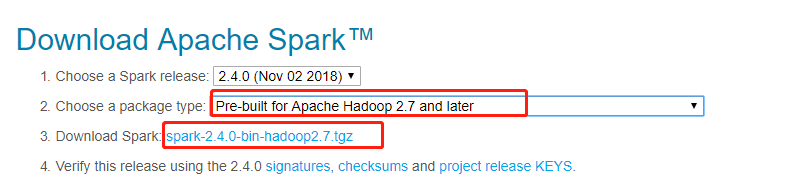

1.2 下载

现在地址:http://spark.apache.org/downloads.html

#查看当前环境hadoop版本

hadoop version

根据我们hadoop版本选择合适的spark包

1.3 上传&解压

将刚刚下载的spark-2.4.0-bin-hadoop2.7.tgz文件上传到家node1.sdp.cn节点

上传

#切换到上传目录

cd /opt/software/

#使用rz或者WinSCP工具上传

rz

解压

#解压到指定目录下

tar -zxvf spark-2.4.0-bin-hadoop2.7.tgz -C /opt/module

2、配置

切换到spark的conf目录

cd conf

2.1 配置slaves

复制slaves模板文件

cp slaves.template slaves

指定worker节点hostname

vim slaves

node3.sdp.cn

node4.sdp.cn

node5.sdp.cn

2.2 配置spark-env.sh

vim spark-env.sh

配置明细

# Alternate conf dir. (Default: ${SPARK_HOME}/conf)

export SPARK_CONF_DIR=${SPARK_CONF_DIR:-/usr/hdp/current/spark2-historyserver/conf}

# Where log files are stored.(Default:${SPARK_HOME}/logs)

#export SPARK_LOG_DIR=${SPARK_HOME:-/usr/hdp/current/spark2-historyserver}/logs

export SPARK_LOG_DIR=/var/log/spark2

# Where the pid file is stored. (Default: /tmp)

export SPARK_PID_DIR=/var/run/spark2

#Memory for Master, Worker and history server (default: 1024MB)

export SPARK_DAEMON_MEMORY=5120m

# A string representing this instance of spark.(Default: $USER)

SPARK_IDENT_STRING=$USER

# The scheduling priority for daemons. (Default: 0)

SPARK_NICENESS=0

export HADOOP_HOME=${HADOOP_HOME:-/usr/hdp/current/hadoop-client}

export HADOOP_CONF_DIR=${HADOOP_CONF_DIR:-/usr/hdp/current/hadoop-client/conf}

export SPARK_DAEMON_JAVA_OPTS="-Dspark.deploy.recoveryMode=ZOOKEEPER -Dspark.deploy.zookeeper.url=node3.sdp.cn:2181,node4.sdp.cn:2181,node5.sdp.cn:2181 -Dspark.deploy.zookeeper.dir=/spark"

# The java implementation to use.

export JAVA_HOME=/usr/jdk64/jdk1.8.0_112

采用zookeeper实现高可用HA配置

export SPARK_DAEMON_JAVA_OPTS="-Dspark.deploy.recoveryMode=ZOOKEEPER -Dspark.deploy.zookeeper.url=node3.sdp.cn:2181,node4.sdp.cn:2181,node5.sdp.cn:2181 -Dspark.deploy.zookeeper.dir=/spark"

2.3 scp到其它节点

scp -r spark-2.4.0 [email protected]:/opt/module

scp -r spark-2.4.0 [email protected]:/opt/module

scp -r spark-2.4.0 [email protected]:/opt/module

scp -r spark-2.4.0 [email protected]:/opt/module

启动集群

在master节点进入到spark的sbin目录

cd sbin

#启动整个集群

./start-all.sh

在standby节点

./start-master.sh spark://node1.sdp.cn:7077

访问spark web UI

默认端口8080 http://node1.sdp.cn:8080/