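The Softmax layer converts an input vector of prediction scores into probabilities: every element lies between 0 and 1, and the elements sum to 1. Softmax loss is the multinomial cross-entropy loss computed on the Softmax output. Below we derive the forward and backward passes of both and walk through their implementation in the Caffe source. To tie the derivations to the code, each derivation is followed directly by the code that implements it.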

1. Softmax

1.1 Forward pass

1.1.1 Derivation

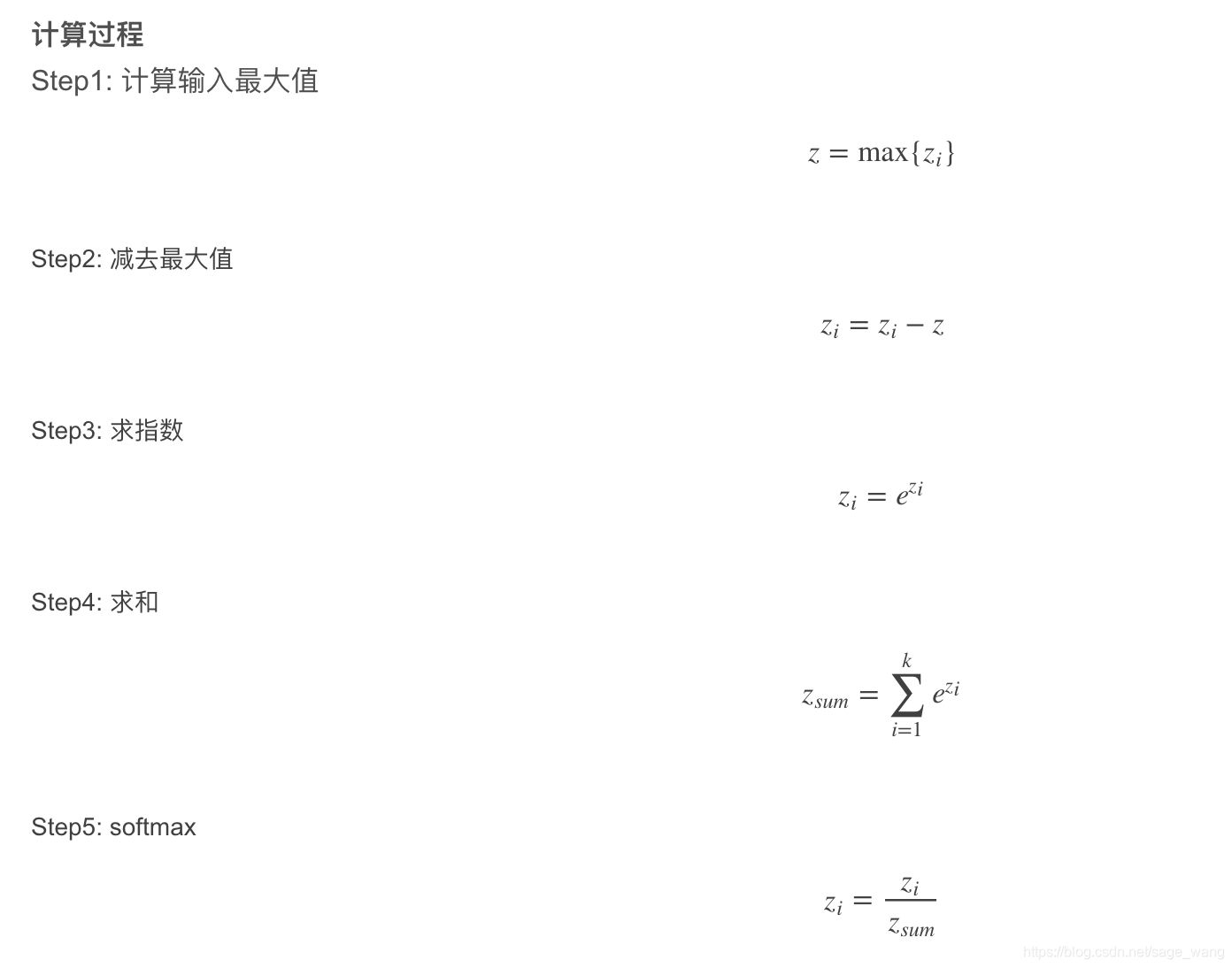

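Suppose there are $K$ classes and the preceding layers have produced a score $z_i$ for each class. Softmax then computes the corresponding probabilities as:

$$\mathrm{Softmax}(z_i) = \frac{\exp(z_i)}{\sum_{j} \exp(z_j)}, \quad i = 0, 1, 2, \ldots, K-1$$

This maps each $z_i$ into [0, 1] with the outputs summing to 1, which gives the probability that the input is predicted as each class.

The forward pass is fairly simple, so let's look at the implementation.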

1.1.2 Source code

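We mainly analyze the Forward_cpu function of the Softmax layer, which is implemented in src/caffe/layers/softmax_layer.cpp in Caffe. Note that the Caffe implementation first subtracts the maximum from the input values $z_i$. This avoids the overflow that the subsequent exp() call could otherwise produce on large inputs, and it does not change the result because the common factor cancels in the ratio.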
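The max-subtraction trick can be sketched in isolation for a single score vector. This is a minimal illustration, not Caffe code: the helper name `stable_softmax` and the use of `std::vector<double>` are assumptions made for the example.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Numerically stable softmax over one score vector.
// Hypothetical helper for illustration; not part of Caffe.
std::vector<double> stable_softmax(const std::vector<double>& z) {
  assert(!z.empty());
  // Subtract the maximum so the largest exponent is exp(0) = 1.
  const double m = *std::max_element(z.begin(), z.end());
  std::vector<double> a(z.size());
  double sum = 0.0;
  for (std::size_t i = 0; i < z.size(); ++i) {
    a[i] = std::exp(z[i] - m);
    sum += a[i];
  }
  // Normalize so that the outputs sum to 1.
  for (double& v : a) v /= sum;
  return a;
}
```

With scores such as {1000, 1001, 1002}, a naive exp() would overflow a double, while this version yields the same probabilities as for {0, 1, 2}.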

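First, a few variables in the code below that are not self-explanatory:

scale_data: an intermediate buffer that holds intermediate results.

inner_num_: declared in softmax_layer.hpp and computed as inner_num_ = bottom[0]->count(softmax_axis_ + 1); that is, the total number of positions (for example pixels) across the dimensions after the softmax axis, each of which gets its own probability distribution.

outer_num_: declared in softmax_layer.hpp and computed as outer_num_ = bottom[0]->count(0, softmax_axis_); this can be understood as the number of samples.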
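To make the roles of outer_num_, channels and inner_num_ concrete, here is a small sketch of how a blob shape is split around the softmax axis and how the flat index i * dim + j * inner_num_ + k used in the code is formed. The helper names `split_shape` and `blob_offset` are hypothetical and only mirror the arithmetic; they are not Caffe functions.

```cpp
#include <cassert>
#include <vector>

// Split a blob shape at softmax_axis into {outer_num, channels, inner_num}.
// Hypothetical helper that mirrors count(0, axis) and count(axis + 1).
std::vector<int> split_shape(const std::vector<int>& shape, int softmax_axis) {
  const int rank = static_cast<int>(shape.size());
  assert(softmax_axis >= 0 && softmax_axis < rank);
  int outer = 1;
  int inner = 1;
  for (int a = 0; a < softmax_axis; ++a) outer *= shape[a];
  for (int a = softmax_axis + 1; a < rank; ++a) inner *= shape[a];
  return {outer, shape[softmax_axis], inner};
}

// Flat offset of sample i, class j, position k in a blob viewed as
// outer_num x channels x inner_num.
int blob_offset(int i, int j, int k, int channels, int inner_num) {
  assert(j >= 0 && j < channels && k >= 0 && k < inner_num);
  const int dim = channels * inner_num;  // elements per sample
  return i * dim + j * inner_num + k;
}
```

For a (2, 3, 4, 5) blob with the softmax axis at 1, this gives outer_num = 2, channels = 3 and inner_num = 20, so each sample holds 20 independent distributions over 3 classes.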

template <typename Dtype>

void SoftmaxLayer<Dtype>::Forward_cpu(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top) {

const Dtype* bottom_data = bottom[0]->cpu_data();

Dtype* top_data = top[0]->mutable_cpu_data();

Dtype* scale_data = scale_.mutable_cpu_data(); // scale_data is an intermediate buffer that holds intermediate results

int channels = bottom[0]->shape(softmax_axis_);

int dim = bottom[0]->count() / outer_num_;

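// Initialize the output data to the input data

caffe_copy(bottom[0]->count(), bottom_data, top_data);

// We need to subtract the max, compute exp, and then normalize.

for (int i = 0; i < outer_num_; ++i) {

// Initialize the intermediate buffer scale_data to the first channel plane of sample i

caffe_copy(inner_num_, bottom_data + i * dim, scale_data);

// For each position of the sample, find the maximum input score over all classes and store it in scale_data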

for (int j = 0; j < channels; j++) {

for (int k = 0; k < inner_num_; k++) {

scale_data[k] = std::max(scale_data[k],

bottom_data[i * dim + j * inner_num_ + k]);

}

}

// Subtract the maximum

caffe_cpu_gemm<Dtype>(CblasNoTrans, CblasNoTrans, channels, inner_num_,

1, -1., sum_multiplier_.cpu_data(), scale_data, 1., top_data);

// Compute exp()

caffe_exp<Dtype>(dim, top_data, top_data);

// Sum over the classes

caffe_cpu_gemv<Dtype>(CblasTrans, channels, inner_num_, 1.,

top_data, sum_multiplier_.cpu_data(), 0., scale_data);

// Divide by the sum computed above

for (int j = 0; j < channels; j++) {

caffe_div(inner_num_, top_data, scale_data, top_data);

top_data += inner_num_; // advance the pointer

}

}

}

1.2 Backward pass

1.2.1 Derivation

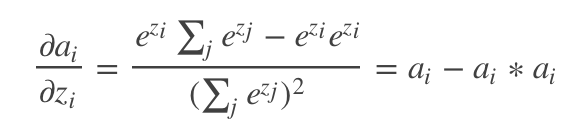

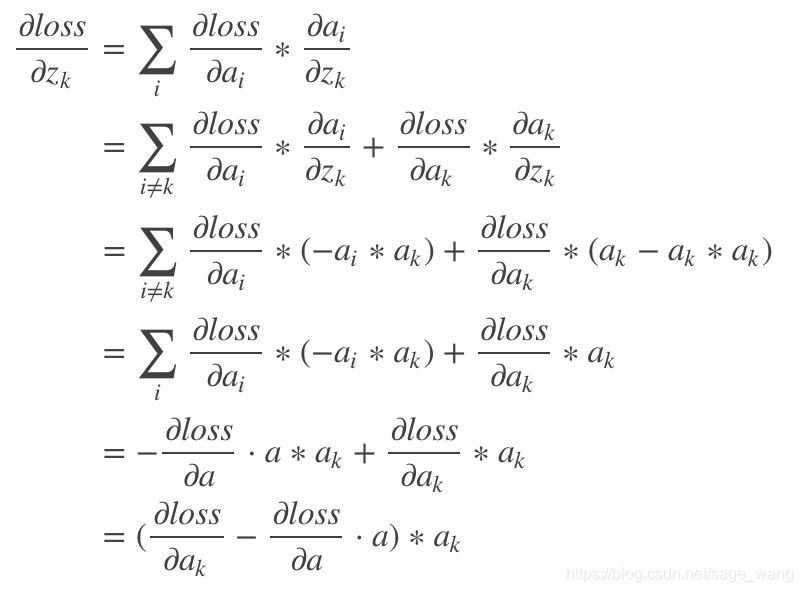

如前,设Softmax的输入为zi,输出为ai,那么由链式法则,损失loss对其输入zi的偏导可以如下计算:

= ⋅

其中

是上面的层传回来的梯度,对本层来说是已知的,所以我们只需计算

。

由ai=

当i=j,

这里∗表示标量算数乘法。

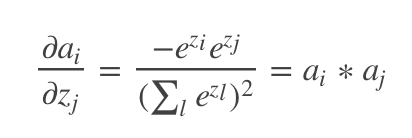

当i≠j, 等式的右边为 -ai * aj

所以,

这里的 ⋅⋅ 表示向量点乘。

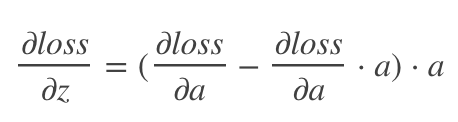

上式写成向量形式,即为:

即为 ( top_diff - top_diff ⋅ top_data) ⋅ top_data。
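The vector form above can be sketched for a single sample as follows. This is an illustrative helper under the assumption of one distribution per call; the name `softmax_backward` and the `std::vector<double>` types are not from Caffe, only the variable names top_data, top_diff and bottom_diff follow the source.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Gradient of the loss w.r.t. the softmax input for one sample.
// top_data is the softmax output a, top_diff is dl/da from the layer above.
// Hypothetical helper for illustration; not part of Caffe.
std::vector<double> softmax_backward(const std::vector<double>& top_data,
                                     const std::vector<double>& top_diff) {
  assert(top_data.size() == top_diff.size());
  // Scalar dot(top_diff, top_data).
  double dot = 0.0;
  for (std::size_t j = 0; j < top_data.size(); ++j) {
    dot += top_diff[j] * top_data[j];
  }
  // Subtract the dot product from every element, then multiply element-wise.
  std::vector<double> bottom_diff(top_data.size());
  for (std::size_t i = 0; i < top_data.size(); ++i) {
    bottom_diff[i] = (top_diff[i] - dot) * top_data[i];
  }
  return bottom_diff;
}
```

With a = (0.2, 0.3, 0.5) and top_diff = (1, 0, 0), the result is the first row of the Jacobian: a_0 * (1 - a_0) = 0.16, -a_0 * a_1 = -0.06 and -a_0 * a_2 = -0.1, which agrees with the two cases derived above.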

1.2.2 Source code

Next we analyze the Backward_cpu function of the Softmax layer, which is implemented in src/caffe/layers/softmax_layer.cpp in Caffe.

template <typename Dtype>

void SoftmaxLayer<Dtype>::Backward_cpu(const vector<Blob<Dtype>*>& top,

const vector<bool>& propagate_down,

const vector<Blob<Dtype>*>& bottom) {

const Dtype* top_diff = top[0]->cpu_diff();

const Dtype* top_data = top[0]->cpu_data();

Dtype* bottom_diff = bottom[0]->mutable_cpu_diff();

Dtype* scale_data = scale_.mutable_cpu_data();

int channels = top[0]->shape(softmax_axis_);

int dim = top[0]->count() / outer_num_;

caffe_copy(top[0]->count(), top_diff, bottom_diff); // initialize bottom_diff to the values of top_diff

for (int i = 0; i < outer_num_; ++i) {

// Start of the computation

for (int k = 0; k < inner_num_; ++k) {

// Compute dot(top_diff, top_data)

scale_data[k] = caffe_cpu_strided_dot<Dtype>(channels,

bottom_diff + i * dim + k, inner_num_,

top_data + i * dim + k, inner_num_);

}

// Subtract

caffe_cpu_gemm<Dtype>(CblasNoTrans, CblasNoTrans, channels, inner_num_, 1,

-1., sum_multiplier_.cpu_data(), scale_data, 1., bottom_diff + i * dim);

}

// Element-wise multiplication

caffe_mul(top[0]->count(), bottom_diff, top_data, bottom_diff);

}

2. Softmax Loss

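Softmax Loss takes the probabilities output by Softmax as the predicted probabilities and computes the cross-entropy loss against the true label. In Caffe it also calls the Softmax layer to implement its forward pass.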

SoftmaxWithLoss = Multinomial Logistic Loss Layer + Softmax Layer

2.1 Forward pass

2.1.1 Derivation

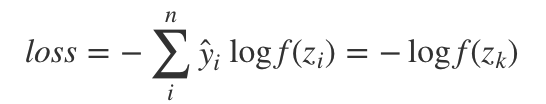

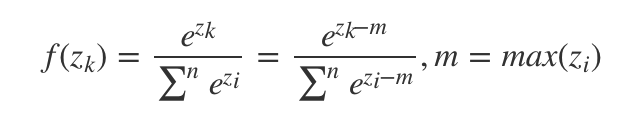

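Its core formula is:

$$\ell(\hat{y}, z) = -\log\left(\frac{e^{z_k - m}}{\sum_{j} e^{z_j - m}}\right) = \log\left(\sum_{j} e^{z_j - m}\right) - (z_k - m)$$

where $\hat{y}$ is the label, $k$ is the output neuron corresponding to the label of the input image, and $m = \max_j z_j$ is the maximum of the scores, subtracted mainly for numerical stability.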

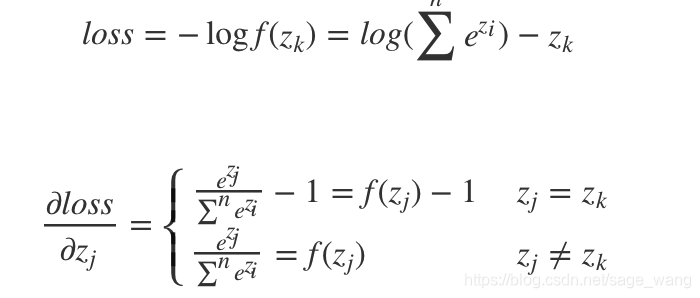
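The loss for one sample can be sketched directly in log-space, using the maximum m for stability. This is an illustrative variant, not the Caffe code path: the Caffe Forward_cpu shown later instead takes the log of the Softmax output clamped at FLT_MIN. The helper name `softmax_loss` is an assumption of this example.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Cross-entropy of softmax(z) against the integer label k for one sample,
// computed as log(sum_j exp(z_j - m)) - (z_k - m).
// Hypothetical helper for illustration; not part of Caffe.
double softmax_loss(const std::vector<double>& z, std::size_t k) {
  assert(k < z.size());
  // m is the maximum score, subtracted for numerical stability.
  const double m = *std::max_element(z.begin(), z.end());
  double sum = 0.0;
  for (double v : z) sum += std::exp(v - m);
  return std::log(sum) - (z[k] - m);
}
```

For ten equal scores the loss is log(10), and for a confidently wrong prediction such as z = (1000, 0) with label 1 the loss stays finite at about 1000 instead of overflowing.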

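2.2 Backward pass

2.2.1 Derivation

Its core formula is:

$$\frac{\partial \ell}{\partial z_i} = \begin{cases} a_i - 1, & i = k \\ a_i, & i \neq k \end{cases}$$

where $a_i$ is the Softmax output for class $i$ and $k$ is the class given by the label.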

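One point to note: in the backward pass, SoftmaxWithLossLayer does not borrow part of the work from SoftmaxLayer as it does in the forward pass, but handles everything itself. SoftmaxLayer::Backward_cpu() is therefore left unused here.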
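The gradient of the Softmax loss with respect to the scores is simply the probability vector with 1 subtracted at the labelled class. A minimal single-sample sketch, before any normalization or loss weighting, could look like this; the helper name `softmax_loss_backward` is hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Gradient of the softmax cross-entropy loss w.r.t. the input scores
// for one sample: prob - onehot(k).
// Hypothetical helper for illustration; not part of Caffe.
std::vector<double> softmax_loss_backward(const std::vector<double>& prob,
                                          std::size_t k) {
  assert(k < prob.size());
  std::vector<double> bottom_diff(prob);  // start from the probabilities
  bottom_diff[k] -= 1.0;                  // subtract 1 at the labelled class
  return bottom_diff;
}
```

Because the probabilities sum to 1, the entries of this gradient always sum to 0.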

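If the network diverges during training on a 10-class task, the final result is accuracy ≈ 0.1 (the network has no predictive power at all and is purely guessing) and loss ≈ -log(0.1) = 2.3026. So if you see a loss of around 2.3 on a 10-class problem, the network has not converged and the hyperparameter configuration needs adjusting. How to adjust it usually comes down to experience.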
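The 2.3026 figure is specific to 10 classes. For a different number of classes, the chance-level loss of a uniform prediction is -log(1/K), which a small hypothetical helper makes explicit:

```cpp
#include <cassert>
#include <cmath>

// Loss of a classifier that assigns probability 1/K to every class.
// Hypothetical helper for illustration; not part of Caffe.
double chance_level_loss(int num_classes) {
  assert(num_classes > 0);
  return -std::log(1.0 / static_cast<double>(num_classes));
}
```

For example, chance_level_loss(10) is about 2.3026, while a 1000-class problem would plateau near 6.9078 instead.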

2.3 Usage

2.3.1 Using it in Caffe

layer {

name: "loss"

type: "SoftmaxWithLoss"

bottom: "fc8"

bottom: "label"

top: "loss"

}

The parameters of the SoftmaxWithLoss layer in Caffe are as follows:

// Message that stores parameters shared by loss layers

message LossParameter {

// If specified, ignore instances with the given label.

// The label value to ignore

optional int32 ignore_label = 1;

// How to normalize the loss for loss layers that aggregate across batches,

// spatial dimensions, or other dimensions. Currently only implemented in

// SoftmaxWithLoss and SigmoidCrossEntropyLoss layers.

enum NormalizationMode {

// Divide by the number of examples in the batch times spatial dimensions.

// Outputs that receive the ignore label will NOT be ignored in computing

// the normalization factor.

// Divide the loss of one forward pass by the total number of labels

FULL = 0;

// Divide by the total number of output locations that do not take the

// ignore_label. If ignore_label is not set, this behaves like FULL.

// Divide the loss of one forward pass by the number of valid (non-ignored) labels

VALID = 1;

// Divide by the batch size.

// Note: the default set below is VALID, not BATCH_SIZE.

BATCH_SIZE = 2;

// Do not normalize the loss.

NONE = 3;

}

// For historical reasons, the default normalization for

// SigmoidCrossEntropyLoss is BATCH_SIZE and *not* VALID.

optional NormalizationMode normalization = 3 [default = VALID];

// Deprecated. Ignored if normalization is specified. If normalization

// is not specified, then setting this to false will be equivalent to

// normalization = BATCH_SIZE to be consistent with previous behavior.

// If normalize == false, normalization = BATCH_SIZE

// If normalize == true, normalization = VALID

optional bool normalize = 2;

}
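As a worked illustration of the four normalization modes described in the comments above, the following sketch computes the divisor for each mode. It is a simplified stand-in with hypothetical names (`NormalizationMode`, `loss_normalizer`), written from the proto comments rather than copied from Caffe, and it assumes the count of non-ignored labels is passed in directly.

```cpp
#include <algorithm>
#include <cassert>

// Simplified stand-in for the proto enum; hypothetical, for illustration.
enum class NormalizationMode { FULL, VALID, BATCH_SIZE, NONE };

// Divisor applied to the summed loss.
// outer_num: batch size, inner_num: spatial positions per sample,
// valid_count: number of labels that are not the ignore_label.
double loss_normalizer(NormalizationMode mode, int outer_num, int inner_num,
                       int valid_count) {
  assert(outer_num > 0 && inner_num > 0 && valid_count >= 0);
  double n = 1.0;
  switch (mode) {
    case NormalizationMode::FULL:       n = outer_num * inner_num; break;
    case NormalizationMode::VALID:      n = valid_count;           break;
    case NormalizationMode::BATCH_SIZE: n = outer_num;             break;
    case NormalizationMode::NONE:       n = 1.0;                   break;
  }
  // Clamp to at least 1 so an all-ignored batch does not divide by zero.
  return std::max(1.0, n);
}
```

With a batch of 2 samples, 3 positions each and one ignored label, the divisors are 6 (FULL), 5 (VALID), 2 (BATCH_SIZE) and 1 (NONE).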

First, let's look at the header file for SoftmaxWithLoss:

#ifndef CAFFE_SOFTMAX_WITH_LOSS_LAYER_HPP_

#define CAFFE_SOFTMAX_WITH_LOSS_LAYER_HPP_

#include <vector>

#include "caffe/blob.hpp"

#include "caffe/layer.hpp"

#include "caffe/proto/caffe.pb.h"

#include "caffe/layers/loss_layer.hpp"

#include "caffe/layers/softmax_layer.hpp"

namespace caffe {

/**

* @brief Computes the multinomial logistic loss for a one-of-many

* classification task, passing real-valued predictions through a

* softmax to get a probability distribution over classes.

*

* This layer should be preferred over separate

* SoftmaxLayer + MultinomialLogisticLossLayer

* as its gradient computation is more numerically stable.

* At test time, this layer can be replaced simply by a SoftmaxLayer.

*

* @param bottom input Blob vector (length 2)

* -# @f$ (N \times C \times H \times W) @f$

* the predictions @f$ x @f$, a Blob with values in

* @f$ [-\infty, +\infty] @f$ indicating the predicted score for each of

* the @f$ K = CHW @f$ classes. This layer maps these scores to a

* probability distribution over classes using the softmax function

* @f$ \hat{p}_{nk} = \exp(x_{nk}) /

* \left[\sum_{k'} \exp(x_{nk'})\right] @f$ (see SoftmaxLayer).

* -# @f$ (N \times 1 \times 1 \times 1) @f$

* the labels @f$ l @f$, an integer-valued Blob with values

* @f$ l_n \in [0, 1, 2, ..., K - 1] @f$

* indicating the correct class label among the @f$ K @f$ classes

* @param top output Blob vector (length 1)

* -# @f$ (1 \times 1 \times 1 \times 1) @f$

* the computed cross-entropy classification loss: @f$ E =

* \frac{-1}{N} \sum\limits_{n=1}^N \log(\hat{p}_{n,l_n})

* @f$, for softmax output class probabilities @f$ \hat{p} @f$

*/

template <typename Dtype>

class SoftmaxWithLossLayer : public LossLayer<Dtype> {

public:

/**

* @param param provides LossParameter loss_param, with options:

* - ignore_label (optional)

* Specify a label value that should be ignored when computing the loss.

* - normalize (optional, default true)

* If true, the loss is normalized by the number of (nonignored) labels

* present; otherwise the loss is simply summed over spatial locations.

*/

explicit SoftmaxWithLossLayer(const LayerParameter& param)

: LossLayer<Dtype>(param) {}

virtual void LayerSetUp(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top);

virtual void Reshape(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top);

virtual inline const char* type() const { return "SoftmaxWithLoss"; }

virtual inline int ExactNumBottomBlobs() const { return -1; }

virtual inline int MinBottomBlobs() const { return 2; }

virtual inline int MaxBottomBlobs() const { return 3; }

virtual inline int ExactNumTopBlobs() const { return -1; }

virtual inline int MinTopBlobs() const { return 1; }

virtual inline int MaxTopBlobs() const { return 2; }

protected:

virtual void Forward_cpu(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top);

virtual void Forward_gpu(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top);

/**

* @brief Computes the softmax loss error gradient w.r.t. the predictions.

*

* Gradients cannot be computed with respect to the label inputs (bottom[1]),

* so this method ignores bottom[1] and requires !propagate_down[1], crashing

* if propagate_down[1] is set.

*

* @param top output Blob vector (length 1), providing the error gradient with

* respect to the outputs

* -# @f$ (1 \times 1 \times 1 \times 1) @f$

* This Blob's diff will simply contain the loss_weight* @f$ \lambda @f$,

* as @f$ \lambda @f$ is the coefficient of this layer's output

* @f$\ell_i@f$ in the overall Net loss

* @f$ E = \lambda_i \ell_i + \mbox{other loss terms}@f$; hence

* @f$ \frac{\partial E}{\partial \ell_i} = \lambda_i @f$.

* (*Assuming that this top Blob is not used as a bottom (input) by any

* other layer of the Net.)

* @param propagate_down see Layer::Backward.

* propagate_down[1] must be false as we can't compute gradients with

* respect to the labels.

* @param bottom input Blob vector (length 2)

* -# @f$ (N \times C \times H \times W) @f$

* the predictions @f$ x @f$; Backward computes diff

* @f$ \frac{\partial E}{\partial x} @f$

* -# @f$ (N \times 1 \times 1 \times 1) @f$

* the labels -- ignored as we can't compute their error gradients

*/

virtual void Backward_cpu(const vector<Blob<Dtype>*>& top,

const vector<bool>& propagate_down, const vector<Blob<Dtype>*>& bottom);

virtual void Backward_gpu(const vector<Blob<Dtype>*>& top,

const vector<bool>& propagate_down, const vector<Blob<Dtype>*>& bottom);

/// Read the normalization mode parameter and compute the normalizer based

/// on the blob size. If normalization_mode is VALID, the count of valid

/// outputs will be read from valid_count, unless it is -1 in which case

/// all outputs are assumed to be valid.

virtual Dtype get_normalizer(

LossParameter_NormalizationMode normalization_mode, Dtype valid_count);

/// The internal SoftmaxLayer used to map predictions to a distribution.

// Declare the softmax layer

shared_ptr<Layer<Dtype> > softmax_layer_;

/// prob stores the output probability predictions from the SoftmaxLayer.

// Stores the probabilities output by the softmax layer

Blob<Dtype> prob_;

/// bottom vector holder used in call to the underlying SoftmaxLayer::Forward

// The bottom passed to the softmax layer's forward function

vector<Blob<Dtype>*> softmax_bottom_vec_;

/// top vector holder used in call to the underlying SoftmaxLayer::Forward

// The top passed to the softmax layer's forward function

vector<Blob<Dtype>*> softmax_top_vec_;

// Whether to ignore instances with a certain label.

// Whether a label value should be ignored

bool has_ignore_label_;

/// The label indicating that an instance should be ignored.

int ignore_label_;

bool has_hard_ratio_;

float hard_ratio_;

bool has_hard_mining_label_;

int hard_mining_label_;

bool has_class_weight_;

Blob<Dtype> class_weight_;

Blob<Dtype> counts_;

Blob<Dtype> loss_;

/// How to normalize the output loss.

// The loss normalization type

LossParameter_NormalizationMode normalization_;

bool has_cutting_point_;

Dtype cutting_point_;

std::string normalize_type_;

int softmax_axis_, outer_num_, inner_num_;

};

} // namespace caffe

#endif // CAFFE_SOFTMAX_WITH_LOSS_LAYER_HPP_

The implementation of the functions:

#include <algorithm>

#include <cfloat>

#include <vector>

#include "caffe/layers/softmax_loss_layer.hpp"

#include "caffe/util/math_functions.hpp"

namespace caffe {

template <typename Dtype>

void SoftmaxWithLossLayer<Dtype>::LayerSetUp(

const vector<Blob<Dtype>*>& bottom, const vector<Blob<Dtype>*>& top) {

LossLayer<Dtype>::LayerSetUp(bottom, top);

normalize_type_ =

this->layer_param_.softmax_param().normalize_type();

// Normalize with softmax

if (normalize_type_ == "Softmax") {

LayerParameter softmax_param(this->layer_param_);

softmax_param.set_type("Softmax");

softmax_layer_ = LayerRegistry<Dtype>::CreateLayer(softmax_param);

softmax_bottom_vec_.clear();

softmax_bottom_vec_.push_back(bottom[0]);

softmax_top_vec_.clear();

softmax_top_vec_.push_back(&prob_);

softmax_layer_->SetUp(softmax_bottom_vec_, softmax_top_vec_);

}

else if(normalize_type_ == "L2" || normalize_type_ == "L1") {

LayerParameter normalize_param(this->layer_param_);

normalize_param.set_type("Normalize");

softmax_layer_ = LayerRegistry<Dtype>::CreateLayer(normalize_param);

softmax_bottom_vec_.clear();

softmax_bottom_vec_.push_back(bottom[0]);

softmax_top_vec_.clear();

softmax_top_vec_.push_back(&prob_);

softmax_layer_->SetUp(softmax_bottom_vec_, softmax_top_vec_);

}

else {

NOT_IMPLEMENTED;

}

has_ignore_label_ =

this->layer_param_.loss_param().has_ignore_label();

if (has_ignore_label_) {

ignore_label_ = this->layer_param_.loss_param().ignore_label();

}

has_hard_ratio_ =

this->layer_param_.softmax_param().has_hard_ratio();

if (has_hard_ratio_) {

hard_ratio_ = this->layer_param_.softmax_param().hard_ratio();

CHECK_GE(hard_ratio_, 0);

CHECK_LE(hard_ratio_, 1);

}

has_cutting_point_ =

this->layer_param_.softmax_param().has_cutting_point();

if (has_cutting_point_) {

cutting_point_ = this->layer_param_.softmax_param().cutting_point();

CHECK_GE(cutting_point_, 0);

CHECK_LE(cutting_point_, 1);

}

has_hard_mining_label_ = this->layer_param_.softmax_param().has_hard_mining_label();

if (has_hard_mining_label_) {

hard_mining_label_ = this->layer_param_.softmax_param().hard_mining_label();

}

has_class_weight_ = (this->layer_param_.softmax_param().class_weight_size() != 0);

softmax_axis_ =

bottom[0]->CanonicalAxisIndex(this->layer_param_.softmax_param().axis());

if (has_class_weight_) {

class_weight_.Reshape({ bottom[0]->shape(softmax_axis_) });

CHECK_EQ(this->layer_param_.softmax_param().class_weight().size(), bottom[0]->shape(softmax_axis_));

for (int i = 0; i < bottom[0]->shape(softmax_axis_); i++) {

class_weight_.mutable_cpu_data()[i] = (Dtype)this->layer_param_.softmax_param().class_weight(i);

}

}

else {

if (bottom.size() == 3) {

class_weight_.Reshape({ bottom[0]->shape(softmax_axis_) });

for (int i = 0; i < bottom[0]->shape(softmax_axis_); i++) {

class_weight_.mutable_cpu_data()[i] = (Dtype)1.0;

}

}

}

if (!this->layer_param_.loss_param().has_normalization() &&

this->layer_param_.loss_param().has_normalize()) {

normalization_ = this->layer_param_.loss_param().normalize() ?

LossParameter_NormalizationMode_VALID :

LossParameter_NormalizationMode_BATCH_SIZE;

} else {

normalization_ = this->layer_param_.loss_param().normalization();

}

}

template <typename Dtype>

void SoftmaxWithLossLayer<Dtype>::Reshape(

const vector<Blob<Dtype>*>& bottom, const vector<Blob<Dtype>*>& top) {

LossLayer<Dtype>::Reshape(bottom, top);

softmax_layer_->Reshape(softmax_bottom_vec_, softmax_top_vec_);

softmax_axis_ =

bottom[0]->CanonicalAxisIndex(this->layer_param_.softmax_param().axis());

outer_num_ = bottom[0]->count(0, softmax_axis_);

inner_num_ = bottom[0]->count(softmax_axis_ + 1);

counts_.Reshape({ outer_num_, inner_num_ });

loss_.Reshape({ outer_num_, inner_num_ });

CHECK_EQ(outer_num_ * inner_num_, bottom[1]->count())

<< "Number of labels must match number of predictions; "

<< "e.g., if softmax axis == 1 and prediction shape is (N, C, H, W), "

<< "label count (number of labels) must be N*H*W, "

<< "with integer values in {0, 1, ..., C-1}.";

if (bottom.size() == 3) {

CHECK_EQ(outer_num_ * inner_num_, bottom[2]->count())

<< "Number of loss weights must match number of label.";

}

if (top.size() >= 2) {

// softmax output

top[1]->ReshapeLike(*bottom[0]);

}

if (has_class_weight_) {

CHECK_EQ(class_weight_.count(), bottom[0]->shape(1));

}

}

template <typename Dtype>

Dtype SoftmaxWithLossLayer<Dtype>::get_normalizer(

LossParameter_NormalizationMode normalization_mode, Dtype valid_count) {

Dtype normalizer;

switch (normalization_mode) {

case LossParameter_NormalizationMode_FULL:

normalizer = Dtype(outer_num_ * inner_num_);

break;

case LossParameter_NormalizationMode_VALID:

if (valid_count == -1) {

normalizer = Dtype(outer_num_ * inner_num_);

} else {

normalizer = valid_count;

}

break;

case LossParameter_NormalizationMode_BATCH_SIZE:

normalizer = Dtype(outer_num_);

break;

case LossParameter_NormalizationMode_NONE:

normalizer = Dtype(1);

break;

default:

LOG(FATAL) << "Unknown normalization mode: "

<< LossParameter_NormalizationMode_Name(normalization_mode);

}

// Some users will have no labels for some examples in order to 'turn off' a

// particular loss in a multi-task setup. The max prevents NaNs in that case.

return std::max(Dtype(1.0), normalizer);

}

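// The forward pass mainly uses the softmax layer to output the probabilities of all classes for each sample. For example, given an image of a dog, it outputs the probabilities of dog, cat and monkey: [0.8, 0.1, 0.1]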

template <typename Dtype>

void SoftmaxWithLossLayer<Dtype>::Forward_cpu(

const vector<Blob<Dtype>*>& bottom, const vector<Blob<Dtype>*>& top) {

// The forward pass computes the softmax prob values.

softmax_layer_->Forward(softmax_bottom_vec_, softmax_top_vec_);

const Dtype* prob_data = prob_.cpu_data();

const Dtype* label = bottom[1]->cpu_data();

int dim = prob_.count() / outer_num_;

Dtype count = 0;

Dtype loss = 0;

if (bottom.size() == 2) {

for (int i = 0; i < outer_num_; ++i) {

for (int j = 0; j < inner_num_; j++) {

const int label_value = static_cast<int>(label[i * inner_num_ + j]);

if (has_ignore_label_ && label_value == ignore_label_) {

continue;

}

DCHECK_GE(label_value, 0);

DCHECK_LT(label_value, prob_.shape(softmax_axis_));

loss -= log(std::max(prob_data[i * dim + label_value * inner_num_ + j],

Dtype(FLT_MIN)));

count += 1;

}

}

}

else if(bottom.size() == 3) {

const Dtype* weights = bottom[2]->cpu_data();

for (int i = 0; i < outer_num_; ++i) {

for (int j = 0; j < inner_num_; j++) {

const int label_value = static_cast<int>(label[i * inner_num_ + j]);

const Dtype weight_value = weights[i * inner_num_ + j] * (has_class_weight_? class_weight_.cpu_data()[label_value] : 1.0);

if (weight_value == 0) continue;

if (has_ignore_label_ && label_value == ignore_label_) {

continue;

}

DCHECK_GE(label_value, 0);

DCHECK_LT(label_value, prob_.shape(softmax_axis_));

loss -= weight_value * log(std::max(prob_data[i * dim + label_value * inner_num_ + j],

Dtype(FLT_MIN)));

count += weight_value;

}

}

}

top[0]->mutable_cpu_data()[0] = loss / get_normalizer(normalization_, count);

if (top.size() == 2) {

top[1]->ShareData(prob_);

}

}

template <typename Dtype>

void SoftmaxWithLossLayer<Dtype>::Backward_cpu(const vector<Blob<Dtype>*>& top,

const vector<bool>& propagate_down, const vector<Blob<Dtype>*>& bottom) {

if (propagate_down[1]) {

LOG(FATAL) << this->type()

<< " Layer cannot backpropagate to label inputs.";

}

if (propagate_down[0]) {

Dtype* bottom_diff = bottom[0]->mutable_cpu_diff();

const Dtype* prob_data = prob_.cpu_data();

caffe_copy(prob_.count(), prob_data, bottom_diff);

const Dtype* label = bottom[1]->cpu_data();

int dim = prob_.count() / outer_num_;

Dtype count = 0;

if (bottom.size() == 2) {

for (int i = 0; i < outer_num_; ++i) {

for (int j = 0; j < inner_num_; ++j) {

const int label_value = static_cast<int>(label[i * inner_num_ + j]);

if (has_ignore_label_ && label_value == ignore_label_) {

for (int c = 0; c < bottom[0]->shape(softmax_axis_); ++c) {

bottom_diff[i * dim + c * inner_num_ + j] = 0;

}

}

else {

// Implements the backward gradient formula: subtract 1 at the labelled class

bottom_diff[i * dim + label_value * inner_num_ + j] -= 1;

count += 1;

}

}

}

}

else if (bottom.size() == 3) {

const Dtype* weights = bottom[2]->cpu_data();

for (int i = 0; i < outer_num_; ++i) {

for (int j = 0; j < inner_num_; ++j) {

const int label_value = static_cast<int>(label[i * inner_num_ + j]);

const Dtype weight_value = weights[i * inner_num_ + j];

if (has_ignore_label_ && label_value == ignore_label_) {

for (int c = 0; c < bottom[0]->shape(softmax_axis_); ++c) {

bottom_diff[i * dim + c * inner_num_ + j] = 0;

}

}

else {

bottom_diff[i * dim + label_value * inner_num_ + j] -= 1;

for (int c = 0; c < bottom[0]->shape(softmax_axis_); ++c) {

bottom_diff[i * dim + c * inner_num_ + j] *= weight_value * (has_class_weight_ ? class_weight_.cpu_data()[label_value] : 1.0);

}

if(weight_value != 0) count += weight_value;

}

}

}

}

// Scale gradient

// The normalization mode determines how the gradient is scaled

Dtype loss_weight = top[0]->cpu_diff()[0] /

get_normalizer(normalization_, count);

caffe_scal(prob_.count(), loss_weight, bottom_diff);

}

}

#ifdef CPU_ONLY

STUB_GPU(SoftmaxWithLossLayer);

#endif

INSTANTIATE_CLASS(SoftmaxWithLossLayer);

REGISTER_LAYER_CLASS(SoftmaxWithLoss);

}