pom.xml

<!-- HttpClient support -->

<dependency>

<groupId>org.apache.httpcomponents</groupId>

<artifactId>httpclient</artifactId>

<version>4.5.2</version>

</dependency>

<!-- jsoup support -->

<dependency>

<groupId>org.jsoup</groupId>

<artifactId>jsoup</artifactId>

<version>1.10.1</version>

</dependency>

<!-- log4j logging support -->

<dependency>

<groupId>log4j</groupId>

<artifactId>log4j</artifactId>

<version>1.2.16</version>

</dependency>

<!-- MySQL JDBC driver -->

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>5.1.44</version>

</dependency>

<!-- Ehcache support -->

<dependency>

<groupId>net.sf.ehcache</groupId>

<artifactId>ehcache</artifactId>

<version>2.10.3</version>

</dependency>

<!-- Commons IO support -->

<dependency>

<groupId>commons-io</groupId>

<artifactId>commons-io</artifactId>

<version>2.5</version>

</dependency>

Ehcache.xml

<?xml version="1.0" encoding="UTF-8"?>

<ehcache>

<!--

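Disk store: moves cached objects that are temporarily unused to disk, similar to virtual memory on Windows

path: the directory on disk where the objects are stored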

-->

<diskStore path="E:\Temp\ReptileCache" />

<!--

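defaultCache: the default cache configuration, used for anything that is not configured explicitly

maxElementsInMemory: the maximum number of elements kept in memory

eternal: whether elements never expire

overflowToDisk: when the number of in-memory elements reaches maxElementsInMemory, Ehcache writes elements to disk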

-->

<defaultCache

maxElementsInMemory="1"

eternal="true"

overflowToDisk="true"/>

<cache

name="cnblog"

maxElementsInMemory="1"

diskPersistent="true"

eternal="true"

overflowToDisk="true"/>

</ehcache>

crawler.properties: database settings and file paths

dbUrl=jdbc:mysql://localhost:3306/db_blogs?characterEncoding=UTF-8

dbUserName=your_db_username

dbPassword=your_db_password

jdbcName=com.mysql.jdbc.Driver

ehcacheXmlPath=ehcache.xml

# Directory where downloaded images are stored

blogImages=E://Temp/blogImages/

Logging configuration: log4j.properties

log4j.rootLogger=INFO, stdout,D

#Console

log4j.appender.stdout=org.apache.log4j.ConsoleAppender

log4j.appender.stdout.Target = System.out

log4j.appender.stdout.layout=org.apache.log4j.PatternLayout

#log4j.appender.stdout.layout.ConversionPattern=[%-5p] %d{yyyy-MM-dd HH:mm:ss,SSS} method:%l%n%m%n

#D

log4j.appender.D = org.apache.log4j.RollingFileAppender

log4j.appender.D.File = E://Temp/log4j/crawler/log.log

log4j.appender.D.MaxFileSize=100KB

log4j.appender.D.MaxBackupIndex=100

log4j.appender.D.Append = true

log4j.appender.D.layout = org.apache.log4j.PatternLayout

log4j.appender.D.layout.ConversionPattern = %-d{yyyy-MM-dd HH:mm:ss} [ %t:%r ] - [ %p ] %m%n

Next come the helper classes the crawler uses

package com.hw.util;

import java.text.SimpleDateFormat;

import java.util.Date;

/**

* Date utility class

* @author user

*

*/

public class DateUtil {

/**

* Returns the current date as a year/month/day path (pattern yyyy/MM/dd)

* @return

* @throws Exception

*/

public static String getCurrentDatePath()throws Exception{

Date date=new Date();

SimpleDateFormat sdf=new SimpleDateFormat("yyyy/MM/dd");

return sdf.format(date);

}

public static void main(String[] args) {

try {

System.out.println(getCurrentDatePath());

} catch (Exception e) {

// TODO Auto-generated catch block

e.printStackTrace();

}

}

}
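
DateUtil creates a new SimpleDateFormat on every call, which is safe but a little wasteful. As one possible alternative, the same yyyy/MM/dd path can be built with java.time, whose DateTimeFormatter is immutable and can be shared between threads. This is only a sketch: DatePathSketch, pathFor and currentDatePath are illustrative names that do not exist in the original project.

```java
import java.time.LocalDate;
import java.time.format.DateTimeFormatter;

class DatePathSketch {

    // Immutable and thread-safe, so a single shared instance is enough
    private static final DateTimeFormatter PATH_FORMAT = DateTimeFormatter.ofPattern("yyyy/MM/dd");

    // Formats the given date as a year/month/day path
    public static String pathFor(LocalDate date) {
        return date.format(PATH_FORMAT);
    }

    // Same role as DateUtil.getCurrentDatePath(), without the checked exception
    public static String currentDatePath() {
        return pathFor(LocalDate.now());
    }

    public static void main(String[] args) {
        System.out.println(pathFor(LocalDate.of(2018, 12, 3))); // prints 2018/12/03
        System.out.println(currentDatePath());
    }
}
```

Passing the date in as a parameter keeps the formatting logic testable with a fixed date.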

package com.hw.util;

import java.sql.Connection;

import java.sql.DriverManager;

/**

* Database utility class

* @author user

*

*/

public class DbUtil {

/**

* Opens a database connection

* @return

* @throws Exception

*/

public Connection getCon()throws Exception{

Class.forName(PropertiesUtil.getValue("jdbcName"));

Connection con=DriverManager.getConnection(PropertiesUtil.getValue("dbUrl"), PropertiesUtil.getValue("dbUserName"), PropertiesUtil.getValue("dbPassword"));

return con;

}

/**

* Closes a database connection

* @param con

* @throws Exception

*/

public void closeCon(Connection con)throws Exception{

if(con!=null){

con.close();

}

}

public static void main(String[] args) {
    DbUtil dbUtil = new DbUtil();
    // try-with-resources closes the test connection again
    try (Connection con = dbUtil.getCon()) {
        System.out.println("Database connection succeeded");
    } catch (Exception e) {
        e.printStackTrace();
        System.out.println("Database connection failed");
    }
}

}

package com.hw.util;

import java.io.IOException;

import java.io.InputStream;

import java.util.Properties;

/**

* Properties utility class

* @author user

*

*/

public class PropertiesUtil {

/**
 * Returns the value for the given key from crawler.properties
 * @param key
 * @return the value, or null if the key or the file is missing
 */
public static String getValue(String key) {
    Properties prop = new Properties();
    // The file is read from the classpath root; try-with-resources closes the stream
    try (InputStream in = PropertiesUtil.class.getResourceAsStream("/crawler.properties")) {
        if (in != null) {
            prop.load(in);
        }
    } catch (IOException e) {
        e.printStackTrace();
    }
    return prop.getProperty(key);
}

}
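
PropertiesUtil.getValue reads crawler.properties again on every call. A possible variation, sketched here under the assumption that the file does not change while the crawler is running, parses the properties once and answers later lookups from memory. CachedProps and its methods are hypothetical names, and the properties text is passed in as a string only so that the example runs without a classpath resource.

```java
import java.io.IOException;
import java.io.Reader;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

class CachedProps {

    // Filled once by parse(); later lookups never touch the source again
    private final Properties props = new Properties();

    // Parses properties-format text once and returns the loaded holder
    public static CachedProps parse(String text) {
        CachedProps cached = new CachedProps();
        try (Reader in = new StringReader(text)) {
            cached.props.load(in);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return cached;
    }

    // Returns the value for the key, or null if the key is absent
    public String get(String key) {
        return props.getProperty(key);
    }

    // Returns the value for the key, or the fallback if the key is absent
    public String getOrDefault(String key, String fallback) {
        return props.getProperty(key, fallback);
    }

    public static void main(String[] args) {
        CachedProps p = CachedProps.parse("ehcacheXmlPath=ehcache.xml\njdbcName=com.mysql.jdbc.Driver\n");
        System.out.println(p.get("ehcacheXmlPath"));
        System.out.println(p.getOrDefault("blogImages", "(not set)"));
    }
}
```

In the real project the text would come from the classpath resource instead; the trade-off is that edits to the file are not picked up until the next start.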

The crawler class

package com.hw.crawler;

import com.hw.util.DateUtil;

import com.hw.util.DbUtil;

import com.hw.util.PropertiesUtil;

import net.sf.ehcache.Cache;

import net.sf.ehcache.CacheManager;

import net.sf.ehcache.Status;

import org.apache.commons.io.FileUtils;

import org.apache.http.HttpEntity;

import org.apache.http.client.ClientProtocolException;

import org.apache.http.client.config.RequestConfig;

import org.apache.http.client.methods.CloseableHttpResponse;

import org.apache.http.client.methods.HttpGet;

import org.apache.http.impl.client.CloseableHttpClient;

import org.apache.http.impl.client.HttpClients;

import org.apache.http.util.EntityUtils;

import org.apache.log4j.Logger;

import org.jsoup.Jsoup;

import org.jsoup.nodes.Document;

import org.jsoup.nodes.Element;

import org.jsoup.select.Elements;

import java.io.File;

import java.io.IOException;

import java.sql.Connection;

import java.sql.PreparedStatement;

import java.sql.SQLException;

import java.util.ArrayList;

import java.util.HashMap;

import java.util.LinkedList;

import java.util.List;

import java.util.Map;

import java.util.Set;

import java.util.UUID;

/**
 * @program: Maven
 * @description: Crawler class
 * @author: hw
 * @create: 2018-12-03 20:12
 **/
public class BlogCrawlerStarter {

    // Number of articles crawled since this process started
    private static int index = 0;
    // Logger
    private static Logger logger = Logger.getLogger(BlogCrawlerStarter.class);
    // Home page URL to crawl; this is a placeholder, replace it with the site of your choice
    private static String HOMEURL = "<home page URL of your choice>";
    private static CloseableHttpClient httpClient;
    // Database connection
    private static Connection con;
    // Cache
    private static CacheManager cacheManager;
    private static Cache cache;

    /**
     * @Description: Fetches the home page with HttpClient and passes it to the parser
     * @Param: []
     * @return: void
     * @Author: hw
     * @Date: 2018/12/3
     */
    public static void parseHomePage() throws IOException {
        logger.info("Start crawling home page " + HOMEURL);
        // Create the cache manager from the configured ehcache.xml
        cacheManager = CacheManager.create(PropertiesUtil.getValue("ehcacheXmlPath"));
        // The named cache that records URLs already crawled
        cache = cacheManager.getCache("cnblog");
        // The HTTP client plays the role of a browser
        httpClient = HttpClients.createDefault();
        HttpGet httpGet = new HttpGet(HOMEURL);
        // Connect timeout 5 s, socket (read) timeout 8 s
        RequestConfig config = RequestConfig.custom().setConnectTimeout(5000).setSocketTimeout(8000).build();
        httpGet.setConfig(config);
        CloseableHttpResponse response = null;
        try {
            response = httpClient.execute(httpGet);
            // No response
            if (response == null) {
                logger.info(HOMEURL + " returned no response");
                return;
            }
            // Status OK
            if (response.getStatusLine().getStatusCode() == 200) {
                // Response body
                HttpEntity entity = response.getEntity();
                String s = EntityUtils.toString(entity, "utf-8");
                parseHomePageContent(s);
            }
        } catch (ClientProtocolException e) {
            logger.error(HOMEURL + "-ClientProtocolException", e);
        } catch (IOException e) {
            logger.error(HOMEURL + "-IOException", e);
        } finally {
            // Release the response and the client
            if (response != null) {
                response.close();
            }
            if (httpClient != null) {
                httpClient.close();
            }
        }
        // Write the cache to disk before shutting the manager down
        if (cache.getStatus() == Status.STATUS_ALIVE) {
            cache.flush();
        }
        cacheManager.shutdown();
        logger.info("Finished crawling " + HOMEURL);
    }

    /**
     * @Description: Parses the home page with jsoup and extracts the blog links
     * @Param: [s]
     * @return: void
     * @Author: hw
     * @Date: 2018/12/3
     */
    private static void parseHomePageContent(String s) {
        Document doc = Jsoup.parse(s);
        // Select the article links (a tags) in the feed list
        Elements select = doc.select("#feedlist_id .list_con .title h2 a");
        for (Element element : select) {
            // Blog link
            String href = element.attr("href");
            if (href == null || href.equals("")) {
                logger.error("Blog link is empty, skipping");
                continue;
            }
            // The cache already contains this URL
            if (cache.get(href) != null) {
                logger.info("Already crawled and stored, skipping; " + index + " crawled so far in this run");
                continue;
            }
            // Fetch the blog page behind the link
            parseBlogUrl(href);
        }
    }

    /**
     * @Description: Fetches a blog page by its link
     * @Param: [blogUrl]
     * @return: void
     * @Author: hw
     * @Date: 2018/12/3
     */
    private static void parseBlogUrl(String blogUrl) {
        logger.info("Start crawling blog page " + blogUrl);
        // Reuse the client opened in parseHomePage; creating a new one per article
        // would overwrite the shared field and leave the earlier clients unclosed
        HttpGet httpGet = new HttpGet(blogUrl);
        // Connect timeout 5 s, socket (read) timeout 8 s
        RequestConfig config = RequestConfig.custom().setConnectTimeout(5000).setSocketTimeout(8000).build();
        httpGet.setConfig(config);
        CloseableHttpResponse response = null;
        try {
            response = httpClient.execute(httpGet);
            // No response
            if (response == null) {
                logger.info(blogUrl + " returned no response");
                return;
            }
            // Status OK
            if (response.getStatusLine().getStatusCode() == 200) {
                // Response body
                HttpEntity entity = response.getEntity();
                String blogContent = EntityUtils.toString(entity, "utf-8");
                parseBlogContent(blogContent, blogUrl);
            }
        } catch (ClientProtocolException e) {
            logger.error(blogUrl + "-ClientProtocolException", e);
        } catch (IOException e) {
            logger.error(blogUrl + "-IOException", e);
        } finally {
            // Release the response
            if (response != null) {
                try {
                    response.close();
                } catch (IOException e) {
                    logger.error(blogUrl + "-IOException", e);
                }
            }
        }
        logger.info("Finished crawling blog page " + blogUrl);
    }

    /**
     * @Description: Parses a blog page, extracts its title and full content, and stores them
     * @Param: [blogContent, url]
     * @return: void
     * @Author: hw
     * @Date: 2018/12/3
     */
    private static void parseBlogContent(String blogContent, String url) throws IOException {
        Document parse = Jsoup.parse(blogContent);
        // Title element
        Elements title = parse.select("#mainBox main .blog-content-box .article-header-box .article-header .article-title-box h1");
        if (title.size() == 0) {
            logger.info("Blog title is empty, not saving to the database");
            return;
        }
        // Title text
        String titles = title.get(0).html();
        // Content element
        Elements content_views = parse.select("#content_views");
        if (content_views.size() == 0) {
            logger.info("Blog content is empty, not saving to the database");
            return;
        }
        // Content HTML
        String content = content_views.get(0).html();
        // Image localization (disabled)
//        Elements imgele = parse.select("img");
//        List<String> imglist = new LinkedList<>();
//        if (imgele.size() >= 0) {
//            for (Element element : imgele) {
//                // Collect the image URLs
//                imglist.add(element.attr("src"));
//            }
//        }
//
//        if (imglist.size() > 0) {
//            Map<String, String> replaceUrlMap = DownLoadImgList(imglist);
//            blogContent = replaceContent(blogContent, replaceUrlMap);
//        }
        String sql = "insert into t_article values(null,?,?,null,now(),0,0,null,?,0,null)";
        // try-with-resources closes the statement after each insert
        try (PreparedStatement ps = con.prepareStatement(sql)) {
            ps.setString(1, titles);
            ps.setString(2, content);
            ps.setString(3, url);
            if (ps.executeUpdate() == 0) {
                logger.info("Failed to insert the crawled blog into the database");
            } else {
                index++;
                // Record the URL in the cache so it is not crawled again
                cache.put(new net.sf.ehcache.Element(url, url));
                logger.info("Inserted the crawled blog into the database; " + index + " crawled so far in this run");
            }
        } catch (SQLException e) {
            logger.error(url + "-SQLException", e);
        }
    }

/**

* @Description: Post-processes the crawled blog content, replacing the original image URLs with the local image paths

* @Param: [blogContent, replaceUrlMap]

* @return: java.lang.String

* @Author: hw

* @Date: 2018/12/3

*/

private static String replaceContent(String blogContent, Map<String, String> replaceUrlMap) {

Set<Map.Entry<String, String>> entries = replaceUrlMap.entrySet();

for (Map.Entry<String, String> entry : entries) {

blogContent = blogContent.replace(entry.getKey(), entry.getValue());

}

return blogContent;

}

    /**
     * @Description: Downloads the crawled images to local storage (disabled)
     * @Param: [imglist]
     * @return: java.util.Map<java.lang.String , java.lang.String>
     * @Author: hw
     * @Date: 2018/12/3
     */
//    private static Map<String, String> DownLoadImgList(List<String> imglist) throws IOException {
//        Map<String, String> replaceMap = new HashMap<>();
//        for (String imgurl : imglist) {
//            // The HTTP client plays the role of a browser
//            CloseableHttpClient httpClient = HttpClients.createDefault();
//            HttpGet httpGet = new HttpGet(imgurl);
//            // Connect timeout 5 s, socket (read) timeout 8 s
//            RequestConfig config = RequestConfig.custom().setConnectTimeout(5000).setSocketTimeout(8000).build();
//            httpGet.setConfig(config);
//            CloseableHttpResponse response = null;
//            try {
//                response = httpClient.execute(httpGet);
//                // No response
//                if (response == null) {
//                    logger.info(imgurl + " returned no response");
//                } else {
//                    // Status OK
//                    if (response.getStatusLine().getStatusCode() == 200) {
//                        // Response body
//                        HttpEntity entity = response.getEntity();
//                        // Base directory for stored images
//                        String blogImages = PropertiesUtil.getValue("blogImages");
//                        // Date path, so that one folder is created per day
//                        String data = DateUtil.getCurrentDatePath();
//                        String uuid = UUID.randomUUID().toString();
//                        // Use the content-type subtype (the part after "/") as the file extension
//                        String subfix = entity.getContentType().getValue().split("/")[1];
//                        // Final local file path
//                        String fileName = blogImages + data + "/" + uuid + "." + subfix;
//
//                        FileUtils.copyInputStreamToFile(entity.getContent(), new File(fileName));
//                        // imgurl is the remote image URL, fileName is the local image path
//                        replaceMap.put(imgurl, fileName);
//                    }
//                }
//
//            } catch (ClientProtocolException e) {
//                logger.error(imgurl + "-ClientProtocolException", e);
//            } catch (IOException e) {
//                logger.error(imgurl + "-IOException", e);
//            } catch (Exception e) {
//                logger.error(imgurl + "-Exception", e);
//            } finally {
//                // Release the response
//                if (response != null) {
//                    response.close();
//                }
//
//            }
//
//        }
//        return replaceMap;
//    }

    /**
     * @Description: Runs the crawl loop
     * @Param: []
     * @return: void
     * @Author: hw
     * @Date: 2018/12/3
     */
    public static void start() {
        while (true) {
            DbUtil dbUtil = new DbUtil();
            try {
                // Connect to the database
                con = dbUtil.getCon();
                parseHomePage();
            } catch (Exception e) {
                // Covers a failed database connection as well as a failed crawl
                logger.error("Database connection or crawl failed", e);
            } finally {
                if (con != null) {
                    try {
                        con.close();
                    } catch (SQLException e) {
                        logger.info("Failed to close the database connection", e);
                    }
                }
            }
            try {
                // Crawl once every 45 seconds
                Thread.sleep(1000 * 45 * 1);
            } catch (InterruptedException e) {
                logger.info("Thread sleep interrupted", e);
            }
        }
    }

    public static void main(String[] args) {
        // start() loops forever, so nothing placed after this call would ever run
        start();
    }

}
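
The duplicate check in the crawler comes down to two rules: skip a link whose URL is already in the cache, and record a URL only after its article has been stored successfully. The sketch below illustrates just that flow. It is not the crawler itself: a plain HashSet stands in for the Ehcache cache, a Predicate stands in for the database insert, and SeenUrls, crawl and the example.com URLs are hypothetical. Unlike the disk-persistent cache configured in ehcache.xml, this set is lost when the JVM exits.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Set;
import java.util.function.Predicate;

class SeenUrls {

    // Stand-in for the Ehcache cache: URLs that were stored successfully
    private final Set<String> seen = new HashSet<>();

    // Processes each link at most once and returns how many were newly stored
    public int crawl(List<String> links, Predicate<String> store) {
        int stored = 0;
        for (String href : links) {
            if (href == null || href.isEmpty()) {
                continue; // empty link, nothing to fetch
            }
            if (seen.contains(href)) {
                continue; // already crawled in an earlier pass
            }
            if (store.test(href)) {
                // Record the URL only after the save succeeded,
                // so a failed insert is retried on the next pass
                seen.add(href);
                stored++;
            }
        }
        return stored;
    }

    public static void main(String[] args) {
        SeenUrls crawler = new SeenUrls();
        List<String> page = Arrays.asList(
                "https://example.com/a", "", "https://example.com/b", "https://example.com/a");
        System.out.println(crawler.crawl(page, url -> true)); // prints 2
        System.out.println(crawler.crawl(page, url -> true)); // prints 0
    }
}
```

Because a URL is added only after a successful save, an article whose insert fails is picked up again on the next pass of the loop.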

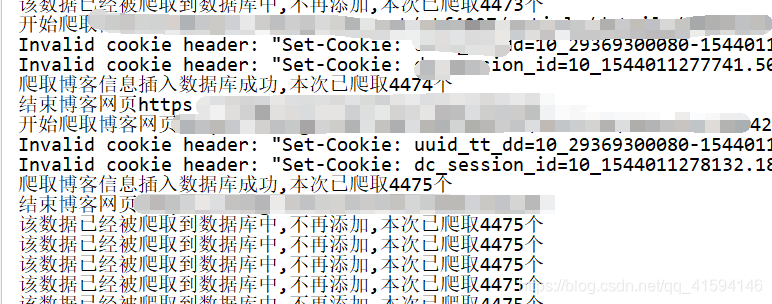

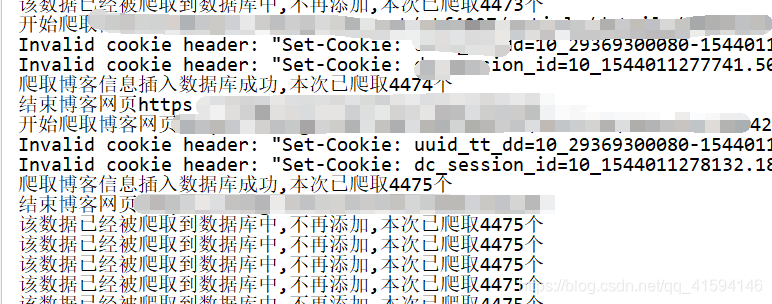

Result screenshots: