Scraping data from a web page

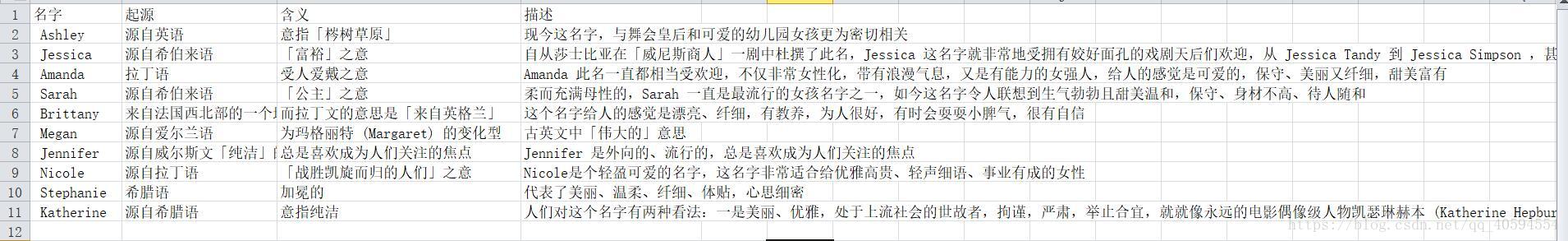

The screenshot above shows the result.

The code is as follows:

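```python
import csv

import requests
from bs4 import BeautifulSoup

url = 'https://www.chunyuyisheng.com/pc/article/22127/'
resp = requests.get(url, timeout=10)
resp.raise_for_status()  # stop early on HTTP errors instead of parsing an error page
html = resp.text
soup = BeautifulSoup(html, 'html.parser')

# Text of every <p> paragraph on the page, in document order
qq = [p.get_text() for p in soup.find_all('p')]

# The indices below are specific to this article's layout: after the first
# entry, each entry occupies 7 paragraphs. There are 10 entries in total.

# Names: paragraph 4, then every 7th paragraph from 12 to 68; drop the first 2 characters
name = [qq[4][2:]] + [qq[a][2:] for a in range(12, 70, 7)]
# The last name needs one more leading character removed
name[-1] = name[-1][1:]
print(name)

# Similar names: paragraph 6, then every 7th from 14 to 70; drop the first 3 characters
# (collected and printed, but not written to the CSV)
SimilarName = [qq[6][3:]] + [qq[b][3:] for b in range(14, 71, 7)]
print(SimilarName)

# Descriptions: paragraph 8, then every 7th from 16 to 72
Description = [qq[8]] + [qq[c] for c in range(16, 73, 7)]
print(Description)

# Split each description into three parts.
# If the page uses the full-width comma '，', split on that instead of ','.
Source = []        # text before the first comma (origin)
Mean = []          # text after the first comma, up to the first '。' (meaning)
Description1 = []  # the second '。'-separated sentence (description)
for z in Description:
    Source.append(z.split(',')[0])
    Mean.append(z.split(',', 1)[1].split('。')[0])
    Description1.append(z.split('。')[1])

# One row per entry; renamed from `all` to avoid shadowing the built-in all()
rows = [list(r) for r in zip(name, Source, Mean, Description1)]
for row in rows:
    print(row)

# newline='' is required by the csv module; utf-8-sig lets Excel detect the Chinese text
with open('E:\\textn.csv', 'w', newline='', encoding='utf-8-sig') as f:
    writer = csv.writer(f)
    # Header columns: name, origin, meaning, description
    writer.writerow(['名字', '起源', '含义', '描述'])
    writer.writerows(rows)
```

Feel free to modify the code and experiment with it yourself.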
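As one example of such a modification, the description-splitting step can be pulled into a small function and checked offline, without fetching the page. This is a sketch, not the original author's code: `parse_description` is a hypothetical helper name, the sample strings are made up for illustration, and accepting either the ASCII comma or the full-width '，' is an assumption about how the page text might be punctuated.

```python
import re


def parse_description(text):
    """Split one description paragraph into (source, meaning, detail).

    Mirrors the splitting logic of the script above:
      source  = text before the first comma
      meaning = text after the first comma, up to the first '。'
      detail  = the second '。'-separated sentence
    Assumption: the comma may be ASCII ',' or full-width '，'.
    Missing parts come back as empty strings instead of raising IndexError.
    """
    parts = re.split(r'[,，]', text, maxsplit=1)
    source = parts[0]
    rest = parts[1] if len(parts) > 1 else ''
    meaning = rest.split('。')[0]
    sentences = text.split('。')
    detail = sentences[1] if len(sentences) > 1 else ''
    return source, meaning, detail


if __name__ == '__main__':
    sample = '源自希腊语，意思是“光明”。这个名字在欧美很常见。'  # hypothetical sample text
    print(parse_description(sample))
    # → ('源自希腊语', '意思是“光明”', '这个名字在欧美很常见')
```

Keeping the parsing in a pure function like this makes it easy to try different separators or index offsets against a few saved paragraphs before rerunning the full scrape.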