【一】整体流程综述

gensim底层封装了Google的Word2Vec的c接口,借此实现了word2vec。使用gensim接口非常方便,整体流程如下:

1. 数据预处理(分词后的数据)

2. 数据读取

3.模型定义与训练

4.模型保存与加载

5.模型使用(相似度计算,词向量获取)

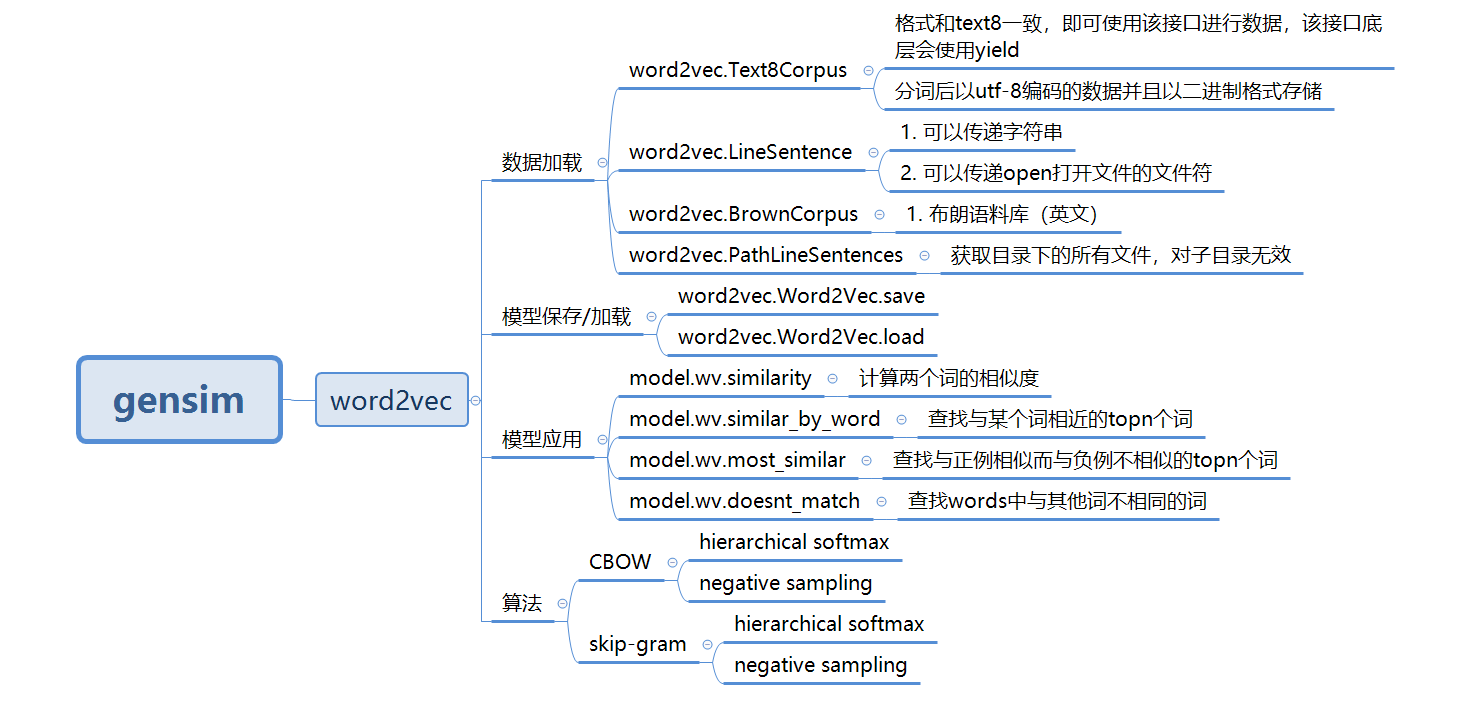

【二】gensim提供的word2vec主要功能

【三】gensim接口使用示例

1. 使用jieba进行分词。

文本数据:《人民的名义》的小说原文作为语料

百度云盘:https://pan.baidu.com/s/1ggA4QwN

# -*- coding:utf-8 -*-

import jieba

def preprocess_in_the_name_of_people():

with open("in_the_name_of_people.txt",mode='rb') as f:

doc = f.read()

doc_cut = jieba.cut(doc)

result = ' '.join(doc_cut)

result = result.encode('utf-8')

with open("in_the_name_of_people_cut.txt",mode='wb') as f2:

f2.write(result)2. 使用原始text8.zip进行词向量训练

from gensim.models import word2vec

# 引入日志配置

import logging

logging.basicConfig(format='%(asctime)s : %(levelname)s : %(message)s', level=logging.INFO)

def train_text8():

sent = word2vec.Text8Corpus(fname="text8")

model = word2vec.Word2Vec(sentences=sent)

model.save("text8.model")注意。这里是解压后的文件,不是zip包

3. 使用Text8Corpus 接口加载数据

def train_in_the_name_of_people():

sent = word2vec.Text8Corpus(fname="in_the_name_of_people_cut.txt")

model = word2vec.Word2Vec(sentences=sent)

model.save("in_the_name_of_people.model")4. 使用 LineSentence 接口加载数据

def train_line_sentence():

with open("in_the_name_of_people_cut.txt", mode='rb') as f:

# 传递open的fd

sent = word2vec.LineSentence(f)

model = word2vec.Word2Vec(sentences=sent)

model.save("line_sentnce.model")5. 使用 PathLineSentences 接口加载数据

def train_PathLineSentences():

# 传递目录,遍历目录下的所有文件

sent = word2vec.PathLineSentences("in_the_name_of_people")

model = word2vec.Word2Vec(sentences=sent)

model.save("PathLineSentences.model")6. 数据加载与训练分开

def train_left():

sent = word2vec.Text8Corpus(fname="in_the_name_of_people_cut.txt")

# 定义模型

model = word2vec.Word2Vec()

# 构造词典

model.build_vocab(sentences=sent)

# 模型训练

model.train(sentences=sent,total_examples = model.corpus_count,epochs = model.iter)

model.save("left.model")7. 模型加载与使用

model = word2vec.Word2Vec.load("text8.model")

print(model.similarity("eat","food"))

print(model.similarity("cat","dog"))

print(model.similarity("man","woman"))

print(model.most_similar("man"))

print(model.wv.most_similar(positive=['woman', 'king'], negative=['man'],topn=1))

model2 = word2vec.Word2Vec.load("in_the_name_of_people.model")

print(model2.most_similar("吃饭"))

print(model2.similarity("省长","省委书记"))

model2 = word2vec.Word2Vec.load("line_sentnce.model")

print(model2.similarity("李达康","市委书记"))

top3 = model2.wv.similar_by_word(word="李达康",topn=3)

print(top3)

model2 = word2vec.Word2Vec.load("PathLineSentences.model")

print(model2.similarity("李达康","书记"))

print(model2.wv.similarity("李达康","书记"))

print(model2.wv.doesnt_match(words=["李达康","高育良","赵立春"]))

model = word2vec.Word2Vec.load("left.model")

print(model.similarity("李达康","书记"))结果如下:

0.5434648

0.8383337

0.7435267

[('woman', 0.7435266971588135), ('girl', 0.6460582613945007), ('creature', 0.589219868183136), ('person', 0.570125937461853), ('evil', 0.5688984990119934), ('god', 0.5465947389602661), ('boy', 0.544859766960144), ('bride', 0.5401148796081543), ('soul', 0.5365912914276123), ('stranger', 0.531282901763916)]

[('queen', 0.7230167388916016)]

[('只能', 0.9983761310577393), ('招待所', 0.9983713626861572), ('深深', 0.9983667135238647), ('干警', 0.9983251094818115), ('警察', 0.9983127117156982), ('公安', 0.9983105659484863), ('赵德汉', 0.9982908964157104), ('似乎', 0.9982795715332031), ('一场', 0.9982751607894897), ('才能', 0.9982657432556152)]

0.97394305

0.99191403

[('新', 0.9974302053451538), ('赵立春', 0.9974139928817749), ('谈一谈', 0.9971731901168823)]

0.91472965

0.91472965

高育良

0.885189958. 参考链接

https://github.com/RaRe-Technologies/gensim

https://github.com/RaRe-Technologies/gensim/blob/develop/docs/notebooks/word2vec.ipynb