Andrew Ng's Neural Networks and Deep Learning: Deep Neural Network, Exercise 4

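Building the DNN architecture

a^[l]: the activation (output of the activation function) of layer l

w^[l], b^[l]: the parameters of layer l

x^(i): the i-th training example

a^[l]_i: the activation of the i-th neuron in layer l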

Packages

numpy

matplotlib

dnn_utils

import numpy as np

import h5py

import matplotlib.pyplot as plt

import testCases_v2  # most calls below use the testCases_v2.<name>() form

from testCases_v2 import *

from dnn_utils_v2 import sigmoid, sigmoid_backward, relu, relu_backward

%matplotlib inline

plt.rcParams['figure.figsize'] = (5.0, 4.0) # set default size of plots

plt.rcParams['image.interpolation'] = 'nearest'

plt.rcParams['image.cmap'] = 'gray'

%load_ext autoreload

%autoreload 2

np.random.seed(1)

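Assignment outline

Build a 2-layer NN and an L-layer NN

1. Initialize the parameters

2. Implement forward propagation

Implement the linear part (Z^[l])

Implement the activation functions (ReLU/sigmoid)

Combine the previous two steps into one [LINEAR->ACTIVATION] forward step

Repeat [LINEAR->RELU] L-1 times, then use [LINEAR->SIGMOID] for the last layer

3. Compute the cost

4. Implement backward propagation

Implement the linear part of backward propagation

Compute the derivatives of the activation functions (relu_backward/sigmoid_backward)

Combine the previous two steps into one [LINEAR->ACTIVATION] backward step

Repeat [LINEAR->RELU] backward L-1 times, after starting from the [LINEAR->SIGMOID] backward step of the last layer

5. Update the parameters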

Initialization

2-layer NN

import numpy as np

def initialize_parameters(n_x, n_h, n_y):
    '''
    input:
        n_x: input layer size
        n_h: hidden layer size
        n_y: output layer size
    output:
        parameters: dictionary holding W1, b1, W2, b2
            W1, b1: 1st layer parameters
                W1.shape = (n_h, n_x)
                b1.shape = (n_h, 1)
            W2, b2: 2nd layer parameters
                W2.shape = (n_y, n_h)
                b2.shape = (n_y, 1)
    '''
    np.random.seed(1)
    # small random weights break the symmetry between hidden units
    W1 = np.random.randn(n_h, n_x) * 0.01
    W2 = np.random.randn(n_y, n_h) * 0.01
    # biases can safely start at zero
    b1 = np.zeros((n_h, 1))
    b2 = np.zeros((n_y, 1))
    assert (W1.shape == (n_h, n_x))
    assert (W2.shape == (n_y, n_h))
    assert (b1.shape == (n_h, 1))
    assert (b2.shape == (n_y, 1))
    parameters = {
        "W1": W1,
        "W2": W2,
        "b1": b1,
        "b2": b2}
    return parameters

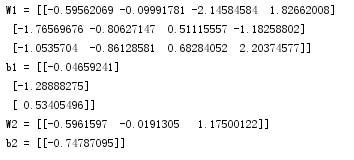

parameters = initialize_parameters(2,2,1)

print("W1 = " + str(parameters["W1"]))

print("b1 = " + str(parameters["b1"]))

print("W2 = " + str(parameters["W2"]))

print("b2 = " + str(parameters["b2"]))
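The random draw in the initialization matters because hidden units that start with identical weights compute identical outputs. The sketch below is only an illustration under assumed values: the data `X`, the constant `0.5`, and the layer sizes are made up, and it uses `np.random.default_rng` instead of `np.random.seed`, so the numbers will not match the output above.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.standard_normal((2, 5))            # 2 features, 5 examples (made-up data)

# Constant initialization: every hidden unit has the same weights.
W1_const = np.full((3, 2), 0.5)
Z1_const = W1_const @ X                    # shape (3, 5)

# Small random initialization, the scheme used in this section.
W1_rand = rng.standard_normal((3, 2)) * 0.01
Z1_rand = W1_rand @ X

# Compare every row (hidden unit) with the first row.
rows_identical_const = np.allclose(Z1_const, Z1_const[0])
rows_identical_rand = np.allclose(Z1_rand, Z1_rand[0])
print("constant init, identical units:", rows_identical_const)
print("random init, identical units:  ", rows_identical_rand)
```

With constant weights the three hidden units stay interchangeable, so gradient descent would update them identically; the random draw is what lets them learn different features.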

L-layer NN

import two_layer_nn

import numpy as np

def initialize_parameters_deep(layer_dims):
    '''
    input:
        layer_dims: python list containing the dimensions of each layer in the network
            example: layer_dims = [2, 4, 1] describes a 2-layer NN whose input layer
            has 2 units, hidden layer has 4 units and output layer has 1 unit
    output:
        parameters: dictionary holding W1, b1, W2, b2, ..., WL, bL
            W1.shape = (n1, n0), b1.shape = (n1, 1)
            W2.shape = (n2, n1), b2.shape = (n2, 1)
            ...
            WL.shape = (nL, nL-1), bL.shape = (nL, 1)
    '''
    np.random.seed(3)
    parameters = {}
    L = len(layer_dims)  # number of entries in layer_dims, including the input layer
    for i in range(1, L):
        parameters["W" + str(i)] = np.random.randn(layer_dims[i], layer_dims[i - 1]) * 0.01
        parameters["b" + str(i)] = np.zeros((layer_dims[i], 1))
        assert (parameters["W" + str(i)].shape == (layer_dims[i], layer_dims[i - 1]))
        assert (parameters["b" + str(i)].shape == (layer_dims[i], 1))
    return parameters

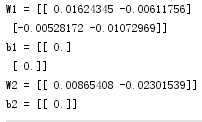

parameters = initialize_parameters_deep([5,4,3])

print("W1 = " + str(parameters["W1"]))

print("b1 = " + str(parameters["b1"]))

print("W2 = " + str(parameters["W2"]))

print("b2 = " + str(parameters["b2"]))

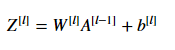
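The shape rule in the docstring (W of layer l is n_l by n_(l-1), b is n_l by 1) can be checked without looking at the values. This is a minimal sketch: `init_shapes` is a hypothetical helper that mirrors the scheme above, and it uses `np.random.default_rng`, so the weights differ from the printed output.

```python
import numpy as np

def init_shapes(layer_dims, scale=0.01, seed=3):
    """Hypothetical helper: same scheme as initialize_parameters_deep."""
    rng = np.random.default_rng(seed)
    params = {}
    for l in range(1, len(layer_dims)):
        # W_l maps layer l-1 (columns) to layer l (rows)
        params["W" + str(l)] = rng.standard_normal((layer_dims[l], layer_dims[l - 1])) * scale
        params["b" + str(l)] = np.zeros((layer_dims[l], 1))
    return params

params = init_shapes([5, 4, 3])
shapes = {name: value.shape for name, value in params.items()}
num_layers = len(params) // 2              # one W and one b per layer
print(shapes, num_layers)
```

Note that `len(layer_dims)` counts the input layer, while `len(parameters) // 2` counts only layers with weights; the forward and update code later relies on the second convention.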

Forward propagation

2-layer NN

Linear part

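def linear_forward(A, W, b):
    '''
    input:
        :param A: activations from the previous layer (or the input data)
            A.shape = (n_prev, m)
        :param W: weight matrix of the current layer
            W.shape = (n_cur, n_prev)
        :param b: bias vector of the current layer
            b.shape = (n_cur, 1)
    return:
        Z: pre-activation value, Z.shape = (n_cur, m)
        cache: tuple (A, W, b), kept for the backward pass
    '''
    Z = np.dot(W, A) + b  # b is broadcast across the m columns
    assert (Z.shape == (W.shape[0], A.shape[1]))
    cache = (A, W, b)
    return Z, cache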

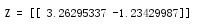

A, W, b = testCases_v2.linear_forward_test_case()

Z, linear_cache = linear_forward(A, W, b)

print("Z = " + str(Z))
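The linear step computes Z = W·A + b, where b has one column and is broadcast across all m examples. A small worked example with hand-picked numbers (not the values from the test case) makes the broadcast visible:

```python
import numpy as np

W = np.array([[1.0, 2.0],
              [3.0, 4.0]])                 # 2 units in this layer, 2 in the previous one
A = np.eye(2)                              # two examples, chosen so that W @ A == W
b = np.array([[1.0],
              [2.0]])                      # shape (2, 1), one bias per unit

Z = np.dot(W, A) + b                       # b is added to every column
print(Z)
# [[2. 3.]
#  [5. 6.]]
```

Each row of Z gets its own bias added to every example, which is why b must have shape (n, 1) rather than (n,) or (1, n).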

Activation functions

import numpy as np

def sigmoid(Z):
    """
    Implements the sigmoid activation in numpy

    Arguments:
    Z -- numpy array of any shape

    Returns:
    A -- output of sigmoid(z), same shape as Z
    cache -- returns Z as well, useful during backpropagation
    """
    A = 1/(1+np.exp(-Z))
    cache = Z
    return A, cache

def relu(Z):
    """
    Implement the RELU function.

    Arguments:
    Z -- Output of the linear layer, of any shape

    Returns:
    A -- Post-activation value, of the same shape as Z
    cache -- Z itself; stored for computing the backward pass efficiently
    """
    A = np.maximum(0, Z)
    assert (A.shape == Z.shape)
    cache = Z
    return A, cache

def relu_backward(dA, cache):
    """
    Implement the backward propagation for a single RELU unit.

    Arguments:
    dA -- post-activation gradient, of any shape
    cache -- 'Z', stored during the forward pass for computing backward propagation efficiently

    Returns:
    dZ -- Gradient of the cost with respect to Z
    """
    Z = cache
    dZ = np.array(dA, copy=True)  # copy dA so the caller's array is not modified
    # When z <= 0, dz is 0 as well.
    dZ[Z <= 0] = 0
    assert (dZ.shape == Z.shape)
    return dZ

def sigmoid_backward(dA, cache):
    """
    Implement the backward propagation for a single SIGMOID unit.

    Arguments:
    dA -- post-activation gradient, of any shape
    cache -- 'Z', stored during the forward pass for computing backward propagation efficiently

    Returns:
    dZ -- Gradient of the cost with respect to Z
    """
    Z = cache
    s = 1/(1+np.exp(-Z))
    dZ = dA * s * (1-s)  # chain rule: dZ = dA * sigmoid'(Z)
    assert (dZ.shape == Z.shape)
    return dZ
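Both backward helpers apply the chain rule dZ = dA * g'(Z). One way to gain confidence in the derivative formulas is a finite-difference check. The sketch below re-implements the two activations under the hypothetical names `sigmoid_value` and `relu_value` so that it runs on its own; the test points and `eps` are assumed values.

```python
import numpy as np

def sigmoid_value(Z):
    return 1 / (1 + np.exp(-Z))

def relu_value(Z):
    return np.maximum(0, Z)

Z = np.array([[-2.0, -0.5, 0.3, 1.5]])     # avoids Z == 0, where ReLU has a kink
dA = np.ones_like(Z)                       # upstream gradient of 1 isolates g'(Z)
eps = 1e-6

# Analytic gradients, same formulas as sigmoid_backward / relu_backward.
s = sigmoid_value(Z)
dZ_sigmoid = dA * s * (1 - s)
dZ_relu = np.where(Z <= 0, 0.0, dA)

# Central finite differences of the activations themselves.
num_sigmoid = (sigmoid_value(Z + eps) - sigmoid_value(Z - eps)) / (2 * eps)
num_relu = (relu_value(Z + eps) - relu_value(Z - eps)) / (2 * eps)

print("max sigmoid error:", np.max(np.abs(dZ_sigmoid - num_sigmoid)))
print("max relu error:   ", np.max(np.abs(dZ_relu - num_relu)))
```

Both errors should be tiny compared with the gradients themselves; a large gap would point to a mistake in the derivative formula.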

Linear + activation

def linear_activation_forward(A_prev, W, b, activation):
    '''
    Arguments:
    A_prev -- activations from previous layer (or input data): (size of previous layer, number of examples)
    W -- weights matrix: numpy array of shape (size of current layer, size of previous layer)
    b -- bias vector, numpy array of shape (size of the current layer, 1)
    activation -- the activation to be used in this layer, stored as a text string: "sigmoid" or "relu"

    Returns:
    A -- the output of the activation function, also called the post-activation value
    cache -- tuple (linear_cache, activation_cache);
             stored for computing the backward pass efficiently
    '''
    # the linear step is the same for both activations
    Z, linear_cache = linear_forward(A_prev, W, b)
    if activation == "sigmoid":
        A, activation_cache = sigmoid(Z)
    elif activation == "relu":
        A, activation_cache = relu(Z)
    else:
        raise ValueError("activation must be 'sigmoid' or 'relu'")
    assert (A.shape == (W.shape[0], A_prev.shape[1]))
    cache = (linear_cache, activation_cache)
    return A, cache

A_prev, W, b = testCases_v2.linear_activation_forward_test_case()

A, linear_activation_cache = linear_activation_forward(A_prev, W, b, activation = "sigmoid")

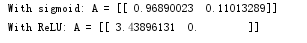

print("With sigmoid: A = " + str(A))

A, linear_activation_cache = linear_activation_forward(A_prev, W, b, activation = "relu")

print("With ReLU: A = " + str(A))

L-layer NN

# GRADED FUNCTION: L_model_forward

def L_model_forward(X, parameters):
    """
    Implement forward propagation for the [LINEAR->RELU]*(L-1)->LINEAR->SIGMOID computation

    Arguments:
    X -- data, numpy array of shape (input size, number of examples)
    parameters -- output of initialize_parameters_deep()

    Returns:
    AL -- last post-activation value
    caches -- list of caches containing:
                every cache of linear_activation_forward() with "relu" (there are L-1 of them, indexed from 0 to L-2)
                the cache of linear_activation_forward() with "sigmoid" (there is one, indexed L-1)
    """
    caches = []
    A = X
    L = len(parameters) // 2  # number of layers in the neural network

    # Implement [LINEAR -> RELU]*(L-1). Add "cache" to the "caches" list.
    for l in range(1, L):
        A_prev = A
        ### START CODE HERE ### (≈ 2 lines of code)
        A, cache = linear_activation_forward(A_prev,
                                             parameters["W" + str(l)],
                                             parameters["b" + str(l)],
                                             activation='relu')
        caches.append(cache)
        ### END CODE HERE ###

    # Implement LINEAR -> SIGMOID. Add "cache" to the "caches" list.
    ### START CODE HERE ### (≈ 2 lines of code)
    AL, cache = linear_activation_forward(A,
                                          parameters["W" + str(L)],
                                          parameters["b" + str(L)],
                                          activation='sigmoid')
    caches.append(cache)
    ### END CODE HERE ###

    assert (AL.shape == (1, X.shape[1]))
    return AL, caches

X, parameters = testCases_v2.L_model_forward_test_case()

AL, caches = L_model_forward(X, parameters)

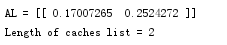

print("AL = " + str(AL))

print("Length of caches list = " + str(len(caches)))

Cost function

def compute_cost(AL, Y):
    """
    Implement the cost function defined by equation (7).

    Arguments:
    AL -- probability vector corresponding to your label predictions, shape (1, number of examples)
    Y -- true "label" vector (for example: containing 0 if non-cat, 1 if cat), shape (1, number of examples)

    Returns:
    cost -- cross-entropy cost
    """
    m = Y.size  # Y has shape (1, m), so its size is the number of examples
    cost = -1/m * np.sum(Y*np.log(AL)+(1-Y)*np.log(1-AL))
    assert (cost.shape == ())
    return cost

Y, AL = testCases_v2.compute_cost_test_case()

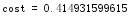

print("cost = " + str(compute_cost(AL, Y)))
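The cross-entropy formula takes log(AL) and log(1 - AL), so a prediction of exactly 0 or 1 produces `inf` or `nan`. The variant below is not part of the assignment; it is a sketch of one common workaround, clipping AL away from the endpoints. The function name `compute_cost_clipped`, the value of `eps`, and the sample inputs are all assumptions made for illustration.

```python
import numpy as np

def compute_cost_clipped(AL, Y, eps=1e-12):
    """Cross-entropy cost with AL clipped into [eps, 1 - eps] (eps is an assumed value)."""
    m = Y.shape[1]
    AL = np.clip(AL, eps, 1 - eps)         # keeps both logarithms finite
    cost = -np.sum(Y * np.log(AL) + (1 - Y) * np.log(1 - AL)) / m
    return float(cost)

# Made-up example: three positive labels with predictions 0.8, 0.9 and 0.4.
Y = np.array([[1, 1, 1]])
AL = np.array([[0.8, 0.9, 0.4]])
cost = compute_cost_clipped(AL, Y)         # -(ln 0.8 + ln 0.9 + ln 0.4) / 3

# Fully saturated, fully wrong predictions still give a finite (large) cost.
saturated = compute_cost_clipped(np.array([[1.0, 0.0]]), np.array([[0, 1]]))
print(cost, saturated)
```

For predictions that stay strictly between 0 and 1 the clipped version returns the same value as the plain formula, so it can be swapped in without changing training behaviour in the normal case.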

Backward propagation

2-layer NN

Linear part

def linear_backward(dZ, cache):
    """
    Implement the linear portion of backward propagation for a single layer (layer l)

    Arguments:
    dZ -- Gradient of the cost with respect to the linear output (of current layer l)
    cache -- tuple of values (A_prev, W, b) coming from the forward propagation in the current layer

    Returns:
    dA_prev -- Gradient of the cost with respect to the activation (of the previous layer l-1), same shape as A_prev
    dW -- Gradient of the cost with respect to W (current layer l), same shape as W
    db -- Gradient of the cost with respect to b (current layer l), same shape as b
    """
    A_prev, W, b = cache
    m = dZ.shape[1]  # number of examples
    dW = 1/m * np.dot(dZ, A_prev.T)
    db = 1/m * np.sum(dZ, axis=1, keepdims=True)
    dA_prev = np.dot(W.T, dZ)
    assert (dA_prev.shape == A_prev.shape)
    assert (dW.shape == W.shape)
    assert (db.shape == b.shape)
    return dA_prev, dW, db

dZ, linear_cache = testCases_v2.linear_backward_test_case()

dA_prev, dW, db = linear_backward(dZ, linear_cache)

print ("dA_prev = "+ str(dA_prev))

print ("dW = " + str(dW))

print ("db = " + str(db))
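The formulas dW = (1/m)·dZ·A_prevᵀ and db = (1/m)·Σ dZ can be verified numerically. The sketch below uses a toy cost J = (1/m)·Σ(G ∘ Z) with a fixed random matrix G, chosen so that the per-example gradient with respect to Z is exactly G. The names `linear_backward_sketch`, `toy_cost` and `G`, and all the shapes, are assumptions made for this check only.

```python
import numpy as np

def linear_backward_sketch(dZ, A_prev, W):
    """Same formulas as the linear backward step, without the cache tuple."""
    m = A_prev.shape[1]
    dW = dZ @ A_prev.T / m
    db = np.sum(dZ, axis=1, keepdims=True) / m
    dA_prev = W.T @ dZ
    return dA_prev, dW, db

rng = np.random.default_rng(1)
A_prev = rng.standard_normal((3, 4))       # 3 units in the previous layer, 4 examples
W = rng.standard_normal((2, 3))
b = rng.standard_normal((2, 1))
G = rng.standard_normal((2, 4))            # plays the role of dZ

def toy_cost(W, b):
    Z = W @ A_prev + b
    return np.sum(G * Z) / A_prev.shape[1]

_, dW, db = linear_backward_sketch(G, A_prev, W)

# Central finite differences, one parameter at a time.
eps = 1e-6
dW_num = np.zeros_like(W)
for i in range(W.shape[0]):
    for j in range(W.shape[1]):
        E = np.zeros_like(W)
        E[i, j] = eps
        dW_num[i, j] = (toy_cost(W + E, b) - toy_cost(W - E, b)) / (2 * eps)

db_num = np.zeros_like(b)
for i in range(b.shape[0]):
    e = np.zeros_like(b)
    e[i, 0] = eps
    db_num[i, 0] = (toy_cost(W, b + e) - toy_cost(W, b - e)) / (2 * eps)

print(np.max(np.abs(dW - dW_num)), np.max(np.abs(db - db_num)))
```

Because the toy cost is linear in W and b, the finite differences agree with the analytic gradients up to floating-point rounding.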


Activation + linear part

# GRADED FUNCTION: linear_activation_backward

def linear_activation_backward(dA, cache, activation):
    """
    Implement the backward propagation for the LINEAR->ACTIVATION layer.

    Arguments:
    dA -- post-activation gradient for current layer l
    cache -- tuple of values (linear_cache, activation_cache) we store for computing backward propagation efficiently
    activation -- the activation to be used in this layer, stored as a text string: "sigmoid" or "relu"

    Returns:
    dA_prev -- Gradient of the cost with respect to the activation (of the previous layer l-1), same shape as A_prev
    dW -- Gradient of the cost with respect to W (current layer l), same shape as W
    db -- Gradient of the cost with respect to b (current layer l), same shape as b
    """
    linear_cache, activation_cache = cache

    if activation == "relu":
        ### START CODE HERE ### (≈ 2 lines of code)
        dZ = relu_backward(dA, activation_cache)
        dA_prev, dW, db = linear_backward(dZ, linear_cache)
        ### END CODE HERE ###
    elif activation == "sigmoid":
        dZ = sigmoid_backward(dA, activation_cache)
        dA_prev, dW, db = linear_backward(dZ, linear_cache)

    return dA_prev, dW, db

AL, linear_activation_cache = linear_activation_backward_test_case()

dA_prev, dW, db = linear_activation_backward(AL, linear_activation_cache, activation = "sigmoid")

print ("sigmoid:")

print ("dA_prev = "+ str(dA_prev))

print ("dW = " + str(dW))

print ("db = " + str(db) + "\n")

dA_prev, dW, db = linear_activation_backward(AL, linear_activation_cache, activation = "relu")

print ("relu:")

print ("dA_prev = "+ str(dA_prev))

print ("dW = " + str(dW))

print ("db = " + str(db))

L-layer NN

def L_model_backward(AL, Y, caches):
    """
    Implement the backward propagation for the [LINEAR->RELU] * (L-1) -> LINEAR -> SIGMOID group

    Arguments:
    AL -- probability vector, output of the forward propagation (L_model_forward())
    Y -- true "label" vector (containing 0 if non-cat, 1 if cat)
    caches -- list of caches containing:
                every cache of linear_activation_forward() with "relu" (it's caches[l], for l in range(L-1) i.e l = 0...L-2)
                the cache of linear_activation_forward() with "sigmoid" (it's caches[L-1])

    Returns:
    grads -- A dictionary with the gradients
             grads["dA" + str(l)] = ...
             grads["dW" + str(l)] = ...
             grads["db" + str(l)] = ...
    """
    grads = {}
    L = len(caches)  # the number of layers
    m = AL.shape[1]
    Y = Y.reshape(AL.shape)  # after this line, Y is the same shape as AL

    # Initializing the backpropagation: derivative of the cross-entropy cost with respect to AL
    ### START CODE HERE ### (1 line of code)
    dAL = -np.divide(Y, AL) + np.divide((1 - Y), (1 - AL))
    ### END CODE HERE ###

    # Lth layer (SIGMOID -> LINEAR) gradients. Inputs: "dAL, current_cache". Outputs: "grads["dAL"], grads["dWL"], grads["dbL"]"
    # Note: with this naming, grads["dA" + str(l)] holds the gradient with respect to the input of layer l.
    ### START CODE HERE ### (approx. 2 lines)
    current_cache = caches[-1]
    grads["dA" + str(L)], grads["dW" + str(L)], grads["db" + str(L)] = linear_activation_backward(
        dAL, current_cache, activation="sigmoid")
    ### END CODE HERE ###

    for l in reversed(range(L - 1)):
        # lth layer: (RELU -> LINEAR) gradients.
        # Inputs: "grads["dA" + str(l + 2)], caches". Outputs: "grads["dA" + str(l + 1)], grads["dW" + str(l + 1)], grads["db" + str(l + 1)]"
        ### START CODE HERE ### (approx. 5 lines)
        current_cache = caches[l]
        dA_prev_temp, dW_temp, db_temp = linear_activation_backward(
            grads["dA" + str(l + 2)], current_cache, activation="relu")
        grads["dA" + str(l + 1)] = dA_prev_temp
        grads["dW" + str(l + 1)] = dW_temp
        grads["db" + str(l + 1)] = db_temp
        ### END CODE HERE ###

    return grads

AL, Y_assess, caches = testCases_v2.L_model_backward_test_case()

grads = L_model_backward(AL, Y_assess, caches)

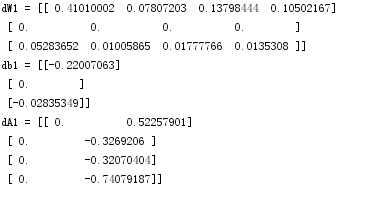

print ("dW1 = "+ str(grads["dW1"]))

print ("db1 = "+ str(grads["db1"]))

print ("dA1 = "+ str(grads["dA1"]))

Update parameters

def update_parameters(parameters, grads, learning_rate):
    """
    Update parameters using gradient descent

    Arguments:
    parameters -- python dictionary containing your parameters
    grads -- python dictionary containing your gradients, output of L_model_backward
    learning_rate -- step size of the gradient descent update

    Returns:
    parameters -- python dictionary containing your updated parameters
                  parameters["W" + str(l)] = ...
                  parameters["b" + str(l)] = ...
    """
    L = len(parameters) // 2  # number of layers in the neural network
    # range(1, L + 1) so that the last layer (layer L) is updated as well
    for i in range(1, L + 1):
        parameters["W" + str(i)] = parameters["W" + str(i)] - learning_rate * grads["dW" + str(i)]
        parameters["b" + str(i)] = parameters["b" + str(i)] - learning_rate * grads["db" + str(i)]
        assert (parameters["W" + str(i)].shape == grads["dW" + str(i)].shape)
        assert (parameters["b" + str(i)].shape == grads["db" + str(i)].shape)
    return parameters

parameters, grads = testCases_v2.update_parameters_test_case()

parameters = update_parameters(parameters, grads, 0.1)

print ("W1 = "+ str(parameters["W1"]))

print ("b1 = "+ str(parameters["b1"]))

print ("W2 = "+ str(parameters["W2"]))

print ("b2 = "+ str(parameters["b2"]))