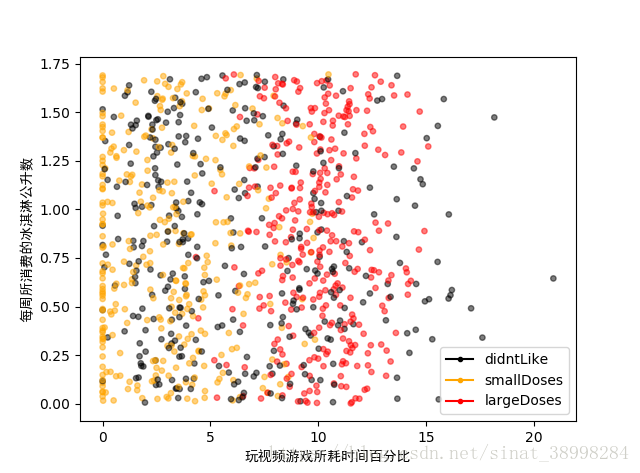

可视化

import numpy as np

import tensorflow as tf

import matplotlib.pyplot as plt

import matplotlib.lines as mlines

def file2matrix(filename):

fr=open(filename)

arrayOLines=fr.readlines()

numberOfLines=len(arrayOLines)

returnMat=np.zeros((numberOfLines,3))

classLabelVector=[]

index=0

for line in arrayOLines:

line=line.strip()

listFromLine=line.split('\t')

returnMat[index,:]=listFromLine[0:3]

classLabelVector.append(str(listFromLine[-1]))

index +=1

return returnMat,classLabelVector

#返回数据和相应的标签

def showdata(datingDataMat,datingLabels):

color=[]

for i,value in enumerate (datingLabels):

if value=='largeDoses':

color.append('red')

if value=='smallDoses':

color.append('orange')

if value=='didntLike':

color.append('black')

fig,axs=plt.subplots()

axs.scatter(x=datingDataMat[:,1], y=datingDataMat[:,2],color=color,s=15, alpha=.5)

axs.set_xlabel(u'玩视频游戏所耗时间百分比',fontproperties='SimHei')

axs.set_ylabel(u'每周所消费的冰淇淋公升数',fontproperties='SimHei')

#设置图例

didntLike = mlines.Line2D([], [], color='black', marker='.',markersize=6, label='didntLike')

smallDoses = mlines.Line2D([], [], color='orange', marker='.',markersize=6, label='smallDoses')

largeDoses = mlines.Line2D([], [], color='red', marker='.',markersize=6, label='largeDoses')

#添加图例

axs.legend(handles=[didntLike,smallDoses,largeDoses])

plt.show()

datingDataMat,datingLabels=file2matrix('D:/anicode/spyderworkspace/datingTestSet.txt')

showdata(datingDataMat,datingLabels)str(listFromLine[-1],机器学习实战上为int有误

归一化+分类

def file2matrix(filename):

fr=open(filename)

arrayOLines=fr.readlines()

numberOfLines=len(arrayOLines)

returnMat=np.zeros((numberOfLines,3))

classLabelVector=[]

index=0

for line in arrayOLines:

line=line.strip()

listFromLine=line.split('\t')

returnMat[index,:]=listFromLine[0:3]

classLabelVector.append(str(listFromLine[-1]))

index +=1

return returnMat,classLabelVector

#归一化

def autoNorm(dataSet):

minVals=dataSet.min(0)#返回每列的最小值,共3列,返回三个值

maxVals=dataSet.max(0)#返回每列的最大值,共3列,返回三个值

ranges=maxVals-minVals

normDataSet=np.zeros(dataSet.shape)

m=dataSet.shape[0]

normDataSet=dataSet-np.tile(minVals,(m,1))

normDataSet=normDataSet/np.tile(ranges,(m,1))

return normDataSet,ranges,minVals

#分类

def classify0(inX,dataSet,labels,k):

dataSetSize=dataSet.shape[0]

diffMat=np.tile(inX,(dataSetSize,1))-dataSet

sqDiffMat=diffMat**2

sqDistances=sqDiffMat.sum(axis=1)

distances=sqDistances**0.5

sortedDistIndicies=distances.argsort()#返回数组从小到大的索引值

classCount={}

for i in range(k):

voteIlabel=labels[sortedDistIndicies[i]]#下标对应着相应的标签

classCount[voteIlabel]=classCount.get(voteIlabel,0)+1#返回的是字典

#items()将字典中的项按列表返回

#key,按照key给的关键字进行排序

sortedClassCount=sorted(classCount.items(),key=operator.itemgetter(1),

reverse=True)

return sortedClassCount[0][0]

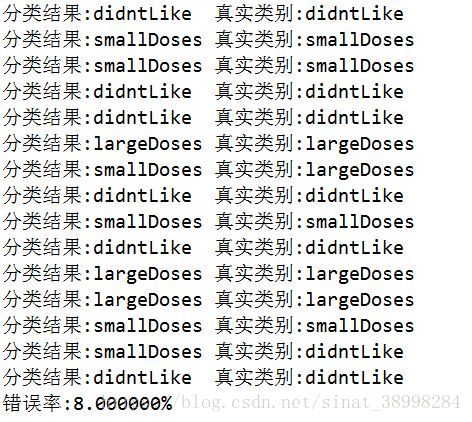

#测试1

datingDataMat,datingLabels=file2matrix('D:/anicode/spyderworkspace/datingTestSet.txt')

normDataSet,ranges,minVals=autoNorm(datingDataMat)

#获取所有数据的10%

hoRatio=0.10

m=normDataSet.shape[0]

numTestVecs=int(m*hoRatio)

#分类错误计数

errorCount=0.0

for i in range(numTestVecs):

clsResult=classify0(normDataSet[i,:],normDataSet[numTestVecs:m,:],

datingLabels[numTestVecs:m],4)

print("分类结果:%s\t真实类别:%s"%(clsResult,datingLabels[i]))

if clsResult!=datingLabels[i]:

errorCount+=1.0

print("错误率:%f%%"%(errorCount/float(numTestVecs)*100))#测试2

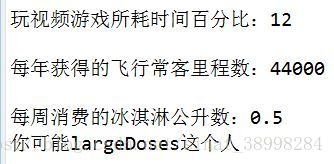

percentTats=float(input("玩视频游戏所耗时间百分比:"))

ffMiles=float(input("每年获得的飞行常客里程数:"))

iceCream=float(input("每周消费的冰淇淋公升数:"))

datingDataMat,datingLabels=file2matrix('D:/anicode/spyderworkspace/datingTestSet.txt')

normDataSet,ranges,minVals=autoNorm(datingDataMat)

#生成numpy数组,以便进行测试

inArr=np.array([ffMiles,percentTats,iceCream])

#测试集归一化

norminArr=(inArr-minVals)/ranges

#返回分类结果

clsResult=classify0(norminArr,normDataSet,datingLabels,3)

print("你可能%s这个人"%(clsResult))