This post introduces transfer learning in TensorFlow.

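Transfer learning saves compute: instead of training from scratch, you extend an already trained model and reuse what it has learned. It works best when the data for the original task and the data for the new task are similar or related.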
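To make the idea concrete, here is a minimal pure-Python sketch (it is not the TensorFlow code from this post). It assumes a hypothetical frozen feature extractor, `frozen_features`, whose fixed weights `FROZEN_W` stand in for a pre-trained network, and it trains only a new linear head with plain gradient descent on mean squared error. All names, sizes, and hyperparameters here are illustrative assumptions.

```python
import random

random.seed(0)

# Hypothetical stand-in for a pre-trained network: 4 features computed from
# 3 inputs. These weights are never updated during training.
FROZEN_W = [[random.uniform(-1, 1) for _ in range(3)] for _ in range(4)]


def frozen_features(x):
    """Fixed ReLU feature extractor (plays the role of the pre-trained layers)."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in FROZEN_W]


def train_head(xs, ys, lr=0.01, steps=200):
    """Fit only the new linear head (weights and bias) by gradient descent on MSE.

    Returns the head weights, the bias, and the loss recorded before each update.
    """
    feats = [frozen_features(x) for x in xs]  # computed once: the base is frozen
    n = len(xs)
    w = [0.0] * len(feats[0])
    b = 0.0
    losses = []
    for _ in range(steps):
        preds = [sum(wi * fi for wi, fi in zip(w, f)) + b for f in feats]
        errs = [p - y for p, y in zip(preds, ys)]
        losses.append(sum(e * e for e in errs) / n)
        # Gradients of the mean squared error with respect to the head only.
        grad_w = [2.0 / n * sum(e * f[j] for e, f in zip(errs, feats))
                  for j in range(len(w))]
        grad_b = 2.0 / n * sum(errs)
        w = [wi - lr * g for wi, g in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b, losses
```

Because the base never changes, its features can be computed once and reused at every step, which is where much of the saving in compute comes from.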

Next, we use TensorFlow to transfer VGG16's ability to recognize animals to a new task: predicting an animal's body length.

Here is the sample code (leave your email address if you need the dataset and the pre-trained VGG weight file):

# encoding: utf-8
# Note: this listing uses the TensorFlow 1.x API (tf.placeholder, tf.Session, tf.layers).
import os
from urllib.request import urlretrieve

import matplotlib.pyplot as plt
import numpy as np
import skimage.io
import skimage.transform
import tensorflow as tf

def download():
    """Download the training images for the 'tiger' and 'kittycat' categories."""
    categories = ['tiger', 'kittycat']
    for category in categories:
        os.makedirs('./for_transfer_learning/data/%s' % category, exist_ok=True)
        with open('./for_transfer_learning/imagenet_%s.txt' % category, 'r') as file:
            urls = file.readlines()
        n_urls = len(urls)
        for i, url in enumerate(urls):
            try:
                urlretrieve(url.strip(), './for_transfer_learning/data/%s/%s' % (category, url.strip().split('/')[-1]))
                print('%s %i/%i' % (category, i, n_urls))
            except Exception:
                # Skip URLs that are dead or do not return an image.
                print('%s %i/%i' % (category, i, n_urls), 'no image')

def load_img(path):
    """Load an image, center-crop it to a square, and resize it to 224x224."""
    img = skimage.io.imread(path)
    img = img / 255.0
    # Crop the largest centered square.
    short_edge = min(img.shape[:2])
    yy = int((img.shape[0] - short_edge) / 2)
    xx = int((img.shape[1] - short_edge) / 2)
    crop_img = img[yy: yy + short_edge, xx: xx + short_edge]
    # Resize to 224x224 and add a batch axis: shape [1, 224, 224, 3].
    resized_img = skimage.transform.resize(crop_img, (224, 224))[None, :, :, :]
    return resized_img

def load_data():
    """Load the training data: image arrays plus synthetic length labels."""
    imgs = {'tiger': [], 'kittycat': []}
    for k in imgs.keys():
        img_dir = './for_transfer_learning/data/' + k
        for file in os.listdir(img_dir):
            if not file.lower().endswith('.jpg'):
                continue
            try:
                resized_img = load_img(os.path.join(img_dir, file))
            except OSError:
                continue
            imgs[k].append(resized_img)    # [1, height, width, depth] * n
            if len(imgs[k]) == 400:        # use at most 400 images per class to limit memory use
                break
    # Fake length data (in cm) for tigers and cats, clipped from below.
    tigers_y = np.maximum(20, np.random.randn(len(imgs['tiger']), 1) * 30 + 100)
    cat_y = np.maximum(10, np.random.randn(len(imgs['kittycat']), 1) * 8 + 40)
    return imgs['tiger'], imgs['kittycat'], tigers_y, cat_y

class Vgg16:
    vgg_mean = [103.939, 116.779, 123.68]  # per-channel means in BGR order

    def __init__(self, vgg16_npy_path=None, restore_from=None):
        # pre-trained parameters
        try:
            # The file stores a pickled dict; newer NumPy versions default to allow_pickle=False.
            self.data_dict = np.load(vgg16_npy_path, encoding='latin1', allow_pickle=True).item()
        except FileNotFoundError:
            print('Please download VGG16 parameters from here https://mega.nz/#!YU1FWJrA!O1ywiCS2IiOlUCtCpI6HTJOMrneN-Qdv3ywQP5poecM\nOr from my Baidu Cloud: https://pan.baidu.com/s/1Spps1Wy0bvrQHH2IMkRfpg')
            raise  # the network cannot be built without the pre-trained weights

        self.tfx = tf.placeholder(tf.float32, [None, 224, 224, 3])
        self.tfy = tf.placeholder(tf.float32, [None, 1])

        # Convert RGB to BGR and subtract the per-channel means
        red, green, blue = tf.split(axis=3, num_or_size_splits=3, value=self.tfx * 255.0)
        bgr = tf.concat(axis=3, values=[
            blue - self.vgg_mean[0],
            green - self.vgg_mean[1],
            red - self.vgg_mean[2],
        ])

        # pre-trained VGG layers stay fixed during fine-tuning
        # (their weights are loaded as constants, not as trainable variables)
        conv1_1 = self.conv_layer(bgr, "conv1_1")
        conv1_2 = self.conv_layer(conv1_1, "conv1_2")
        pool1 = self.max_pool(conv1_2, 'pool1')

        conv2_1 = self.conv_layer(pool1, "conv2_1")
        conv2_2 = self.conv_layer(conv2_1, "conv2_2")
        pool2 = self.max_pool(conv2_2, 'pool2')

        conv3_1 = self.conv_layer(pool2, "conv3_1")
        conv3_2 = self.conv_layer(conv3_1, "conv3_2")
        conv3_3 = self.conv_layer(conv3_2, "conv3_3")
        pool3 = self.max_pool(conv3_3, 'pool3')

        conv4_1 = self.conv_layer(pool3, "conv4_1")
        conv4_2 = self.conv_layer(conv4_1, "conv4_2")
        conv4_3 = self.conv_layer(conv4_2, "conv4_3")
        pool4 = self.max_pool(conv4_3, 'pool4')

        conv5_1 = self.conv_layer(pool4, "conv5_1")
        conv5_2 = self.conv_layer(conv5_1, "conv5_2")
        conv5_3 = self.conv_layer(conv5_2, "conv5_3")
        pool5 = self.max_pool(conv5_3, 'pool5')

        # detach the original VGG fc layers and
        # build new fc layers for the new task (length regression)
        self.flatten = tf.reshape(pool5, [-1, 7*7*512])
        self.fc6 = tf.layers.dense(self.flatten, 256, tf.nn.relu, name='fc6')
        self.out = tf.layers.dense(self.fc6, 1, name='out')

        self.sess = tf.Session()
        if restore_from:
            saver = tf.train.Saver()
            saver.restore(self.sess, restore_from)
        else:   # training graph
            self.loss = tf.losses.mean_squared_error(labels=self.tfy, predictions=self.out)
            self.train_op = tf.train.RMSPropOptimizer(0.001).minimize(self.loss)
            self.sess.run(tf.global_variables_initializer())

    def max_pool(self, bottom, name):
        return tf.nn.max_pool(bottom, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME', name=name)

    def conv_layer(self, bottom, name):
        with tf.variable_scope(name):
            conv = tf.nn.conv2d(bottom, self.data_dict[name][0], [1, 1, 1, 1], padding='SAME')
            lout = tf.nn.relu(tf.nn.bias_add(conv, self.data_dict[name][1]))
            return lout

    def train(self, x, y):
        loss, _ = self.sess.run([self.loss, self.train_op], {self.tfx: x, self.tfy: y})
        return loss

    def predict(self, paths):
        fig, axs = plt.subplots(1, 2)
        for i, path in enumerate(paths):
            x = load_img(path)
            length = self.sess.run(self.out, {self.tfx: x})
            axs[i].imshow(x[0])
            # length has shape [1, 1]; take the scalar before formatting it
            axs[i].set_title('Len: %.1f cm' % length[0, 0])
            axs[i].set_xticks(())
            axs[i].set_yticks(())
        plt.show()

    def save(self, path='./for_transfer_learning/model/transfer_learn'):
        saver = tf.train.Saver()
        saver.save(self.sess, path, write_meta_graph=False)

def train():
    tigers_x, cats_x, tigers_y, cats_y = load_data()

    # plot fake length distribution
    plt.hist(tigers_y, bins=20, label='Tigers')
    plt.hist(cats_y, bins=10, label='Cats')
    plt.legend()
    plt.xlabel('length')
    plt.show()

    xs = np.concatenate(tigers_x + cats_x, axis=0)
    ys = np.concatenate((tigers_y, cats_y), axis=0)

    vgg = Vgg16(vgg16_npy_path='./for_transfer_learning/vgg16.npy')
    print('Net built')
    for i in range(100):
        b_idx = np.random.randint(0, len(xs), 6)   # random mini-batch of 6 samples
        train_loss = vgg.train(xs[b_idx], ys[b_idx])
        print(i, 'train loss: ', train_loss)

    # Save the parameters of the newly added fully connected layers
    vgg.save('./for_transfer_learning/model/transfer_learn')

def eval():
    vgg = Vgg16(vgg16_npy_path='./for_transfer_learning/vgg16.npy',
                restore_from='./for_transfer_learning/model/transfer_learn')
    vgg.predict(
        ['./for_transfer_learning/data/kittycat/000129037.jpg', './for_transfer_learning/data/tiger/391412.jpg'])

if __name__ == '__main__':
    # Download the data
    # download()
    # Train
    train()
    # Test
    # eval()
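
Two preprocessing steps in the listing above are worth spelling out: `load_img` crops the largest centered square before resizing, and `Vgg16.__init__` rescales pixels to the 0-255 range, reorders RGB to BGR, and subtracts `vgg_mean`. The pure-Python sketch below reproduces that arithmetic on plain numbers. The helper names `center_crop_box` and `vgg_preprocess_pixel` are hypothetical and are not part of the original code.

```python
# Same values as Vgg16.vgg_mean in the listing, in blue/green/red order.
VGG_MEAN_BGR = (103.939, 116.779, 123.68)


def center_crop_box(height, width):
    """Return (top, left, size) of the largest centered square crop."""
    short_edge = min(height, width)
    top = (height - short_edge) // 2
    left = (width - short_edge) // 2
    return top, left, short_edge


def vgg_preprocess_pixel(rgb):
    """Map one RGB pixel with channels in [0, 1] to a mean-subtracted BGR triple."""
    red, green, blue = (channel * 255.0 for channel in rgb)
    return (blue - VGG_MEAN_BGR[0],
            green - VGG_MEAN_BGR[1],
            red - VGG_MEAN_BGR[2])
```

For a 300x500 image, `center_crop_box(300, 500)` gives `(0, 100, 300)`: all 300 rows are kept, along with columns 100 through 399.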

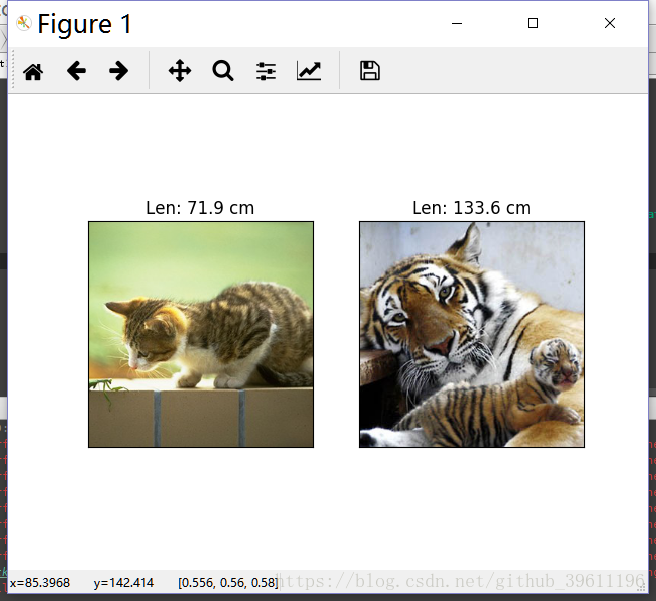

Prediction results: